
AI benchmarks

AI benchmarks measure how models perform on reasoning, knowledge QA, coding, and long-context tasks. Scores from tests like ARC-AGI-2, GPQA, and MMLU help compare new releases, track real progress, and expose trade-offs between capability, cost, and reliability.

3 articles

Research/Apr 17

Stanford’s 2026 AI Index, explained with charts

Stanford’s 2026 AI Index shows faster adoption, rising costs, and narrow US-China gaps. The charts tell a messier story than the hype.

Model Releases/Apr 3

Gemini 3.1 Pro: Google’s new top model in numbers

Gemini 3.1 Pro scores 77.1% on ARC-AGI-2 and 94.3% on GPQA Diamond, offers a 1M-token context window, and keeps Gemini 3 pricing.

Model Releases/Apr 2

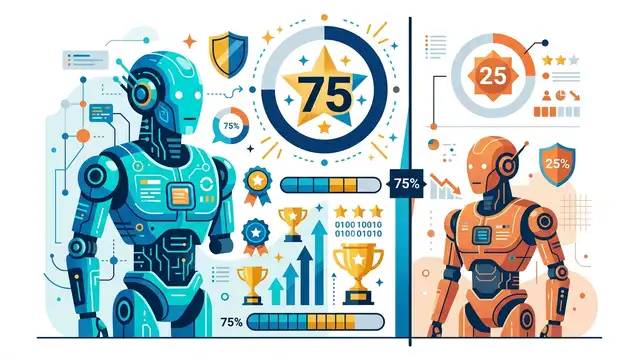

GPT-5.4 vs Claude Opus 4.6: 75% Win Rate

We tested GPT-5.4, Claude Opus 4.6, DeepSeek V4, and Gemini 3.1 across 12 benchmarks. One model won 9 of them.