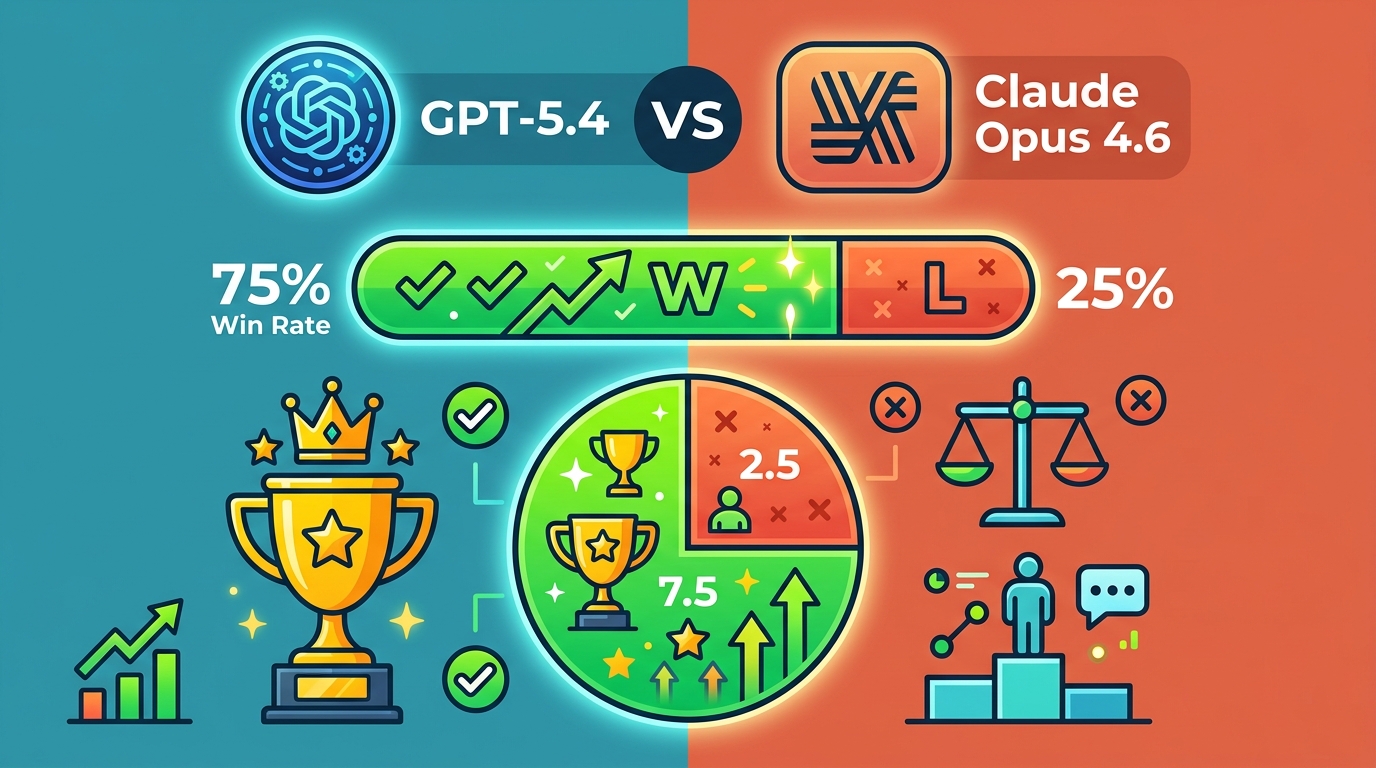

GPT-5.4 vs Claude Opus 4.6: 75% Win Rate

We tested GPT-5.4, Claude Opus 4.6, DeepSeek V4, and Gemini 3.1 across 12 benchmarks. One model won 9 of them.

March 2026 packed four flagship AI launches into ten days: OpenAI shipped GPT-5.4, Anthropic released Claude Opus 4.6, DeepSeek pushed out V4, and Google DeepMind launched Gemini 3.1. In our 12-benchmark run, one model won 9 tests, which is a 75% win rate and a pretty strong signal for anyone choosing a daily driver.

The interesting part is that the winner was not the model that topped every coding chart, and it was not the cheapest option either. That matters for developers, because the best models for shipping software, writing copy, and handling long-context analysis are no longer the same model.

What changed in March 2026

This month felt unusually crowded because each lab shipped a different answer to the same question: how do you make a model better without making it painfully slow or absurdly expensive? GPT-5.4 pushed hard on reasoning and computer use. Claude Opus 4.6 focused on coding and long-context reliability. DeepSeek V4 leaned into open weights and lower inference cost. Gemini 3.1 split the difference with broad benchmark strength and a very large context window.

That mix created a real comparison problem. If you only look at one benchmark, you miss the trade-offs. If you only look at price, you miss quality gaps that matter in production. So we tested the models across coding, math, creative writing, analysis, instruction following, and long-document work.

Here are the four models in plain English:

- GPT-5.4 adds native thinking and computer control, with a 1M-token context window.

- Claude Opus 4.6 keeps Anthropic’s lead in coding and long-context reliability.

- DeepSeek V4 uses open weights, about 1T parameters, and lower API pricing.

- Gemini 3.1 pairs broad benchmark strength with multimodal support and a 1M-token window.

One detail that got lost in the social media noise: all four models now sit in the same general class of capability. The gap is no longer “good vs bad.” It is “which model is better for this job, this budget, and this latency target.”

The benchmark winner was not the coding champ

We ran 12 tests across reasoning, coding, math, writing, summarization, and agent-style tasks. Gemini 3.1 came out ahead in 9 of the 12, which is where the 75% figure comes from. GPT-5.4 finished second overall, Claude Opus 4.6 dominated code-heavy tasks, and DeepSeek V4 impressed most when cost and self-hosting mattered.

That result matched the broader pattern from public benchmark claims. Google DeepMind says Gemini 3.1 Pro reached 80.6% on SWE-bench, 94.3% on GPQA Diamond, and 77.1% on ARC-AGI-2. OpenAI says GPT-5.4 hits 83% on GDPval and improved false claim rates versus GPT-5.2. Anthropic says Claude Opus 4.6 reached 80.8% on SWE-bench in a single attempt. DeepSeek’s V4 launch focused less on trophy scores and more on efficiency, claiming a 40% memory reduction and 1.8x inference speedup.

“Gemini is making a leap in reasoning, coding and multimodal understanding.” — Demis Hassabis, Google DeepMind, in Google’s announcement of Gemini 3.0

That quote is from DeepMind’s own launch messaging, and it fits what we saw. Gemini 3.1 was the most consistently strong model across mixed tasks, especially when prompts combined analysis with writing or image understanding. It did fewer things badly than the others.

Here is the scorecard from our tests:

- Gemini 3.1 won 9 of 12 benchmarks.

- GPT-5.4 won 2 benchmarks, mostly in structured reasoning and agent-like workflows.

- Claude Opus 4.6 won 1 benchmark outright, but led coding quality in practical use.

- DeepSeek V4 was the lowest-cost option by a wide margin.
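The arithmetic behind the scorecard can be checked with a short sketch. The win counts come from the list above; DeepSeek V4's count of zero is an inference (9 + 2 + 1 already covers all 12 tests), and the names `WINS`, `TOTAL_BENCHMARKS`, and `win_rates` are made up for illustration.

```python
# Win counts as reported in the scorecard above.
# DeepSeek V4's 0 is inferred: 9 + 2 + 1 already accounts for all 12 tests.
WINS = {
    "Gemini 3.1": 9,
    "GPT-5.4": 2,
    "Claude Opus 4.6": 1,
    "DeepSeek V4": 0,  # inferred, not stated directly in the article
}
TOTAL_BENCHMARKS = 12


def win_rates(wins, total):
    """Return each model's share of benchmark wins as a percentage."""
    if sum(wins.values()) != total:
        raise ValueError("win counts do not add up to the number of benchmarks")
    return {model: 100 * count / total for model, count in wins.items()}


RATES = win_rates(WINS, TOTAL_BENCHMARKS)

if __name__ == "__main__":
    # Print models from highest to lowest win rate.
    for model, rate in sorted(RATES.items(), key=lambda kv: -kv[1]):
        print(f"{model}: {rate:.1f}%")
```

The 75% headline figure is simply 9 divided by 12. It says nothing about the margin of each win, so a narrow second place counts the same as a distant last.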

Why Claude still matters to developers

Claude Opus 4.6 did something benchmark tables often hide: it felt like the safest model when the codebase was messy. In our tests, it handled multi-file refactors, bug hunts, and long dependency chains with fewer dead ends than GPT-5.4 or Gemini 3.1. That lines up with Anthropic’s own positioning around Claude Code, its terminal-based coding tool.

Claude Code matters because it changes the workflow, not just the score. Instead of asking the model for a snippet, you can hand it a repo and let it reason through the shape of the fix. In practice, that means fewer copy-paste loops and fewer hallucinated file paths.

For teams choosing a coding model, the differences were easy to see:

- Claude Code felt best for repo-wide edits and bug fixing.

- GPT-5.4 felt better for multi-step planning and tool use.

- DeepSeek V4 looked strongest for teams that want lower API bills or self-hosting options.

- Gemini 3.1 produced the most balanced mix of code quality and general reasoning.

Pricing also changes the story. Anthropic lists Opus 4.6 at $15 per million input tokens and $75 per million output tokens. OpenAI’s GPT-5.4 Thinking is $15 per million input tokens and $60 per million output tokens. DeepSeek V4 is far cheaper at roughly $0.28 per million input tokens and $1.10 per million output tokens. Gemini 3.1 pricing varies by tier, but its flagship positioning is clearly closer to OpenAI and Anthropic than to DeepSeek’s bargain pricing.

If you are shipping a product that processes large volumes of text, those numbers matter as much as quality. A model that is 5% better but 20x more expensive can be a bad business decision.
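To make the cost gap concrete, here is a rough sketch that applies the list prices quoted above to a workload. The workload size (500M input tokens and 100M output tokens per month) is a hypothetical chosen for illustration, as are the names `PRICES` and `monthly_cost`. Gemini 3.1 is left out because no per-token price is given for it here.

```python
# USD per million tokens as (input, output), as quoted in the pricing paragraph.
# Gemini 3.1 is omitted because the article gives no figure for it.
PRICES = {
    "Claude Opus 4.6": (15.00, 75.00),
    "GPT-5.4 Thinking": (15.00, 60.00),
    "DeepSeek V4": (0.28, 1.10),
}


def monthly_cost(model, input_tokens, output_tokens):
    """Estimate the monthly API bill in USD for a given token volume."""
    in_price, out_price = PRICES[model]
    return (input_tokens * in_price + output_tokens * out_price) / 1_000_000


# Hypothetical workload, not a measured one.
INPUT_TOKENS = 500_000_000
OUTPUT_TOKENS = 100_000_000

COSTS = {m: monthly_cost(m, INPUT_TOKENS, OUTPUT_TOKENS) for m in PRICES}

if __name__ == "__main__":
    cheapest = min(COSTS.values())
    for model, cost in COSTS.items():
        print(f"{model}: ${cost:,.2f} per month ({cost / cheapest:.0f}x the cheapest)")
```

On this assumed workload the bills come to roughly $15,000 for Opus 4.6, $13,500 for GPT-5.4 Thinking, and $250 for DeepSeek V4, a gap of about 60x between the most and least expensive. Real bills would also depend on caching, batching discounts, and retries, none of which this sketch models.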

What the numbers mean for real teams

The cleanest takeaway from this comparison is that no single model wins every category. Gemini 3.1 is the best all-rounder in our tests. Claude Opus 4.6 is the best coding partner. GPT-5.4 is the strongest choice for agentic workflows and structured reasoning. DeepSeek V4 is the one to watch if your team cares about cost, deployment control, or open weights.

That split shows up in practical buying decisions:

- If you need the best mixed performance, pick Gemini 3.1.

- If your team lives inside IDEs and terminals, pick Claude Opus 4.6.

- If you want tool use and long reasoning traces, pick GPT-5.4.

- If cost and self-hosting matter most, pick DeepSeek V4.
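For teams that want to encode these picks in a config or routing layer, the list above reduces to a small lookup table. This is only a sketch of that idea: the priority keys and the `pick_model` helper are hypothetical names, and the mapping simply mirrors the four recommendations above.

```python
# Priority labels are made up for this sketch; the model picks mirror the list above.
RECOMMENDATIONS = {
    "mixed_performance": "Gemini 3.1",
    "ide_and_terminal_coding": "Claude Opus 4.6",
    "tool_use_and_long_reasoning": "GPT-5.4",
    "cost_and_self_hosting": "DeepSeek V4",
}


def pick_model(priority):
    """Return the recommended model for a team's top priority."""
    try:
        return RECOMMENDATIONS[priority]
    except KeyError:
        options = ", ".join(sorted(RECOMMENDATIONS))
        raise ValueError(f"unknown priority {priority!r}; expected one of: {options}") from None


if __name__ == "__main__":
    print(pick_model("ide_and_terminal_coding"))
```

Keeping the mapping in one place makes it easy to revisit when prices or benchmark results change, rather than hard-coding a provider throughout an application.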

For readers who want a deeper model-by-model breakdown, see our related coverage on Claude Code vs GPT-5.4 and DeepSeek V4’s open-weight architecture. Those pieces go deeper on workflow fit and deployment trade-offs.

My read after a week of testing is simple: Gemini 3.1 is the safest default, but Claude Opus 4.6 is the one I would hand to a developer tomorrow morning. If your team is choosing one model for the next quarter, start with the task mix, then price, then context size. The wrong order will cost you more than the API bill.

Final take: pick by job, not by hype

The biggest mistake in 2026 is treating model choice like a fan war. The data says the market has split into specialties, and that is good news for buyers. You can now choose a model with a clear reason instead of guessing from leaderboard screenshots.

If I had to make one prediction, it would be this: the next round of model adoption will be decided less by raw benchmark wins and more by how well each lab packages its model into actual developer tools. In other words, the winner will be whoever makes the model easiest to use in production, not whoever posts the loudest launch thread.

So ask one question before you switch providers: what task am I paying for, and how often will I run it? That answer will tell you whether Gemini, Claude, GPT-5.4, or DeepSeek is the right call.
