Google's AI Leap with Gemini Innovations in 2026

In 2026, Google launched Gemini 3.1 Pro, which improves reasoning and long-context comprehension, among other advancements.

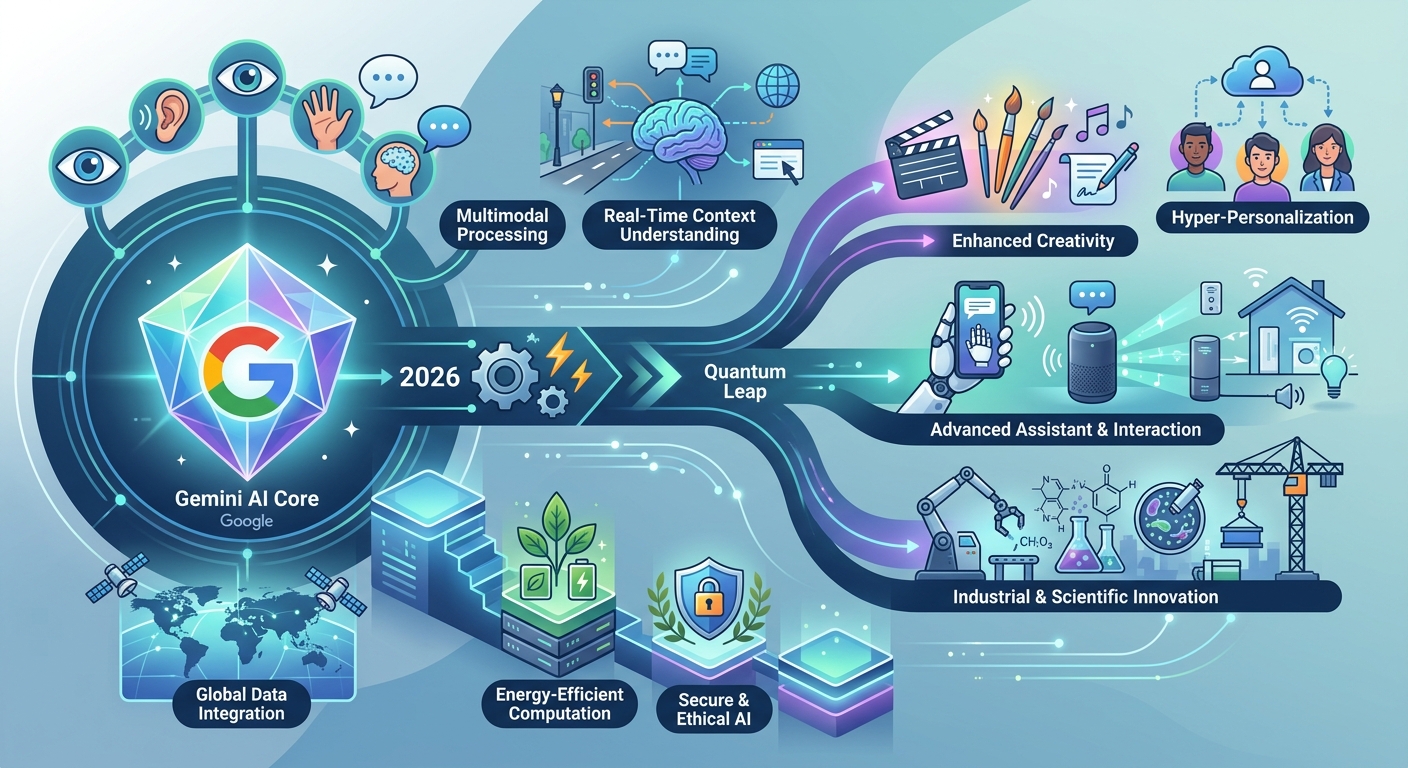

In 2026, Google, valued at $456 billion, unveiled a series of advanced AI products and enhancements. This year, the spotlight is on Gemini 3.1 Pro, Agentic Vision for Gemini 3 Flash, and Gemini Enterprise for Customer Experience. These releases are designed to tackle complex tasks and improve the user experience, which makes them relevant to customers, investors, and partners.

Gemini 3.1 Pro: A Leap in AI Comprehension

The Gemini 3.1 Pro model builds on the Gemini 3 series, offering enhanced reasoning, long-context comprehension, and agentic workflows. It is particularly suited to tasks that demand more than straightforward answers: synthesizing complex data, providing clear explanations, and supporting creative projects. The model accepts multiple formats, including text, images, audio, video, and code, with a context window of up to 1 million tokens. It is available in the Gemini app and can be accessed via various platforms, including AI Studio and Android Studio.

- Supports up to 1 million tokens

- Available on multiple platforms like AI Studio and Android Studio

- Caters to advanced reasoning tasks
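To give a feel for what a 1 million token window means in practice, here is a minimal sketch of a budget check that could run before sending documents to a long-context model. The 4-characters-per-token ratio is a common rule of thumb for English text and is an assumption here, not Gemini's actual tokenizer; the function names and the output reserve are likewise hypothetical.

```python
# Rough context-budget check under stated assumptions.
CONTEXT_WINDOW_TOKENS = 1_000_000  # figure quoted in the article
CHARS_PER_TOKEN = 4                # assumption: varies by language and content


def estimate_tokens(text: str) -> int:
    """Return a coarse token estimate for a piece of text."""
    return -(-len(text) // CHARS_PER_TOKEN)  # ceiling division


def fits_in_context(documents: list[str], reserved_for_output: int = 8_192) -> bool:
    """Check whether all documents plus an output reserve fit in the window."""
    total = sum(estimate_tokens(doc) for doc in documents)
    return total + reserved_for_output <= CONTEXT_WINDOW_TOKENS


if __name__ == "__main__":
    docs = ["a" * 2_000_000, "b" * 1_500_000]  # about 875,000 estimated tokens
    print(fits_in_context(docs))
```

For real workloads, a provider's own token-counting endpoint would give an exact figure; the heuristic above is only useful for a quick sanity check.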

Gemini Enterprise: Revolutionizing Customer Experience

Gemini Enterprise for Customer Experience (CX) integrates shopping and customer service into a single interface, featuring prebuilt agents that can be deployed swiftly. It includes a Google Shopping agent that connects frontend interfaces to backend tools and a Food Ordering agent that brings conversational AI to channels such as mobile apps and kiosks.

"The goal is to merge advanced AI with everyday business needs, streamlining customer interactions," said Sundar Pichai, CEO of Google.

Agentic Vision: Transforming Image Processing

Agentic Vision for Gemini 3 Flash introduces a dynamic approach to image understanding, transforming it from a static process into an active investigation. It employs a Think, Act, Observe loop to enhance visual reasoning. By executing Python code, the model actively manipulates and analyzes images, grounding its responses in visual evidence.

- Employs Think, Act, Observe loop

- Available via Gemini API

- Enhances image processing accuracy
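The Think, Act, Observe loop can be illustrated with a toy example. The sketch below is not Google's implementation: it stands in a small grid of brightness values for an image and uses hypothetical helpers (`think`, `crop`, `brightest`) to zoom in on the brightest pixel by repeatedly proposing crops, executing them, and inspecting the result.

```python
Grid = list[list[int]]
Box = tuple[int, int, int, int]  # top, left, height, width


def crop(image: Grid, box: Box) -> Grid:
    """Return the sub-grid covered by box."""
    top, left, height, width = box
    return [row[left:left + width] for row in image[top:top + height]]


def brightest(image: Grid) -> int:
    """Return the largest value in a non-empty grid."""
    return max(max(row) for row in image)


def think(box: Box) -> list[Box]:
    """Propose two candidate crops by halving the longer side; empty when done."""
    top, left, height, width = box
    if height == 1 and width == 1:
        return []
    if height >= width:
        half = height // 2
        return [(top, left, half, width), (top + half, left, height - half, width)]
    half = width // 2
    return [(top, left, height, half), (top, left + half, height, width - half)]


def locate_brightest(image: Grid) -> tuple[int, int]:
    """Zoom in step by step until a single pixel remains; return (row, col)."""
    box: Box = (0, 0, len(image), len(image[0]))
    while True:
        candidates = think(box)                       # Think: plan the next crops
        if not candidates:
            return box[0], box[1]
        regions = [crop(image, c) for c in candidates]  # Act: run the crops
        scores = [brightest(r) for r in regions]        # Observe: inspect results
        box = candidates[scores.index(max(scores))]     # keep the better region


if __name__ == "__main__":
    image = [[1, 2, 3],
             [4, 9, 5],
             [6, 7, 8]]
    print(locate_brightest(image))  # prints (1, 1)
```

The point of the sketch is the control flow: each answer is grounded in something the loop actually observed after acting, rather than in a single pass over the whole image.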

TranslateGemma: Bridging Language Barriers

TranslateGemma, built on Gemma 3, offers translation across 55 languages in three model sizes: 4B, 12B, and 27B parameters. The smaller sizes let developers achieve high-fidelity translation with fewer resources. TranslateGemma is also designed for further adaptation, enabling researchers to fine-tune models for specific language pairs or to improve coverage of low-resource languages.

- Supports 55 languages

- Available in multiple parameter sizes

- Focuses on efficiency without quality loss
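As a rough guide to what the three sizes imply for hardware, the sketch below estimates the memory needed for model weights alone as parameter count times bytes per parameter. The parameter counts are the nominal labels from the article, the precisions are generic examples rather than confirmed TranslateGemma releases, and the estimate excludes activations and the KV cache.

```python
# Weights-only memory estimate; all names here are illustrative.
PARAM_COUNTS = {"4B": 4e9, "12B": 12e9, "27B": 27e9}      # nominal sizes
BYTES_PER_PARAM = {"bf16": 2.0, "int8": 1.0, "int4": 0.5}  # generic precisions


def weight_memory_gb(params: float, bytes_per_param: float) -> float:
    """Return approximate weight memory in gigabytes (10^9 bytes)."""
    return params * bytes_per_param / 1e9


if __name__ == "__main__":
    for size, params in PARAM_COUNTS.items():
        row = ", ".join(
            f"{precision}: {weight_memory_gb(params, nbytes):.1f} GB"
            for precision, nbytes in BYTES_PER_PARAM.items()
        )
        print(f"{size} -> {row}")
```

Under these assumptions, the 4B model needs about 8 GB for weights at bf16, while the 27B model needs about 54 GB at the same precision.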

Gemini 3 Deep Think: Enhancing Scientific and Engineering Solutions

The Gemini 3 Deep Think mode has been upgraded to address modern scientific, research, and engineering challenges, combining deep scientific knowledge with practical engineering applications. It is now accessible to Google AI Ultra subscribers and, via the Gemini API, to select researchers.

- Targets scientific and engineering challenges

- Available to Ultra subscribers and select researchers

- Facilitates complex data interpretation

Looking Ahead

As Google continues to expand its AI capabilities, the company's focus on integrating advanced reasoning and context comprehension into practical applications is clear. These innovations not only enhance the user experience but also set a high bar for future technological developments. As these tools become more widely available, they promise to redefine how businesses and individuals interact with AI.
