Why Claude’s “Infinite” Context Window Still Won’t Make AI Autonomous

Claude’s new context, coordination, and infrastructure upgrades are real, but they do not make AI autonomous.

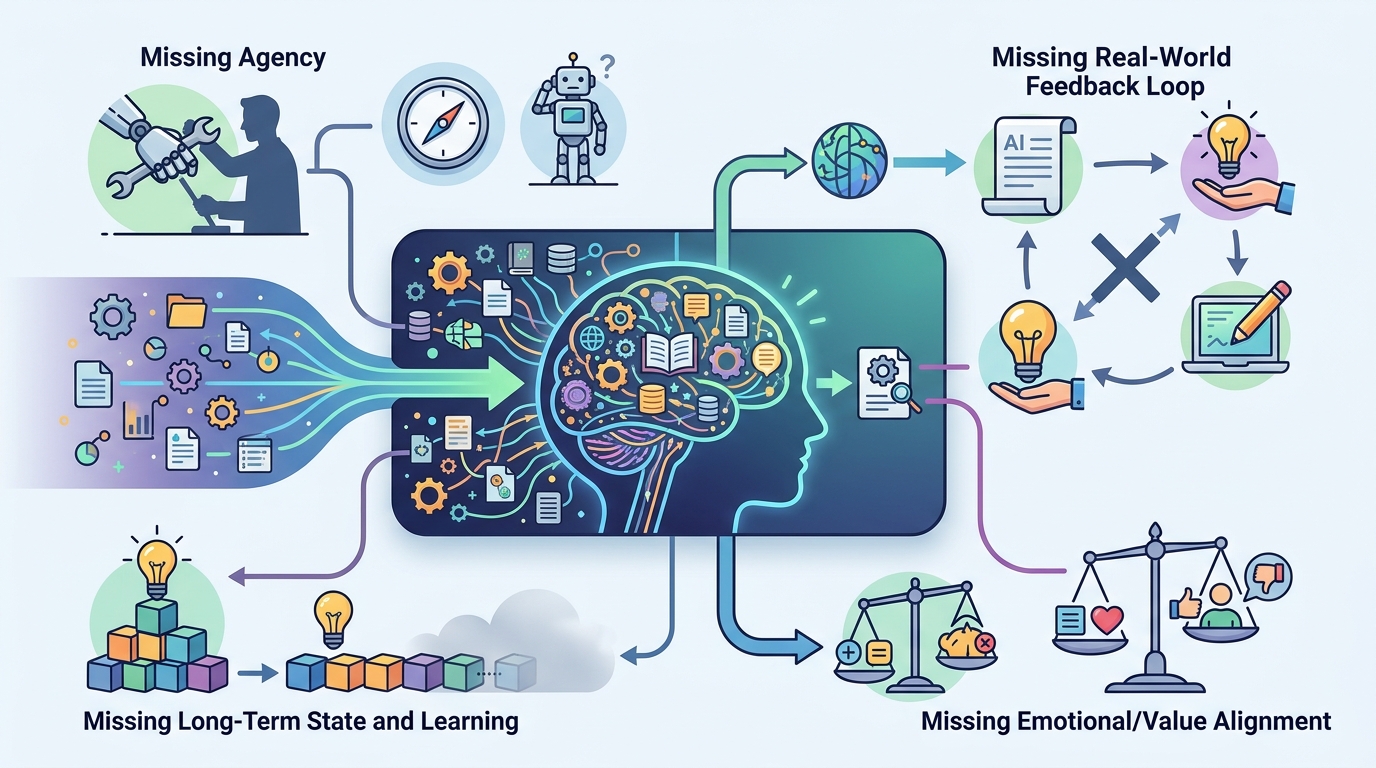

Anthropic’s latest Claude updates are being sold as a step toward autonomy, but the real story is narrower: better memory, better orchestration, and more throughput do not equal a self-running software engineer. That distinction matters because the headline feature, an “infinite” context window, sounds like a breakthrough in reasoning when it is really a breakthrough in persistence. Claude can now carry more history, coordinate more work, and operate at larger scale, yet the hard problems of judgment, verification, and accountability remain human problems.

More context fixes continuity, not understanding

The strongest case for the update is obvious. Long-running work breaks when the model forgets earlier decisions, so a larger context window helps with tasks like codebase review, project planning, and research synthesis. If a model can keep a design discussion, a bug trail, and a set of requirements in view at once, it will make fewer dumb mistakes caused by amnesia.

But continuity is not comprehension. A system that remembers more of the conversation can still misunderstand the task, overfit to stale instructions, or confidently carry forward a bad assumption for hours. In software engineering, that is dangerous because the cost of a wrong but persistent plan is higher than the cost of a short-lived error. Memory reduces friction; it does not create judgment.

Multi-agent coordination scales work, not trust

Anthropic’s push into multi-agent coordination is the more interesting change because it reflects how real teams work: one agent can draft, another can test, another can summarize. In practice, this is useful. Parallel execution can speed up analysis, and specialized agents can reduce the time a single model spends switching between roles. That is a genuine productivity gain for developers and researchers.

Still, coordination only helps if the system can tell the difference between useful delegation and synchronized failure. Multiple agents can amplify the same blind spot across a workflow, especially when they share the same model family and the same flawed assumptions. The more you distribute the work, the more important the supervisor becomes. If no one is reliably checking the chain of reasoning, you have a busy system, not a trustworthy one.
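A minimal sketch of that supervisor role, assuming each agent's output has already been collected as a plain string. The names `AgentResult`, `supervise`, and the `verify` callback are hypothetical illustrations, not part of any Anthropic API. The point it shows is that agreement between agents is treated as a warning sign, not as evidence, unless an independent check also passes.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class AgentResult:
    """Output from one agent in the workflow (hypothetical structure)."""
    agent: str
    answer: str


def supervise(results: List[AgentResult], verify: Callable[[str], bool]) -> Dict[str, object]:
    """Accept answers only when an independent check passes.

    `verify` stands in for whatever external signal is available:
    a test suite, a schema check, or a human reviewer.
    """
    all_agree = len({r.answer for r in results}) == 1
    verified = [r.agent for r in results if verify(r.answer)]

    if verified:
        status = "accepted"
    elif all_agree:
        # Every agent gave the same answer and it still failed the check:
        # the shared blind spot described above.
        status = "synchronized_failure"
    else:
        status = "needs_human_review"
    return {"status": status, "verified_agents": verified}


# Two agents from the same model family repeat the same wrong answer.
shared_mistake = [AgentResult("drafter", "5"), AgentResult("tester", "5")]
print(supervise(shared_mistake, verify=lambda answer: answer == "4")["status"])
# prints "synchronized_failure"
```

The design choice worth noting is that the supervisor never counts votes. Consensus only changes how a failure is labeled, never whether an answer is accepted.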

The infrastructure story is bigger than the product story

The doubled API limits, expanded compute, and reported access to vast GPU resources matter because they remove a practical constraint that has been holding back serious usage. Developers do not just want smarter models; they want models that stay available under load, handle larger jobs, and do not collapse when the workload becomes real. For teams building on Claude, this is the difference between a demo and a dependable tool.

But infrastructure scale is not the same as product maturity. More GPUs let Anthropic serve more requests and run heavier workloads, yet they do not solve the core problem that makes autonomous coding hard: models still need bounded goals, acceptance tests, rollback plans, and human sign-off. The market often treats raw capacity as proof of capability. It is not. Capacity is what lets a system fail at larger scale unless the workflow is designed correctly.

The counter-argument

The best argument for Anthropic’s framing is that autonomy is not a switch; it is a continuum. If a model can remember more, coordinate more, self-correct in real time, and operate inside toolchains through webhooks, then it is plainly moving toward systems that can handle longer tasks with less intervention. The “dreaming” and iterative self-correction features strengthen that case because they suggest a model that learns from its own outputs instead of treating each prompt as isolated.

That is a serious point, and it deserves respect. A system that can maintain state across sessions and refine its own work is materially different from a chatbot that forgets everything after each turn. But the leap from better workflow automation to autonomous software engineering is still too large. Self-correction only works when the model has a reliable signal for what counts as correct, and in open-ended engineering work that signal is often ambiguous. The system can iterate forever and still optimize the wrong objective. So yes, Anthropic is building the pieces of autonomy. No, it has not built autonomy.
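The dependence on a correctness signal can be made concrete with a short sketch. It assumes two hypothetical callbacks: `revise`, which stands in for the model rewriting its own draft, and `passes`, which stands in for the external check. Neither reflects how Claude's self-correction is actually implemented. The loop is bounded so that a signal which never turns green ends in escalation rather than endless iteration.

```python
from typing import Callable, Tuple


def refine(
    draft: str,
    revise: Callable[[str], str],
    passes: Callable[[str], bool],
    max_rounds: int = 3,
) -> Tuple[str, bool, int]:
    """Revise a draft until `passes` accepts it or the round budget runs out.

    Returns (final_draft, accepted, revisions_used).
    """
    for revisions_used in range(max_rounds + 1):
        if passes(draft):
            return draft, True, revisions_used
        if revisions_used < max_rounds:
            draft = revise(draft)
    # Budget exhausted: hand the draft to a human instead of looping forever.
    return draft, False, max_rounds


# Toy stand-ins: "revising" appends a character, "correct" means length >= 3.
result = refine("a", revise=lambda d: d + "a", passes=lambda d: len(d) >= 3)
print(result)  # prints ('aaa', True, 2)
```

If `passes` measures the wrong thing, the loop still terminates happily, which is exactly the failure mode described above: the iteration is only as good as the check it optimizes against.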

What to do with this

If you are an engineer, treat Claude as a high-throughput collaborator, not an independent operator. Use the longer context for project memory, use multi-agent setups for parallel analysis, and use webhook integrations to reduce manual glue work. But keep human review at the point where requirements become code, and keep automated tests as the final judge.

If you are a PM or founder, do not buy the autonomy narrative before you have a workflow that measures correctness, not just speed. The winning pattern is not “let the model do everything”; it is “let the model do more, while the system keeps it honest.”
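One way to picture "the system keeps it honest" is a merge gate that requires both passing tests and a named human reviewer. This is a sketch under stated assumptions: `Change`, `merge_decision`, and the test callables are hypothetical names chosen for illustration, and a real pipeline would wire this into its own CI and review tooling.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional


@dataclass
class Change:
    """A model-proposed change awaiting review (hypothetical structure)."""
    description: str
    approved_by: Optional[str] = None  # human reviewer's name, None until signed off


def merge_decision(change: Change, tests: Dict[str, Callable[[], bool]]) -> Dict[str, object]:
    """Allow a merge only when every test passes and a human has signed off."""
    failed: List[str] = [name for name, check in tests.items() if not check()]
    if failed:
        # Tests are the final judge: approval cannot override a failure.
        return {"merge": False, "reason": "tests failed", "failed": failed}
    if not change.approved_by:
        return {"merge": False, "reason": "awaiting human sign-off", "failed": []}
    return {"merge": True, "reason": f"approved by {change.approved_by}", "failed": []}


tests = {"parses_input": lambda: True, "handles_empty_list": lambda: True}
proposal = Change("Refactor parser, drafted by the model")
print(merge_decision(proposal, tests)["reason"])  # prints "awaiting human sign-off"
```

Speed never appears in the decision. The gate records only whether the change is correct by the tests and whether a person has accepted responsibility for it.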
