Why Google’s Hidden Gemini Live Models Matter More Than the Demo

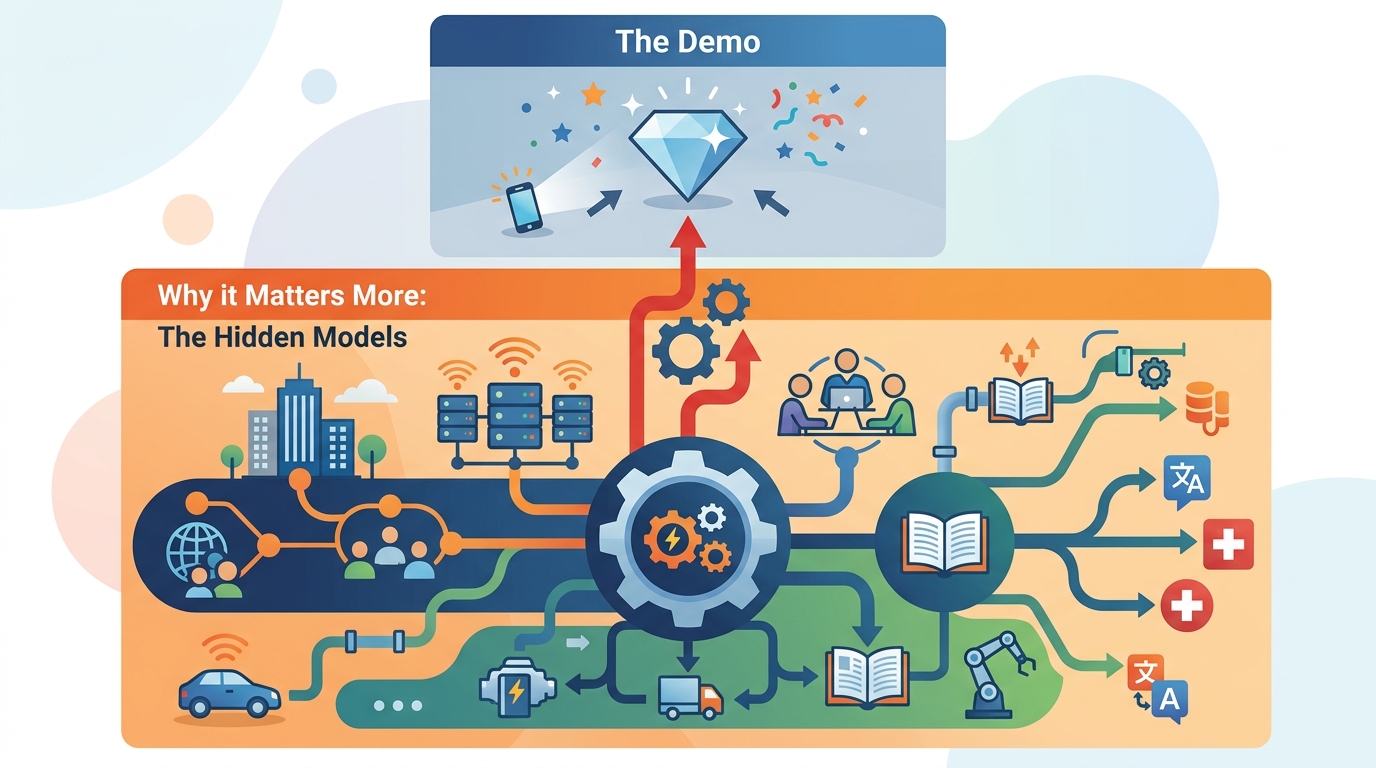

Google’s hidden Gemini Live models show the company is building a switchable AI platform, not just a chatbot.

Google is not quietly tuning Gemini Live for a nicer demo; it is laying the groundwork for a multi-model product that changes voice, reasoning, memory, and input handling on demand. That matters because the hidden selector in Google App 17.18.22 already exposes seven named variants alongside the default, two of them marked RC2, and those variants behave differently in ways users can feel: one model pulls live weather, another remembers prior details, another identifies itself as Gemini 3.1 Pro, and a thinking variant adds a reasoning layer. This is not cosmetic polish. It is the shape of a platform.

Google is turning Gemini Live into a routed system, not a monolith

The strongest evidence is the model picker itself. A server-delivered menu means Google can swap capabilities without shipping a new app, which is exactly how a platform team thinks. The list includes Default, A2A_Rev25_RC2, A2A_Rev25_RC2_Thinking, A2A_Rev23_P13n, A2A_Nitrogen_Rev23, A2A_Capybara, A2A_Capybara_Exp, and A2A_Native_Input. That is a product architecture, not a lab artifact.
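To make the "server-delivered menu" idea concrete, here is a minimal sketch of a client that builds its picker from a payload instead of a hardcoded list. The JSON shape, the `ModelOption` type, and `parse_model_menu` are assumptions made for illustration; nothing here reflects how Google actually delivers the list. Only the model names come from the selector described above.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelOption:
    model_id: str
    release_candidate: bool  # inferred from the name only, an assumption


def parse_model_menu(payload: str) -> list[ModelOption]:
    """Build the picker from whatever the server sent; nothing is hardcoded client-side."""
    data = json.loads(payload)
    return [
        ModelOption(model_id=entry["id"], release_candidate="_RC" in entry["id"])
        for entry in data["models"]
    ]


# Hypothetical payload; the shape is invented, the names are from the hidden selector.
SAMPLE_PAYLOAD = json.dumps({
    "models": [
        {"id": "Default"},
        {"id": "A2A_Rev25_RC2"},
        {"id": "A2A_Rev25_RC2_Thinking"},
        {"id": "A2A_Rev23_P13n"},
        {"id": "A2A_Nitrogen_Rev23"},
        {"id": "A2A_Capybara"},
        {"id": "A2A_Capybara_Exp"},
        {"id": "A2A_Native_Input"},
    ]
})

menu = parse_model_menu(SAMPLE_PAYLOAD)
rc_models = [m.model_id for m in menu if m.release_candidate]
```

Because the client only renders what it receives, adding or retiring an entry is a server change, not an app release.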

The RC2 tags matter even more than the names. Release Candidate 2 means Google is not merely experimenting with a concept; it is staging models for production. When two entries appear overnight and behave differently in controlled tests, the company is signaling that Gemini Live is moving from one generic assistant to a router that chooses the right model for the job. That is how you build a durable AI surface that can absorb new capabilities without breaking the user experience.

The hidden variants reveal Google’s real product strategy

The personalization model is the clearest clue. In tests, the P13n variant asked which time zone the user was in instead of assuming one, then remembered earlier personal details and used them later. The default Gemini Live model refused to do that. That difference is not a gimmick. It shows Google is separating generic assistant behavior from memory-aware, context-sensitive behavior, which is the only sane way to offer personalization without forcing every conversation into the same privacy and latency tradeoffs.

Capybara is just as revealing. It identified itself as Gemini 3.1 Pro rather than the usual Flash Live model, which suggests Google is testing model-tiering inside the same conversational shell. In plain English: users will not just get “Gemini Live.” They will get a stack of specialized engines for speed, memory, reasoning, and input mode. That is the right move because live voice, live retrieval, and deeper reasoning are different workloads. Pretending one model should do all three is how products get sluggish, inconsistent, and expensive.

Google is preparing for I/O with infrastructure, not slogans

The timing is the tell. The model list is server-side, two variants are already at RC2, and I/O 2026 starts May 19. That combination points to a staged reveal, likely one that shows Gemini Live switching between voices and capabilities in real time. Google has done this before: it often uses developer events to turn backend work into a product story. Here, the backend is the story. The company wants to demonstrate that Gemini can route between models the way a modern app routes between services.

The broader context reinforces that reading. Technobezz also reports on Gemini Omni, Google’s next-generation video model, and on usage limits that are already biting hard on the AI Pro plan. That tells you Google is not only adding features; it is managing compute like a scarce resource. Multi-model routing is the answer. If one task needs a lightweight voice model and another needs a thinking model, Google can allocate the expensive path only when needed. That is how it keeps AI features shippable at consumer scale.
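A rough sketch of what "allocate the expensive path only when needed" could look like in code follows. The task categories, the cost units, and the pairing of tasks to codenames are all hypothetical; this illustrates cost-aware routing in general and is not a description of Google's router.

```python
from dataclasses import dataclass
from enum import Enum


class Task(Enum):
    VOICE_CHAT = "voice_chat"
    PERSONAL_RECALL = "personal_recall"
    DEEP_REASONING = "deep_reasoning"


@dataclass(frozen=True)
class Route:
    model: str
    relative_cost: int  # made-up units, not real pricing


# Hypothetical routing table; the task-to-codename pairing is illustrative only.
ROUTES = {
    Task.VOICE_CHAT: Route("Default", 1),
    Task.PERSONAL_RECALL: Route("A2A_Rev23_P13n", 2),
    Task.DEEP_REASONING: Route("A2A_Rev25_RC2_Thinking", 5),
}
FALLBACK = ROUTES[Task.VOICE_CHAT]


def pick_route(task: Task, budget: int) -> Route:
    """Return the task's preferred route, or the cheap fallback if it exceeds the budget."""
    preferred = ROUTES[task]
    return preferred if preferred.relative_cost <= budget else FALLBACK
```

With a generous budget, a reasoning request reaches the thinking variant; with a tight one, it falls back to the default model rather than failing.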

The counter-argument

The best objection is that hidden models do not guarantee shipping quality. Google has a long record of teasing features, running server-side experiments, and then changing course. A menu full of codenames can become a graveyard of abandoned ideas. And even if the models launch, splitting Gemini Live into many variants can confuse users, create inconsistent behavior, and make the assistant feel less reliable than a single default model.

That criticism is fair on one point: model sprawl can absolutely wreck the user experience if Google exposes too much complexity. But that is not what this evidence shows. The selector is hidden, server-controlled, and already segmented by task. That is exactly how you manage complexity before exposing it. Google is not asking users to pick from seven nerd knobs; it is building the routing layer that will let the product choose for them. The risk is real, but the architecture is right.

What to do with this

If you are an engineer, PM, or founder watching Google, treat this as a blueprint for AI product design: separate the interface from the model, route by task, and keep the expensive reasoning path off the default path unless it is needed. Build for server-side model swaps, explicit capability tiers, and memory policies that are visible in testing before they are visible in the UI. The lesson is not that Google has seven hidden models. The lesson is that the winning AI products will stop pretending one model can do everything.
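For readers who want a starting point, the sketch below shows one way to keep the interface separate from the model and to make the memory policy explicit. `Backend`, `EchoBackend`, and `LiveSession` are invented names, and the stub backend merely echoes its input; treat it as a toy under those assumptions, not a production design.

```python
from dataclasses import dataclass, field
from typing import Protocol


class Backend(Protocol):
    """Anything that can answer a prompt, given the facts the session chose to retain."""
    name: str

    def reply(self, prompt: str, memory: list[str]) -> str: ...


@dataclass
class EchoBackend:
    """Stand-in model for illustration; a real backend would call a model service."""
    name: str

    def reply(self, prompt: str, memory: list[str]) -> str:
        return f"[{self.name}] {prompt} (remembered: {len(memory)})"


@dataclass
class LiveSession:
    """The conversational shell: the user talks to this, never to a model directly."""
    backend: Backend
    remember: bool = False  # explicit memory policy, off by default
    memory: list[str] = field(default_factory=list, init=False)

    def swap(self, backend: Backend, remember: bool) -> None:
        """Server-side swap: change the model and memory policy without touching the UI."""
        self.backend = backend
        self.remember = remember
        if not remember:
            self.memory.clear()

    def say(self, prompt: str) -> str:
        answer = self.backend.reply(prompt, list(self.memory))
        if self.remember:
            self.memory.append(prompt)
        return answer


session = LiveSession(EchoBackend("default"))
first = session.say("I live in Oslo")
session.swap(EchoBackend("personalized"), remember=True)
second = session.say("I live in Oslo")
third = session.say("What time is it for me?")
```

The design choice worth copying is that the memory policy lives in the session, where it can be tested and audited, rather than being an implicit property of whichever model happens to be loaded.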
