Tag

context window

A context window is the amount of text, code, or conversation a model can hold at once, measured in tokens. It shapes long-document analysis, debugging, agent memory, and multi-step workflows, and it forces trade-offs among token limits, cost, latency, and retained state.

3 articles

Model Releases/Apr 13

GPT-5.4 Scores 97.6 in Knowledge Benchmarks

GPT-5.4 tops knowledge benchmarks with 97.6, ranks #2 overall on BenchLM, and posts a 1.05M-token context window.

Tools & Apps/Apr 1

Claude Code Setup Guide for Research Workflows

A practical setup guide for Claude Code in research workflows, with terminal tips, context-window advice, and pricing details.

AI Agent/Apr 1

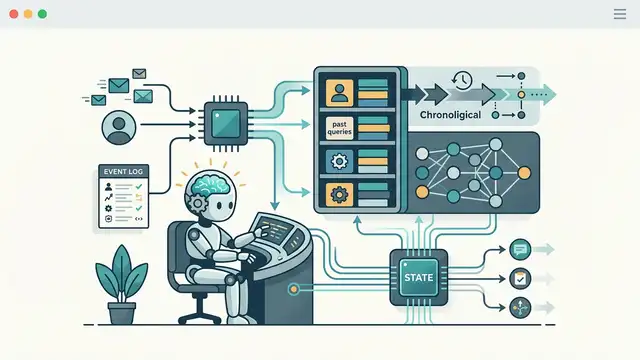

Agent Memory: How AI Agents Keep State

Agent memory lets AI agents retain state across tasks. Here’s how short-term, long-term, and external memory shape real agent systems.