Tag

distillation

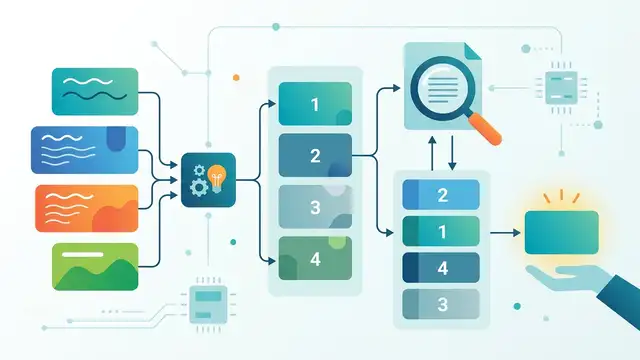

Distillation transfers a larger teacher model's behavior (ranking preferences, generation patterns, or reasoning signals) into a smaller student model. Teams use it to cut inference cost and latency while keeping small language models (SLMs) useful for reranking, generation, and cross-architecture alignment.
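To make the general idea concrete, here is a minimal sketch of classic logit distillation. The student is trained to match the teacher's temperature-softened output distribution through a KL-divergence loss, scaled by T² so gradient magnitudes stay comparable across temperatures. This is the textbook formulation, not the method of either article below. The function names and the default temperature of 2.0 are illustrative assumptions.

```python
import math
from typing import Sequence


def softmax(logits: Sequence[float], temperature: float = 1.0) -> list[float]:
    """Temperature-scaled softmax, shifted by the max logit for numerical stability."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(
    teacher_logits: Sequence[float],
    student_logits: Sequence[float],
    temperature: float = 2.0,  # illustrative default; higher T softens both distributions
) -> float:
    """KL(teacher || student) on temperature-softened distributions, scaled by T^2."""
    if len(teacher_logits) != len(student_logits):
        raise ValueError("teacher and student must score the same set of outputs")
    p = softmax(teacher_logits, temperature)  # soft targets from the teacher
    q = softmax(student_logits, temperature)  # student's softened predictions
    # Terms with p_i == 0 contribute nothing to the KL divergence.
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return kl * temperature ** 2


if __name__ == "__main__":
    teacher = [2.0, 1.0, 0.1]
    print(distillation_loss(teacher, [0.5, 0.5, 0.5]))  # positive: student disagrees
    print(distillation_loss(teacher, teacher))          # 0.0: distributions match
```

The same loss applies whether the "outputs" are vocabulary tokens or a set of candidates being reranked; only the meaning of each logit changes.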

2 articles

Research/Apr 30

Select-to-Think: Let SLMs Re-rank Themselves

A new method lets small language models re-rank their own candidates instead of calling an LLM at inference time.

Research/Apr 30

TIDE distills diffusion LLMs across architectures

Adds noise-aware weighting and tokenizer-aware objectives to cross-architecture distillation of diffusion LLMs, improving a 0.6B-parameter student.