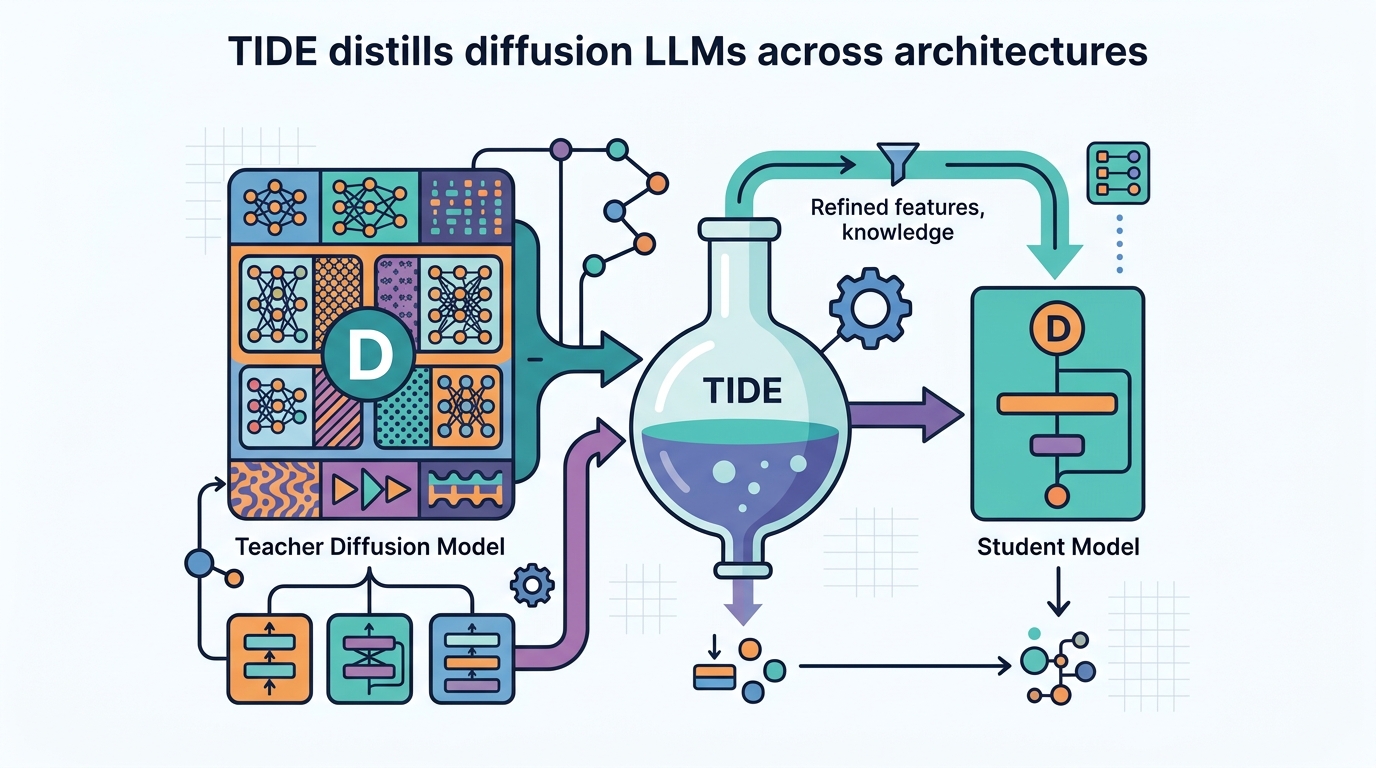

TIDE distills diffusion LLMs across architectures

TIDE distills diffusion LLMs across architectures, adding noise-aware weighting and tokenizer-aware objectives to improve a 0.6B student.

“Turning the TIDE: Cross-Architecture Distillation for Diffusion Large Language Models” addresses a gap that most distillation work has ignored: what happens when the teacher and student do not share the same architecture. In diffusion LLMs, that mismatch matters because the models may differ in attention design, in tokenizer, and therefore in how they split and represent text. TIDE is the paper’s answer to that problem.

The practical angle is straightforward. Diffusion large language models already promise parallel decoding and bidirectional context, but the strongest systems still tend to be huge. If you want a smaller model that keeps more of that quality, you need distillation. The catch is that existing methods mostly assume the student is just a shrunken version of the teacher. This paper argues that assumption breaks down once the architectures diverge.

What problem this paper is trying to fix

Traditional distillation methods for diffusion LLMs (dLLMs) focus on reducing inference steps inside one architecture. That can help speed and efficiency, but it does not solve cross-architecture transfer, where the teacher and student are structurally different. The abstract specifically calls out differences in architecture, attention mechanism, and tokenizer as the hard parts.

That matters because a student model is not only trying to copy answers. It is trying to learn from a teacher whose internal representation of text may not line up cleanly with its own. If the token boundaries differ, or if the masking and decoding behavior differ, the teacher’s guidance can become noisy or misleading. In other words, the usual “just imitate the logits” approach is not enough.

TIDE is presented as the first framework aimed at this cross-architecture dLLM distillation setting. The paper’s goal is not to make a single model faster in isolation, but to make knowledge transfer work when the source and target models are genuinely heterogeneous.

How TIDE works in plain English

The framework has three modular pieces, and each one targets a different failure mode in distillation. The first is TIDAL, which modulates distillation strength across both training progress and diffusion timestep. That design is meant to reflect the teacher’s noise-dependent reliability: the teacher is not equally trustworthy at every timestep, so the student should not be trained as if it were.
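The abstract does not give TIDAL's actual schedule, so the following is only a minimal sketch of what a weight that depends on both training progress and noise level could look like. The function names, the cosine ramp, the `(1 - noise_level) ** sharpness` reliability term, and the direction of the ramp are all assumptions made for illustration, not the paper's formula.

```python
import math


def distill_weight(progress: float, noise_level: float,
                   w_max: float = 1.0, sharpness: float = 2.0) -> float:
    """Hypothetical weight on the distillation term (not TIDAL's real formula).

    progress:    fraction of training completed, in [0, 1]
    noise_level: fraction of tokens masked at this diffusion timestep, in [0, 1]
    """
    if not (0.0 <= progress <= 1.0 and 0.0 <= noise_level <= 1.0):
        raise ValueError("progress and noise_level must lie in [0, 1]")
    # Assumed training-progress factor: cosine ramp from 0 up to 1.
    ramp = 0.5 * (1.0 - math.cos(math.pi * progress))
    # Assumed reliability factor: trust the teacher less under heavier masking.
    reliability = (1.0 - noise_level) ** sharpness
    return w_max * ramp * reliability


def total_loss(task_loss: float, distill_loss: float,
               progress: float, noise_level: float) -> float:
    """Combine the student's own loss with the weighted distillation loss."""
    return task_loss + distill_weight(progress, noise_level) * distill_loss
```

The point of the sketch is the shape of the interface: the distillation term is scaled per sample by something that sees both the training step and the timestep, rather than by a single constant.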

The second component is CompDemo, which enriches the teacher’s context through complementary mask splitting. The intuition is simple: if a diffusion model is asked to predict under heavy masking, the teacher can struggle because it sees too little context. By splitting masks in a complementary way, CompDemo tries to give the teacher a better contextual view and improve predictions where masking is severe.
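One way to read "complementary mask splitting" is sketched below: the masked positions are divided into two disjoint halves, and the teacher is queried twice, each time with one half revealed as extra context while it predicts the other half. This reading, the `complementary_views` helper, and the `[MASK]` placeholder are assumptions for illustration; the abstract does not spell out the procedure.

```python
import random

MASK = "[MASK]"


def complementary_views(tokens, masked_positions, seed=0):
    """Split masked positions into two disjoint parts and build one view per part.

    Returns ((view_a, part_a), (view_b, part_b)). In view_a only part_a stays
    masked, so the tokens at part_b are visible as context, and vice versa.
    """
    rng = random.Random(seed)
    positions = sorted(masked_positions)
    rng.shuffle(positions)
    half = len(positions) // 2
    part_a, part_b = set(positions[:half]), set(positions[half:])

    def view(still_masked):
        return [MASK if i in still_masked else tok
                for i, tok in enumerate(tokens)]

    return (view(part_a), part_a), (view(part_b), part_b)


tokens = ["def", "add", "(", "a", ",", "b", ")", ":"]
(view_a, part_a), (view_b, part_b) = complementary_views(tokens, {1, 3, 5, 6})
```

Under this reading, each teacher pass sees half as many masked tokens as the student's input, and the teacher's predictions for the full masked set are assembled from the two passes.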

The third piece, Reverse CALM, is a cross-tokenizer objective. The abstract says it inverts chunk-level likelihood matching, and the stated benefits are bounded gradients and dual-end noise filtering. In practical terms, this sounds like a way to make training more stable when teacher and student tokenization schemes do not match neatly.
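To make "chunk-level" concrete, here is a small sketch of how likelihoods from two different tokenizers can be put on a common footing: find the character offsets where both tokenizations have a boundary, then sum token log-probabilities inside each shared chunk. The helper names and the toy numbers are hypothetical, and the sketch stops before the loss itself, because the abstract does not say how Reverse CALM inverts the matching or how it bounds the gradients.

```python
def boundaries(tokens):
    """Character offsets at which each token ends."""
    ends, pos = [], 0
    for tok in tokens:
        pos += len(tok)
        ends.append(pos)
    return ends


def chunk_logprobs(tokens, logprobs, shared_ends):
    """Sum token log-probs inside each chunk delimited by shared_ends."""
    sums, acc, pos, k = [], 0.0, 0, 0
    for tok, lp in zip(tokens, logprobs):
        pos += len(tok)
        acc += lp
        if k < len(shared_ends) and pos == shared_ends[k]:
            sums.append(acc)
            acc, k = 0.0, k + 1
    return sums


def align(teacher_tokens, teacher_lp, student_tokens, student_lp):
    """Return per-chunk log-likelihoods for teacher and student on shared chunks."""
    if "".join(teacher_tokens) != "".join(student_tokens):
        raise ValueError("both tokenizations must spell the same text")
    shared = sorted(set(boundaries(teacher_tokens)) & set(boundaries(student_tokens)))
    return (chunk_logprobs(teacher_tokens, teacher_lp, shared),
            chunk_logprobs(student_tokens, student_lp, shared))


# Toy example: the same word split differently by two hypothetical tokenizers.
teacher_chunks, student_chunks = align(
    ["un", "believ", "able"], [-0.5, -1.0, -0.25],
    ["unbe", "liev", "able"], [-1.0, -1.5, -0.5],
)
print(teacher_chunks)  # [-1.5, -0.25]
print(student_chunks)  # [-2.5, -0.5]
```

Here the two tokenizers only agree on boundaries after "unbeliev" and at the end of the word, so the comparison happens over two chunks rather than three tokens. Any objective built on top then compares one number per chunk from each model, regardless of how many tokens each model used to cover it.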

Put together, the three modules address three different issues: when to trust the teacher, how to make the teacher’s context more useful, and how to compare outputs across different tokenizers without the optimization blowing up. That is a more realistic distillation story than assuming architecture mismatch is a minor detail.

What the paper actually shows

The concrete result in the abstract is that TIDE distills 8B dense and 16B MoE teachers into a 0.6B student through two heterogeneous pipelines. Across eight benchmarks, the distilled system outperforms the baseline by an average of 1.53 points. The abstract does not list all eight benchmark names or the full per-benchmark breakdown, so those details are not available here.

The clearest single number is in code generation. On HumanEval, the score reaches 48.78, compared with 32.3 for the autoregressive (AR) baseline, a gain of 16.48 points. That is a notable jump, and it suggests the method does not just lift the benchmark average but can materially help a downstream task that developers actually care about.

Still, the source material is limited to the abstract and notes, so we should be careful about overreading the result. We do not get training cost, wall-clock time, ablation details, or a comparison against a broader set of distillation baselines. We also do not know how the method behaves outside the reported heterogeneous pipelines, or whether the same gains carry over to other student sizes.

- Teacher sizes reported: 8B dense and 16B MoE

- Student size reported: 0.6B

- Reported average gain: 1.53 points across eight benchmarks

- HumanEval: 48.78 vs. 32.3 for the AR baseline
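For scale, the arithmetic behind these figures can be checked in a few lines. The only inputs are the numbers reported above; the variable names are illustrative, and the size ratios use the headline parameter counts, since the abstract as summarized here does not state how many of the MoE teacher's parameters are active per token.

```python
humaneval_tide = 48.78
humaneval_ar_baseline = 32.3

absolute_gain = humaneval_tide - humaneval_ar_baseline
relative_gain = absolute_gain / humaneval_ar_baseline

print(f"absolute gain: {absolute_gain:.2f} points")  # absolute gain: 16.48 points
print(f"relative gain: {relative_gain:.1%}")         # relative gain: 51.0%

student_params = 0.6e9
size_ratios = {
    name: teacher_params / student_params
    for name, teacher_params in [("8B dense", 8e9), ("16B MoE", 16e9)]
}
for name, ratio in size_ratios.items():
    # Ratio of headline parameter counts, teacher to student.
    print(f"{name}: {ratio:.1f}x the student's parameter count")
```

So the HumanEval result is roughly a 51% relative improvement over the AR baseline, achieved by a student about 13 to 27 times smaller than its teachers by headline parameter count.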

Why developers should care

If you build or deploy language models, the interesting part here is not just that distillation works. It is that distillation can be adapted to the messy reality of model heterogeneity. Teams rarely get to choose a teacher and student that share the same tokenizer, the same attention pattern, and the same architecture. In practice, you often want to compress across model families, not within one neatly aligned family.

TIDE suggests that cross-architecture compression may need explicit handling at multiple levels: timestep-aware weighting, context enrichment under masking, and tokenizer-aware matching. That is useful design guidance even if you never use this exact framework. It points to a broader lesson: when a teacher and student disagree on representation, the distillation objective itself probably needs to become representation-aware.

The limitation is also important. This summary draws only on the arXiv abstract, so the evidence we can verify here is narrow. We know the method exists, we know the three modules, and we know the headline results. We do not yet know how robust the gains are under different datasets, how sensitive the method is to hyperparameters, or whether the added complexity is worth it for every deployment scenario.

Even so, the paper is a useful signal for anyone following diffusion LLMs. The field is moving from “can we distill these models at all?” to “can we distill them when the teacher and student do not even speak the same internal language?” TIDE’s answer is yes, if you make the distillation process aware of noise, masking, and tokenization differences.
