A simpler beamspace denoiser for mmWave MIMO

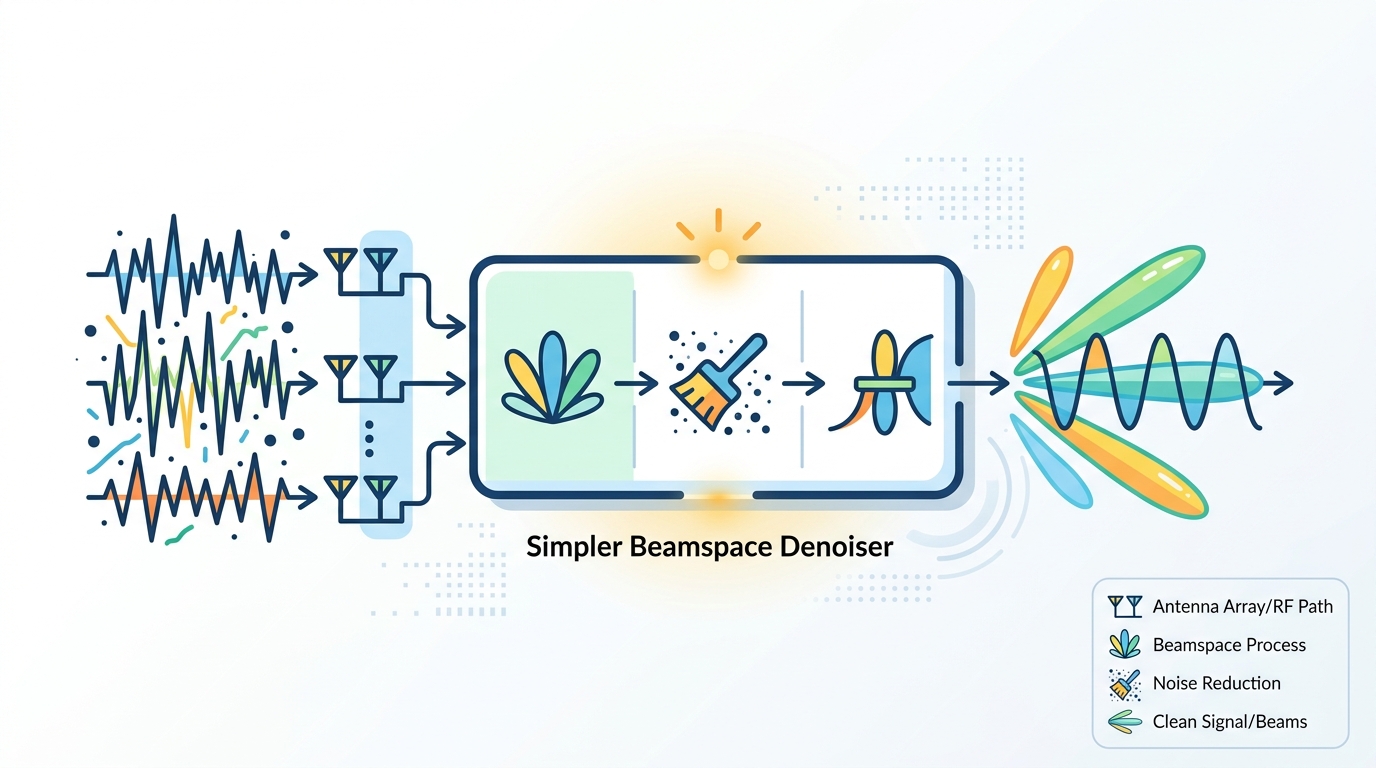

A beamspace denoiser for mmWave MIMO that avoids heavy math, models low-resolution ADC noise, and targets FPGA-friendly deployment.

This paper proposes a low-complexity beamspace denoiser for mmWave massive MIMO.

In mmWave massive MIMO, channel estimation gets messy fast: the channel is sparse, the antenna count is high, and low-resolution ADCs add extra distortion. This paper tackles that exact combination with a denoising method designed to be simple enough for real hardware, not just elegant on paper.

The practical angle matters. If you are building receivers, baseband pipelines, or FPGA prototypes, the difference between an algorithm that needs matrix inversions and one that uses a hard threshold can be the difference between “interesting” and “deployable.”

What problem this paper is trying to fix

The paper focuses on mmWave massive MIMO systems that use low-resolution analog-to-digital converters. Those ADCs help reduce power and hardware cost, but they also introduce quantization noise that makes channel estimation harder.

At the same time, mmWave channels are naturally sparse in the beamspace domain. That sparsity is useful, because it means most beamspace components are weak or noise-dominated, and only a smaller set carry meaningful signal energy. The challenge is to separate those two groups without paying a big computational price.
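To make the sparsity claim concrete, here is a minimal Python sketch that is not taken from the paper. It assumes a half-wavelength uniform linear array, two hypothetical propagation paths, and a unitary DFT as the antenna-to-beamspace transform. The function names and all numeric values are illustrative.

```python
import cmath
import math

N = 64  # number of antennas (illustrative value)

# Hypothetical paths as (gain, sin(angle of arrival)); not from the paper.
PATHS = [(1.0, 0.3), (0.6, -0.5)]


def antenna_channel(n_antennas, paths):
    """Antenna-domain channel of a half-wavelength uniform linear array."""
    return [
        sum(g * cmath.exp(-1j * math.pi * n * s) for g, s in paths)
        for n in range(n_antennas)
    ]


def to_beamspace(h):
    """Unitary DFT, written as a plain O(N^2) sum for readability."""
    n = len(h)
    scale = 1.0 / math.sqrt(n)
    return [
        scale * sum(h[m] * cmath.exp(-2j * math.pi * k * m / n) for m in range(n))
        for k in range(n)
    ]


def top_k_energy_fraction(x, k):
    """Share of the total energy carried by the k strongest entries."""
    energies = sorted((abs(v) ** 2 for v in x), reverse=True)
    return sum(energies[:k]) / sum(energies)


h_antenna = antenna_channel(N, PATHS)
h_beam = to_beamspace(h_antenna)
print(f"antenna domain, 4 strongest of {N}: {top_k_energy_fraction(h_antenna, 4):.2f}")
print(f"beamspace,      4 strongest of {N}: {top_k_energy_fraction(h_beam, 4):.2f}")
```

In the antenna domain the energy is spread across all elements, while in beamspace a handful of beams carry most of it. The first path does not sit exactly on a DFT bin, so a few neighbouring beams pick up leakage. The rest are close to empty, which is the property a thresholding denoiser can exploit.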

The authors’ goal is to denoise the beamspace channel in a way that respects both sides of the problem: the sparse structure of the channel and the extra distortion caused by low-resolution ADCs.

How the method works in plain English

The core idea is to treat denoising as a Bayesian binary hypothesis testing problem. In simple terms, each beamspace component is judged as either signal-dominant or noise-dominant.

To make that decision, the method assumes a Bernoulli-complex Gaussian prior. That is a statistical way of saying that the beamspace channel is mostly sparse, with a small number of active components following a complex Gaussian distribution when they are present.
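Written out, a Bernoulli-complex Gaussian prior on a single beamspace component usually takes the following form. The symbols are assumptions introduced here for illustration and may differ from the paper's notation: $h_k$ is the $k$-th beamspace component, $\rho$ is the probability that it is active, and $\sigma_h^2$ is the variance of an active component.

```latex
p(h_k) = (1 - \rho)\,\delta(h_k) + \rho\,\mathcal{CN}\!\left(h_k;\, 0,\, \sigma_h^2\right),
\qquad 0 < \rho < 1
```

The point mass $\delta(h_k)$ at zero captures the inactive components, and a small $\rho$ encodes the expectation that only a few beams carry signal.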

Instead of modeling thermal noise and quantization noise separately, the paper combines them into a single composite noise term. That simplification is important because it keeps the analysis manageable while still accounting for the distortion introduced by the ADCs.
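One common way to express such a composite term is sketched below. This is an assumption for illustration, not the paper's stated model: $y_k$ is the observed beamspace component, $n_k$ the thermal noise, $q_k$ the quantization distortion, and the two are treated as uncorrelated and jointly approximated as Gaussian.

```latex
y_k = h_k + w_k, \qquad w_k = n_k + q_k, \qquad
w_k \sim \mathcal{CN}\!\left(0,\, \sigma_w^2\right), \qquad
\sigma_w^2 = \sigma_n^2 + \sigma_q^2
```

Under this approximation the denoiser only needs one noise variance, $\sigma_w^2$, rather than separate treatments of the two noise sources.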

From this model, the authors derive a closed-form threshold and a hard-thresholding denoising rule. In practice, that means the algorithm can make a keep-or-discard decision for each component without iterative optimization or parameter searching.
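The keep-or-discard rule can be sketched with the textbook MAP test for a Bernoulli-complex Gaussian component in complex Gaussian noise. This is a hedged illustration, not the paper's derived threshold: the observation model `y = h + w`, the parameter names `rho`, `sigma_h2`, and `sigma_w2`, and all numeric values are assumptions made here.

```python
import math
import random


def map_threshold(rho, sigma_h2, sigma_w2):
    """Threshold on |y|^2 for the MAP test 'noise only' vs 'signal plus noise'.

    Assumed model (not necessarily the paper's): y = h + w, w ~ CN(0, sigma_w2),
    h = 0 with probability 1 - rho, h ~ CN(0, sigma_h2) with probability rho.
    """
    log_term = math.log((1 - rho) / rho * (sigma_h2 + sigma_w2) / sigma_w2)
    tau = sigma_w2 * (sigma_h2 + sigma_w2) / sigma_h2 * log_term
    return max(tau, 0.0)  # a non-positive value means "keep every component"


def hard_threshold(y, tau):
    """Keep a component if its energy exceeds tau, otherwise set it to zero."""
    return [v if abs(v) ** 2 > tau else 0j for v in y]


def complex_gaussian(rng, variance):
    """Draw one sample from CN(0, variance)."""
    s = math.sqrt(variance / 2)
    return complex(rng.gauss(0, s), rng.gauss(0, s))


def mse(a, b):
    """Mean squared error between two equal-length complex sequences."""
    return sum(abs(x - y) ** 2 for x, y in zip(a, b)) / len(a)


# Illustrative parameters: 5% active beams, unit signal power, noise power 0.05.
RHO, SIGMA_H2, SIGMA_W2, N = 0.05, 1.0, 0.05, 2000
rng = random.Random(0)

h = [complex_gaussian(rng, SIGMA_H2) if rng.random() < RHO else 0j for _ in range(N)]
y = [v + complex_gaussian(rng, SIGMA_W2) for v in h]

tau = map_threshold(RHO, SIGMA_H2, SIGMA_W2)
h_hat = hard_threshold(y, tau)

print(f"threshold on |y|^2: {tau:.4f}")
print(f"MSE before denoising: {mse(y, h):.5f}")
print(f"MSE after denoising:  {mse(h_hat, h):.5f}")
```

Once the threshold is computed, the per-component work is a single energy comparison, so the denoising step itself is linear in the number of beamspace components and involves no matrix inversion or iteration.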

That design choice is the main engineering win. The paper explicitly says the method avoids computationally intensive operations such as matrix inversion, iterative optimization, and parameter searching, and that it achieves near-linear computational complexity with respect to the number of antennas.

What the paper actually shows

The paper says the resulting algorithm achieves performance comparable to computationally intensive existing approaches while significantly reducing computational complexity. That is the central tradeoff: similar denoising quality, lower cost.

On the implementation side, the authors also develop a hardware-efficient VLSI architecture and implement it on an AMD-Xilinx Kintex UltraScale+ KCU116 FPGA platform. The FPGA work is not an afterthought here; it is part of the claim that the algorithm is practical for real systems.

The hardware design relies on implementation-aware simplifications and an efficient processing structure. According to the abstract, this yields significantly lower latency and hardware resource utilization than existing hardware implementations, and it scales sublinearly as the number of antennas increases.

One thing the abstract does not give is benchmark numbers. It contains no explicit latency figures, resource counts, or performance metrics, so you should read the paper itself for the exact evaluation setup and results.

- Beamspace sparsity is the key structural assumption.

- Low-resolution ADC distortion is folded into a composite noise model.

- A closed-form threshold replaces heavier optimization steps.

- FPGA implementation is part of the contribution, not just a proof of concept.

Why developers should care

If you work on wireless DSP, this paper is interesting because it attacks the usual gap between algorithm quality and hardware cost. A lot of channel estimation methods look good in simulation but become awkward once you have to map them onto an FPGA or other constrained platform.

This work points in the opposite direction: start with a sparse beamspace model, keep the decision rule simple, and design for hardware from the start. That makes it relevant for teams building mmWave receivers, prototyping baseband accelerators, or evaluating low-power designs where ADC resolution is intentionally limited.

The paper also reinforces a broader lesson: sometimes the best way to handle hardware distortion is not to model every effect in full detail, but to build a simplified statistical model that is good enough to support a fast decision rule.

Limitations and open questions

The source material is clear about the method and its hardware orientation, but it leaves several questions open. The abstract does not specify the exact simulation settings, the size of the performance gap versus baselines, or how the method behaves across different channel conditions and ADC resolutions.

It also does not tell us how sensitive the closed-form threshold is to modeling assumptions. Since the method depends on a Bernoulli-complex Gaussian prior and a composite noise approximation, the real-world fit of those assumptions will matter.

Finally, the paper claims sublinear scaling in the FPGA implementation as antenna counts increase, but the abstract does not provide the detailed resource breakdown needed to judge how that claim holds up in practice. For engineers, that means the idea is promising, but the implementation details still matter a lot.

Even with those caveats, the direction is useful: a beamspace denoiser that is built around sparsity, low-resolution ADCs, and hardware efficiency is exactly the kind of approach that can move mmWave signal processing closer to deployable systems.
