Taming Black-Box LLM Inference Scheduling

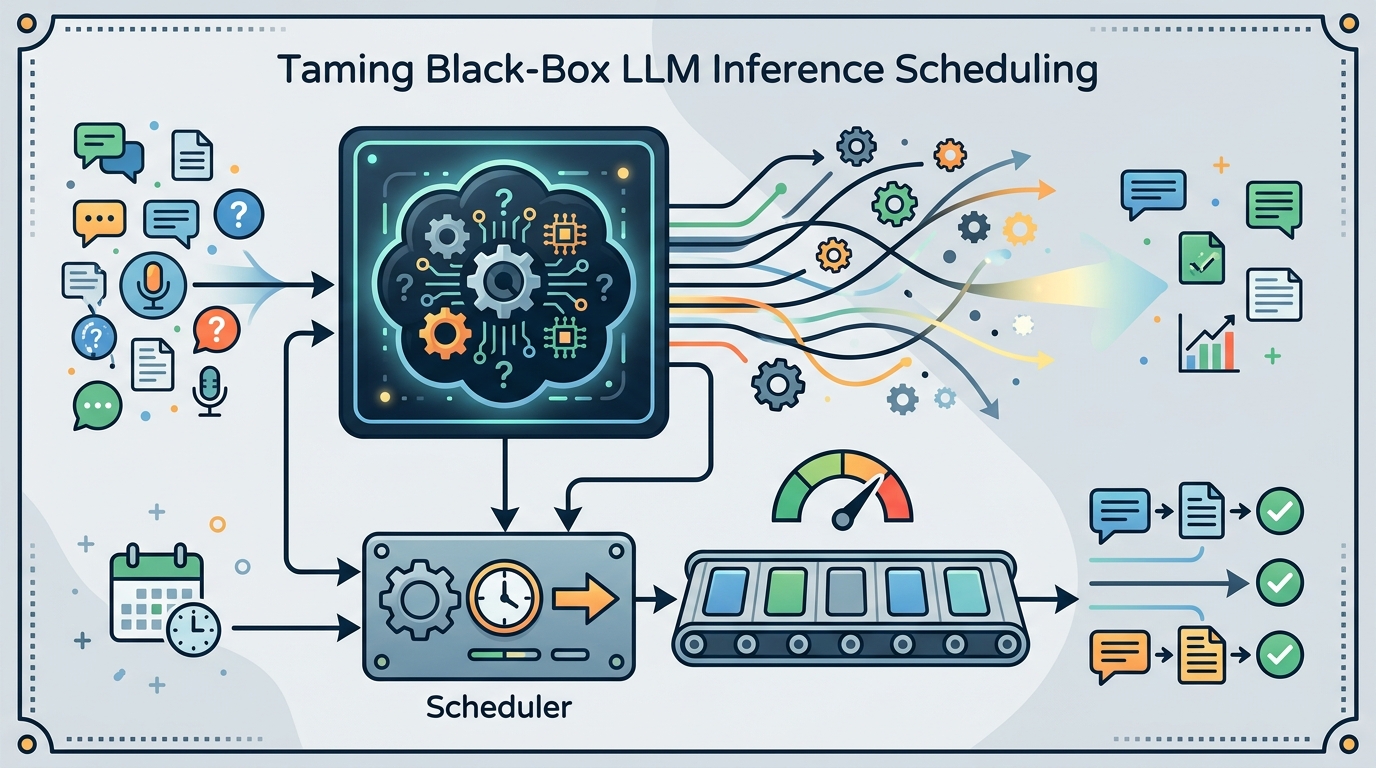

A scheduling approach for black-box LLM inference that uses predicted output lengths to reduce queueing friction at scale.


The paper "Scheduling the Unschedulable: Taming Black-Box LLM Inference at Scale" looks at a practical bottleneck in LLM serving: once a request is submitted, the system often does not know how long generation will run. That makes scheduling hard, especially when the goal is to keep throughput high and latency under control at scale.

The core idea is simple, and it matters operationally. If output token counts can be predicted at submission time, the scheduler can make better decisions before the request starts running. The paper is about turning that partial foresight into a more workable inference pipeline for black-box models, where the server may lack deep visibility into model internals.

What problem this paper is trying to fix


LLM inference is not like serving a fixed-size API response. One prompt can finish quickly, another can keep generating for much longer, and the server only learns the true length as decoding progresses. That uncertainty makes standard scheduling harder, because the system has to decide which requests to run, when to batch them, and how to avoid long jobs clogging the line.

This is especially painful for black-box LLM inference. In that setting, the operator may not control the model internals or have access to detailed runtime signals. The result is a scheduling problem that is, in the paper's framing, almost unschedulable in the usual sense: the system has to act with incomplete information.

For developers, this is not an abstract research issue. It shows up any time you are running shared inference infrastructure, building an API for multiple tenants, or trying to keep latency predictable when request lengths vary widely. If the scheduler guesses wrong, short requests wait behind long ones, and the whole service feels slower than it should.
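A toy calculation makes head-of-line blocking concrete. It assumes requests run one at a time and each costs time proportional to its output tokens. Real servers batch continuously, so this exaggerates the effect. The lengths are hypothetical and are not from the paper.

```python
def mean_wait(durations):
    """Average time each request spends waiting before it starts,
    when requests run one at a time in the given order."""
    waited, clock = 0, 0
    for d in durations:
        waited += clock  # this request waited for everything scheduled before it
        clock += d
    return waited / len(durations)


# Hypothetical output lengths (tokens), in arrival order: a long job arrives first.
arrivals = [2000, 50, 80, 40]

fcfs = mean_wait(arrivals)          # first-come, first-served
sjf = mean_wait(sorted(arrivals))   # shortest job first, with perfect knowledge of lengths

print(f"FCFS mean wait: {fcfs} tokens")  # FCFS mean wait: 1545.0 tokens
print(f"SJF mean wait: {sjf} tokens")    # SJF mean wait: 75.0 tokens
```

The gap between the two numbers is the motivation for the whole problem. Shortest-first ordering needs the lengths up front, and a black-box server does not have them.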

How the method works in plain English

The paper's key assumption is that output token counts can be predicted at submission time. That gives the scheduler a rough estimate of how expensive each request will be before execution starts. Instead of treating every request as equally opaque, the system can use those predictions to shape queue order and resource allocation.

In practical terms, this means the scheduler is no longer flying blind. It can prioritize, group, or sequence requests using an estimate of generation length rather than discovering that length only after the model has already consumed compute. The paper positions this as a way to tame black-box inference rather than fully solve it.

That distinction matters. The method is not about changing the model itself. It is about making the serving layer smarter when model behavior is only partially predictable. For teams operating LLM infrastructure, that is often the layer they can actually modify.
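Below is a minimal sketch of a serving-layer queue that orders requests by predicted output length, under stated assumptions. This is a generic shortest-predicted-job-first queue and not the paper's algorithm. `predict_output_tokens` is a hypothetical stand-in: it uses a toy prompt-length heuristic, whereas a real system would use a learned predictor. `PredictedLengthScheduler` and `next_batch` are illustrative names, not an existing API.

```python
import heapq
import itertools
from dataclasses import dataclass, field


@dataclass(order=True)
class QueuedRequest:
    # Ordering uses predicted_tokens first, then seq; the prompt is excluded.
    predicted_tokens: int
    seq: int
    prompt: str = field(compare=False)


def predict_output_tokens(prompt: str) -> int:
    """Hypothetical predictor (an assumption, not from the paper):
    guesses that output length grows with prompt length."""
    return 4 * len(prompt.split())


class PredictedLengthScheduler:
    """Shortest-predicted-job-first queue; a sketch, not the paper's method."""

    def __init__(self):
        self._heap = []
        self._counter = itertools.count()  # FIFO tie-break for equal predictions

    def submit(self, prompt: str) -> None:
        # Predict once at submission time, before any compute is spent.
        guess = predict_output_tokens(prompt)
        heapq.heappush(self._heap, QueuedRequest(guess, next(self._counter), prompt))

    def next_batch(self, max_batch: int) -> list[str]:
        # Pop up to max_batch requests with the smallest predicted lengths.
        batch = []
        while self._heap and len(batch) < max_batch:
            batch.append(heapq.heappop(self._heap).prompt)
        return batch


sched = PredictedLengthScheduler()
sched.submit("Write a detailed 2,000-word essay on the history of distributed systems and consensus")
sched.submit("Translate 'hello' to French")
sched.submit("Summarize this sentence in five words")
print(sched.next_batch(2))  # the two short prompts are dispatched before the essay
```

The counter-based tie-break keeps arrival order among requests with equal predictions. Pure shortest-first ordering can starve long requests, which is one reason fairness comes up later as an open question.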

What the paper actually shows

The source material available here does not include benchmark tables, numeric results, or a detailed experimental section. So there are no concrete latency, throughput, or cost figures to report from the abstract alone.

What we can say is that the paper is making a systems argument: prediction of output length at submission time is enough to improve scheduling decisions for black-box LLM inference at scale. The contribution is therefore about feasibility and system design, not about a specific model architecture or training recipe.

Because the abstract excerpt is thin, it is also not clear from the provided notes which prediction method is used, how accurate it is, or how robust it remains across different workloads. Those are the details practitioners would want before adopting anything like this in production.

Why developers should care

Inference scheduling is one of the places where small improvements can have outsized effects. If you can avoid letting long generations dominate the queue, you can improve perceived responsiveness for users, reduce head-of-line blocking, and make capacity planning less painful.

There is also a broader operational lesson here. As black-box LLM usage grows, teams increasingly have to optimize around limited observability. Papers like this point toward a future where serving stacks rely more on prediction and less on perfect introspection.

- Useful when request lengths are highly variable.

- Relevant for shared LLM serving systems and API gateways.

- Most helpful when the model is black-box and the scheduler has limited visibility.

- Depends on how well output length can be predicted at submission time.

Limitations and open questions

The biggest limitation in the source material is that it does not give us the actual evaluation story. Without benchmark numbers, it is hard to judge how much improvement the approach delivers or where it breaks down.

Another open question is prediction quality. The whole approach rests on estimating output token counts early enough to matter. If those estimates are noisy, the scheduler can still make poor decisions; it is acting on a better guess than before, but still only a guess.
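A toy sketch can show why the type of error matters, not only its size. It reuses the same one-at-a-time, tokens-as-time assumption as a simple queueing model. All lengths and predictions are hypothetical. Requests are ordered by predicted length, but each still costs its true length.

```python
def mean_wait(durations):
    """Average wait before start when jobs run one at a time in this order."""
    waited, clock = 0, 0
    for d in durations:
        waited += clock
        clock += d
    return waited / len(durations)


def order_by_prediction(true_lengths, predicted_lengths):
    """Sort jobs by predicted length, but return their true lengths (the real cost)."""
    order = sorted(range(len(true_lengths)), key=lambda i: predicted_lengths[i])
    return [true_lengths[i] for i in order]


true_lengths = [2000, 50, 80, 40]  # hypothetical, in arrival order
perfect = [2000, 50, 80, 40]
mild = [1500, 60, 90, 300]         # one short job overestimated
severe = [20, 55, 85, 45]          # the long job badly underestimated

for name, preds in [("perfect", perfect), ("mild error", mild), ("severe error", severe)]:
    print(name, mean_wait(order_by_prediction(true_lengths, preds)))
# perfect 75.0
# mild error 87.5
# severe error 1532.5
```

In this toy setup, overestimating a short job costs little. Predicting a long job as short puts it at the front of the queue and recreates most of the head-of-line blocking.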

There is also a deployment question. Even if the idea works well in a controlled setup, integrating it into a real serving stack means dealing with bursty traffic, mixed workloads, fairness concerns, and the usual tradeoffs between latency and throughput. The abstract does not tell us how far the paper goes on those fronts.
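One common way to address the fairness concern is aging, shown here as a minimal sketch. Aging is a classic scheduling technique swapped in for illustration; the source does not say the paper uses it. The idea is that a request's effective priority improves the longer it waits, so long requests cannot be starved indefinitely. The function names and the `aging_rate` value are hypothetical.

```python
def effective_priority(predicted_tokens: int, waited_s: float, aging_rate: float = 10.0) -> float:
    """Lower value runs sooner. Each second of waiting subtracts aging_rate
    tokens from the request's predicted size (hypothetical tuning)."""
    return predicted_tokens - aging_rate * waited_s


def pick_next(queue, now_s, aging_rate=10.0):
    """queue: list of (request_id, predicted_tokens, arrival_s) tuples.
    Returns the id of the request with the best (lowest) effective priority."""
    return min(queue, key=lambda r: effective_priority(r[1], now_s - r[2], aging_rate))[0]


# A long request that arrived at t=0 competes with a fresh short request.
print(pick_next([("long", 2000, 0.0), ("short", 50, 98.0)], now_s=100.0))   # short
print(pick_next([("long", 2000, 0.0), ("short", 50, 198.0)], now_s=200.0))  # long
```

The aging rate sets the tradeoff. A higher rate bounds how long big requests can wait but gives up more of the latency benefit of shortest-first ordering.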

Still, the paper is pointing at a real systems pain point. For engineers building or operating LLM infrastructure, the message is straightforward: if you can predict even one hard-to-see property of a request, you can make the scheduler much less blind.

That makes this paper worth watching even from the abstract alone. It is not promising magic. It is trying to make an inherently messy serving problem a little more tractable by using whatever signal is available before execution starts.
