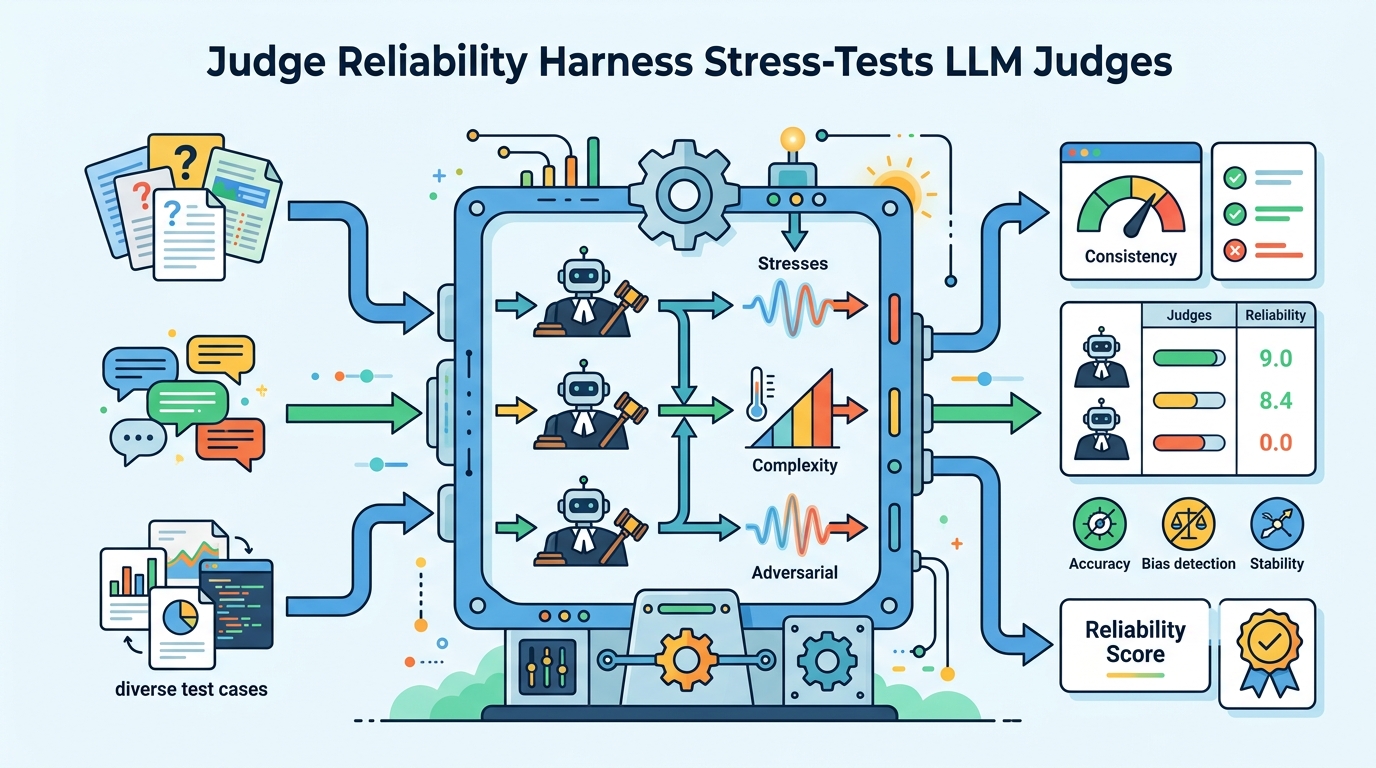

Judge Reliability Harness Stress-Tests LLM Judges

A harness probes how LLM judges change under formatting, paraphrasing, verbosity, and flipped labels.

The paper “Judge Reliability Harness: Stress Testing the Reliability of LLM Judges” addresses a practical problem developers are starting to run into: if you use one model to judge another, how stable is that judgment when the wording changes a little? Its preliminary experiments suggest that the answer can be “less stable than you’d want,” even when the underlying task has not changed.

The core takeaway is simple. LLM judges can show consistency issues when exposed to text formatting changes, paraphrasing, changes in verbosity, and even flipped ground-truth labels in LLM-produced responses. That matters because more teams are using model-as-judge setups for evaluation, ranking, and automated review, and weak judge reliability can quietly distort those workflows.

What problem this paper is trying to fix

Model judges are attractive because they can scale evaluation without requiring humans for every decision. Instead of manually scoring outputs, you ask an LLM to assess whether another model completed a task correctly. In practice, that only works if the judge is dependable across small variations in how the same answer is presented.

This paper focuses on that reliability gap. The authors are not claiming that LLM judges are useless; they are showing that even simple surface-level changes can affect judgment consistency. For engineers, that is a real operational risk, because evaluation pipelines often assume that formatting, paraphrasing, or response length should not change the score if the underlying meaning is the same.

The abstract also points to a tool: the Judge Reliability Harness. Based on the abstract, its purpose is to stress test judges rather than to replace them. That makes it more of a diagnostic layer for evaluation systems than a new benchmark or a new judge model.

How the method works in plain English

The abstract is brief and does not spell out a full experimental protocol. What it does make clear is the basic idea: present judges with variations of LLM-generated responses and see whether the judge’s decision stays consistent.

Those variations include:

- simple text formatting changes

- paraphrasing

- changes in verbosity

- flipping the ground truth label in LLM-produced responses

In other words, the harness appears to be designed to ask a very practical question: if the substance of an answer is the same, does the judge still behave the same way? If the verdict changes just because the text looks different, that suggests the judge may be over-sensitive to presentation details rather than reasoning about task completion. Label flipping is the odd one out, since it changes the substance rather than the surface; there, presumably, the concern is a judge whose verdict fails to move.
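One way to picture that check is a small consistency loop. The sketch below is a minimal illustration in Python, not the harness's actual code: the `Judge` callable, the two perturbation helpers, and the toy `length_biased_judge` are all hypothetical names invented here, and only two meaning-preserving perturbations (formatting and verbosity) are covered.

```python
from typing import Callable, Dict, List

# Hypothetical judge interface (an assumption, not the paper's API):
# takes a response string and returns True for "pass", False for "fail".
Judge = Callable[[str], bool]


def reformat(text: str) -> str:
    """Formatting change: put each sentence on its own bullet line."""
    sentences = [s.strip() for s in text.split(". ") if s.strip()]
    return "\n".join(f"- {s.rstrip('.')}." for s in sentences)


def pad_verbosity(text: str) -> str:
    """Verbosity change: wrap the answer in filler that adds no new claim."""
    return "To answer the question directly: " + text + " That is the complete answer."


def consistency_report(
    judge: Judge,
    responses: List[str],
    perturbations: Dict[str, Callable[[str], str]],
) -> Dict[str, float]:
    """Fraction of responses whose verdict is unchanged under each perturbation."""
    report = {}
    for name, perturb in perturbations.items():
        unchanged = sum(judge(r) == judge(perturb(r)) for r in responses)
        report[name] = unchanged / len(responses)
    return report


# Toy stand-in for an LLM judge, deliberately biased toward long answers.
def length_biased_judge(response: str) -> bool:
    return len(response) > 80


responses = [
    "Paris is the capital of France. It lies on the Seine.",
    "The function returns the sum of its two integer arguments "
    "and raises TypeError for non-numeric input.",
]

report = consistency_report(
    length_biased_judge,
    responses,
    {"formatting": reformat, "verbosity": pad_verbosity},
)
print(report)
```

With this deliberately length-biased toy judge, the formatting score comes out at 1.0 and the verbosity score at 0.5, because padding pushes the short answer over the length threshold. In a real setup the judge would be an LLM call, and paraphrasing would need its own generator.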

That distinction matters. A judge that is easily swayed by formatting or verbosity can introduce noise into any downstream process that depends on its scores, from offline evaluation to automated gating. The paper’s framing suggests the harness is intended to make those failure modes visible.

What the paper actually shows

The concrete result available in the abstract is narrow but important: the authors report preliminary experiments that revealed consistency issues. The metric named in the abstract is accuracy in judging another LLM’s ability to complete a task.
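To make the metric concrete, judge accuracy can be read as the share of verdicts that match a ground-truth label for whether the task was completed. The snippet below is a sketch under that assumption; the function name `judge_accuracy` is hypothetical, and the verdicts and labels are invented purely for illustration, not taken from the paper.

```python
from typing import List


def judge_accuracy(verdicts: List[bool], labels: List[bool]) -> float:
    """Share of judge verdicts that match the ground-truth labels."""
    if len(verdicts) != len(labels):
        raise ValueError("verdicts and labels must have the same length")
    if not labels:
        raise ValueError("need at least one labelled example")
    matches = sum(v == l for v, l in zip(verdicts, labels))
    return matches / len(labels)


# Invented example data: True means "task completed".
labels = [True, False, True, True]
verdicts_original = [True, False, True, False]   # judge on the original responses
verdicts_perturbed = [True, True, False, False]  # same judge on perturbed responses

acc_original = judge_accuracy(verdicts_original, labels)
acc_perturbed = judge_accuracy(verdicts_perturbed, labels)
drop = acc_original - acc_perturbed

print(f"original={acc_original:.2f} perturbed={acc_perturbed:.2f} drop={drop:.2f}")
```

Comparing the two accuracies on the same labelled set is one simple way to express a consistency problem as a number; whether the authors report it this way is not stated in the abstract.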

What the abstract does not provide is just as important. There are no benchmark tables, no numeric accuracy values, no model names, and no detailed comparison against other judge systems in the source text provided here. So while the paper clearly reports that reliability problems exist, this summary cannot claim how large those problems were or whether one judge was better than another.

That means the paper should be read as an early warning and a tooling contribution, not as a full empirical leaderboard. The useful part is the failure mode itself: judgments changed under conditions that many developers would normally consider superficial.

For teams building evaluation pipelines, that is enough to justify caution. If a judge can be nudged by formatting or verbosity, then evaluation results may reflect how a response is presented as much as how good it is. And if ground-truth label flips can expose inconsistency, then the judge may not be reliably tracking the task signal you think it is.

Why developers should care

Anyone using LLM-as-judge systems is effectively turning model outputs into infrastructure. Once that happens, reliability becomes an engineering problem, not just a research one. A judge that drifts with presentation details can create false confidence, unstable rankings, or noisy regression tests.

That is especially relevant in workflows where model outputs are automatically compared, filtered, or promoted based on judge scores. If the judge is sensitive to superficial changes, then small prompt edits or formatting tweaks can change evaluation outcomes even when the underlying answer quality has not changed.

For practitioners, the likely lesson is to test judges the same way you test other production dependencies: with perturbations, adversarial cases, and repeatability checks. The Judge Reliability Harness appears to be aimed at exactly that kind of stress testing.
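A repeatability check is the simplest of those to sketch: score the same response several times and see how often the verdicts agree. The code below is an illustrative assumption rather than anything described in the abstract; `repeatability`, `stable_judge`, and `flaky_judge` are hypothetical names, and the flaky judge cycles through fixed verdicts as a deterministic stand-in for sampling noise.

```python
from collections import Counter
from itertools import cycle
from typing import Callable


def repeatability(judge: Callable[[str], bool], response: str, runs: int = 5) -> float:
    """Share of runs that agree with the most common verdict (1.0 = fully repeatable)."""
    if runs <= 0:
        raise ValueError("runs must be positive")
    verdicts = [judge(response) for _ in range(runs)]
    most_common_count = Counter(verdicts).most_common(1)[0][1]
    return most_common_count / runs


# Hypothetical deterministic judge: always gives the same verdict for the same text.
def stable_judge(response: str) -> bool:
    return "42" in response


# Hypothetical unstable judge: verdicts repeat in a True, True, False pattern,
# imitating a model that disagrees with itself on about a third of calls.
_flaky_verdicts = cycle([True, True, False])


def flaky_judge(response: str) -> bool:
    return next(_flaky_verdicts)


stable_score = repeatability(stable_judge, "The answer is 42.", runs=6)
flaky_score = repeatability(flaky_judge, "The answer is 42.", runs=6)
print(stable_score, round(flaky_score, 2))
```

A score well below 1.0 on unchanged input is a warning sign before any perturbation is applied, which is why it makes sense to run this kind of check first.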

Limitations and open questions

The source material is thin, so there are several things it does not establish. We do not know the size of the experiments, the specific tasks used, the judge models tested, or whether the harness covers more than the failure modes named in the abstract.

We also do not know from the provided text whether the tool is meant for end users, research teams, or both. The abstract mentions that the code is available, but the source here does not include a usable link or any implementation details beyond that.

There is also a bigger open question the abstract leaves unanswered: what makes a judge robust enough for real deployment? The paper highlights inconsistency, but the source does not yet tell us what mitigation strategies work best, how to measure acceptable reliability, or how much variation is tolerable before a judge becomes untrustworthy.

Still, the practical value is clear. If you rely on LLM judges, you need to know whether they are scoring the task or reacting to the wrapper around the task. This paper’s harness is a reminder to check that before you build on top of the results.
