Tag

LLM architecture

2 articles

Research/May 8

UniPool shares MoE experts across layers

UniPool replaces per-layer MoE experts with a single pool shared across layers, cutting redundancy and lowering validation loss across five LLaMA-scale models.

Research/Apr 2

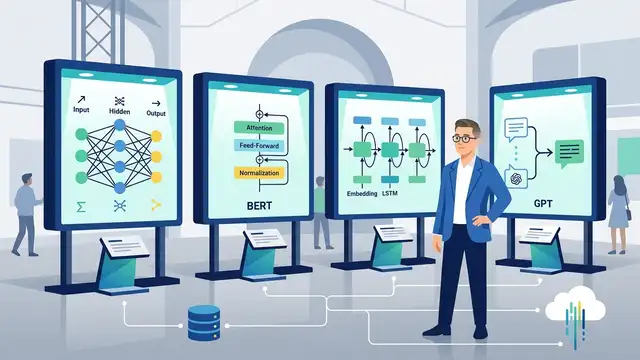

Sebastian Raschka’s LLM Architecture Gallery

Raschka’s gallery compares GPT-2, Llama 3, OLMo 2, DeepSeek, and Qwen stacks with exact layer, cache, and attention data.