Act Wisely: Teaching Agents When Not to Call Tools

A new training scheme, HDPO, aims to cut blind tool use in multimodal agents by separating accuracy from tool efficiency.

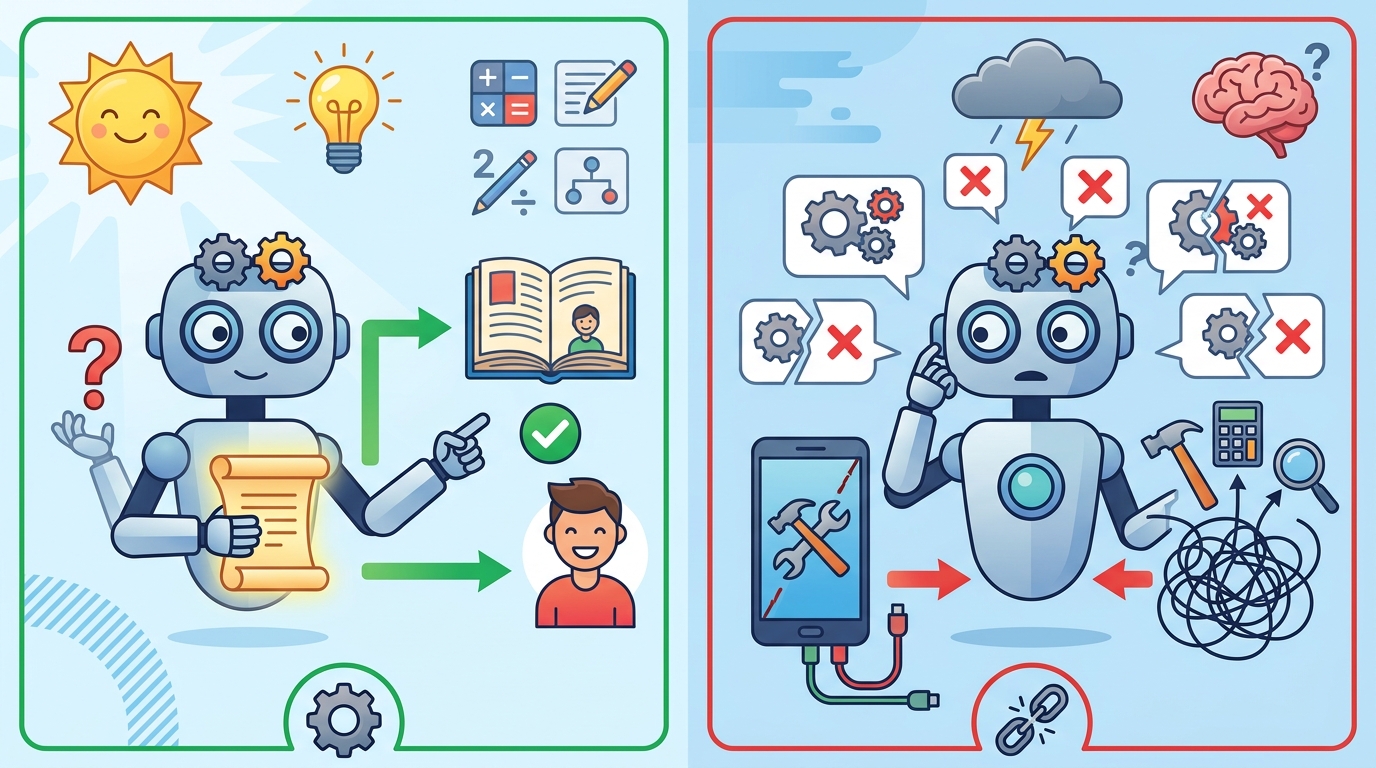

Act Wisely: Cultivating Meta-Cognitive Tool Use in Agentic Multimodal Models tackles a problem that shows up fast in real agent systems: models often reach for tools even when the answer is already visible in the input. That habit wastes time, adds noise, and can push reasoning off course.

The paper’s main idea is simple to state but tricky to get right in training: instead of treating tool usage as just another penalty folded into a single reward score, separate correctness from efficiency. The authors propose HDPO, a framework designed to teach agents to solve tasks first and only then learn when tool calls are actually worth it.

What problem this paper is trying to fix


Agentic multimodal models can interact with external environments, which makes them useful for tasks that go beyond plain text generation. But the paper argues that these systems have a meta-cognitive deficit: they are weak at deciding whether to rely on internal knowledge or query an external utility.

In practice, that shows up as blind tool invocation. The model calls a tool reflexively, even when the raw visual context already contains enough information to answer the question. According to the paper, this creates two concrete issues: latency bottlenecks and extra noise that can derail reasoning.

This matters to developers because tool use is not free. Every unnecessary call adds orchestration overhead, can introduce failure points, and makes the system harder to reason about. In multimodal agents especially, overusing tools can turn a direct perception problem into a slower, less stable workflow.

Why standard reward shaping falls short

The paper says existing reinforcement learning approaches try to reduce tool overuse with a scalar reward that penalizes tool usage. That sounds reasonable, but the authors argue it creates a tradeoff that is hard to optimize away.

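If the penalty is too strong, the model starts avoiding tools even when they are genuinely needed. If the penalty is too weak, it gets washed out by the variance of the accuracy reward during advantage normalization: the spread of rewards across rollouts is dominated by the gap between correct and incorrect answers, so a small per-call deduction barely shifts the normalized advantages. In the paper’s framing, that makes the tool penalty effectively powerless against overuse.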
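To make both failure modes concrete, here is a minimal Python sketch. It assumes a GRPO-style setup in which several rollouts for the same prompt are scored and each reward is normalized by the group's mean and standard deviation, plus a blended reward of the hypothetical form "accuracy minus `lam` times the number of tool calls". The paper's actual reward and normalization may differ; the group, the penalty weights, and the helper names `group_advantages` and `blended_reward` are illustrative assumptions.

```python
import statistics


def group_advantages(rewards):
    """GRPO-style normalization (assumed): (r - mean) / std over one group of rollouts."""
    mean = statistics.fmean(rewards)
    std = statistics.pstdev(rewards)
    if std == 0:
        return [0.0 for _ in rewards]
    return [(r - mean) / std for r in rewards]


def blended_reward(correct, tool_calls, lam):
    """Hypothetical single scalar: 1/0 accuracy minus a per-call tool penalty."""
    return (1.0 if correct else 0.0) - lam * tool_calls


# One group of rollouts for the same prompt: (answered correctly?, tool calls made).
group = [(True, 0), (True, 4), (False, 0), (False, 4)]

# Weak penalty: the efficiency difference barely moves the normalized advantages.
weak = group_advantages([blended_reward(c, n, lam=0.01) for c, n in group])
efficiency_gap = weak[0] - weak[1]  # correct and tool-free vs correct and tool-heavy
accuracy_gap = weak[0] - weak[2]    # correct vs wrong, both tool-free

# Strong penalty: on a task that genuinely needs tools, a wrong tool-free
# answer can outscore a correct answer that made three calls.
needs_tools = [(True, 3), (False, 0)]
strong = [blended_reward(c, n, lam=0.5) for c, n in needs_tools]

print(f"efficiency gap {efficiency_gap:.3f}, accuracy gap {accuracy_gap:.3f}")
print(f"strong-penalty rewards: correct+3 calls {strong[0]}, wrong+0 calls {strong[1]}")
```

With the weak penalty, the gap between a correct tool-free rollout and a correct tool-heavy one is roughly 0.08 in advantage units, against roughly 2.0 between correct and wrong rollouts, so the efficiency signal is a sliver of the accuracy signal. With the strong penalty, a wrong answer that skipped tools (reward 0.0) outscores a correct one that made three calls (reward -0.5), which is the over-suppression the authors warn about.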

For engineers, this is a familiar systems problem: when two objectives are crammed into one score, one of them can dominate training in ways that are hard to predict. The result is a policy that may look optimized on paper but still behaves badly in deployment.

How HDPO works in plain English

HDPO reframes tool efficiency as a conditional objective rather than a competing scalar one. Instead of asking the model to balance accuracy and tool thriftiness in a single blended reward, it keeps them on separate optimization channels.

The first channel is the accuracy channel, which focuses on task correctness. The second is the efficiency channel, which only applies within trajectories that are already accurate. The paper describes this as conditional advantage estimation, meaning the model is only pushed toward more economical execution after it has already demonstrated it can solve the task correctly.
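The conditional advantage idea can be sketched in Python as follows. This is a hypothetical illustration, not the authors' implementation: it assumes group-normalized advantages, treats fewer tool calls as better, and computes the efficiency advantage only over the correct rollouts in a group, leaving it at zero for incorrect ones. How HDPO actually weights or combines the two channels is not described in this summary, so the sketch returns them separately; `normalize` and `decoupled_advantages` are illustrative names.

```python
import statistics


def normalize(values):
    """Zero-mean, unit-variance normalization; zeros when there is nothing to compare."""
    if len(values) < 2:
        return [0.0] * len(values)
    mean = statistics.fmean(values)
    std = statistics.pstdev(values)
    if std == 0:
        return [0.0] * len(values)
    return [(v - mean) / std for v in values]


def decoupled_advantages(correct, tool_calls):
    """Return (accuracy_adv, efficiency_adv) for each rollout in one group.

    Assumed scheme: accuracy is normalized over the whole group, while
    efficiency (negative tool-call count) is normalized only among the
    correct rollouts and stays 0.0 for every incorrect rollout.
    """
    acc = normalize([1.0 if c else 0.0 for c in correct])
    correct_idx = [i for i, c in enumerate(correct) if c]
    eff_correct = normalize([-float(tool_calls[i]) for i in correct_idx])
    eff = [0.0] * len(correct)
    for i, adv in zip(correct_idx, eff_correct):
        eff[i] = adv
    return acc, eff


# Same group shape as a blended-reward example: two correct, two wrong rollouts.
acc_adv, eff_adv = decoupled_advantages([True, True, False, False], [0, 4, 0, 4])

# A group the model cannot solve yet: no efficiency pressure at all.
no_solve = decoupled_advantages([False, False, False], [3, 0, 1])

print("accuracy:", acc_adv)
print("efficiency:", eff_adv)
print("unsolved group:", no_solve)
```

Here the tool-free correct answer gets a full +1 on the efficiency channel and the tool-heavy correct answer gets -1, instead of a small fraction of the accuracy signal, while wrong answers are judged on accuracy alone. When no rollout in a group is correct, the efficiency channel is all zeros, so nothing pushes toward fewer calls until the model can solve the task.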

That decoupling is the core contribution. It avoids forcing the model to choose between “be correct” and “use fewer tools” as if those were always in direct conflict. Instead, the training process says: first learn to solve the task, then learn to solve it with less external help.

The authors also describe this as creating a cognitive curriculum. In other words, the agent is guided to master task resolution before refining self-reliance. That sequencing is important because it matches how many developers would want to train a practical agent anyway: competence first, efficiency second.

What the paper actually shows

The paper says extensive evaluations show that the resulting model, Metis, reduces tool invocations by orders of magnitude while simultaneously improving reasoning accuracy. That is the headline result, and it is strong enough to be interesting for anyone building multimodal agents or tool-using assistants.

What the abstract does not provide is the benchmark list, dataset names, or exact numeric scores. It also does not spell out the task mix in detail. So while the direction of the result is clear, the source material here does not give enough information to compare Metis against specific baselines or reproduce the magnitude of the gains from the abstract alone.

Even with that limitation, the claim is notable because it cuts against the usual tradeoff. The paper is not saying “we saved tool calls at the cost of quality.” It says the model improved on both fronts: fewer invocations and higher reasoning accuracy.

- Problem: agents overuse tools even when the answer is already in the input.

- Consequence: extra latency, extra noise, and weaker reasoning.

- Proposed fix: HDPO, which separates accuracy optimization from efficiency optimization.

- Reported outcome: Metis uses tools far less and reasons more accurately.

Why developers should care

If you are building multimodal agents, this paper points at a practical failure mode that is easy to miss in demos and expensive in production. A model that calls tools too often can look capable while quietly inflating response time and operational complexity.

HDPO’s design suggests a useful training principle: do not treat tool usage as a generic penalty attached to the main reward. If the system is already struggling to decide when external help is needed, a blended scalar objective may not be enough to teach that judgment cleanly.

For product teams, the appeal is obvious. Better tool discipline can mean lower latency, fewer unnecessary API calls, less orchestration overhead, and more stable reasoning paths. For ML engineers, the interesting part is the training structure: conditional optimization may be a better fit than a single reward knob when the behavior you want is context-dependent.

There are also open questions. The abstract does not tell us how broadly the method transfers across tasks, how sensitive it is to the choice of tools, or whether the same approach works outside multimodal settings. It also does not show the full evaluation setup, so it is hard to know where the gains come from most strongly.

Still, the paper’s message is clear: tool use should be learned as a judgment problem, not just a cost problem. That is a useful lens for anyone designing agents that need to know when to act on their own and when to reach outside themselves for help.

Bottom line

Act Wisely argues that the real challenge in agentic multimodal systems is not just making them smarter, but making them more selective. HDPO tries to teach that selectivity by separating correctness from efficiency, and the reported result is a model that uses tools far less while reasoning better.

For developers, that makes this paper worth watching: it is less about a new tool and more about a better way to train agents not to overuse tools in the first place.
