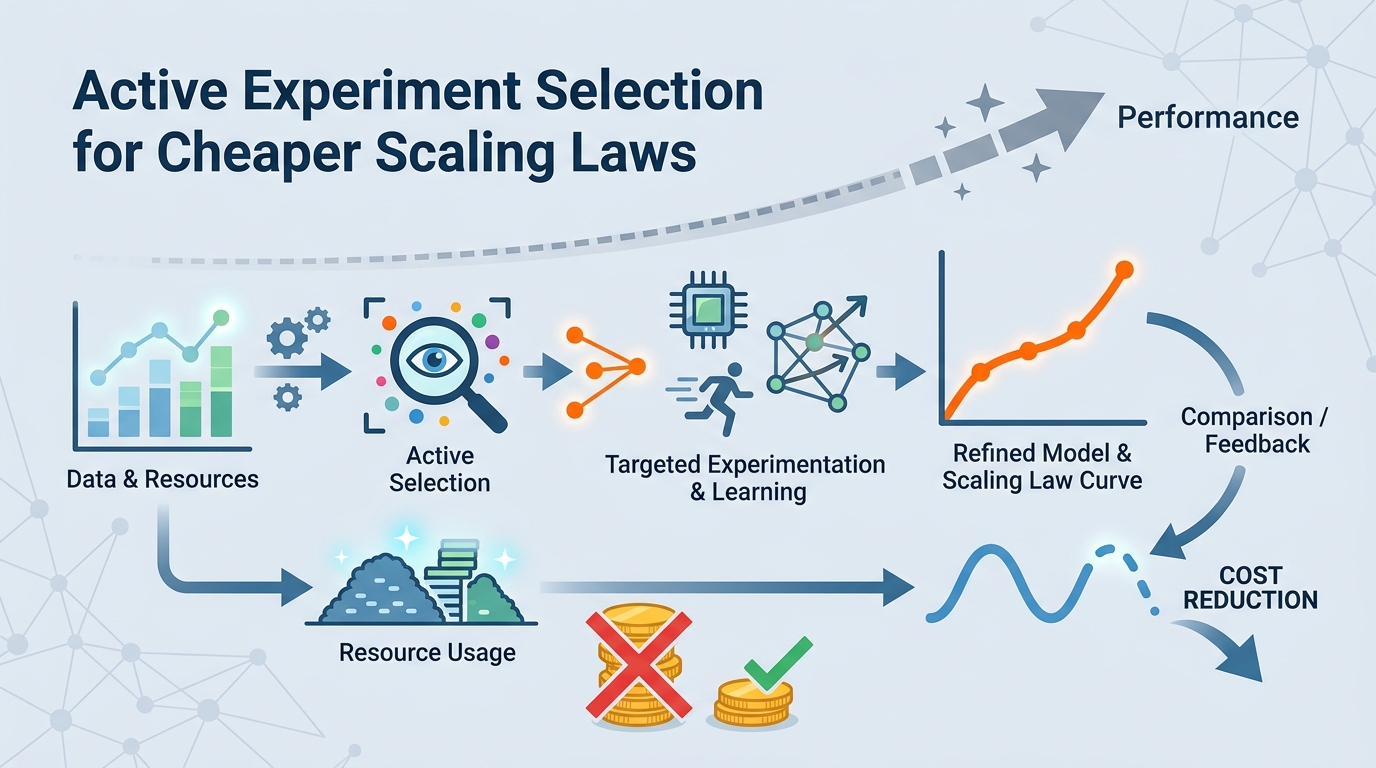

Active Experiment Selection for Cheaper Scaling Laws

A budget-aware method picks the most useful pilot runs for scaling-law fitting, often approaching the accuracy of full-data fits with about 10% of the training budget.

Scaling laws help teams estimate how model performance will change as they spend more compute, data, or training time. The catch is that fitting those laws can be expensive too, because you need pilot experiments that are informative enough to support reliable extrapolation. This paper asks a practical question: if experiment runs have different costs, how do you spend a limited budget on the runs that matter most? The authors answer with a sequential, uncertainty-aware selection method for budget-efficient scaling-law fitting.

For engineers, the appeal is straightforward. If you are planning large training runs, you usually do not want to waste budget on pilot experiments that barely improve the final fit. This work treats pilot selection itself as an optimization problem, rather than a fixed preprocessing step. The paper’s core claim is that you can often get close to the accuracy of fitting on the full experimental set while using only about 10% of the total training budget.

What problem this paper is trying to fix

Scaling laws are often used to make expensive decisions before the expensive runs happen. They help teams estimate where performance is headed and how much more compute might be worth spending. But in modern workflows, gathering the data needed to fit those laws is not cheap. The paper points out that assembling a sufficiently informative set of pilot experiments can already become a major budget-allocation problem.
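To make "fitting a scaling law" concrete, here is a minimal sketch of the basic workflow: fit a curve to cheap pilot runs, then extrapolate to a much larger budget. It assumes a pure power law, loss ≈ a · compute^(-b), fitted by least squares in log-log space. That functional form, the function names, and the synthetic numbers are illustrative assumptions, not details taken from the paper.

```python
import math

def fit_power_law(compute, loss):
    """Fit loss ≈ a * compute**(-b) by ordinary least squares in log-log space."""
    xs = [math.log(c) for c in compute]
    ys = [math.log(l) for l in loss]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return math.exp(intercept), -slope  # (a, b)

def predict(a, b, compute):
    """Predicted loss at a given compute budget under the fitted power law."""
    return a * compute ** (-b)

# Synthetic, noise-free pilot runs generated from a known law (a=10, b=0.3).
# Real pilot data would be noisy and might need a different functional form.
pilot_compute = [1e15, 1e16, 1e17, 1e18]
pilot_loss = [10.0 * c ** (-0.3) for c in pilot_compute]

a_hat, b_hat = fit_power_law(pilot_compute, pilot_loss)
target_prediction = predict(a_hat, b_hat, 1e21)  # extrapolate well past the pilots
```

Every pilot point in `pilot_compute` is a training run someone has to pay for, which is why the choice of points becomes a budgeting question.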

That matters because not every experiment costs the same. Some runs are cheap, others are expensive, and some are much more valuable than others for learning about the target region you actually care about. If you choose the wrong experiments, you can burn budget without improving extrapolation accuracy in the region where your final training decisions will be made.

The paper frames this as a sequential experimental design problem with a finite pool of runnable experiments and heterogeneous costs. In plain terms: given a limited budget, which experiments should you run next so the scaling law you fit is most useful for predicting the high-cost target region?

How the method works in plain English

The proposed method is uncertainty-aware and sequential. That means it does not pick a one-shot subset of runs upfront and stop there. Instead, it allocates budget step by step, using uncertainty to decide which candidate runs are likely to reduce extrapolation error the most.

The key idea is to prioritize experiments that are most informative for the target region, not just experiments that look generally useful. That distinction matters because scaling-law fitting is usually about extrapolation. You are not just trying to describe the runs you already have; you are trying to predict performance where you have not yet trained.

In practice, the method works on a finite pool of possible experiments with different costs. At each step, it chooses runs that best improve the fit for the target region under the remaining budget. The paper describes this as budget-aware sequential experimental design, which is a useful way to think about it if you are used to active learning or adaptive sampling.
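The loop described above can be sketched as follows. The abstract does not specify the paper's uncertainty model or selection rule, so this example swaps in a simple stand-in: a straight-line fit in log-log space, with a greedy choice of the affordable run that most reduces predictive variance at the target per unit cost. The pool, the costs, and the names `target_variance` and `select_runs` are all hypothetical.

```python
def target_variance(xs, x_target):
    """Relative predictive variance at x_target for a line fitted to inputs xs.

    For y = w0 + w1 * x with unit noise this equals v^T (X^T X)^{-1} v,
    where v = [1, x_target]. Assumed stand-in, not the paper's uncertainty model.
    """
    n = len(xs)
    sx = sum(xs)
    sxx = sum(x * x for x in xs)
    det = n * sxx - sx * sx
    if det <= 1e-12:
        return float("inf")  # fewer than two distinct inputs: the line is undetermined
    return (sxx - 2 * sx * x_target + n * x_target ** 2) / det

def select_runs(pool, budget, x_target, seed):
    """Greedily pick runs by variance reduction at the target per unit cost.

    pool maps name -> (log10 compute, cost); seed lists runs already executed.
    """
    selected = list(seed)
    spent = sum(pool[name][1] for name in selected)
    remaining = {k: v for k, v in pool.items() if k not in selected}
    while True:
        xs = [pool[name][0] for name in selected]
        current = target_variance(xs, x_target)
        best_name, best_score = None, 0.0
        for name, (x, cost) in remaining.items():
            if spent + cost > budget:
                continue  # this run no longer fits in the remaining budget
            gain = current - target_variance(xs + [x], x_target)
            score = gain / cost
            if score > best_score:
                best_name, best_score = name, score
        if best_name is None:
            break  # nothing affordable improves the target prediction
        selected.append(best_name)
        spent += pool[best_name][1]
        del remaining[best_name]
        # A real workflow would execute the run here, refit, and update uncertainty.
    return selected, spent

# Hypothetical pool: cost grows 10x with each step in log10 compute.
pool = {
    "s1": (15.0, 1.0),
    "s2": (16.0, 10.0),
    "m1": (17.0, 100.0),
    "m2": (18.0, 1000.0),
    "l1": (19.0, 10000.0),
}
selected, spent = select_runs(pool, budget=1200.0, x_target=21.0, seed=["s1", "s2"])
```

In this simplified linear model the variance depends only on which inputs are chosen, not on the observed losses, so the loop is sequential in form but not truly adaptive. A method that refits after every run, as the paper describes, would let each new observation change what gets picked next.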

There is an important implementation takeaway here: the method is not trying to maximize raw data volume. It is trying to maximize the value of each dollar spent on pilot runs. That makes it a better fit for settings where compute is the bottleneck and where experiment costs are uneven.

What the paper actually shows

The paper evaluates the method across a diverse benchmark of scaling-law tasks. The abstract does not list the individual benchmark names, task details, or per-task numbers, so those specifics are not available from the source text here. What it does say is that the method consistently outperforms classical design-based baselines.

That is the main empirical result: compared with standard design-oriented approaches, the proposed selection strategy gives better extrapolation accuracy under budget constraints. The paper also reports that it often approaches the performance of fitting on the full experimental set while using only about 10% of the total training budget.

That last point is the most practical headline. If it holds in your setting, it suggests you may not need to run the full set of pilot experiments to get a useful scaling-law fit. Instead, a carefully chosen subset can deliver much of the same value at a fraction of the cost.
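One way to check whether the claim holds in your own setting is to compare a subset fit against a full-pool fit on runs you have already paid for. The sketch below does that for a toy pool: it reports the share of total cost the subset uses and the relative gap between the two extrapolated predictions. All numbers are made up for illustration, the power-law form is an assumption, and none of this reproduces the paper's evaluation protocol.

```python
import math

def fit_power_law(runs):
    """Fit loss ≈ a * compute**(-b) in log-log space; runs is a list of (compute, loss)."""
    xs = [math.log(c) for c, _ in runs]
    ys = [math.log(l) for _, l in runs]
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return math.exp(my - slope * mx), -slope  # (a, b)

def budget_fraction(pool, subset):
    """Share of the full pool's cost that the chosen subset consumes."""
    total = sum(cost for _, cost, _ in pool.values())
    return sum(pool[name][1] for name in subset) / total

def prediction_gap(pool, subset, target_compute):
    """Relative difference between subset-fit and full-fit predictions at the target."""
    a_full, b_full = fit_power_law([(c, l) for c, _, l in pool.values()])
    a_sub, b_sub = fit_power_law([(pool[n][0], pool[n][2]) for n in subset])
    full_pred = a_full * target_compute ** (-b_full)
    sub_pred = a_sub * target_compute ** (-b_sub)
    return abs(sub_pred - full_pred) / full_pred

# Hypothetical pool: name -> (compute in FLOPs, cost in GPU-hours, observed loss).
pool = {
    "r1": (1e15, 1.0, 4.10),
    "r2": (1e16, 10.0, 3.52),
    "r3": (1e17, 100.0, 3.01),
    "r4": (1e18, 1000.0, 2.60),
    "r5": (1e19, 10000.0, 2.22),
}
subset = ["r1", "r2", "r3", "r4"]

fraction = budget_fraction(pool, subset)       # 1111 / 11111, roughly 10% of total cost
gap = prediction_gap(pool, subset, 1e21)       # how far the cheap fit drifts from the full fit
```

In this toy pool, dropping the single most expensive run already brings the spend down to roughly 10%, because cost is dominated by the largest run. Real pools are less tidy, which is where a principled selection rule earns its keep.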

The abstract does not provide benchmark numbers beyond that budget figure, so there are no detailed accuracy metrics, confidence intervals, or task-by-task comparisons to quote here. For a research note like this, that means the best honest reading is: promising evidence across multiple tasks, but the precise magnitude of improvement is not available from the abstract alone.

Why developers and ML teams should care

If you work on model training, capacity planning, or evaluation strategy, this paper speaks directly to a common pain point: the cost of uncertainty. Before you commit to a large run, you need some way to estimate whether the next jump in compute will pay off. Scaling laws are one of the main tools for that, but the data needed to fit them can itself become expensive.

This work suggests a more disciplined way to spend pilot budget. Instead of treating every candidate run as equally useful, you can actively choose the runs that improve extrapolation where it matters most. That is especially relevant when experiment costs vary widely, because cost-aware selection can prevent you from overspending on low-value runs.

There is also a broader workflow lesson here. As training pipelines get larger and more expensive, “data collection” for planning purposes starts to look like a first-class optimization problem. This paper makes that explicit and provides a method for handling it with sequential selection rather than static sampling.

- Useful when pilot runs have different costs.

- Useful when you care about extrapolation into a specific target region.

- Useful when full fitting is too expensive to justify.

- Less informative if you need full benchmark details, since the abstract does not provide them.

Limitations and open questions

The biggest limitation in the source material is also the most obvious one: the abstract is high level. It does not spell out the exact uncertainty model, the selection rule, the benchmark composition, or the concrete metrics used to measure extrapolation accuracy. So while the method sounds practical, the implementation details matter and are not visible here.

Another open question is generality. The paper says it evaluates a diverse benchmark of scaling-law tasks, but the abstract does not tell us how broad that diversity is or where the method might struggle. In real systems, the usefulness of active selection can depend heavily on the cost structure, the shape of the scaling curve, and how well the uncertainty estimates match reality.

There is also a practical tradeoff to keep in mind. Sequential design adds decision-making overhead. Even if it saves training budget, teams still need a workflow that can support iterative selection, execution, and refitting. That overhead may be worth it for expensive campaigns, but less so for smaller projects.

Still, the direction is compelling. If you are already using scaling laws to guide expensive training decisions, this paper argues that the fitting process itself should be budget-aware. That is a useful shift in mindset: do not just ask how to fit a scaling law well, ask how to fit it well enough for the least possible spend.

The paper is titled “Spend Less, Fit Better: Budget-Efficient Scaling Law Fitting via Active Experiment Selection.” The authors note that code is available at the project repository. For teams planning large-scale training, the main takeaway is simple: active experiment selection may let you buy most of the value of scaling-law fitting without paying for the full experimental set.
