Why AI reading assistants need guardrails

A minimal prototype tests whether LLM reading assistants stay honest when users push them beyond retrieval into interpretation.

Large language model reading assistants are moving into tasks where “just answer the question” is not enough. When people use them for interpretation, the bigger risk is not a missed fact but a confident statement that the model cannot actually support.

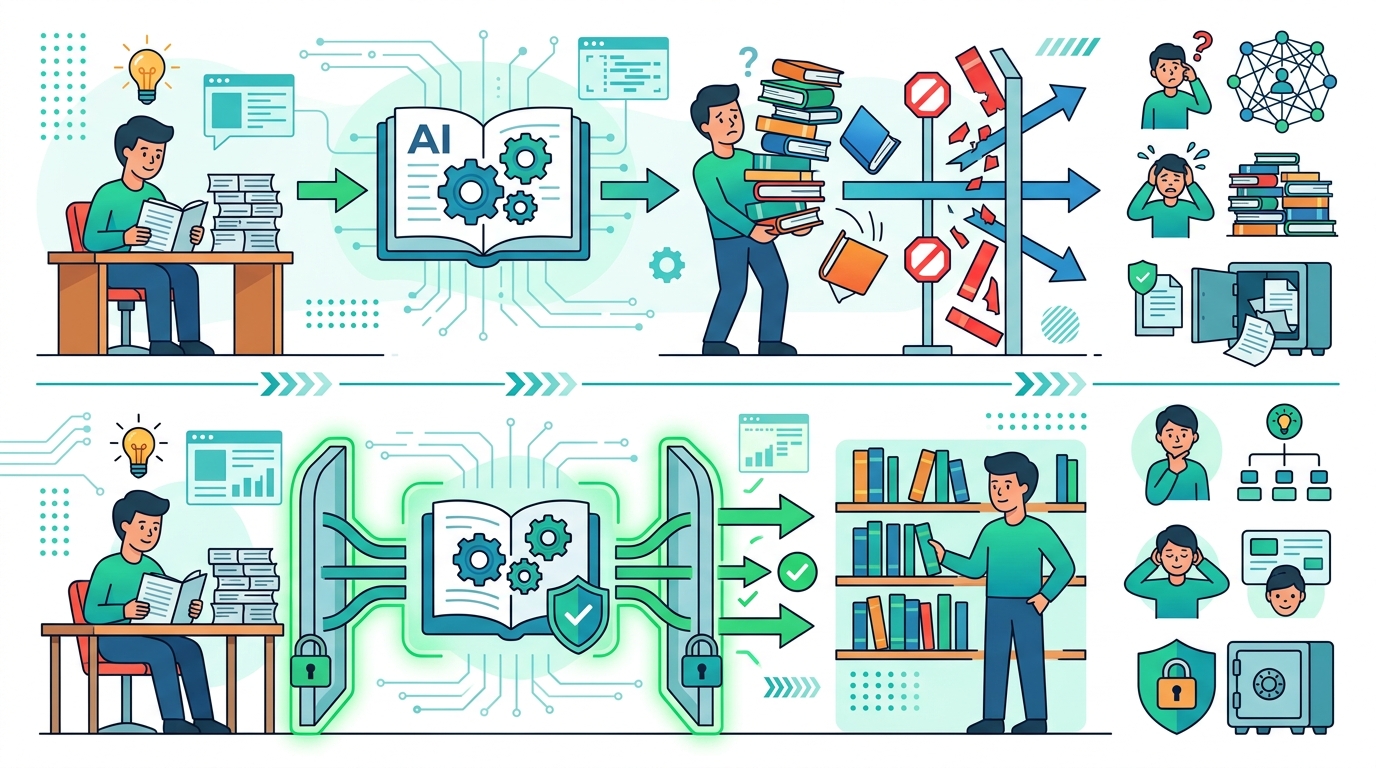

This paper, “Evaluating Epistemic Guardrails in AI Reading Assistants: A Behavioral Audit of a Minimal Prototype,” looks at that problem directly. The core question is simple: what happens when a reading assistant is built to be careful about what it knows, what it infers, and what it should refuse to overstate?

What problem this paper is trying to fix

Reading assistants are often treated like smarter search tools, but the paper frames a more demanding use case: interpretation rather than simple retrieval. That matters because interpretation invites synthesis, uncertainty, and judgment calls, all of which create room for hallucination or overconfident framing.

For developers, this is the gap between a system that can point to text and a system that can explain what the text means. The second version is much more useful, but it is also much easier to get wrong in ways that are hard to notice during casual testing.

The paper focuses on “epistemic guardrails,” meaning the behavioral constraints that keep an assistant aligned with what it can actually justify from the source material. In plain terms: can the model separate direct evidence from inference, and can it avoid presenting speculation as fact?

How the method works in plain English

The source describes this as a behavioral audit of a minimal prototype. That tells us two important things. First, the authors are not claiming a giant product system or a fully finished assistant. Second, they are trying to observe behavior, not just measure static output quality.

A minimal prototype is useful here because it strips the problem down to the core interaction: a user asks for help reading or interpreting text, and the assistant has to decide how far it can go. That makes the guardrail question visible. If the assistant is careful, it should show restraint when evidence is thin. If it is not, the failure mode becomes obvious.

The abstract available in the source does not provide implementation details, dataset size, benchmark setup, or evaluation metrics. So while we can say the paper audits behavior, we cannot responsibly claim how many examples were tested, what tasks were used, or how performance was scored.

What the paper actually shows

From the abstract snippet in the source, the strongest concrete claim is the paper’s scope: it examines LLM reading assistants in contexts that require interpretation, and it does so through a behavioral audit of a minimal prototype. That already signals a shift away from pure accuracy metrics and toward examining model conduct under ambiguity.

What is not present in the source matters just as much. There are no benchmark numbers in the abstract, no reported percentage improvements, and no comparison table to quote. If you were hoping for a neat scorecard, the provided material does not include one.

That does not make the work unimportant. In fact, papers like this are often most useful when they identify the kinds of failures that standard benchmarks miss. A reading assistant can look strong on retrieval-style questions and still be unreliable when asked to interpret, qualify, or synthesize.

Because the abstract is short, the safest reading is that the paper is evaluating whether epistemic guardrails can change assistant behavior in a meaningful way. But the source does not tell us the outcome in detail, so we should not overstate the results.

Why developers should care

If you are building AI features for documents, research, support, compliance, education, or internal knowledge work, this paper points to a practical design issue: your assistant should not just answer; it should also signal where its confidence ends. That is especially important when users may treat the output as a summary of truth rather than a hypothesis.

Epistemic guardrails can shape product behavior in a few ways:

- They can force the assistant to distinguish direct evidence from inference.

- They can reduce the chance of confident but unsupported interpretation.

- They can make uncertainty visible instead of hiding it in fluent prose.

- They can help teams audit whether the assistant is behaving safely in real reading workflows.

That said, guardrails are not a free win. A system that refuses too often can become frustrating or useless. A system that allows too much inference can sound helpful while quietly drifting away from the source. The real engineering challenge is balancing usefulness and epistemic discipline.
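To make the first and third behaviors in the list above concrete, here is a minimal sketch of one way a product team might tag claims. It is not the paper's method; the abstract does not describe any implementation. The `Claim` type, the `audit_claims` and `render` functions, and the rule "a claim counts as evidence only if its quote appears verbatim in the source" are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Claim:
    """Hypothetical unit of assistant output (not from the paper)."""
    text: str
    kind: str                    # "evidence" or "inference", as declared by the assistant
    quote: Optional[str] = None  # span the assistant says supports an evidence claim


def normalize(s: str) -> str:
    # Lowercase and collapse whitespace so formatting differences do not matter.
    return " ".join(s.lower().split())


def audit_claims(source: str, claims: List[Claim]) -> List[Claim]:
    """Downgrade any 'evidence' claim whose quote is not found in the source.

    Assumption: verbatim quote matching is a crude stand-in for "justified
    by the text". It checks that the quote exists, not that it supports the claim.
    """
    src = normalize(source)
    audited = []
    for c in claims:
        q = normalize(c.quote) if c.quote else ""
        supported = c.kind == "evidence" and bool(q) and q in src
        audited.append(
            Claim(c.text, "evidence" if supported else "inference",
                  c.quote if supported else None)
        )
    return audited


def render(claims: List[Claim]) -> str:
    """Make the evidence/inference split visible instead of hiding it in prose."""
    lines = []
    for c in claims:
        if c.kind == "evidence":
            lines.append(f'[from the text] {c.text} ("{c.quote}")')
        else:
            lines.append(f"[interpretation] {c.text}")
    return "\n".join(lines)
```

Given a source that says a committee met on Tuesday, a claim quoting "committee met on Tuesday" keeps its evidence label, while a claim quoting text that never appears is relabeled as interpretation. A real system would need a stronger support check than substring matching, but even this crude version makes unsupported "evidence" visible to an auditor.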

Limitations and open questions

The biggest limitation in the source material is that the abstract does not include concrete experimental details. We do not know the prototype architecture, the evaluation protocol, the exact guardrails used, or whether the study compared multiple assistant behaviors side by side.

We also do not know how general the findings are. A minimal prototype is a good test bed, but it is not the same as a production system with long-context retrieval, tool use, user memory, or domain-specific constraints. Results from a small behavioral audit may not transfer cleanly to real deployments.

That leaves several useful open questions for practitioners: Which guardrails are most effective? How do you measure whether an assistant is being appropriately cautious rather than merely evasive? And how do you preserve helpful interpretation without letting the model present unsupported conclusions as if they were grounded in the text?
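One hedged way to approach the "cautious versus evasive" question is to track two error rates separately rather than a single score. The sketch below is an assumption for illustration, not something the paper reports: `AuditCase`, `caution_rates`, and the human-assigned `answerable` label are all hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class AuditCase:
    """Hypothetical audit record (not from the paper)."""
    answerable: bool  # human label: can the source text support an answer?
    refused: bool     # did the assistant decline or defer instead of answering?


def caution_rates(cases: List[AuditCase]) -> Dict[str, float]:
    """Return two rates that pull in opposite directions.

    over_refusal_rate: share of answerable cases the assistant refused (evasive).
    overreach_rate:    share of unanswerable cases it answered anyway (overconfident).
    """
    answerable = [c for c in cases if c.answerable]
    unanswerable = [c for c in cases if not c.answerable]
    over_refusal = (
        sum(c.refused for c in answerable) / len(answerable) if answerable else 0.0
    )
    overreach = (
        sum(not c.refused for c in unanswerable) / len(unanswerable)
        if unanswerable else 0.0
    )
    return {"over_refusal_rate": over_refusal, "overreach_rate": overreach}
```

Reporting both numbers keeps a team from "fixing" overreach by making the assistant refuse everything, since that would show up as a rising over-refusal rate. The labels themselves still require human judgment about what the source can support.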

Even with sparse details, the paper is pointing at a real product problem. As AI reading assistants move from lookup tools to interpretation tools, the bar changes. The system has to know not only what it can say, but how sure it should sound when it says it.
