Fast Spatial Memory for Long 4D Sequences

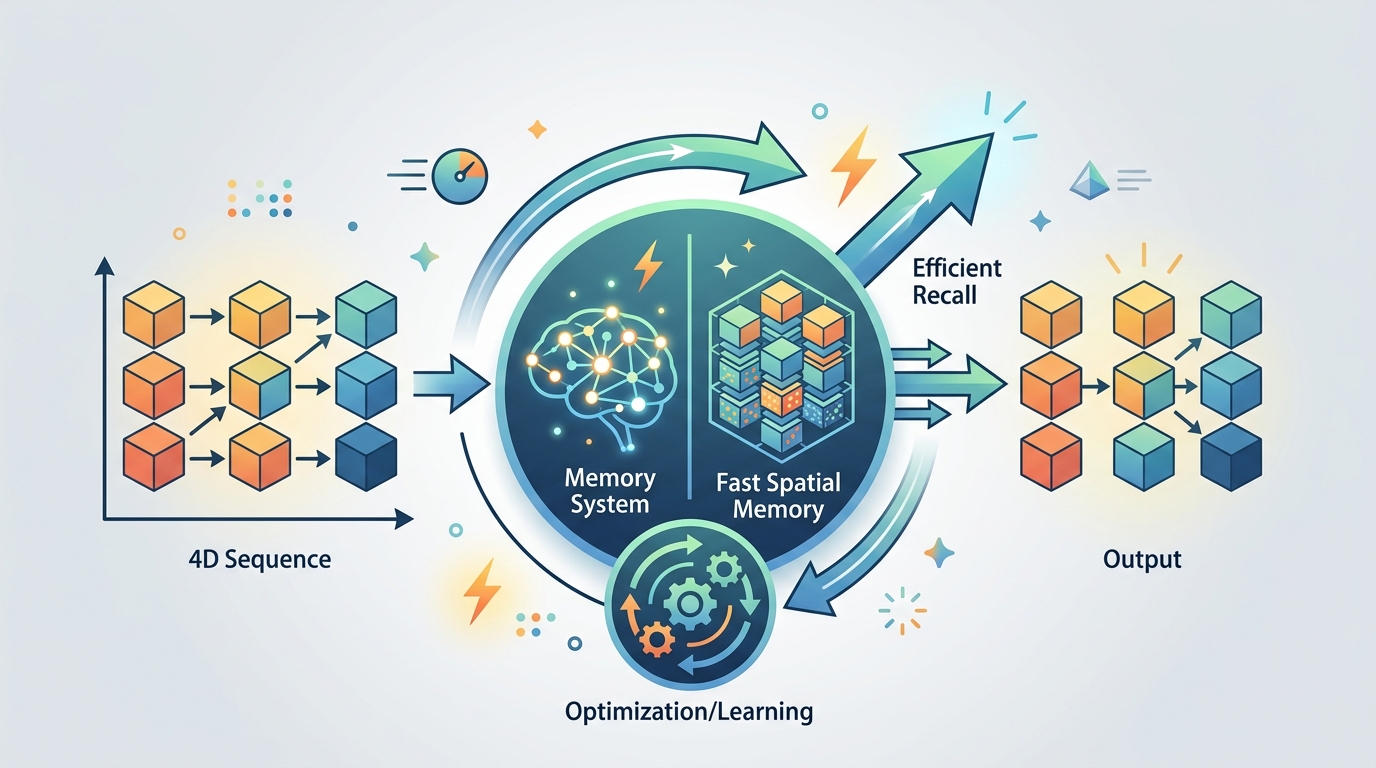

A new 4D reconstruction model uses elastic test-time training to reduce forgetting and memory bottlenecks in long-sequence spatial learning.

Long-context 3D and 4D reconstruction has a familiar problem: the more you let a model adapt at inference time, the more likely it is to forget earlier information or overfit to what it just saw. This paper tackles that tradeoff head-on. Its approach, Fast Spatial Memory with Elastic Test-Time Training, is designed to make test-time updates more stable while keeping them fast and useful for long observation sequences.

For engineers working on spatial AI, robotics, embodied perception, or any system that has to build a scene representation from a long stream of views, the practical issue is not just accuracy. It is also whether the model can keep adapting without blowing up memory usage or collapsing into shortcuts. The authors argue that their approach pushes test-time training beyond the usual single-chunk setup and toward multi-chunk adaptation for genuinely long sequences.

What problem this paper is trying to fix

The paper starts from Large Chunk Test-Time Training, or LaCT, which has shown strong performance on long-context 3D reconstruction. But LaCT’s fully plastic inference-time updates come with a cost: they can suffer from catastrophic forgetting and overfitting. In practice, that means the model may adapt well to recent observations while losing useful information from earlier parts of the sequence.
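
The forgetting effect can be illustrated with a toy example. This is a sketch under strong assumptions, not the paper's setup: a single scalar weight stands in for the fast weights, each chunk is reduced to one target value, and the helper name `fit_plastic` and the values of `lr` and `steps` are hypothetical.

```python
def fit_plastic(targets, lr=0.5, steps=5):
    """Fit one scalar weight to each chunk target in order, with no regularizer."""
    w = 0.0
    for t in targets:
        for _ in range(steps):
            # Gradient step on the toy loss 0.5 * (w - t) ** 2.
            w -= lr * (w - t)
    return w


# Three consistent early chunks, then one chunk with a very different target.
targets = [1.0, 1.0, 1.0, 5.0]
w_final = fit_plastic(targets)

early_error = abs(w_final - targets[0])   # distance from what the early chunks taught
late_error = abs(w_final - targets[-1])   # distance from the most recent chunk
```

With nothing holding the weight back, `w_final` ends close to the last target, so `late_error` is small while `early_error` is large. That is the fully plastic behavior described above, in miniature.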

Because of that instability, LaCT is usually run with one large chunk that spans the full input sequence. That setup undercuts the broader goal of handling arbitrarily long sequences in a single pass. It also leaves an important systems-level problem unresolved: the activation-memory bottleneck. If you want a model that can ingest longer and longer streams, you need something that does not require every activation to stay live in memory at once.
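
To make the memory argument concrete, here is a rough sketch that uses the number of frames processed together as a crude proxy for activation memory. That proxy is an assumption for illustration only, and the helpers `iter_chunks` and `peak_live_frames` are hypothetical names, not part of LaCT or FSM.

```python
from typing import Iterator, List, Sequence


def iter_chunks(frames: Sequence[int], chunk_size: int) -> Iterator[List[int]]:
    """Yield consecutive chunks of at most chunk_size frames."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    for start in range(0, len(frames), chunk_size):
        yield list(frames[start:start + chunk_size])


def peak_live_frames(num_frames: int, chunk_size: int) -> int:
    """Largest number of frames processed together (a crude memory proxy)."""
    return max(
        (len(chunk) for chunk in iter_chunks(range(num_frames), chunk_size)),
        default=0,
    )


single_chunk = peak_live_frames(1000, 1000)  # 1000: the whole sequence is live at once
multi_chunk = peak_live_frames(1000, 128)    # 128: bounded by the chunk size
```

Under this proxy, the single-chunk setup scales its peak footprint with sequence length, while a multi-chunk setup caps it at the chunk size. Real activation memory depends on the architecture and implementation, so treat the numbers as relative only.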

The authors frame this as a gap between what test-time training can do in theory and what it can do robustly in a real long-sequence pipeline. Their goal is not just to make the model more accurate, but to make the adaptation process itself less fragile.

How Elastic Test-Time Training works

The core idea is Elastic Test-Time Training, which borrows inspiration from elastic weight consolidation. Instead of letting the fast weights drift freely during inference, the method stabilizes them with a Fisher-weighted elastic prior around a maintained anchor state.

In plain English: the model still updates itself at test time, but those updates are pulled back toward a reference point so they do not wander too far. The anchor is not fixed forever. It evolves as an exponential moving average of past fast weights, which gives the system a way to balance stability and plasticity over time.
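
A minimal sketch of what such an update could look like, assuming an EWC-style quadratic penalty. The function name `elastic_step`, the hyperparameters `lr`, `lam`, and `ema`, and the choice to refresh the anchor after every step are illustrative assumptions, not details taken from the paper.

```python
from typing import List, Tuple


def elastic_step(
    w: List[float],
    anchor: List[float],
    grad: List[float],
    fisher: List[float],
    lr: float = 0.1,    # assumed step size
    lam: float = 1.0,   # assumed strength of the elastic prior
    ema: float = 0.9,   # assumed EMA decay for the anchor
) -> Tuple[List[float], List[float]]:
    """One illustrative fast-weight update with a Fisher-weighted elastic pull."""
    # Gradient of: task_loss + (lam / 2) * sum_i fisher_i * (w_i - anchor_i) ** 2
    new_w = [
        wi - lr * (gi + lam * fi * (wi - ai))
        for wi, ai, gi, fi in zip(w, anchor, grad, fisher)
    ]
    # The anchor tracks an exponential moving average of the fast weights.
    new_anchor = [ema * ai + (1.0 - ema) * wi for ai, wi in zip(anchor, new_w)]
    return new_w, new_anchor


# Usage: zero task gradient, so only the elastic pull moves the weights.
w, anchor = elastic_step([1.0, 1.0], [0.0, 0.0], [0.0, 0.0], [1.0, 0.0])
```

In this sketch, a weight with a large Fisher value is pulled strongly toward the anchor, while a weight with a Fisher value of zero moves only with the task gradient. The slowly moving anchor is what lets the reference point follow the sequence instead of staying frozen at its initial state.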

That matters because long sequences are not just “more of the same.” New views can add genuinely new information, but they can also tempt the model into overfitting to a narrow local pattern. The elastic prior is meant to keep the updates useful without letting them erase what the model already learned from earlier chunks.

Building on Elastic Test-Time Training, the paper introduces Fast Spatial Memory, or FSM. FSM is positioned as an efficient and scalable model for 4D reconstruction. It learns spatiotemporal representations from long observation sequences and can render novel view-time combinations, which is the capability you want when the scene changes over time rather than staying static.

What the paper actually shows

The abstract says the authors pre-trained FSM on large-scale curated 3D and 4D data so it could capture both the dynamics and semantics of complex spatial environments. That pretraining is part of the story: the model is not just doing clever inference-time adaptation on its own, but doing so from a representation base that was built to understand spatial and temporal structure.

The paper reports extensive experiments, and the high-level takeaway is that FSM supports fast adaptation over long sequences and delivers high-quality 3D/4D reconstruction with smaller chunks. The abstract also says it mitigates the camera-interpolation shortcut, which suggests the model is less likely to rely on an easier but less generalizable path when rendering novel view-time combinations.

What the abstract does not provide are concrete benchmark numbers, dataset names, or comparison tables. So while the paper claims strong experimental results, the abstract alone does not let us quantify them. If you are evaluating the method for production use, you would still need to inspect the full paper’s experiments, ablations, and runtime measurements.

- LaCT is strong, but fully plastic updates can forget earlier information.
- Elastic Test-Time Training adds a Fisher-weighted prior to keep updates anchored.
- The anchor itself moves via an exponential moving average of past fast weights.
- FSM uses this setup for efficient 4D reconstruction over long sequences.
- The paper claims smaller chunks, better adaptation, and less camera-interpolation shortcutting.

Why developers should care

If you build systems that need to model environments over time, the interesting part here is not just the reconstruction task. It is the training and inference pattern. Many spatial models work well when the sequence is short or when the whole context can be processed as one block. That breaks down when you want to scale to longer streams, lower memory pressure, or online adaptation.

This paper is relevant because it treats test-time adaptation as something that needs regularization, not just more freedom. That is a useful design lesson for engineers: if a model updates itself during inference, you need a mechanism that keeps those updates from destroying earlier state. The elastic anchor is one concrete way to do that.

It also points toward a more practical multi-chunk future for long-context spatial models. Instead of assuming one giant chunk is the only safe way to run inference-time learning, the paper argues for a setup that can adapt across chunks while reducing activation-memory costs. That is especially appealing for systems where memory is the real constraint, not just compute.

Limitations and open questions

The abstract is promising, but it leaves several questions open. We do not get benchmark numbers, latency figures, memory savings measurements, or details on how FSM compares against baselines beyond the general claim of extensive experiments. We also do not see failure cases, sensitivity to chunk size, or how robust the method is across different scene types.

Another open question is operational complexity. Elastic Test-Time Training adds a Fisher-weighted prior and a maintained anchor state, which sounds lightweight conceptually, but the practical cost depends on implementation details that are not visible in the abstract. Developers would want to know how much extra bookkeeping it adds, whether it changes throughput, and how it behaves under noisy or sparse observations.

Still, the direction is clear: if you want long-sequence spatial models to be usable beyond a single giant context window, you need better control over test-time plasticity. This paper’s main contribution is to show one way to do that while keeping the model adaptable, memory-conscious, and aimed at 4D reconstruction rather than just static scene understanding.

In short, FSM is less about a flashy new benchmark claim and more about a systems-minded fix for a real scaling problem. For engineers building spatial memory, embodied perception stacks, or long-horizon scene reconstruction pipelines, that is the kind of idea worth tracking closely.
