Hierarchical Planning Cuts World-Model Search Cost

A hierarchical latent world-model planner improves long-horizon control and cuts planning compute, with zero-shot gains on real robots.

Hierarchical Planning with Latent World Models tackles a familiar problem in model predictive control: learned world models can work well for short-horizon decisions, but they often get brittle when the task stretches out. Prediction errors accumulate, the search space blows up, and planning becomes expensive right when you need it most.

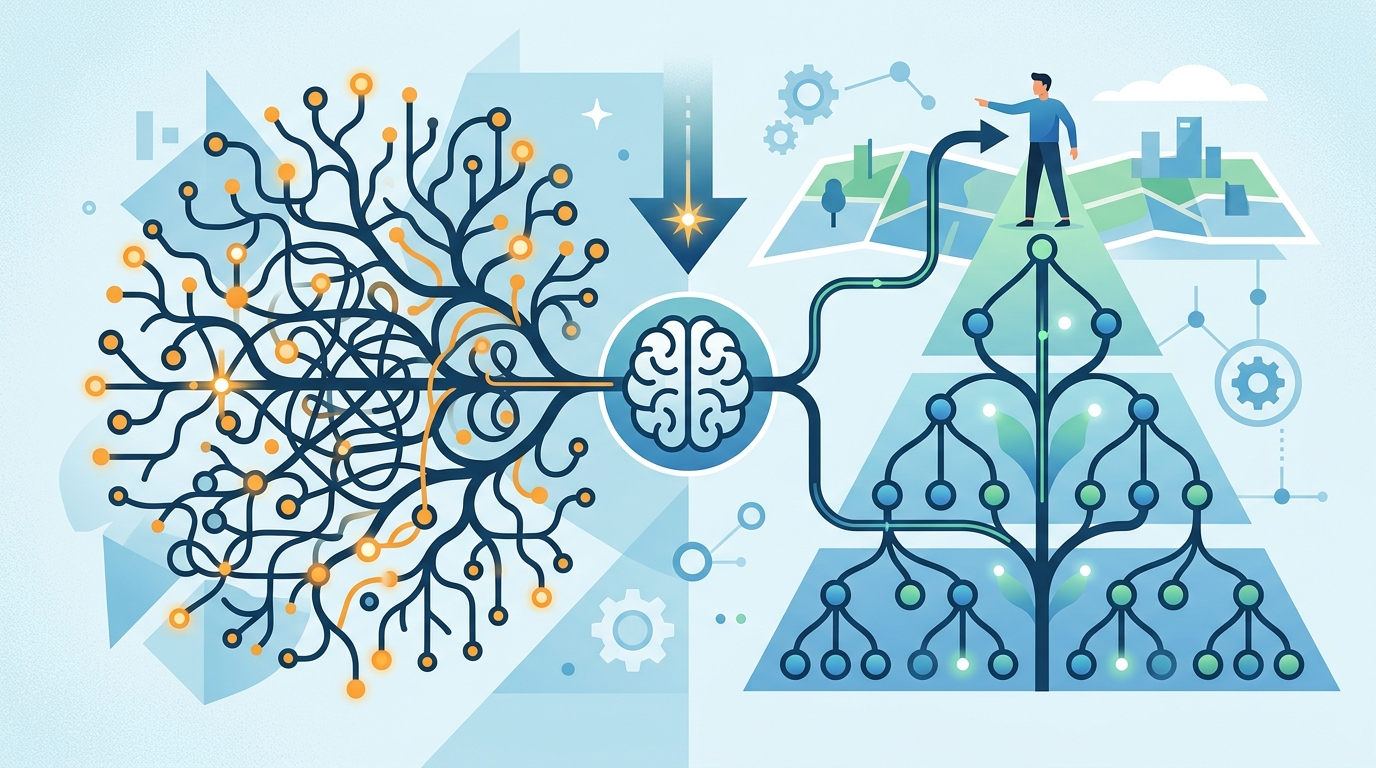

The paper’s core idea is straightforward: instead of planning at one temporal scale, learn latent world models at multiple scales and plan hierarchically across them. That gives the system a way to reason over long horizons without brute-forcing every low-level step, and it does so in a way the authors describe as modular across different latent world-model architectures and domains.

What problem this paper is trying to fix

Learned world models are attractive because they can support zero-shot control in new environments. For engineers, that means a controller can sometimes handle a new setting at inference time by planning against the model’s predictions, instead of needing a full retrain for every deployment. In embodied control, that’s a big deal: robots rarely get to operate in the same conditions they were trained on.

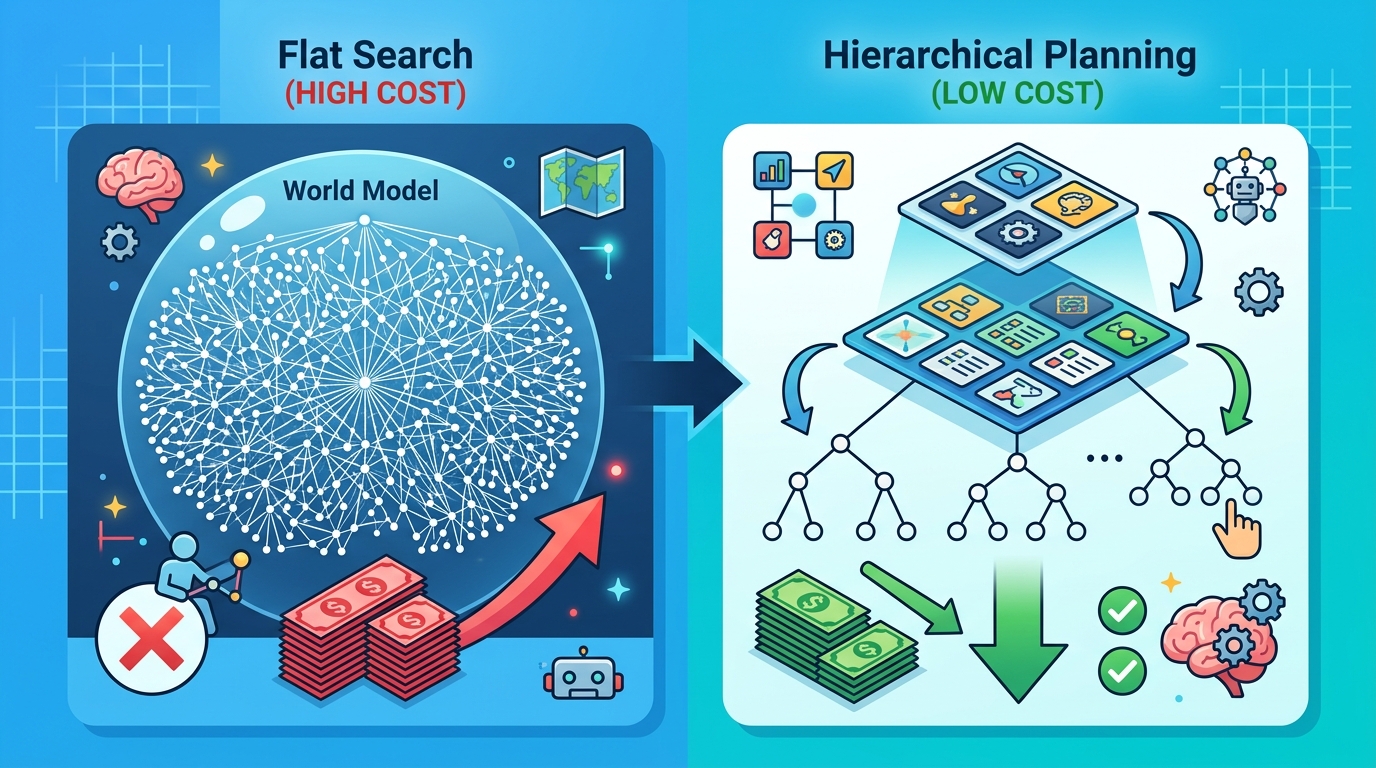

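But there’s a catch. The longer the planning horizon, the more the model’s prediction mistakes stack up, because each predicted step is built on the one before it. At the same time, the planner has to search a tree of possible actions and outcomes that grows exponentially with the horizon: with 4 candidate actions per step, a 10-step plan already has 4^10, roughly a million, possible sequences. In practice, that makes long-horizon MPC with a learned model hard to use for tasks that cannot be solved greedily or over a short horizon.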

This paper is aimed squarely at that failure mode. The authors focus on long-horizon control for embodied systems, where the planner has to stay useful over many steps instead of just making the next move look good.
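To make the two pressures concrete, here is a minimal Python sketch. It is not taken from the paper: the function names, the 1-D dynamics, and the constant model bias are all assumptions chosen only to show how the sequence count and the open-loop prediction drift both grow with the horizon.

```python
def flat_sequences(num_actions: int, horizon: int) -> int:
    """Number of distinct action sequences a flat planner could enumerate."""
    return num_actions ** horizon


def open_loop_drift(bias: float, horizon: int) -> float:
    """Toy illustration (assumed dynamics): the true state advances by 1.0 per
    step, while the learned model predicts 1.0 + bias per step."""
    true_x, model_x = 0.0, 0.0
    for _ in range(horizon):
        true_x += 1.0
        model_x += 1.0 + bias  # each prediction builds on the previous one
    return abs(model_x - true_x)


for h in (5, 10, 20):
    print(h, flat_sequences(4, h), open_loop_drift(0.05, h))
```

Even in this idealized setting, the drift grows linearly with the horizon while the number of candidate sequences grows exponentially. Real learned models can compound error faster than this toy does.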

How the method works in plain English

The proposal is to learn latent world models at multiple temporal scales. Think of it as giving the planner both a coarse map and a detailed map of the same environment. The coarse level helps with broad, long-range reasoning, while the finer levels handle local action selection.

On top of those multi-scale models, the system performs hierarchical planning. Rather than searching all possible low-level action sequences directly, it plans across the scales, which reduces inference-time planning complexity. The result is a planning abstraction that is meant to plug into different latent world-model setups instead of depending on one specific architecture.

That modularity matters. A lot of planning research is tightly coupled to a particular model or environment. Here, the authors position hierarchical planning as a layer that can sit on top of diverse latent world-model architectures and domains, which makes the idea more practical for experimentation and integration.

In simple terms: the model does not need to think about every step in the same way. It can make long-range decisions at a higher level, then refine them at lower levels when needed. That is the main trick for keeping planning tractable.
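The coarse-then-fine idea can be sketched in a few lines of Python. This is a toy under stated assumptions, not the authors' algorithm: the 1-D world, the exhaustive `search` routine, the `stride` parameter, and the hand-written `rollout` that stands in for a learned latent model are all hypothetical. It only illustrates why planning at two scales evaluates far fewer sequences than planning at one.

```python
from itertools import product

ACTIONS = (-1, 0, 1)  # hypothetical primitive actions on a 1-D line


def rollout(state, actions, step_size=1):
    """Toy stand-in for a world model: each action shifts the state."""
    for a in actions:
        state += a * step_size
    return state


def search(state, goal, horizon, step_size=1):
    """Exhaustive search: try every action sequence, keep the one that ends
    closest to the goal. Returns (best_sequence, sequences_evaluated)."""
    best_seq, best_cost, evaluated = None, float("inf"), 0
    for seq in product(ACTIONS, repeat=horizon):
        evaluated += 1
        cost = abs(rollout(state, seq, step_size) - goal)
        if cost < best_cost:
            best_seq, best_cost = seq, cost
    return best_seq, evaluated


def hierarchical_plan(state, goal, coarse_horizon, stride):
    """Plan coarse macro-steps first, then refine only the first macro-step."""
    coarse_seq, n_coarse = search(state, goal, coarse_horizon, step_size=stride)
    subgoal = state + coarse_seq[0] * stride  # first coarse waypoint
    fine_seq, n_fine = search(state, subgoal, stride, step_size=1)
    return fine_seq, n_coarse + n_fine


flat_seq, flat_cost = search(0, 6, horizon=6)
hier_seq, hier_cost = hierarchical_plan(0, 6, coarse_horizon=2, stride=3)
print(flat_cost, hier_cost)  # prints "729 36"
```

Both planners cover the same six primitive steps, but the flat search enumerates 3^6 sequences while the two-level search enumerates 3^2 + 3^3. The specific ratio here is an artifact of the toy setup and should not be read as the paper's reported savings.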

What the paper actually shows

The paper reports zero-shot control on real-world non-greedy robotic tasks. The headline result is a 70% success rate on pick-and-place when the system is given only a final goal specification. The comparison point is a single-level world model, which achieved 0% in that setting.

That is a strong signal that the hierarchical setup is doing something useful beyond just shaving off compute. It suggests the approach can help when a task cannot be solved by greedily optimizing immediate reward or short-term progress.

The authors also evaluate the method in physics-based simulated environments, including push manipulation and maze navigation. In those settings, hierarchical planning achieved higher success while requiring up to 4x less planning-time compute. The abstract gives no finer-grained benchmark numbers, so these are the only figures available here.

That combination of results is important: zero-shot success on a real robot plus lower inference cost in simulation. It points to both effectiveness and efficiency, which is exactly what you want from a planning abstraction.

Why developers should care

If you build robotics systems, embodied agents, or any control stack that has to plan under uncertainty, this paper is worth a look because it addresses the two pain points that usually show up together: long-horizon instability and expensive search. A planner that is more robust over longer timescales and cheaper to run at inference time is easier to deploy.

There is also a broader software-engineering angle. The paper frames hierarchical planning as modular, which makes it more appealing than a one-off algorithm tied to a single benchmark. If the abstraction really transfers across latent world-model architectures and domains, then it could become a reusable planning layer rather than a research prototype locked to one setup.

- Useful when the task requires long-horizon reasoning, not just next-step optimization.
- Potentially easier to deploy than a flat planner because it reduces planning-time compute.
- Relevant for zero-shot control scenarios where retraining is not practical.
- Still needs validation beyond the tasks and environments reported in the paper.

Limitations and open questions

The abstract gives a promising headline, but it does not answer everything. We do not get details on training cost, model size, dataset scale, or how sensitive the method is to the choice of temporal scales. We also do not see whether the hierarchical planner adds complexity elsewhere, such as in implementation or tuning.

Another open question is how broadly the modular claim holds in practice. The paper says the approach applies across diverse latent world-model architectures and domains, but the abstract only names a limited set of environments: a real-world pick-and-place task, plus push manipulation and maze navigation in simulation. That is encouraging, but not the same as proving broad generality.

There is also the usual planning tradeoff to keep in mind. Hierarchy can reduce search space, but it can also introduce abstraction errors if the coarse level misses important details. The paper’s results suggest the balance works well in the reported tasks, but engineers should still treat it as a method to evaluate in their own control loop, not a universal fix.
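The abstraction-error risk is easy to reproduce in a toy setting. The sketch below is purely illustrative and not from the paper: the wall position, the `stride` of 3, and both step functions are assumptions. The coarse model only tracks net displacement, so it cannot see an obstacle that the fine-grained dynamics respect.

```python
WALL = 2  # hypothetical blocked cell on a 1-D line


def fine_step(state, action):
    """Fine-grained dynamics: a move into the wall leaves the state unchanged."""
    nxt = state + action
    return state if nxt == WALL else nxt


def coarse_step(state, macro, stride=3):
    """Coarse model: predicts net displacement only, so the wall is invisible."""
    return state + macro * stride


def execute_macro(state, macro, stride=3):
    """Carry out one macro-step as `stride` primitive steps."""
    for _ in range(stride):
        state = fine_step(state, macro)
    return state


predicted = coarse_step(0, 1)   # where the coarse level expects to land
actual = execute_macro(0, 1)    # where the fine-grained dynamics end up
abstraction_error = abs(predicted - actual)
print(predicted, actual, abstraction_error)  # prints "3 1 2"
```

A check like this, comparing coarse predictions against fine-grained rollouts on your own task, is one way to gauge whether a chosen temporal scale hides details that matter.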

Even with those caveats, the direction is compelling. If learned world models are going to be used for real control problems, they need to handle long horizons without turning inference into an expensive search problem. This paper’s answer is to plan at multiple temporal scales, and the reported results suggest that is a practical way to get both better success and lower compute.
