HyCOP makes PDE surrogates modular and interpretable

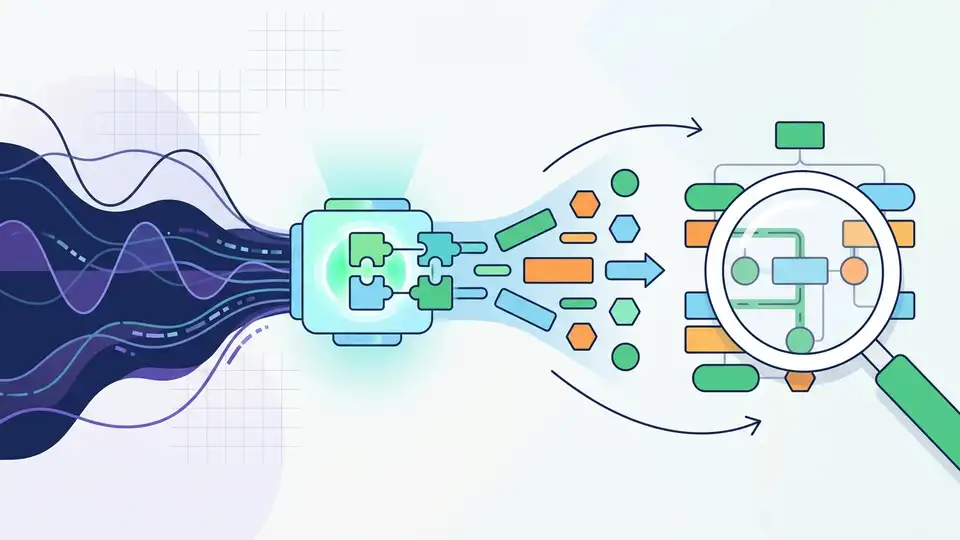

HyCOP learns PDE solution operators as short, query-conditioned programs, aiming for more interpretable surrogates and better OOD transfer.

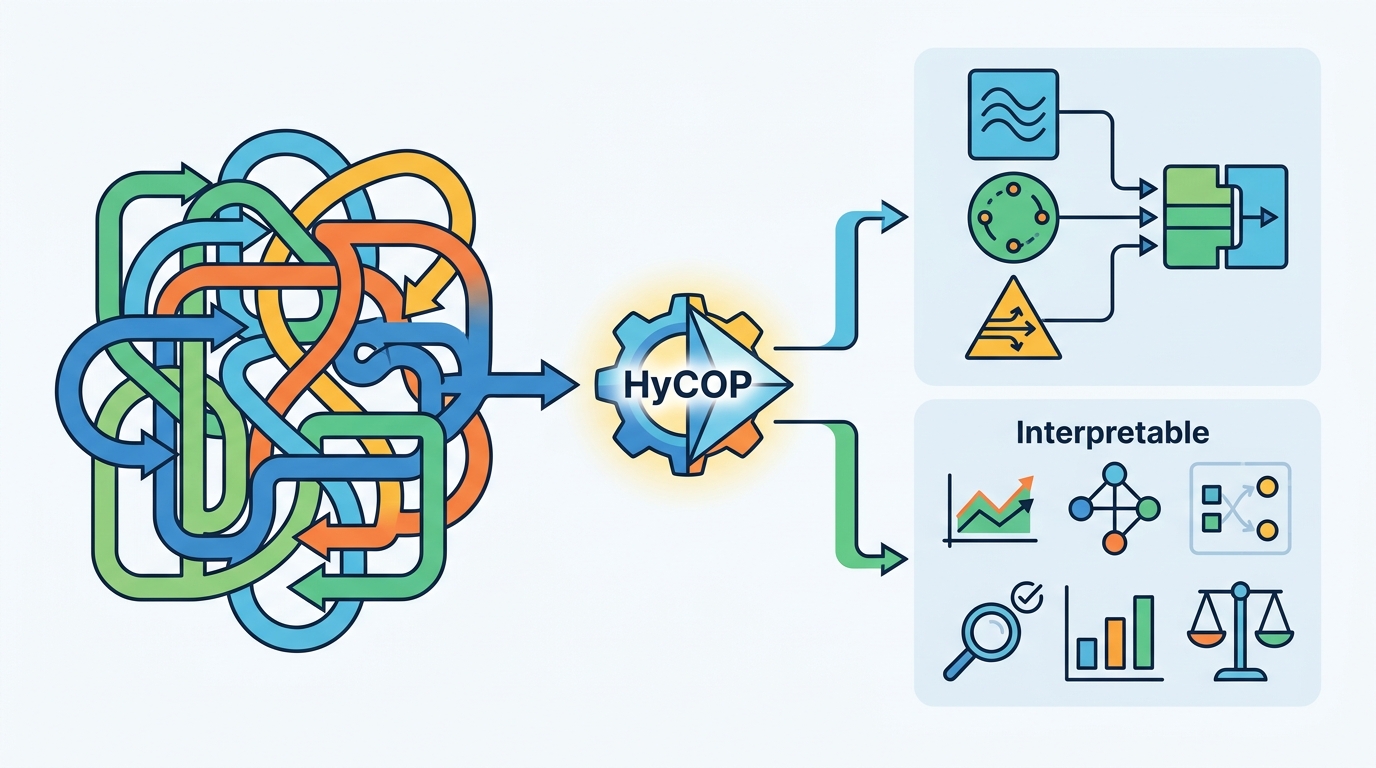

The paper "HyCOP: Hybrid Composition Operators for Interpretable Learning of PDEs" takes a familiar pain point in scientific ML and attacks it head-on: monolithic neural operators are hard to interpret, hard to adapt, and often brittle when the regime changes. HyCOP proposes a modular alternative that composes simple pieces such as advection, diffusion, learned closures, and boundary handling into short programs that are chosen on the fly.

For developers building surrogate models for PDEs, the interesting part is not just that HyCOP predicts solutions. It is that the model is structured to explain itself in terms of which module ran, for how long, and under what query conditions. That makes it closer to a controllable system than a black box.

What problem this paper is trying to fix

Neural operators are often trained as a single end-to-end map from inputs to PDE solutions. That can work well in-distribution, but the paper argues that this style of model is difficult to inspect and can struggle when the test regime shifts. In practical terms, that means you may not know which physical behavior the model is capturing, which part is failing, or how to reuse pieces of the model when the problem changes.

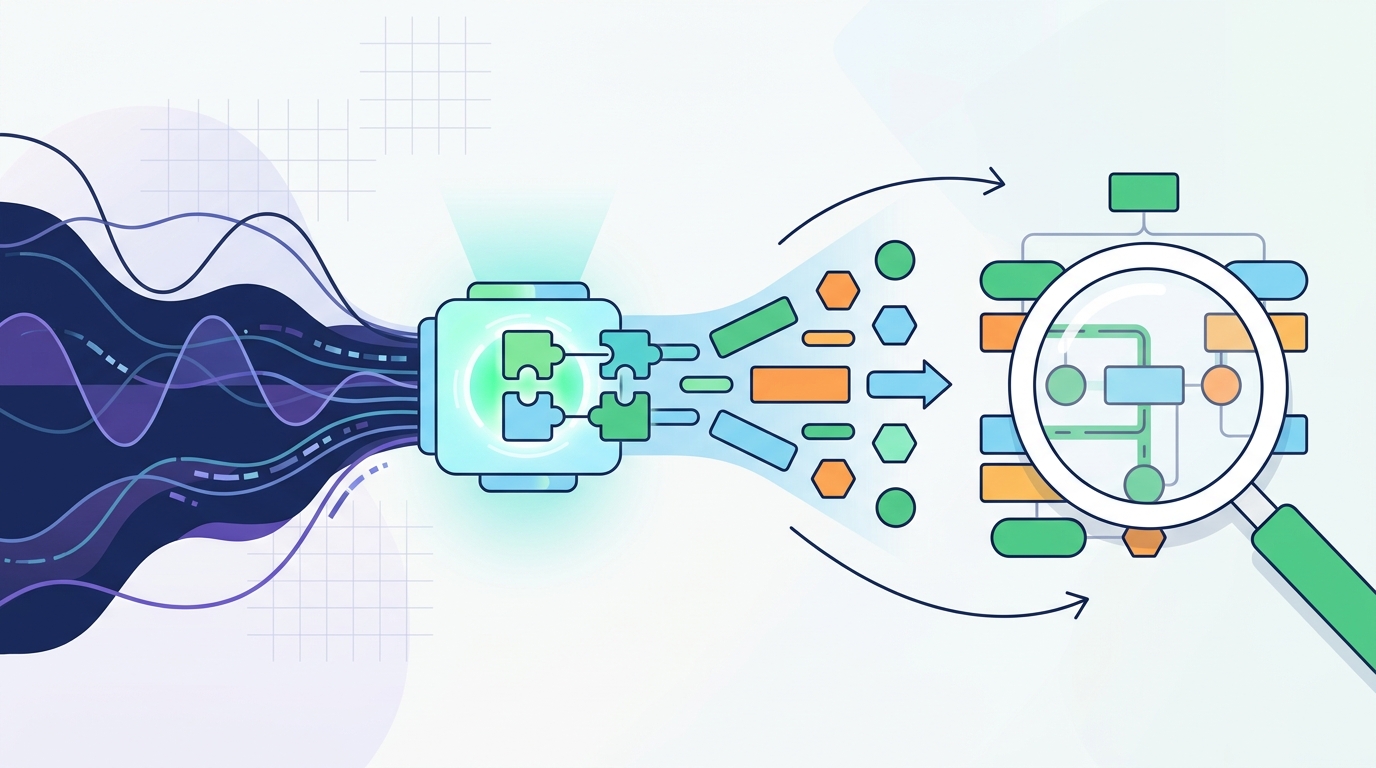

HyCOP is designed around that gap. Instead of asking one network to learn everything, it breaks the operator into modules that correspond to recognizable ingredients of PDE dynamics. The framework then learns a policy that decides which module to apply and for how long, based on regime features and state statistics. That gives the model a procedural structure that is easier to reason about than a dense latent map.

This matters because many real PDE workloads are not fixed, one-off benchmarks. They evolve across boundary conditions, forcing terms, residual structure, and operating regimes. A system that can swap parts without retraining from scratch is more useful than one that only performs well on the exact distribution it saw during training.

How HyCOP works in plain English

HyCOP is described as a modular framework for learning parametric PDE solution operators by composing simple modules in a query-conditioned way. The key idea is to learn a policy over short programs rather than a monolithic function. Each program specifies which module to use and for how long, and the choice is conditioned on the current regime and state summary.
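To make the "program" idea concrete, here is a minimal Python sketch. It is not the paper's implementation. It assumes a program is an ordered list of (module name, duration) pairs and uses a hand-written rule where HyCOP would use a learned policy. The names `toy_policy` and `state_summary`, and the `advection_strength` and `roughness_threshold` features, are hypothetical.

```python
from statistics import pvariance
from typing import Dict, List, Tuple

# A program is an ordered list of (module name, duration) pairs.
Program = List[Tuple[str, float]]


def state_summary(u: List[float]) -> Dict[str, float]:
    """Cheap statistics of the current state that a policy could condition on."""
    return {"mean": sum(u) / len(u), "variance": pvariance(u)}


def toy_policy(regime: Dict[str, float], summary: Dict[str, float],
               t_query: float) -> Program:
    """Hand-written stand-in for a learned policy (illustrative only)."""
    # Assumption: stronger advection calls for more, shorter splitting sub-steps.
    n_sub = 1 if regime["advection_strength"] < 1.0 else 4
    tau = t_query / n_sub
    program: Program = []
    for _ in range(n_sub):
        program.append(("advection", tau))
        program.append(("diffusion", tau))
    # Assumption: a learned closure is appended only for high-variance states.
    if summary["variance"] > regime.get("roughness_threshold", 1.0):
        program.append(("closure", t_query))
    return program


u0 = [0.0, 1.0, 0.0, -1.0]
program = toy_policy({"advection_strength": 2.0}, state_summary(u0), t_query=0.8)
for name, tau in program:
    print(f"{name:<10s} for {tau:.2f}")
```

The point of the sketch is that the output of the policy is a readable list, so the chosen composition can be logged and inspected for every query.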

The modules themselves can be either numerical sub-solvers or learned components. That hybrid design is important: it means HyCOP is not limited to purely neural pieces, and it does not force every physical effect into the same representation. If a part of the PDE is well understood, it can stay explicit; if a part is harder to model, a learned closure can fill the gap.
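A rough sketch of what a hybrid module set could look like is below. This is an illustration under stated assumptions, not HyCOP's code: every module shares one hypothetical interface, `(state, duration) -> state`. The diffusion module is an ordinary explicit finite-difference solver on a periodic grid. `make_closure` is a placeholder for a trained component, and its single `rate` parameter stands in for learned weights.

```python
import math
from typing import Callable, Dict, List, Tuple

State = List[float]
Module = Callable[[State, float], State]  # (state, duration) -> new state


def diffusion(u: State, tau: float, nu: float = 0.05, dx: float = 1.0) -> State:
    """Explicit numerical sub-solver: periodic finite-difference heat equation."""
    # Sub-step so that nu * dt / dx**2 <= 0.4, inside the explicit stability limit.
    n_sub = max(1, math.ceil(nu * tau / (0.4 * dx * dx)))
    dt = tau / n_sub
    n = len(u)
    for _ in range(n_sub):
        # u[i - 1] wraps to the last cell when i == 0, giving periodic boundaries.
        u = [u[i] + nu * dt / dx**2 * (u[(i + 1) % n] - 2.0 * u[i] + u[i - 1])
             for i in range(n)]
    return u


def make_closure(rate: float) -> Module:
    """Placeholder for a learned closure; `rate` stands in for trained weights."""
    def closure(u: State, tau: float) -> State:
        return [x * math.exp(-rate * tau) for x in u]
    return closure


def run_program(modules: Dict[str, Module],
                program: List[Tuple[str, float]], u0: State) -> State:
    """Apply each (module name, duration) pair in order."""
    u = list(u0)
    for name, tau in program:
        u = modules[name](u, tau)
    return u


modules = {"diffusion": diffusion, "closure": make_closure(rate=0.3)}
u0 = [0.0, 0.0, 1.0, 0.0, 0.0, 0.0]
u1 = run_program(modules, [("diffusion", 2.0), ("closure", 0.5)], u0)
```

Because both kinds of module share one interface, the executor does not need to know which parts are explicit numerics and which are learned.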

Another practical detail is that HyCOP supports evaluation at arbitrary query times without autoregressive rollout. In other words, the model is not forced to step forward one tiny prediction at a time just to answer a query. For engineers, that can reduce the mismatch between training-time rollout behavior and inference-time usage, especially when you need solution values at specific times rather than a full step-by-step trajectory.
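The contrast with autoregressive rollout can be illustrated with a toy case. The sketch below assumes a single Fourier mode under linear advection and diffusion, where each module has an exact flow for any duration. That is a simplifying assumption for illustration, and `K`, `SPEED`, and `NU` are made-up parameters. Direct evaluation ties the program's durations to the query time, so the number of module calls does not grow with it.

```python
import cmath

K, SPEED, NU = 2.0, 1.0, 0.1  # hypothetical wavenumber, advection speed, viscosity
calls = {"count": 0}          # counts module invocations


def advect(a: complex, tau: float) -> complex:
    """Exact advection of one Fourier mode for any duration tau."""
    calls["count"] += 1
    return a * cmath.exp(-1j * K * SPEED * tau)


def diffuse(a: complex, tau: float) -> complex:
    """Exact diffusion of one Fourier mode for any duration tau."""
    calls["count"] += 1
    return a * cmath.exp(-NU * K * K * tau)


def evaluate_at(a0: complex, t_query: float) -> complex:
    """Direct evaluation: a two-entry program whose durations equal t_query."""
    a = a0
    for module, tau in [(advect, t_query), (diffuse, t_query)]:
        a = module(a, tau)
    return a


def rollout(a0: complex, t_query: float, dt: float) -> complex:
    """Autoregressive baseline: many small steps up to t_query."""
    a = a0
    for _ in range(round(t_query / dt)):
        a = diffuse(advect(a, dt), dt)
    return a


direct = evaluate_at(1.0 + 0.0j, t_query=2.0)
direct_calls = calls["count"]
stepped = rollout(1.0 + 0.0j, t_query=2.0, dt=0.01)
rollout_calls = calls["count"] - direct_calls
print(direct_calls, rollout_calls, abs(direct - stepped))
```

In this linear toy the two modules commute, so both routes agree up to rounding. For real PDEs the modules generally do not commute, and the accuracy of a short program then depends on how it is composed.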

The paper also frames the model as a dictionary of modules that can be updated modularly. That is where transfer comes in: if a problem changes, you may be able to swap boundary handling or enrich the residual module instead of rebuilding the whole system. The paper specifically mentions boundary swaps and residual enrichment as examples.
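A boundary swap of this kind might look like the following sketch. It assumes the module dictionary is a plain Python mapping from names to callables. The names `dirichlet_zero` and `zero_flux` and the toy interior solver are illustrative and not taken from the paper.

```python
from typing import Callable, Dict, List, Tuple

State = List[float]
Module = Callable[[State, float], State]  # (state, duration) -> new state


def diffuse_interior(u: State, tau: float, nu: float = 0.1) -> State:
    """One explicit diffusion step on interior nodes (unit grid spacing).

    Assumes nu * tau <= 0.5 so the explicit step stays stable.
    """
    new = list(u)
    for i in range(1, len(u) - 1):
        new[i] = u[i] + nu * tau * (u[i + 1] - 2.0 * u[i] + u[i - 1])
    return new


def dirichlet_zero(u: State, tau: float) -> State:
    """Boundary module: clamp both end values to zero."""
    return [0.0] + u[1:-1] + [0.0]


def zero_flux(u: State, tau: float) -> State:
    """Boundary module: copy the nearest interior value (zero gradient)."""
    return [u[1]] + u[1:-1] + [u[-2]]


def run_program(modules: Dict[str, Module],
                program: List[Tuple[str, float]], u0: State) -> State:
    u = list(u0)
    for name, tau in program:
        u = modules[name](u, tau)
    return u


dictionary: Dict[str, Module] = {"diffusion": diffuse_interior,
                                 "boundary": dirichlet_zero}
program = [("diffusion", 1.0), ("boundary", 0.0)] * 3
u0 = [1.0, 1.0, 2.0, 1.0, 1.0]

fixed_ends = run_program(dictionary, program, u0)
# Swap only the boundary entry; the diffusion module and the program are reused.
swapped = {**dictionary, "boundary": zero_flux}
insulated = run_program(swapped, program, u0)
```

Only one dictionary entry changes between the two runs, which is the kind of localized update the modular framing is meant to allow.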

What the paper actually shows

The abstract says HyCOP was evaluated across diverse PDE benchmarks and that it produces interpretable programs. It also claims order-of-magnitude out-of-distribution improvements over monolithic neural operators. Those are the strongest headline results in the source material, but the abstract does not provide the benchmark names, exact metrics, or the numerical values behind the improvement, so those details are not available here.

What the paper does make concrete is the type of benefit it is aiming for. The improvement is not just raw accuracy in a single setting; it is robustness under distribution shift, plus a structure that can be inspected after the fact. That is a different bar from standard benchmark chasing, because it tries to make the operator useful when the problem changes in ways that matter operationally.

The paper also claims a theory component. According to the abstract, the theory characterizes expressivity and gives an error decomposition that separates composition error from module error. That decomposition is useful because it turns a vague failure mode into two distinct questions: did the system choose the wrong composition, or did one of the modules itself approximate the PDE poorly?
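One way to picture such a split is a plain triangle inequality. This is only an illustration of the idea, not the paper's theorem, and the notation is assumed here: $q$ is the query, $\mathcal{G}$ the true solution operator, $\pi$ the program chosen by the policy, $\mathcal{P}_{\pi}$ that program run with exact modules, and $\widehat{\mathcal{P}}_{\pi}$ the same program run with the approximate modules actually available.

```latex
\[
\underbrace{\bigl\| \mathcal{G}(q) - \widehat{\mathcal{P}}_{\pi}(q) \bigr\|}_{\text{total error}}
\;\le\;
\underbrace{\bigl\| \mathcal{G}(q) - \mathcal{P}_{\pi}(q) \bigr\|}_{\text{composition error}}
\;+\;
\underbrace{\bigl\| \mathcal{P}_{\pi}(q) - \widehat{\mathcal{P}}_{\pi}(q) \bigr\|}_{\text{module error}}
\]
```

The first term is nonzero even with perfect modules, for example through splitting error. The second term vanishes only if every module reproduces its exact counterpart.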

That same error split is described as a process-level diagnostic. For practitioners, that means the framework is not only a predictor but also a debugging aid. If performance drops, the model structure may help identify whether the issue is in the policy over programs or in the underlying sub-solvers.
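A toy version of that diagnostic is sketched below, under assumptions that are not from the paper. It uses the scalar ODE du/dt = -A*u - B*u**2, which has a closed-form solution, and splits it into a linear and a quadratic module with exact flows. A deliberately mis-fit coefficient `a_hat` stands in for an imperfect learned module. All names and values are hypothetical.

```python
import math
from typing import Callable, Dict, List

A, B = 1.0, 1.0  # hypothetical coefficients of du/dt = -A*u - B*u**2


def exact_solution(u0: float, t: float) -> float:
    """Closed-form solution of the full equation (Bernoulli form)."""
    decay = math.exp(-A * t)
    return A * u0 * decay / (A + B * u0 * (1.0 - decay))


def linear_exact(u: float, tau: float) -> float:
    return u * math.exp(-A * tau)       # exact flow of du/dt = -A*u


def quadratic_exact(u: float, tau: float) -> float:
    return u / (1.0 + B * u * tau)      # exact flow of du/dt = -B*u**2


def linear_learned(u: float, tau: float, a_hat: float = 0.9) -> float:
    """Stand-in for an imperfect learned module (mis-fit decay rate)."""
    return u * math.exp(-a_hat * tau)


def run(modules: List[Callable[[float, float], float]],
        u0: float, t: float, n_sub: int) -> float:
    """Apply the modules in order, n_sub times, each for duration t / n_sub."""
    tau = t / n_sub
    u = u0
    for _ in range(n_sub):
        for module in modules:
            u = module(u, tau)
    return u


def diagnose(u0: float, t: float, n_sub: int) -> Dict[str, float]:
    truth = exact_solution(u0, t)
    ideal = run([linear_exact, quadratic_exact], u0, t, n_sub)     # exact modules
    actual = run([linear_learned, quadratic_exact], u0, t, n_sub)  # one mis-fit module
    return {"total": abs(truth - actual),
            "composition": abs(truth - ideal),
            "module": abs(ideal - actual)}


coarse = diagnose(u0=1.0, t=1.0, n_sub=1)
fine = diagnose(u0=1.0, t=1.0, n_sub=8)
print(coarse)
print(fine)
```

In this toy, using more sub-steps shrinks the composition term, while the module term does not vanish because it comes from the mis-fit coefficient. Separating the two is what tells you whether to revisit the program or retrain a module.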

Why developers should care

If you are building scientific ML systems, HyCOP points toward a more maintainable design pattern. The paper’s core bet is that PDE surrogates should look less like opaque regressors and more like composable systems with explicit behaviors. That can make models easier to audit, easier to adapt, and easier to extend when new physics or new boundary conditions show up.

There are a few concrete takeaways for implementation-minded readers:

- Use explicit modules when part of the physics is already well understood.

- Let a policy decide composition instead of hard-coding a single rollout path.

- Prefer query-conditioned evaluation when users need answers at arbitrary times.

- Keep error sources separable so failures are easier to diagnose.

At the same time, the abstract leaves open several important questions. We do not get the benchmark list, the exact training cost, the size of the module dictionary, or how sensitive the method is to the quality of its regime features and state statistics. We also do not know how much engineering effort is needed to define the initial module set or how often dictionary updates are required in practice.

That is the main tradeoff with a modular approach: you gain interpretability and transfer hooks, but you may also inherit more design decisions up front. HyCOP looks like a strong attempt to make PDE operators more like reusable software components, but the source material does not yet show how far that idea scales across tasks, teams, or production constraints.

Even with those limits, the paper is notable because it treats interpretability as a functional requirement, not a nice-to-have. For teams working on surrogate modeling, inverse problems, or simulation acceleration, that is a useful direction: make the model’s structure match the structure of the domain, and you get a system that is easier to trust and easier to evolve.

Bottom line

HyCOP proposes a hybrid, modular way to learn PDE solution operators that can explain its choices, support transfer, and improve out-of-distribution behavior over monolithic neural operators. The abstract is light on benchmark specifics, but the architecture and theory make the paper relevant to anyone trying to build scientific models that are both accurate and maintainable.
