In-Place TTT Lets LLMs Adapt at Inference

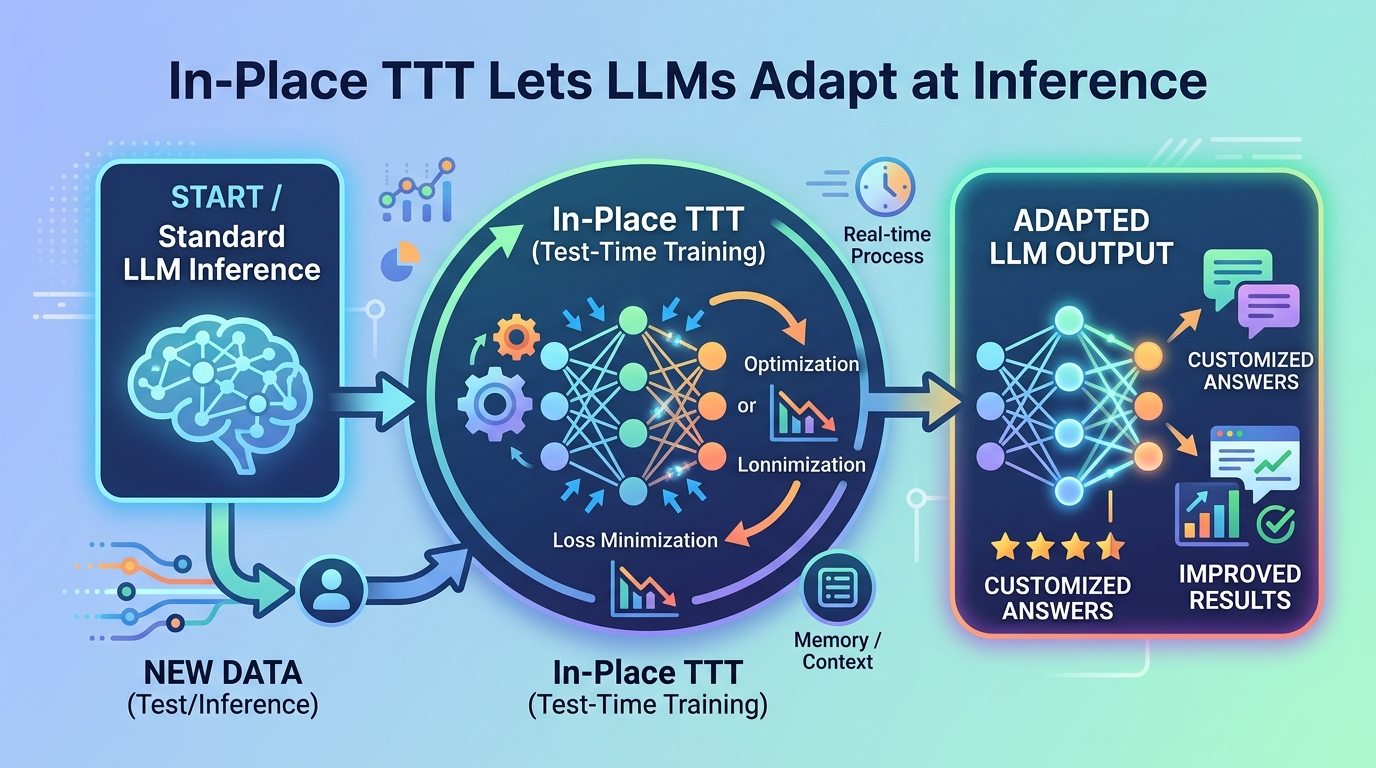

A new test-time training setup lets LLMs update fast weights in place, aiming for better long-context adaptation without full retraining.

Large language models are usually frozen after training: you train, deploy, and hope the model stays useful as the world changes. In-Place Test-Time Training argues that this static workflow is a bad fit for real-world tasks that keep producing new information, especially when context gets long and the model needs to adapt on the fly.

The paper’s core idea is practical: instead of retraining an entire model, it updates a small part of it during inference. That makes test-time training less like a research curiosity and more like a possible engineering pattern for continual adaptation in LLM systems.

What problem this paper is trying to fix

The authors start from a familiar limitation of today’s LLM stack: models are trained once, then deployed as fixed systems. That works fine when the task distribution stays stable, but it becomes awkward when the model is expected to absorb fresh information continuously, or when the input context is so long that fixed weights struggle to make use of everything it contains.

Test-Time Training, or TTT, is one proposed answer. The basic idea is to update a subset of parameters at inference time so the model can adapt while it is being used. But the paper says current LLM ecosystems run into three major barriers: architectural incompatibility, computational inefficiency, and fast-weight objectives that do not line up well with language modeling.

That last point matters. If the adaptation objective is generic reconstruction, it may not match the actual job of an autoregressive language model, which is to predict the next token. The paper’s argument is that if you want inference-time adaptation to work in LLMs, the training signal has to be aligned with the model’s real objective.

How In-Place TTT works in plain English

In-Place TTT treats the final projection matrix in the standard MLP blocks as the part of the network that can change at test time. The paper calls these adaptable parameters “fast weights.” The rest of the model stays frozen, which is why the method is described as a drop-in enhancement rather than something that requires rebuilding the whole architecture.
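To make the split concrete, here is a minimal pure-Python sketch of an MLP block whose final (down) projection is the only mutable tensor. It assumes a plain ReLU MLP for brevity; production LLMs typically use gated variants such as SwiGLU. The names `MLPBlockWithFastWeights`, `W_up`, and `W_down` are hypothetical, not taken from the paper.

```python
import random


def matvec(W, x):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]


def relu(v):
    return [max(0.0, a) for a in v]


class MLPBlockWithFastWeights:
    """Hypothetical MLP block: W_up is frozen ("slow"), W_down is the fast weight."""

    def __init__(self, d_model, d_ff, seed=0):
        rng = random.Random(seed)
        # Slow weight, shape (d_ff, d_model): never modified at test time.
        self.W_up = [[rng.gauss(0.0, 0.1) for _ in range(d_model)] for _ in range(d_ff)]
        # Fast weight, shape (d_model, d_ff): the only tensor test-time updates touch.
        self.W_down = [[rng.gauss(0.0, 0.1) for _ in range(d_ff)] for _ in range(d_model)]
        # Keep a copy of the pretrained values so a session can be reset.
        self._W_down_init = [row[:] for row in self.W_down]

    def hidden(self, x):
        """Intermediate activation that feeds the final projection."""
        return relu(matvec(self.W_up, x))

    def forward(self, x):
        return matvec(self.W_down, self.hidden(x))

    def reset_fast_weights(self):
        """Restore the fast weight to its pretrained value (e.g. between sessions)."""
        self.W_down = [row[:] for row in self._W_down_init]
```

Because the forward pass is the same as an ordinary MLP, the only new state this design introduces is the per-session copy of `W_down`.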

That design choice is important because it avoids one of the biggest practical headaches with test-time training: if the method requires a special architecture, adoption becomes much harder. By using a component that already exists in common LLM blocks, the authors are trying to make the approach compatible with existing models rather than forcing the ecosystem to rebuild around a new architecture.

The second part of the method is the objective. Instead of using a generic reconstruction loss, In-Place TTT uses a tailored objective that is explicitly aligned with next-token prediction. In other words, the model is not just learning to reconstruct inputs in some abstract sense; it is adapting in a way that matches the language modeling task it is already supposed to perform.
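The abstract does not spell out the exact objective, so the sketch below only illustrates the general contrast under an assumption: each position has a hidden vector feeding the fast weight and a per-token target vector (for instance, some representation of that token). A reconstruction objective asks position t to reproduce its own target, while a next-token-aligned objective asks it to produce the target for position t+1. The function names are hypothetical.

```python
def matvec(W, x):
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]


def reconstruction_pairs(hidden, targets):
    # Generic reconstruction: position t is trained to reproduce its own target.
    return list(zip(hidden, targets))


def next_token_pairs(hidden, targets):
    # Next-token-aligned (assumed form, hypothetical helper): position t is trained
    # toward the target for position t + 1, so the last position has no pair.
    return list(zip(hidden[:-1], targets[1:]))


def mse_loss(W, pairs):
    """Mean over pairs of the squared error between W @ h and its target."""
    total = 0.0
    for h, y in pairs:
        pred = matvec(W, h)
        total += sum((p - t) ** 2 for p, t in zip(pred, y))
    return total / len(pairs)


# Toy example: one-dimensional hidden states and targets.
hidden = [[1.0], [2.0], [3.0]]
targets = [[10.0], [20.0], [30.0]]
W = [[10.0]]
print(mse_loss(W, reconstruction_pairs(hidden, targets)))  # 0.0
print(mse_loss(W, next_token_pairs(hidden, targets)))      # 100.0
```

A weight that is perfect under the reconstruction objective can be poor under the next-token version, which is the kind of mismatch the paper's argument is about.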

The third piece is the update mechanism. The paper says it uses an efficient chunk-wise update scheme, which makes the algorithm scalable and compatible with context parallelism. For engineers, that is the difference between an idea that works in a toy setting and one that might fit into a real inference stack that has to process long contexts efficiently.
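The abstract does not describe the update rule itself, so the following is a generic chunk-wise test-time update rather than the paper's algorithm. It assumes a squared-error loss on the fast weight and one plain gradient step per chunk. Every position inside a chunk uses the weights from before that chunk, and the update is applied once the chunk has been processed, so later chunks see what earlier ones taught the weight. `chunkwise_ttt`, `chunk_size`, and `lr` are hypothetical names.

```python
def matvec(W, x):
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]


def chunkwise_ttt(W, hidden, targets, chunk_size, lr):
    """Generic apply-then-update loop over fixed-size chunks (not the paper's rule).

    W: fast weight as a list of rows (d_out x d_in); copied, not mutated.
    hidden, targets: per-position input vectors and target vectors.
    Returns (outputs for every position, final fast weight).
    """
    W = [row[:] for row in W]
    d_out, d_in = len(W), len(W[0])
    outputs = []
    for start in range(0, len(hidden), chunk_size):
        hs = hidden[start:start + chunk_size]
        ys = targets[start:start + chunk_size]

        # 1) Apply: every position in this chunk uses the pre-chunk weights.
        preds = [matvec(W, h) for h in hs]
        outputs.extend(preds)

        # 2) Update: one gradient step on the chunk's mean squared error.
        #    d/dW[i][j] of mean_t sum_i (pred_i - y_i)^2 = mean_t 2 (pred_i - y_i) h_j
        n = len(hs)
        grad = [[0.0] * d_in for _ in range(d_out)]
        for h, y, p in zip(hs, ys, preds):
            for i in range(d_out):
                err = p[i] - y[i]
                for j in range(d_in):
                    grad[i][j] += 2.0 * err * h[j] / n
        for i in range(d_out):
            for j in range(d_in):
                W[i][j] -= lr * grad[i][j]
    return outputs, W


outputs, W = chunkwise_ttt([[0.0]], [[1.0]] * 4, [[1.0]] * 4, chunk_size=2, lr=0.25)
print(outputs)  # [[0.0], [0.0], [0.5], [0.5]]
print(W)        # [[0.75]]
```

Larger chunks mean fewer, better-batched updates but coarser adaptation, since positions inside a chunk cannot benefit from each other's updates; that is the usual trade-off chunk-wise schemes make.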

- Adaptable part: the final projection matrix in MLP blocks

- Objective: next-token-prediction-aligned, not generic reconstruction

- Update style: chunk-wise, designed for scalability

- Compatibility goal: works as a drop-in enhancement for LLMs

What the paper actually shows

The abstract does give concrete results, but not a full benchmark table. It says that as an in-place enhancement, the method enables a 4B-parameter model to achieve superior performance on tasks with contexts up to 128k tokens. It also says that when the model is pretrained from scratch with this framework, it consistently outperforms competitive TTT-related approaches.

That is useful, but it is also limited. The abstract does not name the tasks, does not list exact scores, and does not provide the comparison details behind “competitive TTT-related approaches.” So the safe reading is that the method appears to help, especially in long-context settings, but the summary alone does not let us quantify the gain.

The paper also mentions ablation studies, which suggests the authors tested how different design choices affect performance. The abstract does not spell out the ablation results, but it does say they provide deeper insight into the method’s design. That usually means the paper tries to justify why the objective, the fast-weight choice, and the chunk-wise update all matter.

One especially relevant detail is that the method is presented in two modes. First, it can enhance an existing model in place. Second, it can be used when pretraining a model from scratch. That dual framing matters because it gives the approach a migration path: you do not necessarily need to throw away existing checkpoints to try it.

Why developers should care

If you build LLM systems, the appeal here is straightforward: adaptation without full retraining. A method that updates only a subset of weights at inference time could be useful anywhere the model has to keep up with changing context, evolving documents, or task-specific signals that appear after deployment.

It also speaks to a broader engineering question: how do you make models less static without turning inference into a training job? In-Place TTT is one attempt to answer that by keeping the update surface small and by aligning the adaptation objective with autoregressive language modeling.
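To put a rough number on "small", the sketch below counts how much of one transformer layer's weights the final MLP projection represents. The dimensions are hypothetical, not the paper's 4B configuration, and the count ignores biases, norms, and embeddings. It assumes standard multi-head attention (four d_model x d_model projections) and, by default, a gated MLP.

```python
def layer_param_counts(d_model, d_ff, gated=True):
    """Weight counts for one layer: (attention, MLP input projections, final MLP projection)."""
    attention = 4 * d_model * d_model  # Q, K, V, O; assumes no grouped-query sharing
    mlp_in = (2 if gated else 1) * d_model * d_ff  # gate + up (gated), or up only
    mlp_out = d_ff * d_model  # final projection: the fast weight in In-Place TTT
    return attention, mlp_in, mlp_out


def fast_weight_fraction(d_model, d_ff, gated=True):
    attention, mlp_in, mlp_out = layer_param_counts(d_model, d_ff, gated)
    return mlp_out / (attention + mlp_in + mlp_out)


# Hypothetical layer sizes, chosen only to make the arithmetic easy to follow.
print(layer_param_counts(2048, 8192))    # (16777216, 33554432, 16777216)
print(fast_weight_fraction(2048, 8192))  # 0.25
```

Under these assumptions the update touches about a quarter of each layer's weights and leaves attention and the rest of the MLP untouched. The abstract does not say whether every layer gets fast weights; if only some do, the share is smaller still.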

There is also a systems angle. The paper emphasizes compatibility with context parallelism, which suggests the authors are thinking about long-context throughput and implementation practicality, not just algorithmic novelty. For teams working on long-context inference, that is the kind of detail that determines whether a technique stays in the lab or makes it into production experiments.
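One reason a chunk-wise design can suit context parallelism, sketched under a strong assumption: if each chunk's weight delta depends only on that chunk's own data (here a hypothetical Hebbian-style outer-product update, not the paper's rule), devices holding different chunks can compute their deltas independently, and an exclusive prefix sum then gives each chunk the fast weights it should start from. An update whose gradient depends on the current weights would not decompose this cleanly. All function names are hypothetical.

```python
from itertools import accumulate


def chunk_delta(hs, ys, lr):
    """Hypothetical weight-independent update: lr * sum_t outer(y_t, h_t)."""
    d_out, d_in = len(ys[0]), len(hs[0])
    delta = [[0.0] * d_in for _ in range(d_out)]
    for h, y in zip(hs, ys):
        for i in range(d_out):
            for j in range(d_in):
                delta[i][j] += lr * y[i] * h[j]
    return delta


def add(A, B):
    return [[a + b for a, b in zip(ra, rb)] for ra, rb in zip(A, B)]


def start_weights_parallel(W0, chunks, lr):
    """Fast weights at the start of each chunk, via independent deltas + prefix sum."""
    if not chunks:
        return []
    # Each delta uses only its own chunk, so these could run on separate devices.
    deltas = [chunk_delta(hs, ys, lr) for hs, ys in chunks]
    # Exclusive prefix sum: chunk k starts from W0 + deltas[0] + ... + deltas[k-1].
    # Written as a sequential scan here; at scale this step can itself be a parallel scan.
    return list(accumulate(deltas[:-1], add, initial=W0))


def start_weights_sequential(W0, chunks, lr):
    """Reference: walk the chunks in order, updating as we go."""
    W, starts = W0, []
    for hs, ys in chunks:
        starts.append(W)
        W = add(W, chunk_delta(hs, ys, lr))
    return starts


chunks = [([[1.0]], [[2.0]]), ([[3.0]], [[1.0]]), ([[1.0]], [[1.0]])]
print(start_weights_parallel([[0.0]], chunks, lr=0.5))  # [[[0.0]], [[1.0]], [[2.5]]]
```

The sequential reference produces the same starting weights; the difference is only in which parts of the computation have to wait for earlier chunks.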

Still, the paper leaves open several practical questions. The abstract does not tell us the compute overhead of the update step, how stable the fast weights are over long sessions, how often the weights need to change, or what kinds of tasks benefit most. It also does not show whether the method introduces any risk of drift or forgetting during extended inference.
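Until the full paper answers those questions, a deployment experiment could at least watch how far the fast weights wander. The sketch below is a hypothetical guard, not something the paper describes: it measures the relative Frobenius-norm distance between the current fast weights and their pretrained values and resets them past a chosen threshold. Weight distance is only a proxy; it does not measure forgetting directly.

```python
import math


def frobenius(A):
    return math.sqrt(sum(a * a for row in A for a in row))


def relative_drift(W, W_init):
    """||W - W_init|| / ||W_init|| (falls back to the absolute distance if W_init is zero)."""
    diff = [[w - w0 for w, w0 in zip(r, r0)] for r, r0 in zip(W, W_init)]
    base = frobenius(W_init)
    return frobenius(diff) / base if base > 0 else frobenius(diff)


class DriftGuard:
    """Hypothetical session guard: reset fast weights once drift exceeds max_drift."""

    def __init__(self, W_init, max_drift):
        self.W_init = [row[:] for row in W_init]
        self.max_drift = max_drift

    def check(self, W):
        """Return (weights to keep using, whether a reset happened)."""
        if relative_drift(W, self.W_init) > self.max_drift:
            return [row[:] for row in self.W_init], True
        return W, False


guard = DriftGuard([[3.0, 4.0]], max_drift=0.2)
print(guard.check([[3.0, 4.5]]))  # ([[3.0, 4.5]], False)
print(guard.check([[3.0, 6.0]]))  # ([[3.0, 4.0]], True)
```

The threshold is a tuning knob with no value suggested by the abstract; a real experiment would pair it with task-level checks on whether quality actually degrades.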

So the honest takeaway is this: In-Place TTT looks like a serious attempt to make test-time training fit modern LLMs by changing only a small, strategically chosen part of the network and by using a language-model-aligned objective. The abstract claims strong long-context results and better performance than related methods, but the engineering tradeoffs still need the full paper to be understood.

Bottom line

This paper is about making LLMs adapt while they are being used, without requiring full retraining or a custom architecture. The method is simple to describe but meaningful in practice: fast weights in the final MLP projection, a next-token-oriented objective, and a chunk-wise update path that aims to scale.

For developers, the interesting part is not just that test-time training is possible. It is that the authors are trying to make it fit the realities of LLM deployment: long contexts, existing model architectures, and the need for something that can be added rather than rebuilt.
