LLM Biases in Agentic AI Systems

This paper looks at bias in transformer-based agentic AI now used for shopping, video, and navigation tasks.

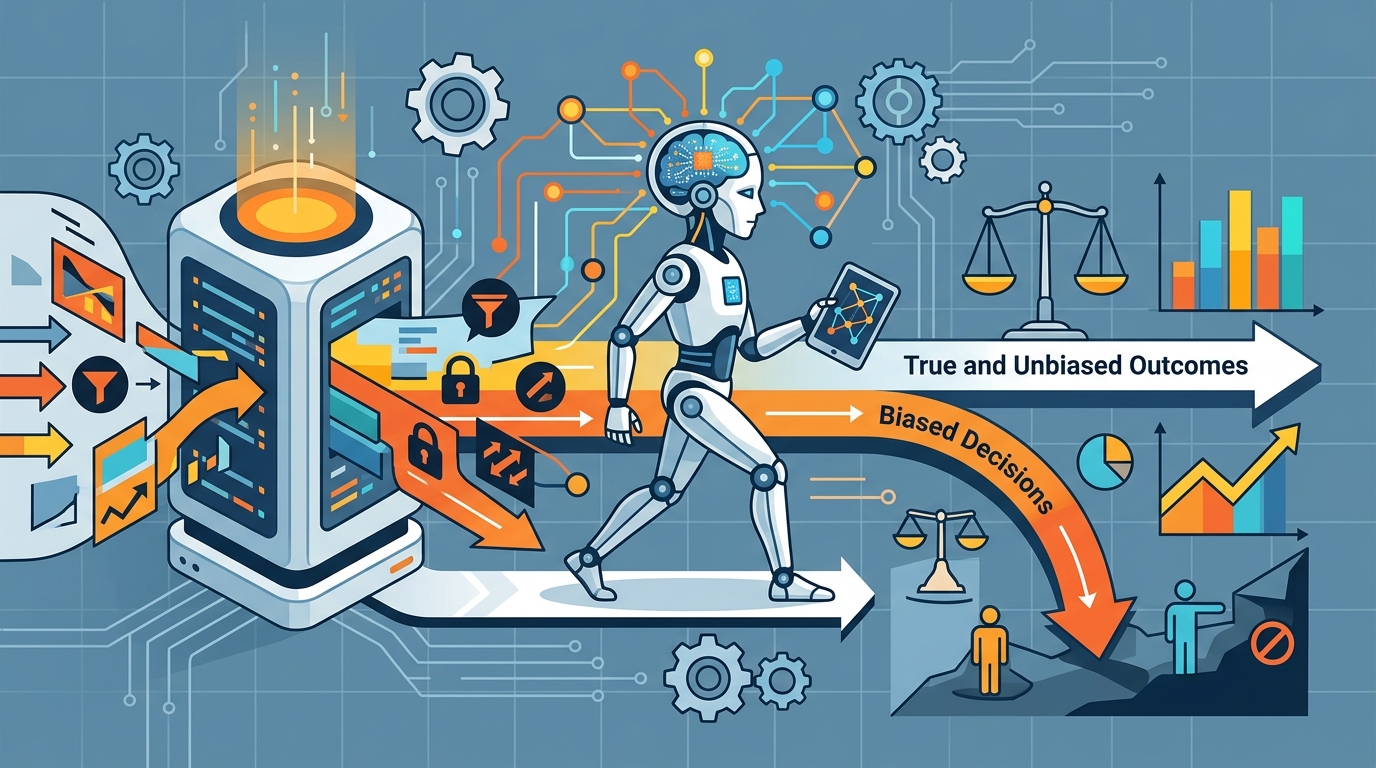

The paper, titled LLM Biases, addresses a problem that matters immediately to anyone building or shipping AI features: transformer-based agentic systems are being deployed on major platforms to help users shop, watch, and navigate content with less effort. That makes their behavior more than a research curiosity. If these systems carry bias into recommendations or actions, they can shape what people see and choose at scale.

The raw abstract is thin, so the safest reading is also the most honest one: this paper is framing bias as a practical issue in agentic LLM systems, not just a theoretical concern. It points to a class of models that are already being used in user-facing workflows, which means the consequences of skewed outputs are likely to show up in product behavior, not just in benchmark tables.

What problem this paper is trying to fix

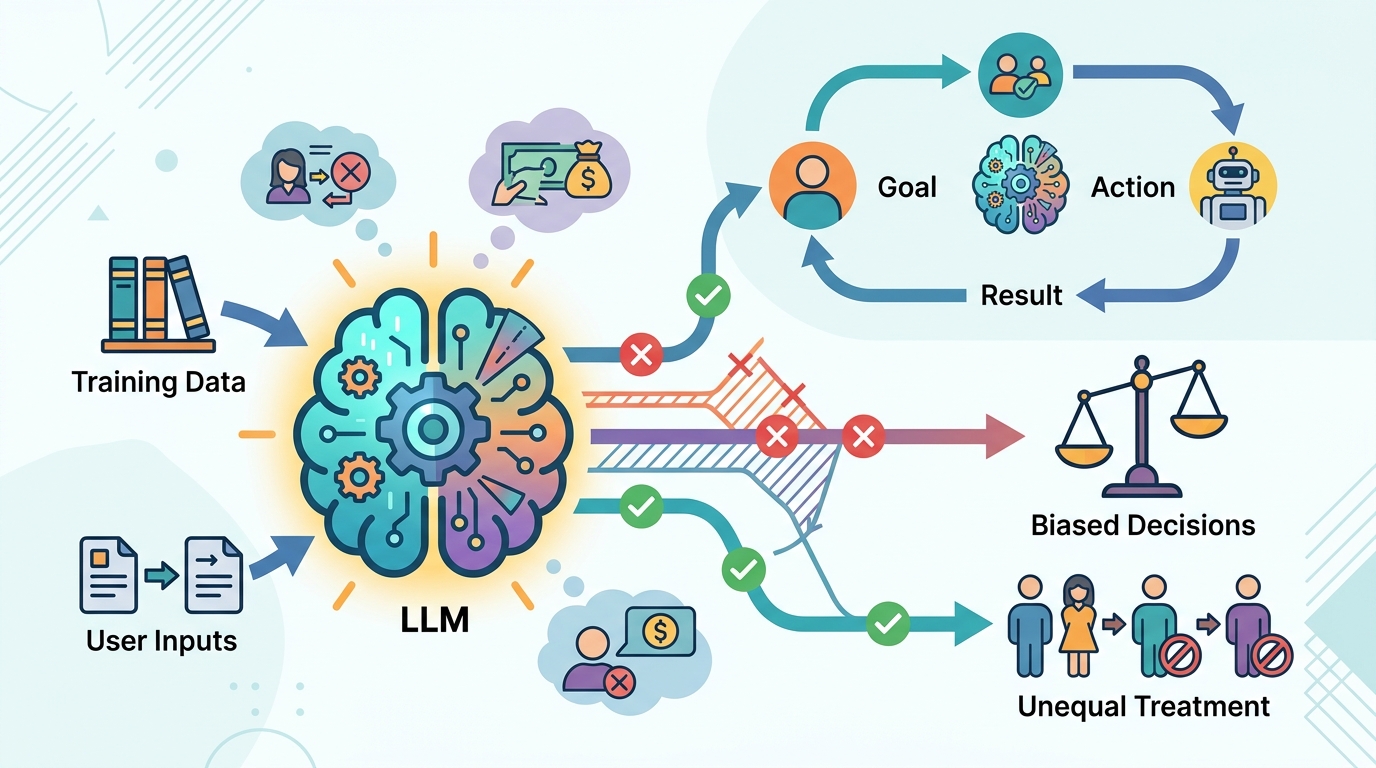

Bias in LLMs is not new, but the setting here is different. The abstract specifically calls out transformer-based agentic AI on major platforms, which suggests systems that do more than answer questions. They help users make decisions, move through interfaces, and discover content. In that environment, bias can affect ranking, suggestions, and the path a user takes through a product.

For developers, that matters because agentic behavior can amplify small model preferences into visible product outcomes. A biased model in a chat demo is one thing. A biased model embedded in shopping or content navigation can influence what gets recommended, what gets skipped, and what options users are nudged toward.
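To make the amplification point concrete, here is a toy sketch, not anything taken from the paper. It assumes a hypothetical agent that scores two options almost equally and compares two selection policies: sampling in proportion to score, and always acting on the top score. The option names and score values are invented for illustration.

```python
def share_if_sampled(scores):
    """Selection share when the agent samples in proportion to score."""
    total = sum(scores.values())
    return {name: s / total for name, s in scores.items()}


def share_if_argmax(scores):
    """Selection share when the agent always acts on the top score."""
    winner = max(scores, key=scores.get)
    return {name: 1.0 if name == winner else 0.0 for name in scores}


# Hypothetical scores: the model only slightly prefers brand_a.
scores = {"brand_a": 0.51, "brand_b": 0.49}

print(share_if_sampled(scores))  # shares stay close to the raw scores
print(share_if_argmax(scores))   # the slight edge becomes every selection
```

Under the sampling policy the two options are chosen at close to their raw scores. Under the always-pick-the-top policy the same small edge decides every selection, which is the kind of jump from model preference to product outcome the paragraph above describes.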

The paper title signals that the central topic is LLM bias itself, but the abstract does not spell out the exact bias type, the evaluation setup, or the target domain beyond those platform examples. So while the problem statement is clear, the source does not give enough detail to say whether the paper is focused on demographic bias, preference bias, safety bias, or some other category.

How the method works in plain English

The abstract does not describe the method, so there is no basis for claiming a specific training recipe, dataset, intervention, or evaluation protocol. That said, the framing tells us the paper is likely treating bias as something that can be observed in agentic behavior, not just in static text generation.

In practical terms, papers in this area usually try to answer questions like: does the model treat different users or content types differently, does it make uneven recommendations, and does its behavior change when it is acting as an assistant rather than a plain chatbot? To be clear, those are questions typical of the field, not confirmed details from this source.

If you are reading this as an engineer, the important takeaway is that “LLM bias” in an agentic setting is probably about end-to-end behavior. That means the evaluation may need to look at the whole loop: prompt, reasoning, action selection, and user-facing output. The abstract does not confirm that, but that is the right mental model for the problem space the paper points at.
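One way to picture whole-loop evaluation is to record each stage of a run and compare runs that differ only in a single user attribute. The sketch below is an assumption-laden illustration, not the paper's method: `AgentTrace`, `counterfactual_traces`, `diverging_stages`, and `stub_agent` are hypothetical names, and the stub stands in for a real agent call.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class AgentTrace:
    """One run of the loop: prompt, reasoning, chosen action, final output."""
    prompt: str
    reasoning: str
    action: str
    output: str


def counterfactual_traces(
    agent: Callable[[str], AgentTrace], template: str, variants: List[str]
) -> Dict[str, AgentTrace]:
    """Run the same task once per variant, changing only the {user} slot."""
    return {v: agent(template.format(user=v)) for v in variants}


def diverging_stages(traces: Dict[str, AgentTrace]) -> List[str]:
    """Return the stages whose values are not identical across all variants."""
    stages = ["reasoning", "action", "output"]
    return [s for s in stages if len({getattr(t, s) for t in traces.values()}) > 1]


def stub_agent(prompt: str) -> AgentTrace:
    """Hypothetical stand-in for a real agent; deliberately skewed for the demo."""
    action = "show_budget" if "student" in prompt else "show_premium"
    return AgentTrace(
        prompt=prompt,
        reasoning="rank by relevance",
        action=action,
        output=f"Top pick via {action}",
    )


traces = counterfactual_traces(
    stub_agent, "A {user} asks for a laptop recommendation.", ["student", "executive"]
)
print(diverging_stages(traces))
```

Exact string comparison is a simplification. Free-text reasoning from a real model will rarely match word for word, so a practical audit would more likely compare structured fields such as the chosen action or the ranked item IDs.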

What the paper actually shows

The source material does not include benchmark numbers, results tables, or concrete performance metrics. It also does not include a summary of experiments, so there is no way to report measured gains, error rates, or comparative results without inventing them.

That absence matters. From the abstract alone, we can say the paper is about the deployment context and the bias concern, but not whether it proposes a fix, identifies a failure mode, or demonstrates a measurable reduction in bias. If you need evidence for adoption decisions, you would have to read the full paper rather than rely on the abstract.

What we can say is that the paper is positioned around a real deployment trend: transformer-based agentic AI is already being used on major platforms. That makes the topic operationally relevant even before any numbers enter the picture. The paper is pointing at a class of systems where bias has direct product consequences.

Why developers should care

For product teams, the key lesson is that bias is not just a model-quality issue; it is a system behavior issue. Once an LLM starts acting as an agent inside a consumer workflow, bias can affect discovery, choice architecture, and user trust. That is especially important when the model is helping people shop, watch, or navigate content.

Developers should also care because agentic systems are harder to reason about than single-turn prompts. A model can appear harmless in isolation and still produce skewed outcomes when it is allowed to choose actions or optimize for a user goal. That means testing needs to happen at the product level, not only at the model API level.

- Audit agentic flows, not just chat responses.

- Check whether recommendations or actions differ across user groups or content types.

- Look for bias introduced by the orchestration layer, not only the base model.

- Validate behavior in realistic product scenarios like shopping and navigation.
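The second check in the list above can be sketched as a simple rate comparison over logged agent decisions. This is a minimal illustration under stated assumptions: the log format of `(user_group, recommended_category)` pairs, the function names, and the sample data are all hypothetical, and a real audit would also need adequate sample sizes and a significance test.

```python
from collections import Counter, defaultdict


def recommendation_rates(logs):
    """Return {group: {category: share of that group's recommendations}}."""
    counts = defaultdict(Counter)
    for group, category in logs:
        counts[group][category] += 1
    rates = {}
    for group, counter in counts.items():
        total = sum(counter.values())
        rates[group] = {cat: n / total for cat, n in counter.items()}
    return rates


def rate_gaps(rates):
    """For each category, the largest between-group difference in share."""
    categories = {cat for shares in rates.values() for cat in shares}
    gaps = {}
    for cat in categories:
        shares = [group_rates.get(cat, 0.0) for group_rates in rates.values()]
        gaps[cat] = max(shares) - min(shares)
    return gaps


# Hypothetical log of (user_group, recommended_category) pairs.
logs = (
    [("group_a", "premium")] * 3 + [("group_a", "budget")] * 1
    + [("group_b", "premium")] * 1 + [("group_b", "budget")] * 3
)

rates = recommendation_rates(logs)
print(rates)
print(rate_gaps(rates))
```

A large gap for a category is a prompt to investigate, not proof of bias on its own, since groups may differ in what they legitimately asked for.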

Limitations and open questions

The biggest limitation here is the source itself: the abstract is too short to reveal the paper’s method, dataset, or findings. There are no benchmark numbers to summarize, no quoted conclusions, and no documented mitigation strategy in the material provided.

That leaves several questions open. What kind of bias is being studied? Is the paper diagnosing an existing problem, proposing a mitigation, or both? Does it focus on a specific platform workflow, or on agentic systems more broadly? None of that is available in the abstract.

Even so, the paper is worth paying attention to because it sits at the intersection of two things developers are already shipping: transformer-based LLMs and agentic user experiences. If bias in those systems is not measured carefully, it can become part of the product behavior users experience every day.

So the practical read is simple: this paper flags bias in deployed agentic AI as an engineering problem, but the abstract does not yet provide the evidence needed to judge a solution. For now, it is a reminder to treat bias testing as part of system design, not an afterthought.
