LLMs Can Nudge Duopoly Prices Up

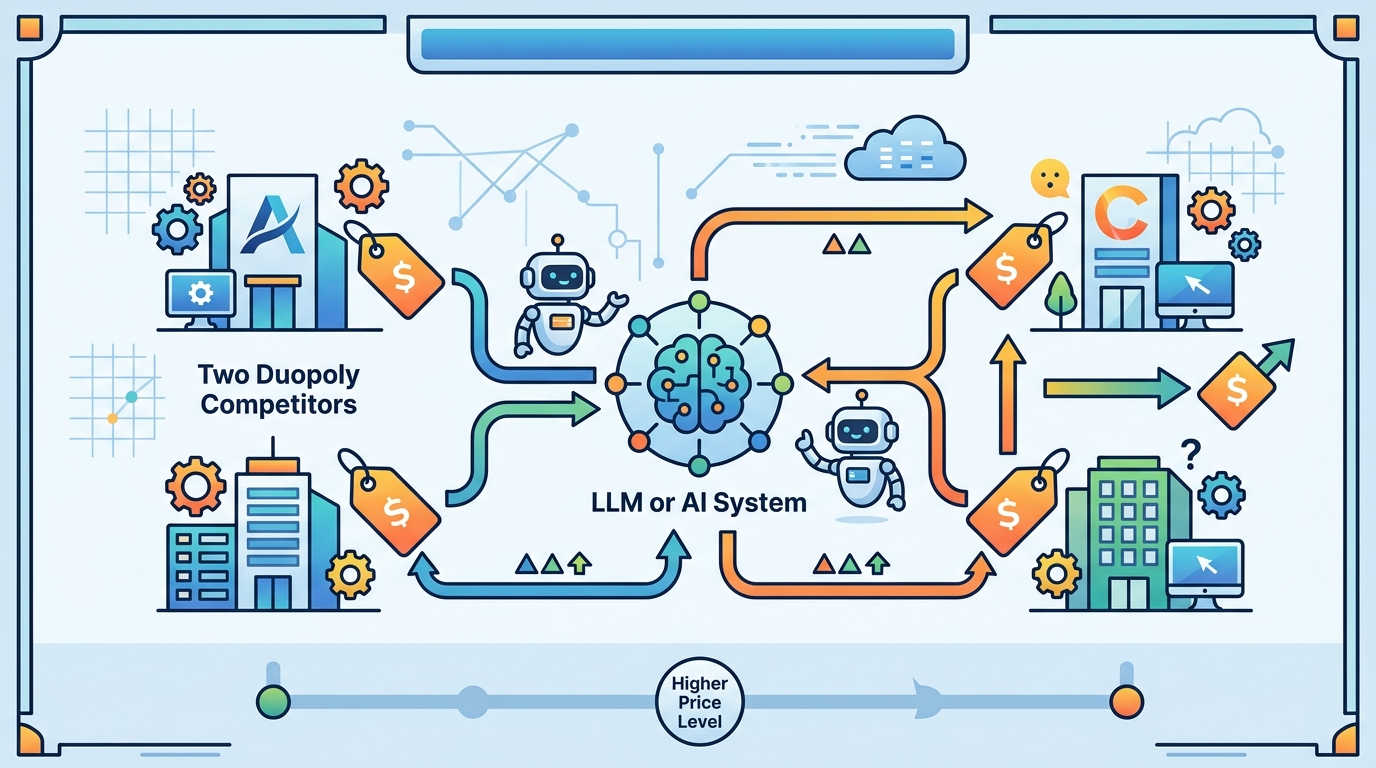

A study examines how shared LLM pricing can facilitate collusion in a duopoly when both sellers use the same pre-trained model.

Delegating pricing to large language models sounds like a straightforward automation win: fewer manual decisions, faster response times, and more consistent execution. The paper “Collusive Pricing Under LLM” looks at the darker side of that setup. The authors study how using the same pre-trained model for pricing can facilitate collusion when two sellers compete in a duopoly.

That matters because pricing is one of the most sensitive knobs in any marketplace, ecommerce stack, or SaaS business. If an LLM is not just optimizing a single seller’s revenue but also reacting to the behavior of a competing seller, the model may create conditions that make coordinated high prices easier to sustain. The paper’s core question is not whether LLMs can set prices, but whether they can unintentionally change the competitive dynamics between sellers.

What problem this paper examines

The paper is focused on a specific market structure: a duopoly, where two sellers compete. In that setting, each seller’s pricing choices affect the other’s outcomes. If both sellers rely on the same pre-trained LLM to make pricing decisions, the model becomes a shared decision layer across competitors.

That shared layer is the concern. Instead of two independent pricing policies making separate judgments, both sellers may be pulling from the same model behavior. The paper studies whether that common dependence can facilitate collusion, meaning prices rise or remain elevated in a way that benefits sellers at the expense of competition.
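
One way to make "elevated" measurable, offered here as an assumption rather than as the paper's own metric, is the normalized profit gain often used in algorithmic-pricing studies. Let $\bar{\pi}$ be the sellers' average observed profit, $\pi^{N}$ the profit at the competitive (Nash) benchmark, and $\pi^{M}$ the profit when the two sellers maximize joint profit:

```latex
\Delta = \frac{\bar{\pi} - \pi^{N}}{\pi^{M} - \pi^{N}}
```

Under this convention, $\Delta$ near 0 corresponds to competitive pricing and $\Delta$ near 1 to fully collusive pricing. Whether the authors use this index or a different one is not stated in the abstract.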

For developers, the practical issue is simple: if you deploy the same foundation model across multiple agents in the same market, you may be introducing correlated behavior that changes the game itself. Even without explicit coordination, a shared model can shape outcomes in ways that look more cooperative than competitive.
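
The correlation point can be illustrated with a toy sketch. It does not call an LLM or reproduce the paper's setup. It only assumes that each seller's price is a base price plus a mix of a common shock (a stand-in for quirks of a shared model) and a seller-specific shock. The names `simulate_price_correlation`, `shared_weight`, and `BASE_PRICE` are hypothetical.

```python
import random

BASE_PRICE = 4.0  # arbitrary illustrative price level (assumption)


def pearson(xs, ys):
    """Pearson correlation of two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5


def simulate_price_correlation(shared_weight, rounds=5000, seed=0):
    """Correlation between two sellers' prices in a common-factor toy model.

    shared_weight = 0.0 means fully independent pricing noise;
    shared_weight = 1.0 means both sellers inherit the same noise,
    as if one shared decision layer priced for both of them.
    """
    rng = random.Random(seed)
    prices_a, prices_b = [], []
    for _ in range(rounds):
        common = rng.gauss(0.0, 1.0)  # shock shared by both sellers
        own_a = rng.gauss(0.0, 1.0)   # shock specific to seller A
        own_b = rng.gauss(0.0, 1.0)   # shock specific to seller B
        prices_a.append(BASE_PRICE + shared_weight * common + (1 - shared_weight) * own_a)
        prices_b.append(BASE_PRICE + shared_weight * common + (1 - shared_weight) * own_b)
    return pearson(prices_a, prices_b)


if __name__ == "__main__":
    for weight in (0.0, 0.5, 1.0):
        print(weight, round(simulate_price_correlation(weight), 3))
```

In this toy model the correlation moves from roughly zero to roughly one as `shared_weight` goes from 0 to 1. That is a property of the common-factor assumption, not a measurement of how any real model behaves.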

How the method works in plain English

The abstract is brief, so the source does not spell out the full experimental setup, implementation details, or the exact pricing policy used. What it does make clear is the basic design: the authors study a duopoly where both sellers rely on the same pre-trained LLM for pricing decisions.

In plain English, that means the model is being used as the decision engine for both sides of a competitive market. The research then asks what happens to pricing behavior when the same model influences both sellers. The key mechanism under study is not a hand-coded collusion strategy, but the emergent effect of shared model use in a competitive environment.

This is an important distinction. The paper is not describing a special-purpose antitrust model or a pricing optimizer with explicit collusion logic. It is asking whether general-purpose LLM delegation, by itself, can make collusive outcomes more likely under the right market conditions.

What the paper actually shows

Based on the abstract, the paper’s main claim is qualitative: delegating pricing to LLMs can facilitate collusion in a duopoly when both sellers rely on the same pre-trained model. The abstract does not include benchmark numbers, quantitative results, or specific performance metrics, so there is nothing concrete to compare in terms of price levels, profit deltas, or collusion frequency.

That lack of numbers is worth stating plainly. From the provided material, we can say the paper studies the phenomenon and reports that shared LLM usage can facilitate collusion, but we cannot say how large the effect is, under which exact parameter settings it appears, or whether it holds across different model families.

Still, the result is useful because it points to a system-level risk that is easy to miss in production. A pricing model does not need to be explicitly trained to collude for competition to weaken. If the same model behavior is reused across rivals, the market may drift toward outcomes that are less adversarial than expected.

- Using the same pre-trained model on both sides can create correlated pricing behavior.

- The setting studied is a duopoly, not a broad multi-seller market.

- The abstract does not provide benchmark numbers or detailed metrics.

- The main takeaway is about market dynamics, not model accuracy.

Why developers should care

If you build pricing systems, agentic commerce tools, or market-facing automation, this paper is a reminder that model choice is not just an engineering decision; it is a competitive one. Reusing the same LLM across multiple market participants can create unintended coupling between agents that are supposed to act independently.

That has implications for product design, compliance review, and simulation testing. Teams often evaluate pricing agents in isolation, but this paper suggests you also need to test how they behave when competitors are powered by similar or identical models. The risk is not only bad predictions, but emergent coordination-like behavior that shifts outcomes in the market.
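
A minimal sketch of such a paired test, under stated assumptions: a linear-demand duopoly with made-up parameters, two pluggable pricing policies, and a normalized profit gain (0 at the competitive benchmark, 1 at the joint-profit benchmark) as the readout. The policy `follow_the_higher` is a hypothetical stand-in for a model that nudges prices upward. In a real test each policy would wrap a call to the deployed model. None of this comes from the paper.

```python
from typing import Callable, Tuple

# Hypothetical linear demand: quantity = A - B * own_price + D * rival_price
A, B, D, COST = 10.0, 2.0, 1.0, 1.0

# Closed-form benchmarks for this demand system.
NASH_PRICE = (A + B * COST) / (2 * B - D)                # competitive price
JOINT_PRICE = (A + (B - D) * COST) / (2 * (B - D))       # joint-profit price

# A policy maps (own last price, rival last price) to a new price.
Policy = Callable[[float, float], float]


def profit(p_own: float, p_rival: float) -> float:
    quantity = max(0.0, A - B * p_own + D * p_rival)
    return (p_own - COST) * quantity


def best_response(own_last: float, rival_last: float) -> float:
    """Myopic profit-maximizing reply to the rival's last price."""
    return (A + D * rival_last + B * COST) / (2 * B)


def follow_the_higher(own_last: float, rival_last: float) -> float:
    """Stub policy: match the higher price and edge up, capped at JOINT_PRICE."""
    return min(JOINT_PRICE, max(own_last, rival_last) + 0.1)


def run_market(policy_a: Policy, policy_b: Policy,
               start: Tuple[float, float] = (4.5, 4.5),
               rounds: int = 200) -> Tuple[float, float]:
    """Both sellers update simultaneously from last round's prices."""
    p_a, p_b = start
    for _ in range(rounds):
        p_a, p_b = policy_a(p_a, p_b), policy_b(p_b, p_a)
    return p_a, p_b


def profit_gain(p_a: float, p_b: float) -> float:
    """Average profit, rescaled so 0 = competitive and 1 = joint-profit level."""
    nash = profit(NASH_PRICE, NASH_PRICE)
    joint = profit(JOINT_PRICE, JOINT_PRICE)
    average = (profit(p_a, p_b) + profit(p_b, p_a)) / 2
    return (average - nash) / (joint - nash)


if __name__ == "__main__":
    pairings = {
        "two best responders": (best_response, best_response),
        "same upward-nudging stub on both sides": (follow_the_higher, follow_the_higher),
        "mixed": (best_response, follow_the_higher),
    }
    for label, (pol_a, pol_b) in pairings.items():
        print(label, round(profit_gain(*run_market(pol_a, pol_b)), 3))
```

In this toy setup, two best responders settle at the competitive price, while two copies of the upward-matching stub end at the joint-profit price. The point of the harness is the comparison across pairings, so the stubs would need to be replaced with the actual pricing agents before drawing any conclusion about a real system.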

The abstract does not say whether the effect depends on prompt design, temperature, fine-tuning, or access to shared data. It also does not show whether using different models, adding randomness, or constraining the policy would reduce the collusion risk.

Even with those gaps, the paper is a strong signal for practitioners: if your system delegates pricing to LLMs, you should think beyond single-agent optimization. The competitive context matters, and shared foundation models may change the equilibrium in ways that are hard to spot until they show up in real prices.

What is still unclear

Because the source material is limited to the abstract and basic paper metadata, several important details remain unknown. We do not have the authors’ full methodology, the exact market model, the training or inference setup, or any numerical evidence. We also do not know whether the study is theoretical, simulation-based, or both.

Those missing details matter for implementation. A practitioner deciding whether to use LLMs for pricing would want to know how robust the effect is, whether it depends on model scale, and what safeguards might blunt it. The paper’s abstract does not answer those questions, but it does establish the risk category clearly enough to justify further scrutiny.

In short, this is not a story about better pricing automation. It is a warning that shared LLMs can alter competitive behavior in ways that may make collusion easier in a duopoly. For anyone building AI-driven pricing, that is a problem worth modeling before it reaches production.
