Microsoft’s GoalCover finds fine-tuning gaps

Microsoft Research’s GoalCover spots missing capabilities in fine-tuning data before training starts, and filtering with it improved reward scores on Qwen-3-14B.


Fine-tuning data can look complete on paper and still miss the skills a model needs in production. In a new Microsoft Research paper published in April 2026, the team reports that GoalCover caught those gaps early and improved a financial-summarization reinforcement fine-tuning run on Qwen-3-14B.

| Metric | Result | Context |
|---|---|---|
| Target subgoal degradation | 25.6% | Average drop in controlled corruption tests |
| Non-target subgoal degradation | 2.1% | Average drop in the same tests |
| Cohen’s d | 1.24 | Separation between targeted and non-targeted impacts |
| LLM-judge reward (5-point scale) | 3.77 → 4.12 | Unfiltered data versus GoalCover-filtered data |
| Best LLM-judge reward (5-point scale) | 4.20 | Filtered data plus goal-conditioned synthetic samples |

What GoalCover is trying to solve


Anyone who has trained a domain model knows the usual pain point: the dataset looks large enough, but the model still misses a few behaviors that matter in the real task. Microsoft Research says that identifying those missing capabilities before an expensive fine-tuning run is still mostly manual work, and often too late.

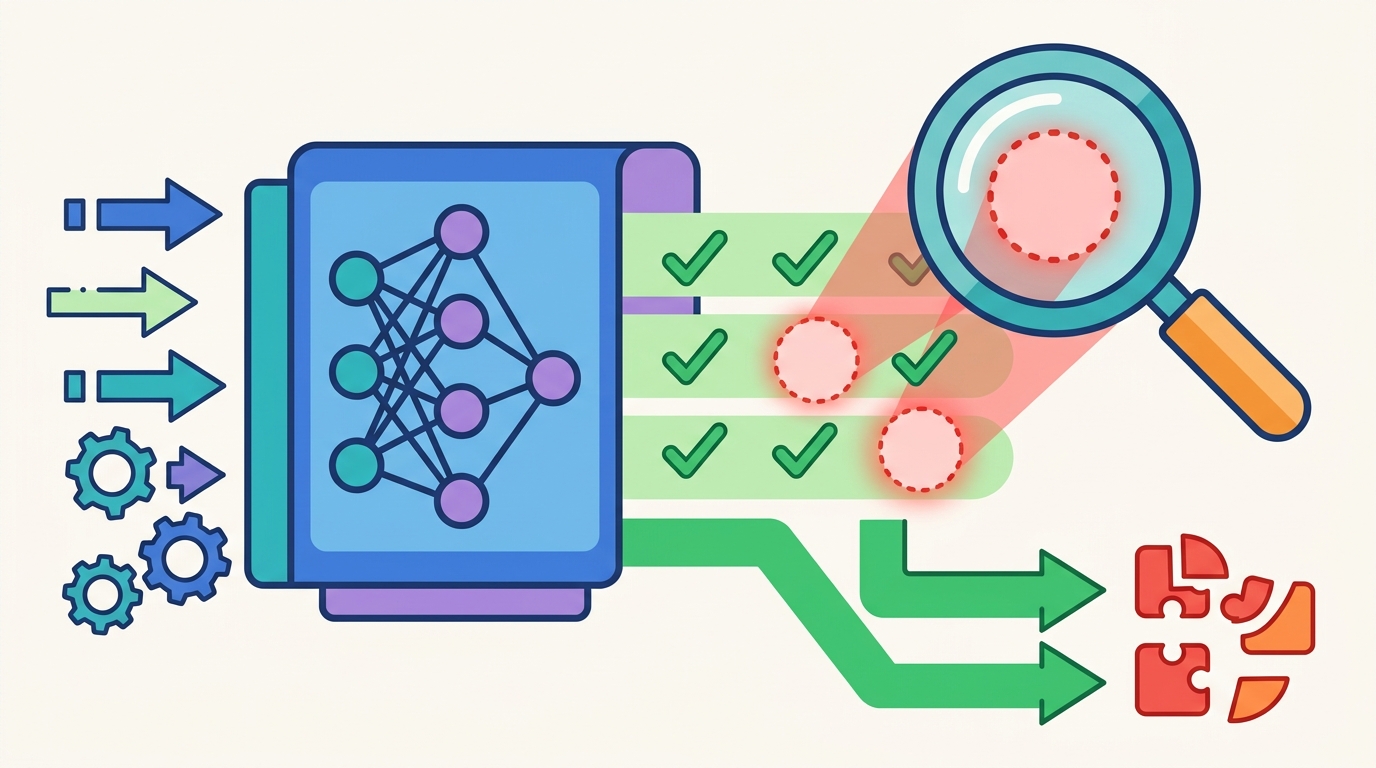

GoalCover is the team’s answer. The framework asks a practitioner to break a high-level goal into smaller subgoals that can be checked one by one, then scores each training example against each subgoal with an LLM-based alignment method. That gives the trainer a map of where the dataset is strong and where it is thin.

The paper frames this as a pre-fine-tuning diagnostic, which is a useful way to think about it. Instead of discovering weak spots after a model underperforms, GoalCover tries to surface them while the data is still being assembled.

- It turns one broad task into atomic subgoals.

- It scores each sample against each subgoal.

- It surfaces low scores and the explanations behind them to reveal missing coverage.

- It helps teams decide whether to collect more data, filter what they have, or generate synthetic samples.
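
The scoring step above can be pictured as a sample-by-subgoal matrix. The following is a minimal sketch of that idea, not the paper's implementation: the function names (`keyword_scorer`, `score_matrix`, `coverage_by_subgoal`) and the example subgoals are hypothetical, and the keyword-overlap scorer is only a stand-in for the LLM-based alignment method, which the article does not specify in detail.

```python
import re
from typing import Callable, Dict, List, Set

# Hypothetical scorer signature: (sample_text, subgoal) -> score in [0, 1].
# GoalCover uses an LLM-based alignment method here; this sketch does not.
Scorer = Callable[[str, str], float]


def _tokens(text: str) -> Set[str]:
    """Lowercase alphabetic tokens, so trailing punctuation is ignored."""
    return set(re.findall(r"[a-z]+", text.lower()))


def keyword_scorer(sample: str, subgoal: str) -> float:
    """Toy stand-in: fraction of subgoal words that appear in the sample."""
    goal = _tokens(subgoal)
    if not goal:
        return 0.0
    return len(goal & _tokens(sample)) / len(goal)


def score_matrix(samples: List[str], subgoals: List[str],
                 scorer: Scorer) -> List[List[float]]:
    """One row per sample, one column per subgoal."""
    return [[scorer(s, g) for g in subgoals] for s in samples]


def coverage_by_subgoal(matrix: List[List[float]],
                        subgoals: List[str]) -> Dict[str, float]:
    """Mean score per subgoal; low values mark thin coverage."""
    if not matrix:
        return {g: 0.0 for g in subgoals}
    n = len(matrix)
    return {g: sum(row[j] for row in matrix) / n
            for j, g in enumerate(subgoals)}


# Illustrative data only; these subgoals are not from the paper.
subgoals = ["report revenue figures", "flag risk factors"]
samples = [
    "Summarize the filing and report revenue figures.",
    "Report revenue figures for the third quarter.",
    "Flag risk factors in the outlook section.",
]
matrix = score_matrix(samples, subgoals, keyword_scorer)
for goal, cov in coverage_by_subgoal(matrix, subgoals).items():
    print(f"{goal}: {cov:.2f}")
```

Swapping `keyword_scorer` for a function that calls a model would leave the rest of the sketch unchanged, which is the point of keeping the scorer behind a plain callable.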

Why the evaluation matters

Microsoft Research validated GoalCover in two ways. The first was a set of controlled corruption experiments across three domains reported in the paper: medical QA, legal summarization, and code generation. The second was a real downstream fine-tuning task in financial summarization.

The corruption tests are the more interesting signal for methodology. GoalCover did what a good diagnostic should do: it separated the intended failure mode from the noise around it. Target subgoals dropped by 25.6% on average, while non-target subgoals fell by just 2.1%. With a reported Cohen’s d of 1.24 between targeted and non-targeted impacts, that gap is large enough to suggest the framework is detecting something meaningful, not just reacting to random variance.
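
To make the separation statistic concrete, here is a minimal sketch of how a relative drop and a Cohen's d could be computed from per-experiment scores. It assumes the standard pooled-standard-deviation form of Cohen's d; the article does not say which variant the paper uses, and the helper names and the sample numbers are hypothetical.

```python
from math import sqrt
from statistics import mean, variance
from typing import Sequence


def relative_drop(before: float, after: float) -> float:
    """Fractional drop in a subgoal score after corrupting the data."""
    return (before - after) / before


def cohens_d(a: Sequence[float], b: Sequence[float]) -> float:
    """Cohen's d with a pooled sample standard deviation (assumed variant)."""
    na, nb = len(a), len(b)
    pooled_var = ((na - 1) * variance(a) + (nb - 1) * variance(b)) / (na + nb - 2)
    return (mean(a) - mean(b)) / sqrt(pooled_var)


# Made-up drops for illustration; these are NOT the paper's measurements.
target_drops = [0.30, 0.22, 0.27, 0.19]
non_target_drops = [0.03, 0.01, 0.04, 0.02]
print(f"d = {cohens_d(target_drops, non_target_drops):.2f}")
```

A larger d means the drops on targeted subgoals stand further apart from the drops on untouched ones, relative to how much both groups vary.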

“We introduce GoalCover, a framework that helps practitioners systematically detect capability gaps in fine-tuning datasets through interactive goal decomposition and automated coverage assessment.”

That quote comes from the paper itself, and it captures the core idea better than any marketing copy could. The value here is not a new training recipe. It is a diagnostic layer that sits before training and tells you what your data is missing.

For teams spending money on fine-tuning, that matters because the cost of a bad run is not just compute. It is also the time spent debugging a model that never had the right examples in the first place.

The numbers behind the Qwen-3-14B result

The downstream test is where the framework starts to look practical. On a financial-summarization reinforcement fine-tuning task, GoalCover-filtered data improved the LLM-judge reward from 3.77 to 4.12 on a 5-point scale. When the team combined filtered data with goal-conditioned synthetic samples, the score rose to 4.20.

Those gains are modest in absolute terms (0.35 points from filtering, 0.43 once synthetic samples are added), but they matter because they come from a workflow decision rather than a larger model or a bigger budget. The gain came from better data selection and better coverage, not from scaling parameters or adding more training steps.

- Unfiltered baseline: 3.77 reward

- GoalCover-filtered data: 4.12 reward

- Filtered data plus synthetic samples: 4.20 reward

- Tested on a financial-summarization RFT task

The paper also matters because it connects diagnosis to action. A lot of data tooling can tell you that a dataset is messy. Fewer tools can tell you which capability is weak and what kind of sample would help fix it. GoalCover tries to close that loop.

That is where the framework is more interesting than a standard data filter. It does not just remove low-quality examples. It gives you a reason for the removal and a path for replacing the missing coverage.

How this compares with normal fine-tuning workflow

In a typical fine-tuning pipeline, teams sample data, label it, train, then inspect failures after the model ships or after a validation run exposes a blind spot. GoalCover flips part of that process by making capability coverage visible before training begins.

That shift matters most in regulated or high-stakes domains, where missing one subskill can wreck the whole deployment. Medical QA, legal summarization, and financial summarization all reward precision, and all punish broad but shallow training sets.

Microsoft Research’s result suggests a practical workflow for teams building domain models with tools from Microsoft Research, Hugging Face, and open-source model families such as Qwen:

- Define the target task as a set of atomic subgoals.

- Score the dataset against each subgoal before training.

- Filter or augment the weak areas.

- Run the fine-tuning job only after coverage looks balanced.
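
The four steps above can be sketched as a simple gate in front of the training job. This is an illustration under stated assumptions, not GoalCover's actual procedure: the thresholds, the "best score per sample" filtering rule, and all function names are hypothetical, since the article does not describe how the paper filters samples or decides that coverage is balanced.

```python
from typing import Dict, List


def weak_subgoals(coverage: Dict[str, float], threshold: float) -> List[str]:
    """Subgoals whose mean coverage falls below a chosen threshold."""
    return [goal for goal, cov in coverage.items() if cov < threshold]


def filter_samples(samples: List[str], scores: List[List[float]],
                   min_best_score: float) -> List[str]:
    """Keep samples that align well with at least one subgoal (assumed rule)."""
    return [s for s, row in zip(samples, scores) if max(row) >= min_best_score]


def ready_to_train(coverage: Dict[str, float], threshold: float) -> bool:
    """Gate the fine-tuning job on every subgoal clearing the threshold."""
    return not weak_subgoals(coverage, threshold)


# Illustrative values only; thresholds would need tuning per task.
coverage = {"report revenue figures": 0.67, "flag risk factors": 0.33}
gaps = weak_subgoals(coverage, threshold=0.5)
if gaps:
    print("Collect or synthesize data for:", ", ".join(gaps))
else:
    print("Coverage looks balanced; start the fine-tuning run.")
```

Keeping the gate as a separate function gives data teams and model trainers one explicit place to agree on what "balanced" means for a given task.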

That is a cleaner process than throwing a large pile of examples at a model and hoping evaluation catches the misses. It also gives data teams a concrete artifact to discuss with model trainers, product owners, and domain specialists.

What this means for fine-tuning teams

The main takeaway is simple: better fine-tuning is often a data diagnosis problem, not a model architecture problem. GoalCover gives teams a way to inspect coverage before they burn compute, and the paper shows that the signal can translate into better downstream reward.

If Microsoft Research keeps pushing this line of work, the next question is whether GoalCover can generalize across more tasks, more annotation styles, and more model families without becoming expensive to run. For now, the evidence says teams should treat capability coverage as a first-class metric, especially when the dataset is small, specialized, or expensive to replace.

For developers building custom LLM workflows, the practical move is to ask a harder question before training: which subskills does this dataset actually teach, and which ones are still missing?
