OpenClaw Bug Lets Websites Hijack AI Agents

Oasis found a flaw in OpenClaw that lets any website take over a local agent. Update to version 2026.2.25 or later now.

Oasis Security says a flaw in OpenClaw let a website take over a local AI agent with no extension, plugin, or user click. The company’s researchers say the attack worked from a browser tab against the agent’s localhost gateway, and the fix shipped in version 2026.2.25.

The timing matters because OpenClaw moved fast from curiosity to daily tool. Oasis says the project passed 100,000 GitHub stars in five days, and that kind of growth usually means one thing for security teams: adoption outruns review.

If your team runs OpenClaw, the practical advice is simple. Update to version 2026.2.25 or later immediately, then check what that agent can reach on your machines, in your chat apps, and in your cloud accounts.

What OpenClaw is, and why this bug mattered

OpenClaw is an open-source AI agent that runs locally and can send messages, run commands, and coordinate tasks across apps and devices. Its appeal is obvious to developers: install it, connect it to your tools, and let it handle repetitive work while you keep coding.

That convenience creates a wide blast radius when the agent trusts the wrong thing. Oasis says OpenClaw’s gateway listened on localhost and treated local connections as safe. That assumption broke down because browsers can open WebSocket connections to localhost from a normal webpage.

In other words, a malicious site did not need a browser extension, a fake login page, or any social engineering beyond getting someone to visit it. The page could talk to the local gateway directly.

- OpenClaw’s gateway runs as a local WebSocket server.

- The gateway auto-approved new device pairings from localhost.

- Local connections were exempt from rate limiting.

- Oasis says the project had over 100,000 GitHub stars in five days.

That combination is what made the flaw so dangerous. A local service that assumes localhost equals trust is fine until a browser becomes the attacker’s bridge into the machine.

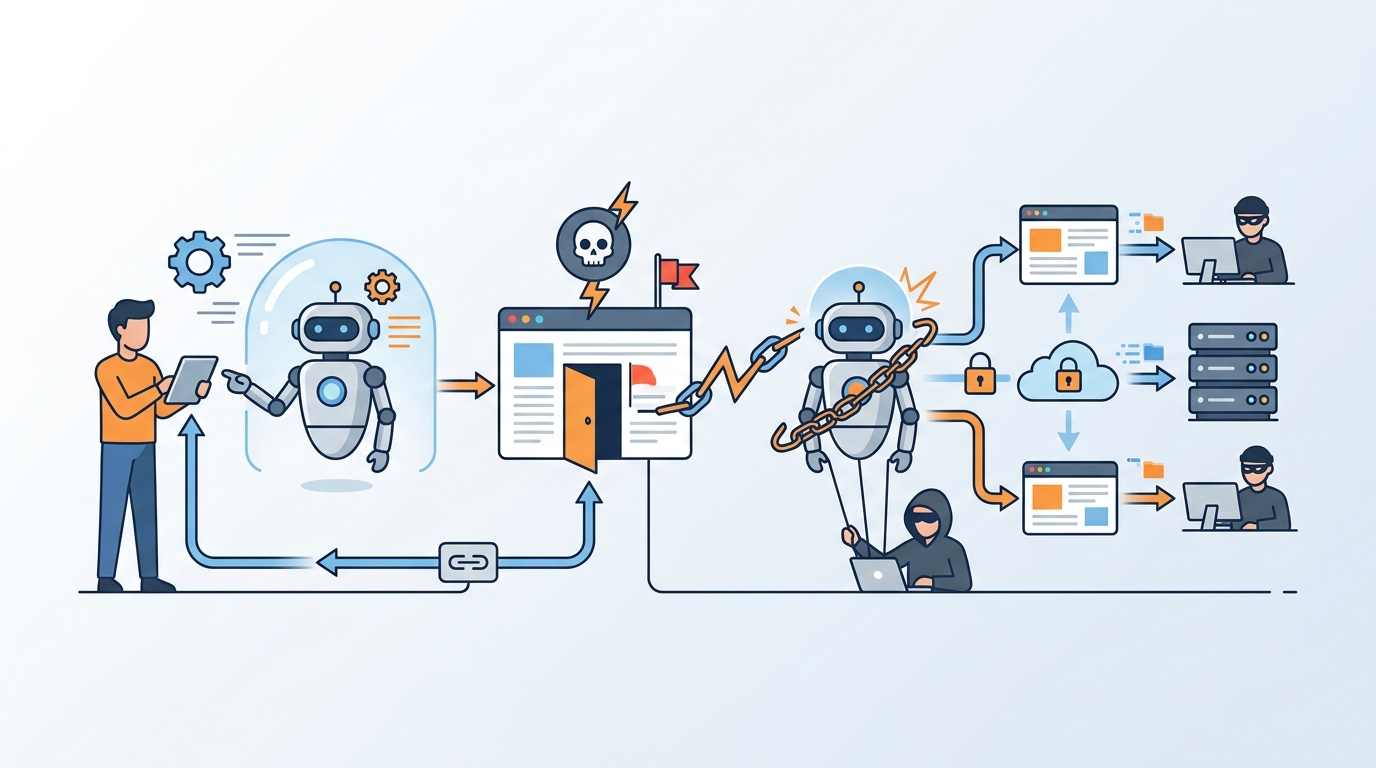

How the takeover chain worked

Oasis describes an attack chain that begins with a visit to any attacker-controlled or compromised website. JavaScript on that page opens a WebSocket connection to the OpenClaw gateway on localhost. Browsers do not apply the same-origin policy to WebSocket connections the way they do to most cross-origin HTTP requests; it is up to the server to inspect the handshake's Origin header and refuse origins it does not expect. As a result, the page can probe the local service without the user seeing anything obvious.
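One common defense against this class of attack is for the local server to check the `Origin` header that browsers attach to every WebSocket handshake. The sketch below is not OpenClaw's code or its actual fix; the allowlist contents, the port, and the function names are all hypothetical, and it only illustrates the idea that "came from localhost" and "came from a trusted page" are different questions.

```python
from typing import Iterable, Optional
from urllib.parse import urlsplit

# Hypothetical allowlist: the origins a local UI is expected to be served from.
ALLOWED_ORIGINS = frozenset({"http://localhost:3000", "http://127.0.0.1:3000"})


def normalize_origin(origin: str) -> Optional[str]:
    """Return scheme://host[:port] in lowercase, or None if the value is malformed."""
    try:
        parts = urlsplit(origin)
        port = parts.port  # raises ValueError on a non-numeric or out-of-range port
    except ValueError:
        return None
    if parts.scheme not in ("http", "https") or not parts.hostname:
        return None
    suffix = f":{port}" if port is not None else ""
    return f"{parts.scheme}://{parts.hostname}{suffix}"


def is_origin_allowed(
    origin_header: Optional[str],
    allowed: Iterable[str] = ALLOWED_ORIGINS,
    allow_missing: bool = False,
) -> bool:
    """Decide whether a WebSocket handshake should proceed, based on its Origin.

    Browsers always send Origin on WebSocket handshakes, so a missing header
    suggests a non-browser client. Whether to accept those is a policy choice
    (assumed to be "no" by default here); either way, authentication still applies.
    """
    if origin_header is None:
        return allow_missing
    normalized = normalize_origin(origin_header)
    return normalized is not None and normalized in set(allowed)
```

With this check in place, a handshake from a page on an unrelated origin is refused before any password attempt is processed, even though the TCP connection itself arrives from the loopback address.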

From there, the script brute-forces the gateway password at high speed. Oasis says the rate limiter ignored localhost entirely, so failed attempts were not throttled. Once the password was guessed, the script could register itself as a trusted device without a confirmation prompt.
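To make the rate-limiting gap concrete, here is a minimal sketch of a failed-attempt limiter that has no loopback exemption. It is an illustration under assumptions, not the project's implementation: the class name, the thresholds, and the choice to key on client address are all hypothetical.

```python
import time
from collections import defaultdict, deque


class FailedLoginLimiter:
    """Sliding-window limiter on failed attempts, applied to every client address."""

    def __init__(self, max_failures=5, window_seconds=60.0, clock=time.monotonic):
        self.max_failures = max_failures
        self.window_seconds = window_seconds
        self.clock = clock  # injectable so the behavior can be tested without sleeping
        self._failures = defaultdict(deque)

    def _prune(self, client):
        """Drop failures that have aged out of the window and return the live queue."""
        cutoff = self.clock() - self.window_seconds
        queue = self._failures[client]
        while queue and queue[0] <= cutoff:
            queue.popleft()
        return queue

    def is_blocked(self, client):
        """True when the client has used up its failure budget for the window."""
        return len(self._prune(client)) >= self.max_failures

    def record_failure(self, client):
        """Record one failed authentication attempt for this client."""
        self._prune(client).append(self.clock())
```

The point of the design is what is absent: there is no branch that returns early for `127.0.0.1` or `::1`. Every browser tab on the machine shares the loopback address, so a script hammering the gateway from a webpage exhausts the same budget as any other local caller and is locked out after a handful of guesses.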

At that point, the attacker had what amounted to admin access to the agent. Oasis says the script could send messages to the AI, dump configuration data, enumerate connected nodes, and read logs. In a typical setup, that can expose API keys, private messages, and commands that reach other devices.

“The user sees nothing.”

That line from Oasis captures the core problem better than a hundred architecture diagrams. The attack did not rely on malware already living on the machine. It used the browser as a delivery path and the agent’s own trust model as the entry point.

This is also why local-first software can become dangerous when it grows into an automation hub. Once an agent can read messages, issue commands, and reach multiple devices, a local vulnerability stops being local.

Why this is different from the ClawHub supply-chain mess

OpenClaw had already been under scrutiny because of its community marketplace, ClawHub. Oasis says researchers found more than 1,000 malicious skills there, including fake crypto and productivity tools that installed info-stealers and backdoors. That was a supply-chain problem: untrusted plugins sneaking into the ecosystem.

The new flaw is worse in a different way because it lived in the core product. No plugin was needed. No marketplace install was needed. No user had to approve a suspicious extension. The attack worked against the base OpenClaw gateway running exactly as documented.

For security teams, that distinction matters. Supply-chain abuse is often caught by software review, package controls, or marketplace policy. A bug in the core trust model needs code changes and a tighter operational setup.

- ClawHub issue: malicious third-party skills and plugins.

- Core bug: website-to-local takeover through the gateway itself.

- Impact: the first problem poisoned the skills ecosystem; the second let an attacker control the agent itself.

- Fix speed: OpenClaw shipped a patch within 24 hours, according to Oasis.

There is also a broader lesson here about shadow AI. Tools like OpenClaw often appear on developer laptops before IT knows they exist. They connect to Slack, calendars, model providers, and shell access, which means one overlooked local agent can become the softest target on the endpoint.

What the patch changes, and what teams should do next

Oasis says OpenClaw’s team classified the issue as high severity and released a fix within 24 hours. The patched version is 2026.2.25, and that is the first place teams should look if they want to confirm they are protected.
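For teams scripting that check across many machines, a small comparison helper can flag unpatched installs. The sketch below assumes version strings are dot-separated integers like `2026.2.25`; how the installed version is obtained is deliberately left out, and the function names are hypothetical.

```python
# First fixed release, per Oasis.
PATCHED_VERSION = (2026, 2, 25)


def parse_version(text):
    """Parse a string such as '2026.2.25' or 'v2026.2.25' into a tuple of ints.

    Assumes purely numeric, dot-separated components; anything else
    (for example a '-beta' suffix) raises ValueError rather than being guessed at.
    """
    return tuple(int(part) for part in text.strip().lstrip("v").split("."))


def is_patched(installed):
    """True if the installed version is 2026.2.25 or later."""
    return parse_version(installed) >= PATCHED_VERSION
```

Comparing integer tuples rather than raw strings matters here: as text, `"2026.10.1"` sorts before `"2026.2.25"`, which would wrongly mark a later release as vulnerable.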

But patching alone is only part of the job. If an AI agent can authenticate, hold secrets, and act on a user’s behalf, it needs the same kind of review you would give a privileged service account. That means checking access, limiting scope, and knowing which machine or human owns the identity.

Here is the short version of the response plan:

- Inventory every OpenClaw install across developer machines and test systems.

- Upgrade to version 2026.2.25 or later.

- Audit connected services, stored credentials, and paired devices.

- Restrict what the agent can do when it reaches Slack, GitHub, calendars, or shells.

- Track agent actions with logs that link human intent to machine execution.

That last point is the one many teams miss. If an agent can take action on a user’s behalf, security teams need an audit trail that shows who asked for the action, what the agent tried to do, and what system it touched. Without that, incident response turns into guesswork.
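As a rough sketch of what such a trail could record, the example below writes one JSON line per agent action. The field names and sample values are hypothetical and not taken from OpenClaw or Oasis; the only idea carried over from the text is that each entry ties a human requester to the agent's action and the system it touched.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AgentAuditRecord:
    requested_by: str   # the human (or upstream identity) who asked for the action
    agent_id: str       # which agent instance acted
    action: str         # what the agent tried to do
    target_system: str  # which system it touched
    outcome: str        # for example "allowed", "denied", or "failed"
    timestamp: str      # ISO 8601, UTC


def make_record(requested_by, agent_id, action, target_system, outcome, now=None):
    """Build a record, stamping it with the current UTC time unless one is supplied."""
    now = now or datetime.now(timezone.utc)
    return AgentAuditRecord(
        requested_by=requested_by,
        agent_id=agent_id,
        action=action,
        target_system=target_system,
        outcome=outcome,
        timestamp=now.isoformat(),
    )


def to_json_line(record):
    """Serialize a record as a single JSON line suitable for an append-only log."""
    return json.dumps(asdict(record), sort_keys=True)
```

One line per action keeps the log easy to append to and easy to search during an incident, and recording denied or failed attempts alongside successful ones helps show what an attacker tried, not only what worked.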

Oasis also uses this incident to push a wider point about non-human identities. AI agents are no longer just apps. They authenticate, store secrets, and make decisions. Treating them like ordinary desktop software is how teams end up with a browser tab controlling a workstation.

What this bug says about the next wave of agent security

The OpenClaw flaw is a clean example of where AI tooling is heading: more autonomy, more local privilege, more ways for a simple browser visit to turn into a full compromise. The next few months will probably bring more bugs like this, because the pressure to ship agent features is moving faster than the habits that keep them safe.

My read is that the winners here will be teams that stop thinking of agents as convenience tools and start treating them as identities with permissions. If an agent can read messages, run commands, and touch secrets, then it needs policy checks, short-lived access, and clear ownership before it ever gets near production laptops.

The practical question for teams is not whether to use agents. It is whether you can answer, today, which ones are running on developer machines and what they can reach. If you cannot answer that, OpenClaw is the warning shot.
