PARNESS automates scientific research workflows

PARNESS is a paper harness for automated scientific research, with dynamic workflows, full-text indexing, and cross-run knowledge accumulation.

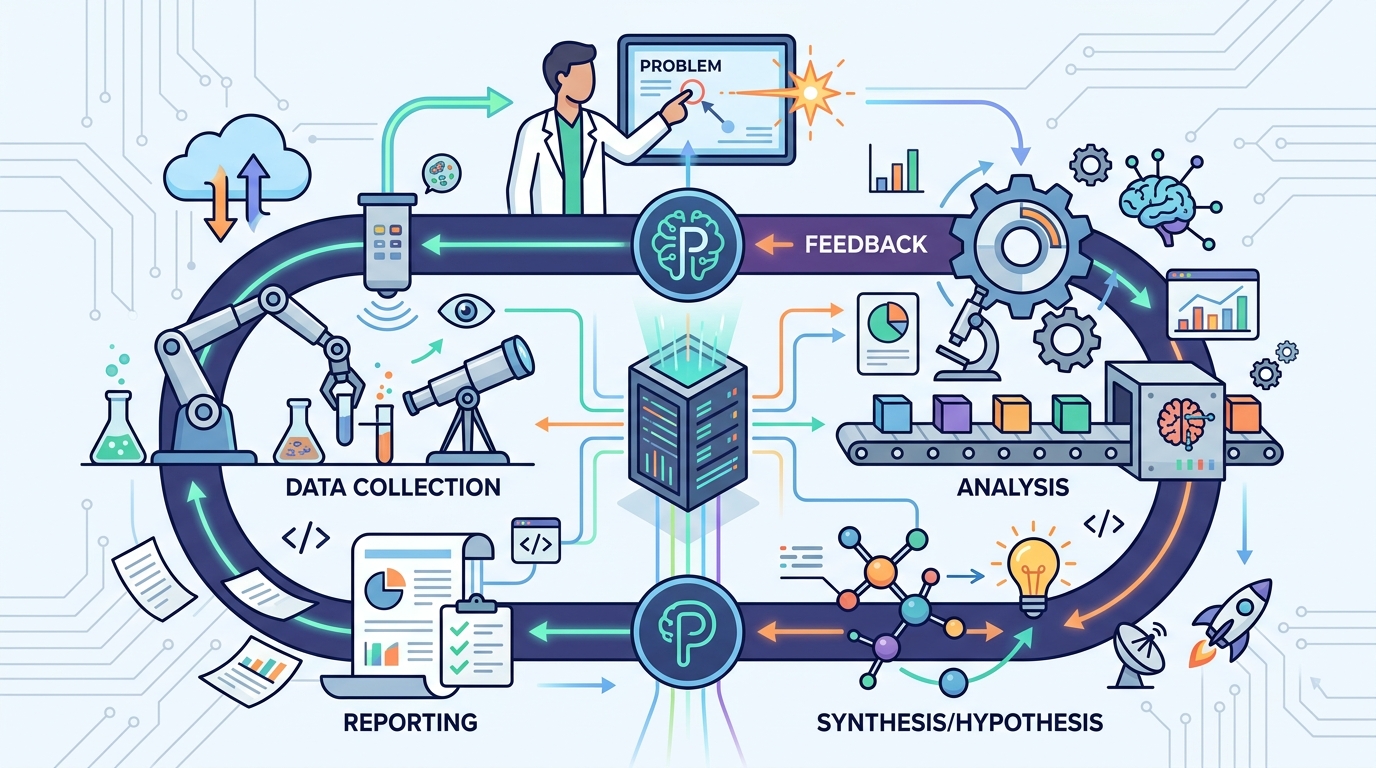

The paper, “PARNESS: A Paper Harness for End-to-End Automated Scientific Research with Dynamic Workflows, Full-Text Indexing, and Cross-Run Knowledge Accumulation,” targets a problem that shows up whenever you try to automate research end to end: the work is not a single linear script. It changes as the system reads more, learns more, and needs to revisit earlier steps. PARNESS is built around that reality, with support for dynamic workflows, full-text indexing, and knowledge that carries across runs.

That matters for developers because “automated research” is not just about calling a model and waiting for a result. It usually means search, reading, note-taking, synthesis, and repeated refinement. A harness that can keep track of what has already been seen, what was learned in prior runs, and what should happen next is closer to how real research loops work.

What problem this paper is trying to fix

The paper is trying to make end-to-end automated scientific research more workable. The title itself points to three pain points: workflows that need to change on the fly, the need to search within full text instead of just metadata, and the need to accumulate knowledge across multiple runs instead of starting from scratch each time.

In practical terms, that suggests the authors are addressing a common failure mode in agentic systems: they can be brittle when the task changes midstream. Research tasks often do change. A promising paper leads to a different query. A new citation changes the direction. A previous result needs to be checked again. A harness that assumes a fixed pipeline will struggle with that kind of work.
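To make the contrast with a fixed pipeline concrete, here is a minimal sketch of a workflow whose next steps depend on what earlier steps return. It is an illustration only, not PARNESS's design: the `run_workflow` function, the `search` and `read` step names, and the toy handlers are all hypothetical.

```python
from collections import deque
from typing import Callable, Deque, Dict, List, Tuple

# A task is a (step name, payload) pair. All step names here are hypothetical.
Task = Tuple[str, str]
Handler = Callable[[str], List[Task]]


def run_workflow(start: List[Task], handlers: Dict[str, Handler],
                 max_steps: int = 50) -> List[Task]:
    """Run tasks from a queue; each handler may schedule follow-up tasks."""
    queue: Deque[Task] = deque(start)
    seen = set()
    trace: List[Task] = []
    while queue and len(trace) < max_steps:
        task = queue.popleft()
        if task in seen:  # skip work already done in this run
            continue
        seen.add(task)
        trace.append(task)
        step, payload = task
        # The handler's return value decides what happens next.
        queue.extend(handlers[step](payload))
    return trace


# Toy handlers: a search finds a paper, and reading it surfaces a new query.
def search(query: str) -> List[Task]:
    return [("read", f"paper-about-{query}")]


def read(paper: str) -> List[Task]:
    # Only the first paper triggers a follow-up query in this toy example.
    if paper == "paper-about-indexing":
        return [("search", "retrieval")]
    return []


trace = run_workflow([("search", "indexing")], {"search": search, "read": read})
for step, payload in trace:
    print(step, payload)
```

The plan is not written down in advance: the second search exists only because the first paper pointed to it. The `max_steps` cap and the `seen` set are simple guards against a workflow that keeps rescheduling itself.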

PARNESS frames itself as a paper harness, which implies infrastructure rather than a single model or narrow algorithm. That is an important distinction for engineers. The value is not just in generating one answer, but in organizing the whole research process so it can continue across steps and across separate executions.

How the method works in plain English

Based on the paper title and notes, PARNESS combines three ideas. First, it uses dynamic workflows, meaning the sequence of actions can change depending on what the system discovers. Second, it uses full-text indexing, so the system can search inside papers rather than relying only on titles, abstracts, or citation metadata. Third, it supports cross-run knowledge accumulation, which means information learned in one run can be reused later.

That combination is useful because research systems often need both breadth and memory. Full-text indexing helps with breadth: it lets the system find relevant passages in a large document collection. Cross-run accumulation helps with memory: it avoids repeating the same exploration every time a job starts. Dynamic workflows provide the control layer that decides what to do next as the evidence changes.
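The difference between searching full text and searching metadata can be shown with a small inverted index. This is a sketch under stated assumptions: the source does not say how PARNESS indexes documents, and the `FullTextIndex` class, the document IDs, and the sample text are made up for illustration.

```python
import re
from collections import defaultdict
from typing import Dict, List, Set


def tokenize(text: str) -> List[str]:
    """Lowercase the text and split it into alphanumeric terms."""
    return re.findall(r"[a-z0-9]+", text.lower())


class FullTextIndex:
    """Minimal inverted index: term -> set of document IDs containing it."""

    def __init__(self) -> None:
        self.postings: Dict[str, Set[str]] = defaultdict(set)

    def add(self, doc_id: str, text: str) -> None:
        for term in tokenize(text):
            self.postings[term].add(doc_id)

    def search(self, query: str) -> List[str]:
        """Return IDs of documents that contain every query term."""
        terms = tokenize(query)
        if not terms:
            return []
        hits = set.intersection(*(self.postings.get(t, set()) for t in terms))
        return sorted(hits)


# Index that only sees titles (metadata-style search).
titles = FullTextIndex()
titles.add("paper-a", "Graph methods for tuning")
titles.add("paper-b", "Learning rate tricks")

# Index that sees the body text as well.
index = FullTextIndex()
index.add("paper-a", "Graph methods for tuning. We ablate the learning rate schedule in section 4.")
index.add("paper-b", "Learning rate tricks. The body never mentions ablations.")

print(titles.search("ablate schedule"))  # no title contains both terms
print(index.search("ablate schedule"))   # found inside the body of paper-a
```

A production system would more likely use an existing search engine with ranking and stemming. The point here is only that a detail buried in section 4 is invisible to a title-only index.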

Seen from an engineering angle, this sounds like a workflow engine wrapped around retrieval and state management. The title and abstract notes do not give implementation specifics, so it is not possible to say exactly how PARNESS stores state, how it indexes documents, or what triggers workflow changes. But the design intent is clear: make automated research more adaptive and less stateless.

What the paper actually shows

The source material provided here does not include benchmark numbers, comparative results, or concrete evaluation metrics. So there is no honest way to claim speedups, accuracy gains, or success rates from the abstract alone.

What the paper does show, at least from the available notes, is the system’s focus and scope. It is positioned as an end-to-end harness for scientific research rather than a single retrieval trick or a one-off summarization tool. The emphasis on dynamic workflows, full-text indexing, and cross-run accumulation suggests the authors are trying to move from isolated automation to a more persistent research loop.

That is still a meaningful contribution even without numbers, because many agentic research demos fail at the system level. They can look good in a single run, but they do not handle changing plans, large document collections, or accumulated context very well. PARNESS is explicitly targeting those operational issues.

- Dynamic workflows let the process change as new evidence appears.

- Full-text indexing should help the system find details buried inside papers.

- Cross-run accumulation aims to preserve useful knowledge between executions.

Why developers should care

If you build research agents, literature review tools, or document-heavy AI workflows, this paper is pointing at the right architectural problems. The hard part is not just generating text. It is keeping the system useful after the first query, the first paper, and the first failed attempt.

PARNESS suggests a design where retrieval, workflow control, and memory are treated as first-class pieces of the system. That is a practical lesson for anyone building with LLMs: if the task spans multiple steps and multiple sessions, you need more than prompt chaining. You need state, indexing, and a way to adapt the plan when the evidence changes.
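As a rough illustration of state that outlives a single session, the sketch below persists notes to a JSON file and reloads them at the start of the next run. Nothing here is taken from the paper: the `KnowledgeStore` class, the file layout, and the sample notes are assumptions chosen to keep the example small.

```python
import json
import os
import tempfile
from typing import Dict, List


class KnowledgeStore:
    """Notes keyed by topic, persisted to a JSON file between runs (hypothetical design)."""

    def __init__(self, path: str) -> None:
        self.path = path
        self.notes: Dict[str, List[str]] = {}
        # Load whatever earlier runs saved, if anything.
        if os.path.exists(path):
            with open(path, "r", encoding="utf-8") as f:
                self.notes = json.load(f)

    def add(self, topic: str, note: str) -> None:
        bucket = self.notes.setdefault(topic, [])
        if note not in bucket:  # avoid storing the same note twice
            bucket.append(note)

    def save(self) -> None:
        with open(self.path, "w", encoding="utf-8") as f:
            json.dump(self.notes, f, indent=2)


store_path = os.path.join(tempfile.mkdtemp(), "knowledge.json")

# Run 1 starts empty, records a note, and saves.
run1 = KnowledgeStore(store_path)
run1.add("indexing", "paper-a discusses passage-level search")
run1.save()

# Run 2 starts from what run 1 learned instead of from scratch.
run2 = KnowledgeStore(store_path)
run2.add("indexing", "paper-b compares index sizes")
run2.save()

print(run2.notes["indexing"])
```

A flat JSON file is the simplest thing that works for a sketch. Concurrent runs, large collections, or richer queries would call for a real database.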

The limitations are also obvious from the available source. We do not have the paper’s evaluation setup, so we cannot tell how robust the system is, what domain it was tested on, or how much the cross-run knowledge actually improves outcomes. We also do not know the cost of maintaining the index or the failure modes of dynamic workflow selection.

In other words, PARNESS looks like infrastructure for serious automated research, but the abstract notes alone do not prove it is better than existing approaches. For developers, the main takeaway is architectural: if you want research automation to survive real-world complexity, you need adaptive workflows, searchable source text, and persistent memory across runs.

Open questions left by the abstract

Because the source material is thin, several questions remain unanswered. How does PARNESS decide when to branch or revise a workflow? What exactly counts as knowledge accumulation across runs? Does the system index PDFs, extracted text, or both? And how does it avoid carrying forward stale or incorrect information?

Those are the questions that will matter most to engineers reading the full paper. The title promises a harness, which usually means the real value is in the system design and operational behavior. The level of implementation detail in the full paper will determine whether PARNESS is a useful blueprint or just a well-named prototype.

For now, the safest reading is simple: PARNESS is trying to make automated scientific research more like a durable process and less like a one-shot demo. That is exactly the direction many developer-built AI systems need to go.
