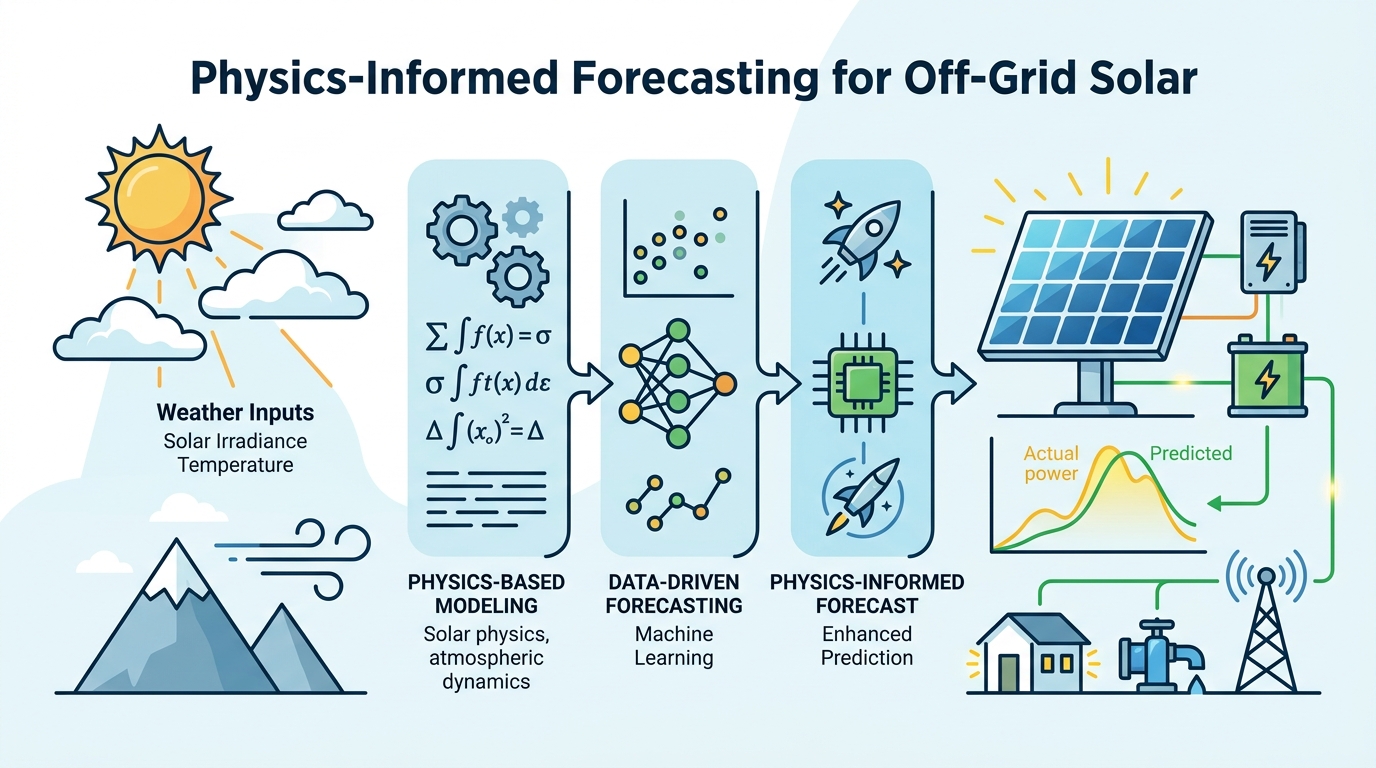

Physics-Informed Forecasting for Off-Grid Solar

A physics-informed state space model aims to cut phase lag and impossible night-time output in solar forecasting for off-grid PV systems.

Off-grid photovoltaic systems live or die on forecasting quality. If a model drifts out of sync with cloud movement or predicts power when the sun is down, the controller makes bad decisions fast. This paper, Physics-Informed State Space Models for Reliable Solar Irradiance Forecasting in Off-Grid Systems, tackles that problem by baking atmospheric and celestial constraints into the model instead of relying on data alone.

The core idea is straightforward: use a physics-informed state space approach to keep solar forecasts aligned with real-world thermodynamics. The paper argues that common deep learning models can suffer from severe temporal phase lags during cloud transients and can even produce physically impossible nocturnal generation. For an engineer building edge-deployable microgrid control, those are not minor errors; they are system-level reliability problems.

What problem the paper is trying to fix

The target use case is autonomous off-grid photovoltaic operation, where forecasting has to support stable control without the safety net of the grid. In that setting, a forecast model is not just predicting a curve for analysis. It is helping decide how much energy is available, when storage should charge or discharge, and how much confidence a controller should place in near-term generation.

According to the abstract, contemporary deep learning models struggle with two failure modes. First, they can lag behind rapid cloud transients, which means the predicted irradiance changes too late compared with the actual weather shift. Second, they can generate outputs that violate basic physics, including power production during the night. The paper frames these as mismatches between data-driven learning and deterministic celestial mechanics.

That framing matters because a model can look strong on average error while still being operationally risky. If the output is smooth but delayed, a controller may respond after the useful window has passed. If the model can hallucinate night-time generation, then it is no longer safe to trust as a component in a real microgrid stack.
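
A toy calculation makes the point concrete. It assumes an idealized clear-day profile sampled every 15 minutes and a forecast that is the exact same curve delayed by 30 minutes. That delayed forecast still correlates with the truth at r well above 0.95, yet every sunrise ramp arrives late by roughly 13% of peak output. The profile, sampling rate, delay, and function names are hypothetical choices for illustration, not taken from the paper.

```python
import math
from typing import List

STEPS_PER_DAY = 96  # hypothetical 15-minute resolution


def clear_day_profile() -> List[float]:
    """Idealized clear-day irradiance: a half-sine from 06:00 to 18:00, peak = 1."""
    profile = []
    for t in range(STEPS_PER_DAY):
        x = (t - 24) / 48  # position within the 12-hour daylight window
        profile.append(math.sin(math.pi * x) if 0.0 <= x <= 1.0 else 0.0)
    return profile


def delayed(series: List[float], steps: int) -> List[float]:
    """Shift a series later by `steps` samples, padding the start with zeros."""
    if steps == 0:
        return list(series)
    return [0.0] * steps + series[:-steps]


def pearson_r(x: List[float], y: List[float]) -> float:
    """Pearson correlation coefficient of two equal-length series."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


actual = clear_day_profile()
late = delayed(actual, 2)  # the same curve, 30 minutes late
r = pearson_r(actual, late)
worst = max(abs(a - b) for a, b in zip(actual, late))
print(f"Pearson r = {r:.3f}, worst ramp error = {worst:.2f} of peak")
```

The correlation looks excellent because the shape is right; the error is concentrated exactly at the ramps, which is where a controller most needs the forecast.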

How the method works in plain English

The proposed system is called the Thermodynamic Liquid Manifold Network. The abstract describes it as projecting 15 meteorological and geometric variables into a Koopman-linearized Riemannian manifold. In practical terms, the paper is trying to lift the input dynamics into a space where nonlinear weather behavior evolves approximately linearly (the core idea behind Koopman operator methods), which makes it easier to model while still respecting physical structure.
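
The abstract gives no equations for the manifold projection. As a rough illustration of the Koopman idea only, the sketch below lifts a 15-feature input into a small latent vector and then advances that vector with a single linear operator K, so that z at the next step is K times z at the current step. The weights, the 2-dimensional latent size, and all function names are hypothetical, and the sketch ignores the Riemannian geometry entirely; in a real model the encoder and K would be learned.

```python
import math
from typing import List

Vector = List[float]
Matrix = List[List[float]]

N_FEATURES = 15  # input count from the paper; the features themselves are not listed


def matvec(m: Matrix, v: Vector) -> Vector:
    """Dense matrix-vector product."""
    return [sum(a * b for a, b in zip(row, v)) for row in m]


def encode(x: Vector, w_enc: Matrix) -> Vector:
    """Hypothetical nonlinear lift of the raw features into a latent space."""
    if len(x) != N_FEATURES:
        raise ValueError(f"expected {N_FEATURES} features, got {len(x)}")
    return [math.tanh(s) for s in matvec(w_enc, x)]


def rollout(z0: Vector, k: Matrix, steps: int) -> List[Vector]:
    """Advance the latent state linearly: z_{t+1} = K z_t (the Koopman assumption)."""
    states = [z0]
    for _ in range(steps):
        states.append(matvec(k, states[-1]))
    return states


def decode(z: Vector, w_dec: Vector) -> float:
    """Hypothetical linear readout from latent state to a scalar forecast."""
    return sum(a * b for a, b in zip(w_dec, z))


# Made-up weights: 15 inputs -> 2 latent dimensions -> 1 output.
w_enc = [[0.1] * N_FEATURES, [-0.05] * N_FEATURES]
K = [[0.9, 0.1], [0.0, 0.8]]
z0 = encode([1.0] * N_FEATURES, w_enc)
forecasts = [decode(z, [1.0, 0.5]) for z in rollout(z0, K, steps=4)]
print(forecasts)
```

The appeal of this structure is that multi-step forecasting reduces to repeated matrix multiplication, which is cheap on constrained hardware.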

Two named components stand out: a Spectral Calibration unit inside the architecture, whose role the abstract does not spell out, and a multiplicative Thermodynamic Alpha-Gate that combines real-time atmospheric opacity with clear-sky boundary models. The stated goal is to enforce strict celestial geometry compliance, which in plain English means the network should not be able to predict solar output that contradicts the sun’s position or the day-night cycle.
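
The abstract does not give the gate's equations. A minimal sketch, assuming the gate multiplies a learned transmittance factor in (0, 1) by a clear-sky envelope that is zero whenever the sun is at or below the horizon, shows why such a structure rules out night-time output by construction. The declination formula is Cooper's standard approximation; `PEAK_CLEAR_SKY`, the sine-of-elevation envelope, and all function names are hypothetical simplifications, not the paper's clear-sky model.

```python
import math

PEAK_CLEAR_SKY = 1000.0  # W/m^2; hypothetical envelope scale, not from the paper


def solar_elevation_deg(day_of_year: int, solar_hour: float, latitude_deg: float) -> float:
    """Approximate solar elevation angle in degrees.

    Uses Cooper's declination approximation; `solar_hour` is apparent solar time
    (12.0 = solar noon), so equation-of-time and longitude corrections are ignored.
    """
    decl = math.radians(23.45) * math.sin(math.radians(360.0 * (284 + day_of_year) / 365.0))
    hour_angle = math.radians(15.0 * (solar_hour - 12.0))
    lat = math.radians(latitude_deg)
    sin_elev = (math.sin(lat) * math.sin(decl)
                + math.cos(lat) * math.cos(decl) * math.cos(hour_angle))
    return math.degrees(math.asin(max(-1.0, min(1.0, sin_elev))))


def clear_sky_envelope(elevation_deg: float) -> float:
    """Crude clear-sky upper bound that is exactly zero at or below the horizon."""
    if elevation_deg <= 0.0:
        return 0.0
    return PEAK_CLEAR_SKY * math.sin(math.radians(elevation_deg))


def sigmoid(x: float) -> float:
    """Numerically stable logistic function mapping any real number into [0, 1]."""
    if x >= 0.0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def alpha_gate(opacity_logit: float, elevation_deg: float) -> float:
    """Hypothetical gate: transmittance factor times the clear-sky bound."""
    return sigmoid(opacity_logit) * clear_sky_envelope(elevation_deg)


# However large the network's logit, the gated output at night is exactly zero.
midnight = solar_elevation_deg(day_of_year=172, solar_hour=0.0, latitude_deg=30.0)
print(alpha_gate(opacity_logit=50.0, elevation_deg=midnight))
```

The network only controls the transmittance factor, so during the day its output can never exceed the clear-sky envelope, and at night the envelope forces it to zero regardless of what the learned part predicts.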

This is the key engineering move: rather than asking a neural network to learn every constraint from data, the model is structured so that some impossible behaviors are suppressed by design. That is especially relevant for edge and microgrid systems, where reliability often matters more than squeezing out a tiny improvement on average error.

It is worth noting that the abstract uses very dense terminology, and the source text does not provide implementation details beyond the named components and the 15 input variables. So while the high-level intent is clear, the exact training recipe, loss formulation, and deployment mechanics are not visible from the source text alone.

What the paper actually shows

The abstract does include concrete evaluation results. The framework is validated across a five-year testing horizon in a severe semi-arid climate. On that test, it reports an RMSE of 18.31 Wh/m² and a Pearson correlation of 0.988. Those numbers suggest the model tracks observed irradiance closely over the evaluation period.
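
For readers who want to compute the two headline metrics on their own data, a minimal standard-library sketch of RMSE and Pearson correlation follows. RMSE inherits the units of its inputs, so the reported Wh/m² figure implies the series were compared as energy per unit area over some interval rather than as instantaneous W/m²; the exact aggregation interval is not stated in the abstract. The sample values below are made up.

```python
import math
from typing import Sequence


def rmse(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Root-mean-square error, in the same units as the inputs."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("inputs must be non-empty and of equal length")
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


def pearson_r(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation coefficient; undefined for constant series."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equal-length series with at least two points")
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    if sx == 0.0 or sy == 0.0:
        raise ValueError("correlation is undefined for a constant series")
    return cov / (sx * sy)


# Made-up hourly irradiation values (Wh/m^2) for a single day's daylight hours.
observed = [0.0, 120.0, 480.0, 610.0, 300.0, 0.0]
predicted = [0.0, 100.0, 500.0, 590.0, 320.0, 0.0]
print(rmse(observed, predicted), pearson_r(observed, predicted))
```

Reporting both is sensible: correlation rewards getting the shape right, while RMSE penalizes magnitude errors in physical units.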

It also claims a zero-magnitude nocturnal error across all 1826 testing days. That is the most operationally important result in the abstract, because it directly addresses the physically impossible night-time generation problem the paper set out to fix. In addition, the model is said to maintain a sub-30-minute phase response during high-frequency transients, which speaks to the cloud-shift lag issue.
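
The abstract does not say how the nocturnal error or the phase response was measured. One plausible way to compute both, offered only as an assumption, is sketched below. The nocturnal error is taken as the largest absolute forecast at timesteps with the sun at or below the horizon, and the phase lag as the time shift that best aligns forecast and observation. `STEP_MINUTES` and the function names are hypothetical. As a side note, 1826 days is five calendar years containing one leap day.

```python
import math
from typing import Sequence

STEP_MINUTES = 15  # hypothetical sampling interval


def nocturnal_error(preds: Sequence[float], elevations_deg: Sequence[float]) -> float:
    """Largest absolute forecast at timesteps where the sun is at or below the horizon."""
    night = [abs(p) for p, e in zip(preds, elevations_deg) if e <= 0.0]
    return max(night, default=0.0)


def best_lag(actual: Sequence[float], forecast: Sequence[float], max_lag: int) -> int:
    """Shift (in steps) that minimizes MSE between actual[t] and forecast[t + lag].

    A positive result means the forecast trails the observations by that many steps.
    """
    n = len(actual)
    if len(forecast) != n:
        raise ValueError("series must have equal length")
    best, best_err = 0, math.inf
    for lag in range(-max_lag, max_lag + 1):
        pairs = [(actual[t], forecast[t + lag]) for t in range(n) if 0 <= t + lag < n]
        if len(pairs) < n // 2:  # skip shifts with too little overlap to be meaningful
            continue
        err = sum((a - f) ** 2 for a, f in pairs) / len(pairs)
        if err < best_err:
            best, best_err = lag, err
    return best


# A cloud-gap pulse that the forecast reproduces two steps late.
actual = [0, 0, 0, 5, 10, 5, 0, 0, 0, 0]
forecast = [0, 0, 0, 0, 0, 5, 10, 5, 0, 0]
print(best_lag(actual, forecast, max_lag=3) * STEP_MINUTES, "minutes of lag")
```

Averaging this lag over many transients, rather than over whole days, would be closer to what "phase response during high-frequency transients" seems to describe.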

The model size is also explicitly stated: 63,458 trainable parameters. For developers, that is a useful signal that the design is intended to be lightweight rather than a giant foundation model. The paper positions this as suitable for edge-deployable microgrid controllers, which makes parameter count relevant alongside forecast accuracy.
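
A quick back-of-the-envelope calculation shows why the parameter count matters for edge hardware. Assuming plain dense storage of the 63,458 weights, and counting no activations, buffers, or runtime overhead, the model needs roughly 248 KiB at float32 and about 62 KiB at int8. Whether the model keeps its accuracy after quantization is not addressed in the abstract, so the lower figures are hypothetical.

```python
N_PARAMS = 63_458  # trainable parameters reported in the abstract

BYTES_PER_PARAM = {"float32": 4, "float16": 2, "int8": 1}


def weight_bytes(n_params: int, dtype: str) -> int:
    """Raw storage for the weights alone (no activations, buffers, or overhead)."""
    return n_params * BYTES_PER_PARAM[dtype]


for dtype in BYTES_PER_PARAM:
    size = weight_bytes(N_PARAMS, dtype)
    print(f"{dtype:>8}: {size:,} bytes ({size / 1024:.1f} KiB)")
```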

What the abstract does not provide is just as important. It does not include comparison tables, baseline model names, or ablation results in the supplied text. So while the reported metrics are promising, the source here does not show how much of the gain comes from the physics constraints versus the rest of the architecture.

Why developers should care

If you work on energy systems, embedded control, or forecasting pipelines, this paper is a reminder that prediction quality is not only about average error. In operational settings, a model that respects physical constraints can be more trustworthy than one that merely fits historical data well. That is especially true when forecasts are feeding batteries, load scheduling, or islanded microgrid logic.

There is also a broader ML lesson here: when the domain has hard rules, encode them. Solar irradiance is bounded by geometry and atmospheric conditions, so a model that can generate night-time output is not just inaccurate; it is structurally wrong. The paper’s approach reflects a growing pattern in applied ML where physics-informed design is used to reduce failure modes that pure black-box learning may not eliminate.

- Useful if you need forecasts that stay physically plausible under day-night transitions.

- Relevant for edge or controller-adjacent deployments where model size matters.

- Interesting if you are exploring state space models combined with domain constraints.

- Important if your current model looks good on average but fails during rapid weather changes.

Limitations and open questions

The biggest limitation in the provided abstract is that it gives us the headline results but not enough methodological detail to judge robustness. We do not see the full training setup, dataset split strategy, or how the five-year horizon was partitioned. Without that, it is hard to know how transferable the results are beyond the reported semi-arid climate.
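
As a reference point for what a leakage-free partition usually looks like, here is a minimal sketch of a chronological split and a year-by-year expanding-window evaluation. The dates and function names are hypothetical. The only link to the paper is a sanity check: a five-year window containing one leap day spans exactly 1826 days, matching the reported number of testing days. How the paper actually separated training and test data is unknown.

```python
from datetime import date, timedelta
from typing import Iterator, List, Tuple


def daily_range(start: date, end: date) -> List[date]:
    """All days from start (inclusive) to end (exclusive)."""
    return [start + timedelta(days=i) for i in range((end - start).days)]


def chronological_split(days: List[date], cutoff: date) -> Tuple[List[date], List[date]]:
    """Train strictly before the cutoff, test on or after it; never shuffle time series."""
    train = [d for d in days if d < cutoff]
    test = [d for d in days if d >= cutoff]
    return train, test


def yearly_expanding_folds(days: List[date]) -> Iterator[Tuple[int, List[date], List[date]]]:
    """Rolling-origin evaluation: train on all earlier years, test on the next one."""
    years = sorted({d.year for d in days})
    for test_year in years[1:]:
        train = [d for d in days if d.year < test_year]
        test = [d for d in days if d.year == test_year]
        yield test_year, train, test


# Hypothetical five-year window; the paper's actual dates are not given.
days = daily_range(date(2018, 1, 1), date(2023, 1, 1))
print(len(days))
for year, train, test in yearly_expanding_folds(days):
    print(year, len(train), len(test))
```

Per-year folds also expose seasonal or year-to-year drift that a single aggregate score over the whole horizon would average away.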

There is also no evidence in the supplied text of cross-site testing, uncertainty estimation, or failure analysis. Those would matter a lot for real-world deployment. A model that performs well in one climate zone may still need retuning elsewhere, especially if cloud dynamics, aerosol conditions, or seasonal patterns differ significantly.

Finally, the abstract makes strong claims about “zero-lag synchronization” and “strict celestial geometry compliance,” but the source excerpt does not show the exact mechanism by which those guarantees are enforced or measured. For practitioners, that means the paper is best read as a promising direction rather than a fully audited production recipe.

Still, the practical takeaway is clear: this work pushes solar forecasting toward models that are not only accurate, but also harder to break in the ways that matter most for off-grid systems. If you are building control logic around renewable generation, that is the kind of constraint-aware modeling worth watching.
