Physics Simulators as RL Data for LLM Reasoning

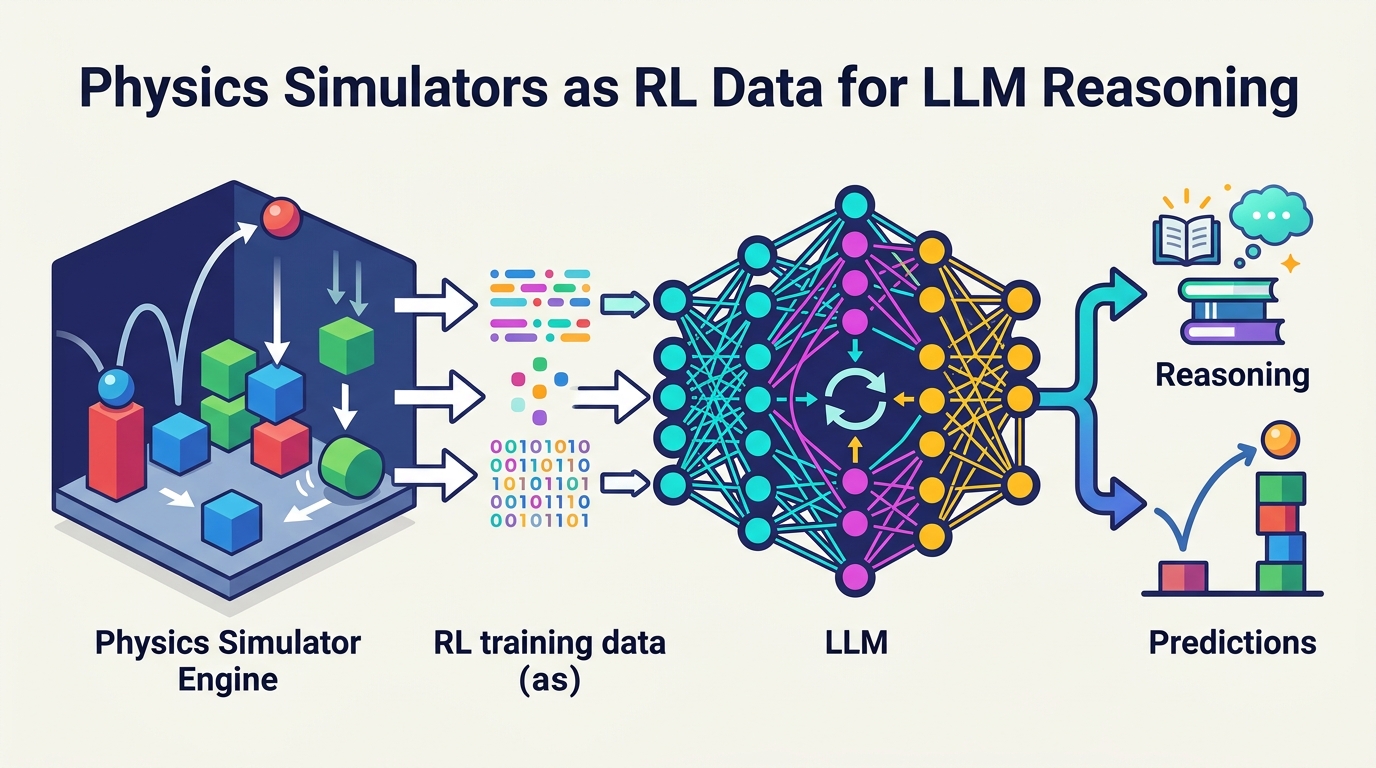

Researchers train LLMs with reinforcement learning on synthetic data from physics simulators and report zero-shot gains of 5–10 percentage points on IPhO problems, pointing to a training path beyond web QA data.

Large language models have gotten much better at reasoning, but the training recipe has leaned heavily on internet question-answer pairs. That works well in math, where there is lots of structured data, but it becomes a bottleneck in physics and other sciences where comparable QA corpora are scarce. This paper argues that physics simulators can fill that gap, and it tests that idea by training models on synthetic interactions instead of scraped web answers.

The paper is titled “Solving Physics Olympiad via Reinforcement Learning on Physics Simulators.” The core claim is practical: if you can generate enough varied physical scenarios in simulation, you can use them as supervision for reinforcement learning and teach an LLM to reason about physics without relying on large real-world QA datasets.

What problem this paper is trying to fix

The authors start from a real constraint in reasoning-model training: internet QA data is abundant in some domains and thin in others. Physics is a good example of the mismatch. You can find plenty of general text about physics online, but not enough high-quality, large-scale question-answer pairs that cover the kinds of reasoning needed for training a model to solve olympiad-style problems.

That matters because the recent jump in reasoning performance has been tied to data scale as much as model architecture. If the only scalable supervision source is web QA, then science domains without that data stay behind. The paper’s answer is to stop treating the web as the only training substrate and instead use physics engines as a data generator.

In other words, the paper is not trying to make a better physics simulator. It is trying to turn simulators into a training pipeline for reasoning models.

How the method works in plain English

The method is conceptually simple. First, the researchers generate random scenes in physics engines. Those scenes produce synthetic interactions that can be turned into question-answer pairs. Then they train LLMs with reinforcement learning on that synthetic data, so the model learns to answer physics questions from the regularities of the simulated environment rather than from human-written solutions.

The important detail is that the supervision comes from simulated physics, not from collected human explanations or scraped textbook solutions. That makes the data source scalable in a way that manual QA creation is not. If you can keep sampling new scenes, you can keep expanding the training distribution.

The paper frames this as a form of sim-to-real transfer for reasoning. The model learns from synthetic physical worlds, then is evaluated on real-world physics benchmarks. That is a familiar idea in robotics and control, but here it is applied to language-model reasoning rather than motor policies.

- Generate random scenes in physics simulators

- Convert simulated interactions into synthetic QA pairs

- Train LLMs with reinforcement learning on that data

- Test whether the learned reasoning transfers to real benchmarks
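The steps above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the authors' pipeline: the projectile scene, the parameter ranges, the toy integrator standing in for a physics engine, the question template, and the tolerance-based `reward` function are all hypothetical choices made for the example. The source does not describe the actual simulator, question generation, or reinforcement learning objective.

```python
import math
import random
from dataclasses import dataclass

G = 9.81  # gravitational acceleration in m/s^2


@dataclass
class Scene:
    """Hypothetical randomized scene: a projectile launched from level ground."""
    speed: float      # launch speed in m/s
    angle_deg: float  # launch angle above the horizontal, in degrees


def sample_scene(rng: random.Random) -> Scene:
    """Draw scene parameters at random (the ranges are arbitrary assumptions)."""
    return Scene(
        speed=round(rng.uniform(5.0, 30.0), 1),
        angle_deg=round(rng.uniform(20.0, 70.0), 1),
    )


def simulate_range(scene: Scene, dt: float = 1e-3) -> float:
    """Toy stand-in for a physics engine: step the motion until landing."""
    angle = math.radians(scene.angle_deg)
    vx, vy = scene.speed * math.cos(angle), scene.speed * math.sin(angle)
    x, y = 0.0, 0.0
    while True:
        # Semi-implicit Euler step: update velocity first, then position.
        vy_next = vy - G * dt
        x_next, y_next = x + vx * dt, y + vy_next * dt
        if y_next < 0.0:
            # Linearly interpolate to the point where the path crosses the ground.
            frac = y / (y - y_next)
            return x + frac * (x_next - x)
        x, y, vy = x_next, y_next, vy_next


def to_qa_pair(scene: Scene) -> dict:
    """Turn a simulated scene into a question with a simulator-derived answer."""
    question = (
        f"A projectile is launched from level ground at {scene.speed} m/s, "
        f"{scene.angle_deg} degrees above the horizontal. Ignoring air "
        "resistance and taking g = 9.81 m/s^2, how far away does it land (in m)?"
    )
    return {"question": question, "answer": simulate_range(scene)}


def reward(predicted: float, reference: float, rel_tol: float = 0.02) -> float:
    """Assumed binary reward: 1.0 if the prediction is within rel_tol of the reference."""
    return 1.0 if math.isclose(predicted, reference, rel_tol=rel_tol) else 0.0


if __name__ == "__main__":
    rng = random.Random(0)
    for _ in range(3):
        qa = to_qa_pair(sample_scene(rng))
        print(qa["question"], "->", round(qa["answer"], 2))
```

Because every answer is computed by the simulator, more question-answer pairs can be drawn simply by sampling more scenes, and a numeric answer of this kind can be checked automatically, which is what makes such data a plausible fit for reinforcement learning.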

What the paper actually shows

The headline result is that training solely on synthetic simulated data improves performance on IPhO, the International Physics Olympiad benchmark, by 5–10 percentage points across model sizes. The authors describe this as zero-shot sim-to-real transfer, meaning the models are evaluated on real-world physics problems without being trained on real-world QA data for that benchmark.

That is the most concrete metric in the abstract, and it is the one engineers should pay attention to. The paper is not claiming a minor calibration gain or a narrow benchmark bump in a toy setting. It is claiming that synthetic physics data can move performance on a hard reasoning benchmark in a measurable way.

At the same time, the abstract does not give a full benchmark table, exact model names, training compute, dataset size, or ablation details. So while the result is promising, the source material here does not let us judge how broad the gains are beyond the reported IPhO improvement, or which part of the pipeline contributes most of the lift.

Still, the direction is clear: the authors show that physics simulators can act as scalable data generators for reasoning models, and that the resulting models can generalize beyond the synthetic training environment.

Why developers should care

If you build AI systems for technical domains, this paper points to a useful pattern: when human-labeled data is scarce, synthetic environments may be good enough to bootstrap reasoning. That is especially relevant for fields where the underlying rules can be simulated, such as physics, robotics, control, or other structured scientific domains.

For LLM practitioners, the takeaway is not just about physics. It is about the data bottleneck. The paper suggests that reasoning models do not have to depend exclusively on internet-scale QA corpora. If you can generate valid interactions from a simulator, you may be able to create a training signal that is both scalable and domain-aligned.

That could change how teams think about dataset creation. Instead of only asking, “Can we find more labeled examples?” the question becomes, “Can we synthesize the environment that produces the examples?”

Limitations and open questions

The abstract is promising, but it also leaves important questions open. We do not get details on the simulator setup, the exact reinforcement learning objective, or how the synthetic questions were generated from interactions. We also do not know how sensitive the results are to scene diversity or simulator fidelity, or how the gain varies within the reported 5–10 point range across the model sizes tested.

There is also a broader sim-to-real caveat. Physics simulators can be useful, but they are still approximations. If the synthetic world is too clean or too narrow, the model may learn shortcuts that do not transfer well outside the benchmark. The reported zero-shot gain on IPhO is encouraging, but it does not prove general physics understanding in the broadest sense.
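To make the approximation point concrete, here is a small, hedged Python sketch. It is not taken from the paper and says nothing about the simulators the authors used; it only shows a well-known property of one simple integration scheme. A frictionless oscillator should conserve energy exactly, but explicit Euler integration adds a little energy at every step, so labels derived from such a simulation depend on the step size. The function name `energy_drift` and all parameter values are illustrative assumptions.

```python
def energy_drift(dt: float, t_end: float = 10.0, omega: float = 1.0) -> float:
    """Relative energy error of explicit Euler on a frictionless unit-mass oscillator.

    The true system conserves energy, so any nonzero result is simulator error.
    """
    x, v = 1.0, 0.0  # start at rest, displaced by one unit
    e0 = 0.5 * v * v + 0.5 * omega ** 2 * x * x
    for _ in range(round(t_end / dt)):
        # Explicit Euler: both updates use the values from the previous step.
        x, v = x + v * dt, v - omega ** 2 * x * dt
    e1 = 0.5 * v * v + 0.5 * omega ** 2 * x * x
    return (e1 - e0) / e0


if __name__ == "__main__":
    for dt in (0.01, 0.001):
        print(f"dt={dt}: relative energy error = {energy_drift(dt):.4f}")
```

In this toy setup the energy error shrinks as the step size shrinks, but it never reaches zero. Production physics engines use more careful integrators, so this is only an illustration of why simulator fidelity is worth asking about.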

Another open question is whether this approach scales beyond physics-style domains where the rules are well specified. The paper’s argument is strongest where you can build a simulator that produces correct interactions. It is less clear how far the same recipe goes in messier domains with ambiguous ground truth.

Even with those caveats, the paper makes a strong case that simulators are more than just testing tools. In the right setting, they can become training data factories for reasoning models. That is a useful idea for anyone thinking about the next phase of LLM training, especially as web QA data becomes less of a growth engine and more of a constraint.

In short: this paper is a reminder that if the internet runs out of clean answers, synthetic worlds may be the next place to look.
