Pion keeps LLM weights’ spectrum fixed

Pion is a new LLM optimizer that updates weights with orthogonal transforms, preserving singular values instead of adding gradients directly.

Large language model training usually relies on additive optimizers like Adam or Muon, which change weight matrices by adding an update to them. This paper argues for a different route: change the matrix's geometry without changing its spectrum. That matters because the singular values determine how strongly a weight matrix stretches or shrinks its input along each direction, so they shape much of how the matrix behaves during training.

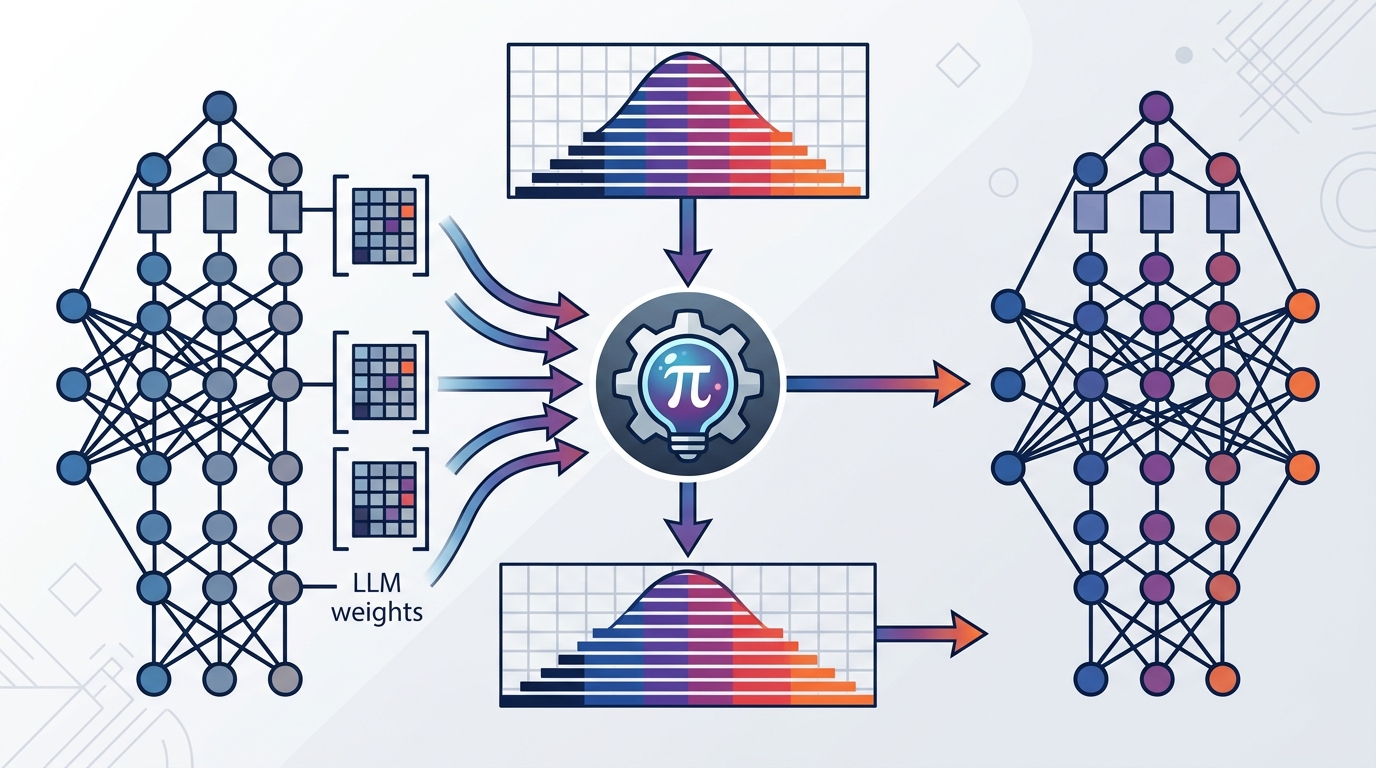

The paper is "Pion: A Spectrum-Preserving Optimizer via Orthogonal Equivalence Transformation", and its main idea is simple to state even if the math is not: instead of nudging weights by addition, Pion multiplies each weight matrix by orthogonal matrices on its left and right sides. The result is an optimizer that preserves singular values throughout training while still changing the model’s parameters.

What problem this is trying to fix

Traditional optimizers are built around additive updates. That works, but it also means the spectrum of a weight matrix can drift as training progresses. For engineers working on LLMs, that drift can matter because it changes not just individual entries of the matrix, but how strongly the matrix scales its inputs along each direction.
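To make the drift concrete, here is a minimal sketch in plain Python on a toy 2x2 matrix. The matrix, the gradient, and the learning rate are made up for illustration and do not come from the paper. The helper name `singular_values_2x2` is hypothetical; it uses the closed-form expression for 2x2 singular values.

```python
import math

def singular_values_2x2(w):
    """Closed-form singular values (largest first) of a 2x2 matrix."""
    (a, b), (c, d) = w
    frob_sq = a * a + b * b + c * c + d * d   # equals s1^2 + s2^2
    det = a * d - b * c                       # |det| equals s1 * s2
    disc = math.sqrt(max(frob_sq * frob_sq - 4.0 * det * det, 0.0))
    s1 = math.sqrt((frob_sq + disc) / 2.0)
    s2 = math.sqrt(max((frob_sq - disc) / 2.0, 0.0))
    return s1, s2

def additive_step(w, grad, lr):
    """Plain SGD-style additive update: W <- W - lr * G."""
    return [[w[i][j] - lr * grad[i][j] for j in range(2)] for i in range(2)]

# Toy weight matrix with singular values (2, 1).
w = [[2.0, 0.0], [0.0, 1.0]]
# Made-up gradient, for illustration only.
grad = [[1.0, 0.5], [-0.5, 1.0]]

before = singular_values_2x2(w)
after = singular_values_2x2(additive_step(w, grad, lr=0.1))

print("singular values before:", before)
print("singular values after: ", after)
```

After a single additive step the singular values have already moved away from (2, 1). This is the kind of drift a spectrum-preserving update is meant to rule out.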

This paper is trying to answer a practical question: can we train large models in a way that changes the model enough to learn, but keeps a key structural property of the weights intact? Pion’s answer is yes, at least in the formulation the authors present. It is designed as a spectrum-preserving optimizer, so the singular values of each weight matrix stay fixed while the matrix is still updated.

That makes Pion interesting for anyone who cares about stability, parameter geometry, or alternative optimization dynamics. It is not presented as a drop-in replacement that magically solves all training issues. Instead, it is a different optimization mechanism with different invariants.

How Pion works in plain English

The core trick is orthogonal equivalence transformation. In plain terms, Pion updates a weight matrix by multiplying it on the left and right by orthogonal matrices. Orthogonal transformations are special because they preserve lengths and angles. Multiplying by them only rotates or reflects the input and output directions of the weight matrix, so the amounts by which the matrix stretches along those directions, its singular values, stay the same.
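The invariance itself is easy to check numerically. The sketch below is not Pion's update rule. It only applies two arbitrary rotations (a simple kind of orthogonal matrix) to a toy 2x2 weight matrix and confirms that the entries change while the singular values do not. The angles and the matrix are assumed values, and the helper names are hypothetical.

```python
import math

def rotation(theta):
    """2x2 rotation matrix, which is orthogonal for any angle."""
    c, s = math.cos(theta), math.sin(theta)
    return [[c, -s], [s, c]]

def matmul(x, y):
    """Product of two 2x2 matrices given as nested lists."""
    return [[sum(x[i][k] * y[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]

def singular_values_2x2(w):
    """Closed-form singular values (largest first) of a 2x2 matrix."""
    (a, b), (c, d) = w
    frob_sq = a * a + b * b + c * c + d * d
    det = a * d - b * c
    disc = math.sqrt(max(frob_sq * frob_sq - 4.0 * det * det, 0.0))
    return (math.sqrt((frob_sq + disc) / 2.0),
            math.sqrt(max((frob_sq - disc) / 2.0, 0.0)))

w = [[2.0, 0.5], [-1.0, 1.5]]                 # toy weight matrix
left, right = rotation(0.3), rotation(-1.1)   # arbitrary orthogonal factors

# Orthogonal equivalence transformation: W_new = left @ W @ right.
w_new = matmul(matmul(left, w), right)

old_sv = singular_values_2x2(w)
new_sv = singular_values_2x2(w_new)
entries_changed = any(abs(w_new[i][j] - w[i][j]) > 1e-6
                      for i in range(2) for j in range(2))

print("entries changed:", entries_changed)
print("singular values before:", old_sv)
print("singular values after: ", new_sv)
```

The same property holds for matrices of any shape. The 2x2 case is used here only because its singular values have a closed form that needs no linear algebra library.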

That means Pion does not behave like Adam, where the update is added to the parameters, or like Muon, which the abstract groups with additive optimizers. Instead, Pion changes the matrix through a structured transformation. The paper says this modulates the geometry of the weight matrix while keeping its spectral norm fixed. The spectral norm is the largest singular value, so this follows directly from preserving the whole spectrum.

The authors derive the Pion update rule and systematically examine the design choices around it. They also analyze its convergence behavior and several key properties. The abstract does not spell out implementation details, so the source supports saying that the method is explicitly built around an invariance, but not how each component of the training loop is implemented.
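Since the source does not give the update rule, the following is only a generic illustration of one standard way to turn an unconstrained direction into an orthogonal matrix: take its skew-symmetric part and apply the Cayley transform. Nothing here is claimed to be what Pion does. The direction, the step size, and the helper names are all assumptions made for this sketch.

```python
def matmul(x, y):
    """Product of two 2x2 matrices given as nested lists."""
    return [[sum(x[i][k] * y[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]

def skew_part(m):
    """Skew-symmetric part (M - M^T) / 2 of a 2x2 matrix."""
    off = (m[0][1] - m[1][0]) / 2.0
    return [[0.0, off], [-off, 0.0]]

def cayley(a):
    """Cayley transform Q = (I - A)^{-1} (I + A) for a skew-symmetric 2x2 A."""
    i_minus = [[1.0 - a[0][0], -a[0][1]], [-a[1][0], 1.0 - a[1][1]]]
    i_plus = [[1.0 + a[0][0], a[0][1]], [a[1][0], 1.0 + a[1][1]]]
    # For skew-symmetric A this determinant is 1 + off^2, so it is never zero.
    det = i_minus[0][0] * i_minus[1][1] - i_minus[0][1] * i_minus[1][0]
    inverse = [[i_minus[1][1] / det, -i_minus[0][1] / det],
               [-i_minus[1][0] / det, i_minus[0][0] / det]]
    return matmul(inverse, i_plus)

# Hypothetical unconstrained direction, for example something gradient-derived.
direction = [[0.3, 0.8], [-0.2, 0.1]]
step_size = 0.1  # assumed value, playing the role of a learning rate

generator = [[step_size * v for v in row] for row in skew_part(direction)]
q = cayley(generator)

# Orthogonality check: Q^T Q should be the identity up to rounding.
q_t = [[q[j][i] for j in range(2)] for i in range(2)]
gram = matmul(q_t, q)
print("Q^T Q =", gram)
```

A small step size keeps the resulting matrix close to the identity, which is the multiplicative analogue of taking a small additive step. Whether Pion uses this construction, a matrix exponential, or something else is a question for the full paper.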

What the paper actually shows

The empirical claim is modest but relevant: Pion is described as a stable and competitive alternative to standard optimizers for both LLM pretraining and finetuning. That is the main result available in the abstract and notes.

There are no benchmark tables, accuracy numbers, throughput figures, or scaling curves in the provided source material. So while the paper says the method is competitive, this summary cannot tell you by how much, on which tasks, or under which exact settings. If you need those details, you would have to read the full paper.

What we do know is that the authors did not stop at a conceptual proposal. They say they derived the update rule, examined design choices, and analyzed convergence behavior and key properties. That suggests the paper is trying to be both a theory piece and an applied optimizer paper, not just a one-off training trick.

- Pion preserves singular values during training.

- It uses left and right orthogonal transformations instead of additive updates.

- The paper claims stability and competitiveness for LLM pretraining and finetuning.

- No benchmark numbers are provided in the abstract or notes.

Why developers should care

If you train or fine-tune LLMs, optimizers are not just plumbing. They shape convergence, stability, and in some cases the kind of representations a model ends up learning. Pion is interesting because it changes the optimization primitive itself: instead of directly adding updates, it preserves a structural invariant of the weights.

That could matter in workflows where training stability is a concern, or where preserving spectral characteristics is desirable for theoretical or practical reasons. It may also be useful as a research baseline for anyone exploring non-additive optimization methods for deep learning.

At the same time, the source material leaves open several practical questions. We do not get benchmark numbers, training cost comparisons, memory overhead, or evidence about how easy Pion is to integrate into existing stacks. We also do not know from the abstract whether the method is broadly better than standard optimizers or simply competitive in certain settings.

So the useful way to read this paper is not “replace Adam tomorrow.” It is: here is a structured optimizer that preserves a matrix property most optimizers ignore, and it appears to work well enough to be taken seriously for LLM pretraining and finetuning. For engineers, that makes it worth watching as both a theoretical direction and a possible training tool.

Bottom line

Pion is a spectrum-preserving optimizer that updates LLM weights through orthogonal transformations rather than additive steps. The paper’s main contribution is the optimization rule itself, plus convergence analysis and an empirical claim of stable, competitive behavior. The abstract does not provide benchmark numbers, so the strongest takeaway is conceptual: this is a different way to train large models while keeping singular values fixed.
