Recursive Multi-Agent Systems Could Cut Token Use

RecursiveMAS treats a whole multi-agent setup as one recursive latent computation, reporting an average accuracy gain of 8.3% and token usage reductions of 34.6% to 75.6%.

Most multi-agent systems still pass around plain text, which means every step burns tokens and loses some of the structure that made the agents useful in the first place. Recursive Multi-Agent Systems proposes a different path: make the collaboration loop recursive in latent space, so the system can refine itself without constantly translating everything back into text.

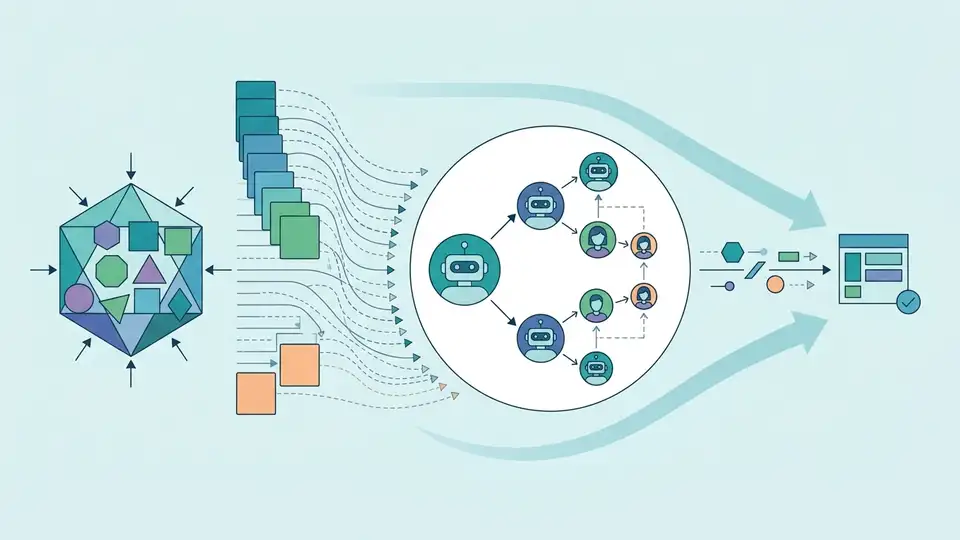

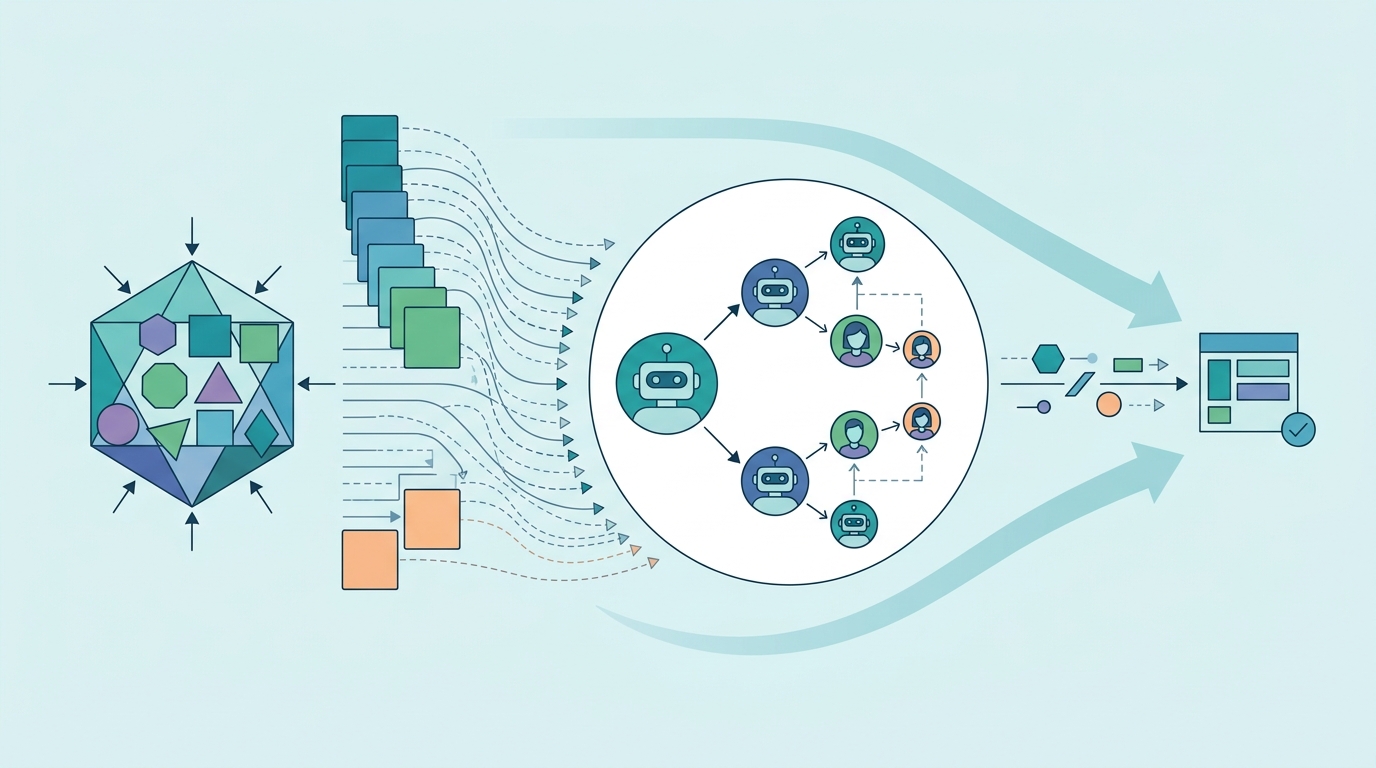

The paper’s core idea is simple to state but ambitious in practice. Instead of treating each agent as a separate text-speaking module, the authors cast the entire system as a unified recursive computation and let agents exchange latent thoughts through a lightweight connector called RecursiveLink.

What problem this paper is trying to fix

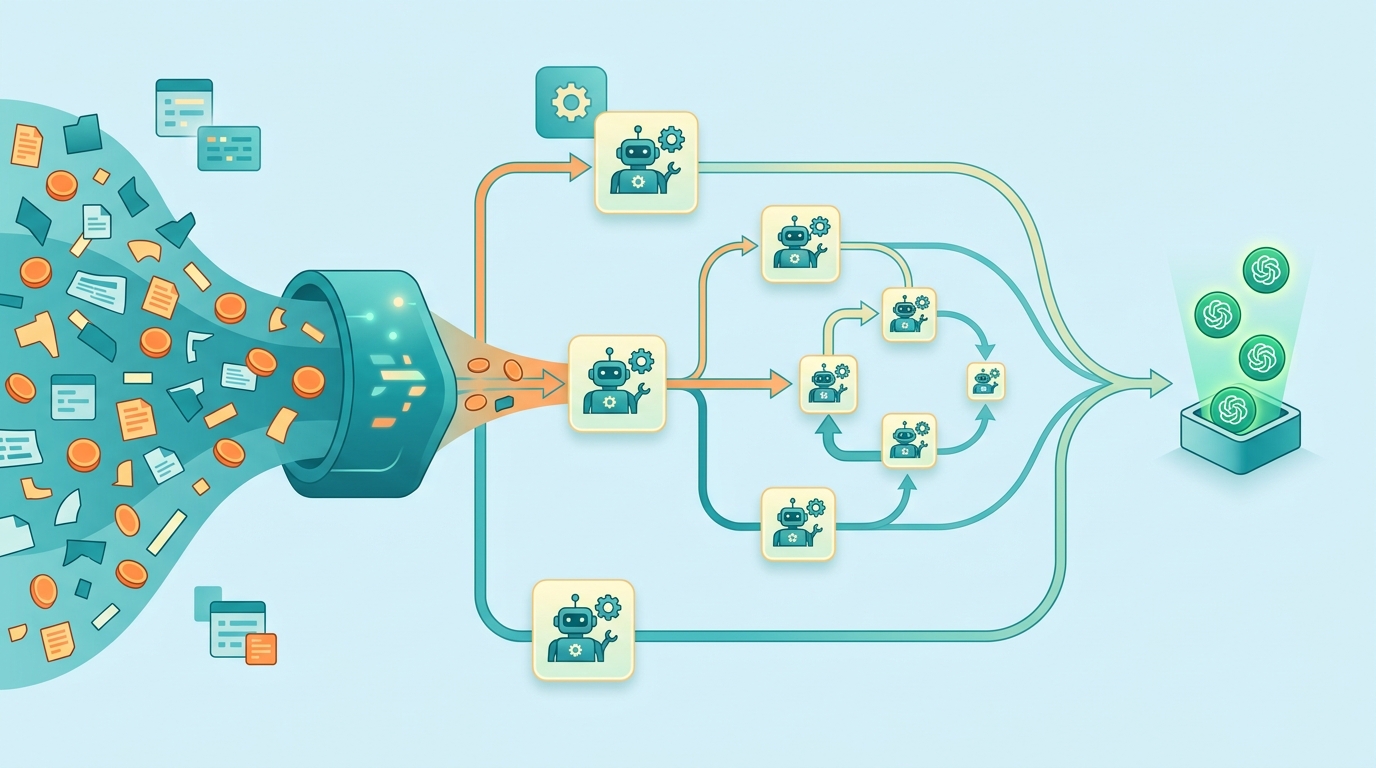

Multi-agent systems are often designed as chains or loops of text prompts. That works, but it is expensive and awkward: agents keep generating and reading tokens, and the collaboration can become slow, redundant, and hard to optimize end to end.

The authors are targeting that bottleneck directly. Their framing is that recursive language models have already shown a new scaling axis by repeatedly refining the same computation over latent states. The paper asks whether the same scaling principle can be extended from one model to many agents collaborating together.

That question matters for developers because multi-agent setups are increasingly used for math, science, medicine, search, and code generation. If the coordination layer is token-heavy, then the system pays a tax on every round of collaboration. A more compact internal representation could make these systems cheaper and faster without giving up accuracy.
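To make the "tax on every round" concrete, here is a rough token count for a text-only loop. It is a simplified model and not something taken from the paper: it assumes agents speak in turn, each message has the same length, and every agent re-reads the full transcript before writing. The function name and parameters are made up for illustration.

```python
def text_loop_tokens(agents: int, rounds: int, tokens_per_message: int) -> dict:
    """Count tokens in a simplified text-only collaboration loop.

    Assumption (not from the paper): agents take turns, and each agent
    reads the entire transcript so far before writing one message.
    """
    generated = 0   # tokens the agents write
    read = 0        # tokens the agents consume as context
    transcript = 0  # tokens accumulated in the shared transcript
    for _ in range(rounds):
        for _ in range(agents):
            read += transcript              # re-read everything written so far
            generated += tokens_per_message
            transcript += tokens_per_message
    return {"generated": generated, "read": read, "total": generated + read}


if __name__ == "__main__":
    for rounds in (2, 4, 8):
        print(rounds, text_loop_tokens(agents=3, rounds=rounds, tokens_per_message=200))
```

Under these assumptions, generated tokens grow linearly with the number of rounds while read tokens grow roughly quadratically, which is why long text-based loops get expensive quickly.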

How RecursiveMAS works in plain English

The proposed framework is called RecursiveMAS. At a high level, it turns a multi-agent collaboration into a recursive loop in latent space rather than a back-and-forth of text messages.

The key component is RecursiveLink, described as a lightweight module that connects heterogeneous agents. It enables two things the paper emphasizes: in-distribution latent thought generation and cross-agent latent state transfer. In practical terms, that means one agent can hand off a compact internal state to another agent without forcing the whole exchange through verbose natural language.
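The abstract does not describe RecursiveLink's internals, so the following is only a minimal sketch of the general idea of cross-agent latent state transfer. It assumes the connector is a plain linear map between two agents with different hidden sizes, and it stands in a `tanh` for each agent's internal update. `LinearLink`, `agent_step`, and `recursive_handoff` are hypothetical names, and the sketch does not attempt the in-distribution latent thought generation the paper mentions.

```python
import math
import random


class LinearLink:
    """Hypothetical connector: a linear map from one agent's hidden size to another's.

    This is an illustrative stand-in, not the paper's RecursiveLink.
    """

    def __init__(self, src_dim: int, dst_dim: int, seed: int = 0):
        rng = random.Random(seed)
        scale = 1.0 / math.sqrt(src_dim)
        self.src_dim = src_dim
        self.dst_dim = dst_dim
        # One row of weights per output dimension.
        self.weight = [
            [rng.gauss(0.0, scale) for _ in range(src_dim)] for _ in range(dst_dim)
        ]

    def transfer(self, state: list) -> list:
        """Project a latent state from the source agent's space to the target's."""
        if len(state) != self.src_dim:
            raise ValueError(f"expected {self.src_dim} values, got {len(state)}")
        return [sum(w * s for w, s in zip(row, state)) for row in self.weight]


def agent_step(state: list) -> list:
    """Stand-in for an agent's internal update; a real agent would be a language model."""
    return [math.tanh(v) for v in state]


def recursive_handoff(state_a: list, a_to_b: LinearLink, b_to_a: LinearLink, rounds: int) -> list:
    """Pass a latent state A -> B -> A for several rounds without producing any text."""
    for _ in range(rounds):
        state_b = agent_step(a_to_b.transfer(state_a))
        state_a = agent_step(b_to_a.transfer(state_b))
    return state_a


if __name__ == "__main__":
    a_to_b = LinearLink(src_dim=8, dst_dim=4, seed=1)
    b_to_a = LinearLink(src_dim=4, dst_dim=8, seed=2)
    print(recursive_handoff([0.5] * 8, a_to_b, b_to_a, rounds=3))
```

The point of the sketch is the shape of the exchange: each handoff is a fixed-size vector regardless of how much "reasoning" it encodes, whereas a text handoff grows with the length of the message.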

The system is also trained differently from a standard multi-agent pipeline. The authors develop an inner-outer loop learning algorithm that performs iterative whole-system co-optimization. The goal is to share gradient-based credit assignment across recursion rounds, so the system can learn how each round of collaboration contributes to the final outcome.
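The abstract gives no pseudocode for the inner-outer loop algorithm, so the toy below is only one way to picture gradient-based credit assignment across recursion rounds. It assumes a scalar latent state, a single weight `w` shared by every round, and a hand-derived backward pass. Treating the unrolled rounds as the "inner loop" and the weight updates as the "outer loop" is this sketch's reading, not the authors' definition.

```python
def forward(w: float, x: float, h0: float, rounds: int) -> list:
    """Inner loop (assumed): refine a scalar state for a fixed number of rounds."""
    states = [h0]
    for _ in range(rounds):
        h = states[-1]
        states.append(h + w * (x - h))  # every round reuses the same weight w
    return states


def loss_and_round_grads(w: float, x: float, y: float, h0: float, rounds: int):
    """Squared-error loss on the final state, plus each round's share of dL/dw."""
    states = forward(w, x, h0, rounds)
    loss = (states[-1] - y) ** 2
    upstream = 2.0 * (states[-1] - y)  # dL/dh at the final round
    grads = [0.0] * rounds
    for r in reversed(range(rounds)):
        grads[r] = upstream * (x - states[r])  # round r's direct contribution to dL/dw
        upstream *= (1.0 - w)                  # chain rule: dh_{r+1}/dh_r = 1 - w
    return loss, grads


def train(x: float, y: float, h0: float = 0.0, rounds: int = 3,
          steps: int = 500, lr: float = 0.05, w: float = 0.1) -> float:
    """Outer loop (assumed): gradient descent on the shared weight."""
    for _ in range(steps):
        _, grads = loss_and_round_grads(w, x, y, h0, rounds)
        w -= lr * sum(grads)  # the total gradient is the sum over rounds
    return w


if __name__ == "__main__":
    loss, grads = loss_and_round_grads(w=0.3, x=1.0, y=0.875, h0=0.0, rounds=3)
    print("loss:", loss, "per-round gradient shares:", grads)
    print("trained w:", train(x=1.0, y=0.875))
```

In this toy, the final state after three rounds is `1 - (1 - w)**3` when `x = 1.0` and `h0 = 0.0`, so a target of `0.875` is matched exactly at `w = 0.5`, and training moves `w` toward that value. The per-round gradient list is the part worth looking at: it shows how much each recursion round pulled on the shared parameter.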

That training detail is important. In many multi-agent systems, it is hard to tell which agent or which step deserves credit for a good result. RecursiveMAS tries to make the collaboration itself trainable as one unified process, rather than a loose collection of prompt templates.

What the paper actually shows

The paper includes theoretical analyses of runtime complexity and learning dynamics. According to the abstract, these analyses show that RecursiveMAS is more efficient than standard text-based multi-agent systems and that it maintains stable gradients during recursive training.

On the empirical side, the authors instantiate RecursiveMAS under four representative agent collaboration patterns and test it across nine benchmarks covering mathematics, science, medicine, search, and code generation. The abstract does not list the individual benchmark names or per-benchmark scores, so those details are not covered here.

What the abstract does give are the headline results. Compared with advanced single-agent, multi-agent, and recursive computation baselines, RecursiveMAS delivers an average accuracy improvement of 8.3%. It also achieves 1.2× to 2.4× end-to-end inference speedup, along with 34.6% to 75.6% token usage reduction.

Those are the kinds of numbers practitioners will notice. An 8.3% average accuracy gain is meaningful on its own, but the bigger operational story may be the token savings. In systems that run many reasoning steps or coordinate multiple agents, cutting token usage by roughly a third to three-quarters can change the economics of deployment.

- Average accuracy improvement: 8.3%
- Inference speedup: 1.2×–2.4×
- Token usage reduction: 34.6%–75.6%
- Benchmarks: 9 total across math, science, medicine, search, and code
- Agent patterns tested: 4 representative collaboration patterns
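As a back-of-the-envelope check, the reported ranges can be applied to a workload of your own. The helper below is a hypothetical convenience function, and the baseline figures in the usage line are invented for illustration. The ranges are what the abstract reports across its benchmarks and baselines, so nothing guarantees a given deployment will land inside them.

```python
TOKEN_REDUCTION = (0.346, 0.756)  # reported range: 34.6% to 75.6%
SPEEDUP = (1.2, 2.4)              # reported range: 1.2x to 2.4x


def projected_range(baseline_tokens: float, baseline_seconds: float) -> dict:
    """Apply the reported ranges to a baseline; returns (best case, worst case) pairs."""
    low_cut, high_cut = TOKEN_REDUCTION
    slow, fast = SPEEDUP
    return {
        # Largest reduction gives the fewest tokens, smallest reduction the most.
        "tokens": (baseline_tokens * (1 - high_cut), baseline_tokens * (1 - low_cut)),
        # Largest speedup gives the shortest time, smallest speedup the longest.
        "seconds": (baseline_seconds / fast, baseline_seconds / slow),
    }


if __name__ == "__main__":
    # Invented baseline: 10,000 tokens and 12 seconds per query.
    result = projected_range(baseline_tokens=10_000, baseline_seconds=12.0)
    print({key: tuple(round(v, 2) for v in pair) for key, pair in result.items()})
```

Multiplying the token figures by your per-token price and query volume turns this into a rough budget comparison.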

Why developers should care

If you build agentic systems, the main takeaway is not just that recursion is fashionable. It is that the collaboration layer itself may be something you can optimize as a first-class computation, rather than treating it as a pile of prompts and message passing.

That could matter in several ways. First, smaller token footprints can reduce cost and latency. Second, latent-state transfer may preserve useful structure that gets diluted when agents must explain everything in text. Third, whole-system co-optimization may make it easier to improve the behavior of the full collaboration loop instead of tuning agents in isolation.

There is also a design implication: heterogeneous agents do not have to be forced into a single text-only interface if the system can provide a shared latent collaboration channel. For teams building orchestration layers, that suggests a possible future where the “agent bus” is not just messages, but a learned internal state exchange.

Limits and open questions

The abstract is promising, but it leaves a lot unsaid. We do not get the exact benchmark list, the per-task results, the model sizes, or the implementation details behind the four collaboration patterns. Without that, it is hard to know how broadly the gains transfer across different agent stacks.

There is also an important practical question: latent-space collaboration can be powerful, but it may be harder to inspect and debug than text-based agent traces. Developers often rely on text logs to understand why a system made a bad decision. A more compact internal representation may improve efficiency while making observability more difficult.

Another open question is how RecursiveMAS behaves outside the reported benchmark suite. The paper spans math, science, medicine, search, and code generation, which is a good spread, but the abstract does not tell us whether the approach is equally strong on long-horizon planning, tool use, or noisy real-world workflows.

Still, the direction is clear: the paper argues that multi-agent collaboration itself can be scaled recursively, not just individual model depth. If the full paper backs up the abstract, RecursiveMAS could point toward a more efficient generation of agent systems that reason and coordinate with less text, less latency, and more end-to-end trainability.

For now, the most useful takeaway is practical: if you are designing multi-agent infrastructure, it may be worth thinking beyond prompt chains. Recursive latent collaboration could become a real optimization target, especially when token cost and inference speed matter as much as raw accuracy.
