Geometry Matters: Understanding Neural Networks Through Manifolds

Researchers use Riemannian geometry to analyze the intrinsic structure of neural network representations, revealing patterns hidden by traditional similarity metrics.

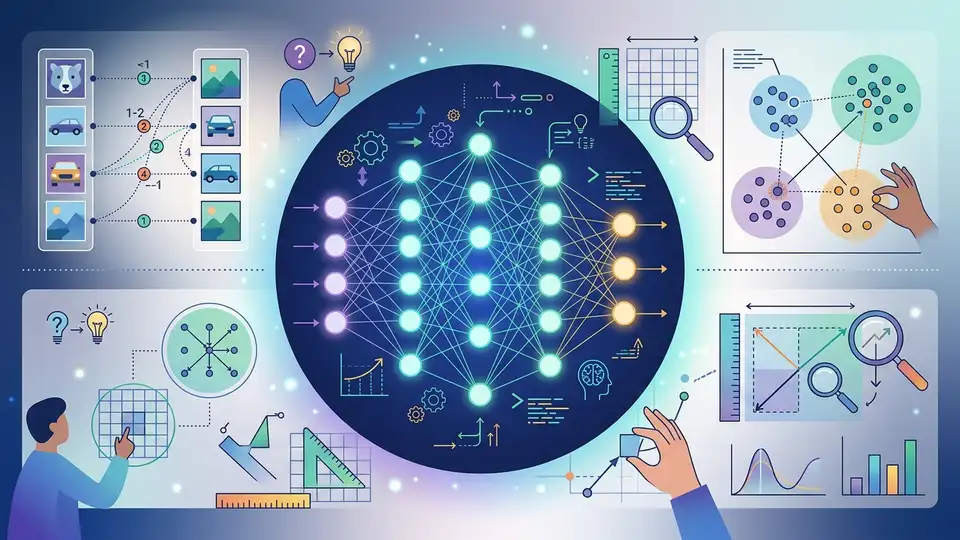

Neural networks are mysterious in their success. We train them, they generalize, and we mostly shrug and accept that it works. But underneath that surface-level success lies a geometric reality: the way neural networks arrange information in high-dimensional space matters as much as the patterns they capture.

N Alex Cayco Gajic and Arthur Pellegrino from UC Berkeley wanted to understand this geometry more deeply. Rather than asking "what do these representations look like" (extrinsic geometry), they asked "what is their fundamental internal structure" (intrinsic geometry). Their answer: metric similarity analysis, a framework that uses differential geometry to compare neural representations in ways traditional methods miss.

The resulting paper, submitted to arXiv on March 30, 2026, opens a door to understanding why some neural networks trained differently end up with fundamentally different internal geometries—even when they produce similar outputs.

The Limits of Classical Similarity Metrics

When researchers compare neural network representations, they typically ask: are two vectors similar? This seems reasonable until you realize it depends entirely on how you measure similarity. Euclidean distance (the straight-line metric) works in some contexts. Cosine similarity works in others. But both ignore something crucial: the intrinsic geometry of the representation space.
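To see how much the choice of measure matters, here is a minimal sketch in plain Python. The three toy vectors and their names are made up for illustration. Euclidean distance and cosine similarity disagree about which vector is the closest match to the query:

```python
import math

def euclidean_distance(u, v):
    """Straight-line distance between two vectors."""
    return math.dist(u, v)

def cosine_similarity(u, v):
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.hypot(*u) * math.hypot(*v))

# Hypothetical toy vectors, chosen only to make the disagreement obvious.
query = (1.0, 0.0)
same_direction = (10.0, 0.0)   # points the same way as query, but far away
nearby = (1.0, 1.0)            # close to query, but points 45 degrees off

for name, vec in [("same_direction", same_direction), ("nearby", nearby)]:
    print(name,
          "euclidean:", round(euclidean_distance(query, vec), 3),
          "cosine:", round(cosine_similarity(query, vec), 3))
```

Euclidean distance ranks `nearby` as the better match, while cosine similarity ranks `same_direction` first. Neither answer says anything about the shape of the space the vectors live in.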

Think of it this way: two cities might be equidistant from a reference point as the crow flies, but if they sit on opposite sides of a mountain range, the routes that actually reach them are very different. Classical metrics capture the distance; they miss the landscape.

Neural network representations are like those cities. The abstract space they inhabit has structure: curvature, dimensionality, and geodesic distances (shortest paths along the underlying manifold). Standard metrics treat that space as flat and featureless, discarding how the representations are actually organized.
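The gap between straight-line and geodesic distance is easy to check on the simplest curved space, a unit sphere. This is an illustrative sketch only, not code from the paper. The function names are assumptions, and a sphere stands in for whatever manifold a real representation occupies:

```python
import math

def chord_distance(a, b):
    """Euclidean distance, cutting straight through the sphere."""
    return math.dist(a, b)

def great_circle_distance(a, b, radius=1.0):
    """Geodesic distance: the shortest path that stays on the sphere."""
    cos_angle = sum(x * y for x, y in zip(a, b)) / radius**2
    cos_angle = max(-1.0, min(1.0, cos_angle))  # guard against rounding
    return radius * math.acos(cos_angle)

a = (1.0, 0.0, 0.0)
b = (0.0, 1.0, 0.0)    # a quarter turn away from a
c = (-1.0, 0.0, 0.0)   # the point opposite a

for name, p in [("a to b", b), ("a to c", c)]:
    print(name,
          "chord:", round(chord_distance(a, p), 4),
          "geodesic:", round(great_circle_distance(a, p), 4))
```

The chord always underestimates the path along the surface, and the gap widens as the points move apart: for opposite points the chord is 2 while the geodesic is π.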

Metric Similarity Analysis: A Differential Geometry Approach

Cayco Gajic and Pellegrino's MSA framework grounds itself in Riemannian geometry, a branch of differential geometry that extends distance and angle concepts to curved spaces. Instead of asking "how far apart are these vectors in Euclidean space," MSA asks "what is the intrinsic geometry of the manifold they inhabit, and how do those geometries compare?"
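One standard way to make "intrinsic geometry" concrete is the pullback metric: if a smooth map f sends low-dimensional coordinates into a higher-dimensional space, the matrix g = JᵀJ (with J the Jacobian of f) records how lengths and angles are measured on the embedded surface. The sketch below is an assumption-laden illustration, not the paper's implementation. It uses a sphere parametrization as a stand-in for a network layer, and `sphere_embedding`, `jacobian_columns`, and `pullback_metric` are hypothetical helper names:

```python
import math

def sphere_embedding(theta, phi):
    """Stand-in for a representation map: 2 coordinates -> 3-D point."""
    return [math.sin(theta) * math.cos(phi),
            math.sin(theta) * math.sin(phi),
            math.cos(theta)]

def jacobian_columns(f, coords, eps=1e-6):
    """Central-difference partial derivatives of f, one column per coordinate."""
    columns = []
    for i in range(len(coords)):
        plus, minus = list(coords), list(coords)
        plus[i] += eps
        minus[i] -= eps
        f_plus, f_minus = f(*plus), f(*minus)
        columns.append([(p - m) / (2 * eps) for p, m in zip(f_plus, f_minus)])
    return columns

def pullback_metric(f, coords):
    """g[i][j] = dot(column_i, column_j), i.e. the matrix J^T J."""
    cols = jacobian_columns(f, coords)
    return [[sum(a * b for a, b in zip(ci, cj)) for cj in cols] for ci in cols]

g = pullback_metric(sphere_embedding, [math.pi / 3, 0.7])
for row in g:
    print([round(x, 6) for x in row])
```

For the unit sphere the exact answer is known, g = diag(1, sin²θ), so the numerical result can be checked by hand. Swapping `sphere_embedding` for any other smooth map gives that map's metric at the chosen point.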

The manifold hypothesis—the belief that high-dimensional data lies on lower-dimensional manifolds—underpins much of modern machine learning. But it's often left as an abstract assumption. MSA makes it concrete by actually measuring manifold properties: curvature, dimensionality, and intrinsic distances.

The technique leverages tools from differential geometry to compute properties like the Ricci curvature tensor, which captures how a manifold curves in different directions. Two representations might look similar under classical metrics but exhibit entirely different intrinsic curvatures, suggesting fundamentally different computational structures.
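Curvature can be read off from measurements made entirely inside a surface. In two dimensions, where the Ricci tensor reduces to the Gaussian curvature K times the metric, a classical result says that a small geodesic circle of radius r has circumference C with K ≈ 3(2πr − C) / (πr³). The sketch below applies that formula to a sphere and to a flat plane. It illustrates the idea of intrinsic curvature only; how MSA estimates curvature on real representations is not described here, and the function names are assumptions:

```python
import math

def geodesic_circle_circumference(r, sphere_radius):
    """Circumference of a circle of geodesic radius r drawn on a sphere."""
    return 2 * math.pi * sphere_radius * math.sin(r / sphere_radius)

def curvature_from_circle(r, circumference):
    """Small-circle estimate of Gaussian curvature: 3(2*pi*r - C) / (pi*r^3)."""
    return 3 * (2 * math.pi * r - circumference) / (math.pi * r**3)

R = 2.0    # sphere radius; the true curvature is 1 / R**2
r = 0.05   # radius of the small test circle

K_sphere = curvature_from_circle(r, geodesic_circle_circumference(r, R))
K_flat = curvature_from_circle(r, 2 * math.pi * r)  # a flat-plane circle

print("sphere estimate:", K_sphere, "exact:", 1 / R**2)
print("flat estimate:", K_flat)
```

On the sphere the circle comes up short of 2πr and the estimate lands close to 1/R²; on the plane there is no shortfall and the estimate is zero.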

Three Experimental Domains

The researchers tested MSA across three settings where understanding intrinsic geometry matters:

Deep networks under varying conditions: When networks are trained with different initialization seeds, learning rates, or data augmentation strategies, they converge to different learned representations. Classical metrics might show they're "similar enough." MSA reveals whether the underlying computational manifolds are actually isomorphic or genuinely different in structure.

Nonlinear dynamical systems: Understanding the geometry of phase-space trajectories is crucial for predicting system behavior. MSA provides tools to compare the intrinsic geometry of trajectories across different parameter regimes, revealing when systems undergo fundamental reorganization versus when they merely shift scale.

Diffusion models: As diffusion models generate images through iterative refinement, the representation geometry evolves. MSA can track whether representations at different timesteps lie on the same underlying manifold or transition between qualitatively different geometric structures. This matters for understanding where generative capacity comes from.

Why Geometry Captures What Metrics Miss

Classical similarity metrics are oblivious to manifold structure. Imagine two high-dimensional spaces that are topologically identical but have different intrinsic curvatures. A pair of points might be the same straight-line distance apart in both, yet the geodesic distance between them (the shortest path along the manifold) differs, so any computation that follows the manifold behaves differently in the two spaces.

This distinction isn't academic. It has real implications: two networks with "similar" representations (by classical standards) might learn completely different decision boundaries if their underlying manifolds have different curvatures. MSA detects these structural differences, revealing when two representations are truly similar versus merely superficially close.

The framework also handles cases where manifold dimension varies. A representation might concentrate on a low-dimensional submanifold in one case and spread across higher dimensions in another, even though point-wise distances look similar. MSA distinguishes these scenarios by measuring intrinsic dimensionality.
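Intrinsic dimensionality can be estimated from nearest-neighbor distances alone. The sketch below uses the maximum-likelihood form of the two-nearest-neighbor (TwoNN) estimator, a general-purpose method swapped in here for illustration; it is not claimed to be what MSA uses. Both synthetic datasets live in five ambient dimensions, but one is a curved two-dimensional sheet. The dataset generators and sample sizes are assumptions:

```python
import heapq
import math
import random

def two_nn_dimension(points):
    """TwoNN maximum-likelihood estimate: N / sum(log(r2 / r1)),
    where r1 and r2 are each point's two nearest-neighbor distances."""
    log_ratios = []
    for i, p in enumerate(points):
        others = (math.dist(p, q) for j, q in enumerate(points) if j != i)
        r1, r2 = heapq.nsmallest(2, others)
        log_ratios.append(math.log(r2 / r1))
    return len(points) / sum(log_ratios)

rng = random.Random(0)  # fixed seed so the run is repeatable

def curved_sheet(n):
    """2-D sheet bent into 5 ambient dimensions (synthetic example)."""
    pts = []
    for _ in range(n):
        u, v = rng.random(), rng.random()
        pts.append((u, v, math.sin(u), math.cos(v), u * v))
    return pts

def full_cloud(n):
    """Points filling a 5-D cube (synthetic example)."""
    return [tuple(rng.random() for _ in range(5)) for _ in range(n)]

sheet_dim = two_nn_dimension(curved_sheet(500))
cloud_dim = two_nn_dimension(full_cloud(500))
print("sheet:", round(sheet_dim, 2), "cloud:", round(cloud_dim, 2))
```

The sheet's estimate should come out near 2 and the cloud's noticeably higher, even though every point in both sets has five coordinates. The estimate is noisy at small sample sizes, so treat it as a rough diagnostic rather than an exact count.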

Implications for Neural Network Research

If MSA successfully captures intrinsic geometry, it offers a more principled way to ask: what makes a good representation? Current answers rely on downstream task performance—if a learned representation produces good results, we call it good. But MSA suggests a deeper criterion: representations should organize information on well-structured, interpretable manifolds.

This could inform architecture design. Perhaps layers that deform representation manifolds excessively (introducing unnecessary curvature) are undesirable. Perhaps skip connections work partly because they preserve manifold structure. Perhaps attention mechanisms succeed because they adapt manifold geometry to the current task.

Understanding representation geometry also matters for transfer learning. If pre-trained representations use "nice" manifold structures—ones that generalize across tasks—that could explain why pre-training helps. Conversely, if fine-tuning deforms the pre-trained manifold too severely, it might destroy transfer capability.

Connections to Broader Theory

MSA connects to longstanding questions in machine learning theory. The manifold hypothesis assumes data concentrates on low-dimensional manifolds. MSA provides tools to verify and quantify this. The implicit bias of gradient descent—why neural networks learn generalizing solutions—might partly reflect learned manifold geometry. MSA offers a lens to investigate.

The work also touches information geometry, the study of spaces of probability distributions via geometric tools. If neural representations encode probability distributions (a common assumption in generative models), their geometric properties encode information about probability structure. MSA bridges these perspectives.

Methodological Considerations

One challenge is computational cost. Measuring Riemannian properties requires careful numerical computation. The paper addresses this, but practitioners implementing MSA will need to contend with numerical stability issues when dealing with very high-dimensional representations.

Another question is interpretability. MSA reveals geometric differences, but translating those differences into actionable insights requires domain expertise. A representation with high Ricci curvature might be "bad" in some contexts and "good" in others depending on downstream tasks.

Future Directions

The natural next step is systematic application to modern architectures: transformers, vision models, multimodal systems. Do attention-based architectures produce representations with characteristic geometric properties? Do certain design choices (layer norms, skip connections, positional encodings) predictably shape manifold structure?

There's also potential to develop geometry-aware learning algorithms—methods that explicitly optimize for good manifold properties during training. If network geometry correlates with generalization, geometry-aware training could improve efficiency and robustness.

For practitioners, MSA offers a diagnostic tool. When you have two representations that look similar classically but perform differently in production, MSA can reveal geometric differences that explain the gap. As neural networks move into higher-stakes applications, this deeper understanding of representation structure becomes more valuable.

To explore this work further, check the arXiv paper on Riemannian geometry for neural representations, along with related research on geometric approaches to representation learning. The connections to information geometry and the manifold hypothesis run deep, offering rich ground for future investigation.
