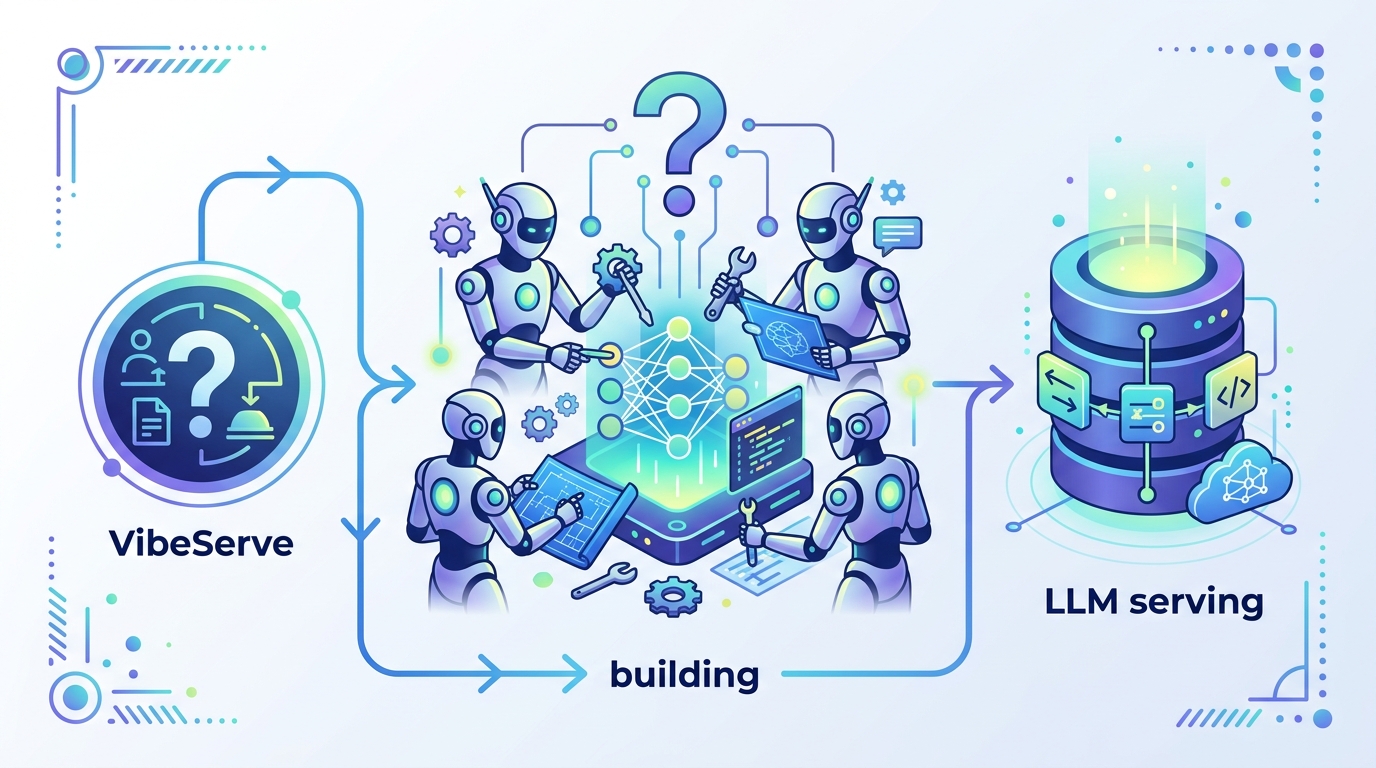

VibeServe asks if AI agents can build LLM serving

VibeServe explores whether AI agents can assemble bespoke LLM serving systems, but the provided notes do not include benchmark results.

The paper “VibeServe: Can AI Agents Build Bespoke LLM Serving Systems?” asks a practical question that matters to anyone deploying models in production: can AI agents help construct serving stacks tailored to a specific workload, instead of forcing teams into a one-size-fits-all setup? The notes available for this summary cover only the paper’s topic and authors, so the safest takeaway is also the most honest one: this is a research paper about the feasibility of agent-built serving systems, not a product announcement with ready-made performance claims.

What problem this paper is trying to fix

LLM serving is not a single problem. Different teams care about different tradeoffs: latency, throughput, cost, batching behavior, routing, memory pressure, and how the system behaves under changing traffic patterns. A generic serving stack can work, but it may leave performance on the table when the workload has specific constraints. That is the gap this paper is trying to explore.

The title suggests the authors are asking whether AI agents can help build these bespoke systems automatically or semi-automatically. For engineers, that is interesting because the hard part of serving is often not just running a model, but shaping the infrastructure around it. If agents can reason about those choices, they could reduce the time spent hand-tuning serving pipelines.

At a high level, the paper sits at the intersection of two fast-moving areas: LLM systems engineering and agentic automation. The question is not whether agents can write code in general, but whether they can meaningfully participate in designing a specialized serving system that fits real deployment needs.

How the method works in plain English

The source material does not provide a full abstract or method section, so we cannot claim specific architecture details, prompts, evaluation loops, or system components. What we can infer from the title alone is that the paper likely frames LLM agents as builders or assistants for serving-system construction, rather than as the serving system itself.

In plain English, that means the paper is probably looking at a workflow like this: describe the serving requirement, let an AI agent propose or assemble a system, and then assess whether the result is actually useful for the target workload. That is a very different idea from simply using an agent to generate code snippets. The target is an operational system that has to behave correctly under load.
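The describe, propose, assess workflow above can be sketched as a small loop. This is a minimal illustration only, not the paper's method: `WorkloadSpec`, `Candidate`, `Measurement`, `build_loop`, and the toy `toy_propose` and `toy_evaluate` functions are all hypothetical names invented here, and the cost model is made up so the example can run without a real model or agent.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple


@dataclass(frozen=True)
class WorkloadSpec:
    """Hypothetical description of what the deployment needs."""
    p99_latency_ms: float      # latency budget the system must stay under
    min_throughput_rps: float  # sustained requests per second required


@dataclass(frozen=True)
class Candidate:
    """Hypothetical serving configuration an agent might propose."""
    max_batch_size: int


@dataclass(frozen=True)
class Measurement:
    """What an evaluation run reports back for one candidate."""
    p99_latency_ms: float
    throughput_rps: float


History = List[Tuple[Candidate, Measurement]]


def meets_spec(spec: WorkloadSpec, m: Measurement) -> bool:
    return (m.p99_latency_ms <= spec.p99_latency_ms
            and m.throughput_rps >= spec.min_throughput_rps)


def build_loop(
    spec: WorkloadSpec,
    propose: Callable[[WorkloadSpec, History], Candidate],
    evaluate: Callable[[Candidate], Measurement],
    max_rounds: int = 5,
) -> Optional[Candidate]:
    """Propose, measure, and feed results back until the spec is met."""
    history: History = []
    for _ in range(max_rounds):
        candidate = propose(spec, history)
        measurement = evaluate(candidate)
        if meets_spec(spec, measurement):
            return candidate
        history.append((candidate, measurement))
    return None  # no candidate satisfied the spec within the budget


def toy_propose(spec: WorkloadSpec, history: History) -> Candidate:
    """Stand-in for an agent: start at batch size 1, then double it."""
    if not history:
        return Candidate(max_batch_size=1)
    return Candidate(max_batch_size=history[-1][0].max_batch_size * 2)


def toy_evaluate(c: Candidate) -> Measurement:
    """Made-up cost model: bigger batches raise both latency and throughput."""
    return Measurement(
        p99_latency_ms=20.0 + 5.0 * c.max_batch_size,
        throughput_rps=25.0 * c.max_batch_size,
    )


spec = WorkloadSpec(p99_latency_ms=100.0, min_throughput_rps=150.0)
chosen = build_loop(spec, toy_propose, toy_evaluate)
print(chosen)
```

In this toy setup the loop stops at the first batch size that satisfies both limits. The point of the sketch is the shape of the loop: the evaluation step, not the proposal step, decides whether a candidate counts as a working system.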

For developers, the key distinction is that “bespoke serving” implies adaptation. Instead of relying on a fixed architecture, the system is tuned for a particular use case. If AI agents can do that reliably, they might become a new layer in the deployment toolchain, especially for teams that do not want to handcraft every optimization from scratch.

What the paper actually shows

Based on the raw notes provided here, there are no benchmark numbers, no reported latency figures, no throughput measurements, and no comparison table we can cite. The notes also do not include the paper’s experimental setup, so we should not invent results or imply a level of validation that is not visible in the source.

That does not make the paper uninteresting. It just means the current material only confirms the paper’s scope: it is a research investigation into whether AI agents can build custom LLM serving systems. The real value will be in the paper’s experiments, if any, and in how the authors define success for this kind of system-building task.

The most accurate summary, then, is that the paper studies the idea, while the supporting evidence is not visible in the notes we have. For an engineering audience, that is an important limitation, because a serving system is only as good as its measured behavior under load.
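To make “measurable behavior under load” concrete, here is a minimal sketch of how latency percentiles and throughput are commonly derived from per-request timestamps. It says nothing about how the paper evaluates its systems: `RequestTiming`, `percentile`, and `summarize` are hypothetical helpers, the timings are invented sample data, and the nearest-rank percentile is just one of several common definitions.

```python
import math
from dataclasses import dataclass
from typing import Dict, Sequence


@dataclass(frozen=True)
class RequestTiming:
    """Start and end time of one request, in seconds (hypothetical record)."""
    start_s: float
    end_s: float


def percentile(values: Sequence[float], pct: float) -> float:
    """Nearest-rank percentile: smallest value with at least pct% of the
    data at or below it."""
    if not values:
        raise ValueError("values must not be empty")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(values)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[rank - 1]


def summarize(timings: Sequence[RequestTiming]) -> Dict[str, float]:
    """Reduce raw timings to the numbers a serving comparison usually cites."""
    latencies_ms = [(t.end_s - t.start_s) * 1000.0 for t in timings]
    # Throughput over the whole run: completed requests per wall-clock second.
    wall_s = max(t.end_s for t in timings) - min(t.start_s for t in timings)
    return {
        "p50_ms": percentile(latencies_ms, 50),
        "p99_ms": percentile(latencies_ms, 99),
        "throughput_rps": len(timings) / wall_s,
    }


# Invented sample data: four requests over a two-second window.
sample = [
    RequestTiming(0.0, 0.25),
    RequestTiming(0.5, 0.625),
    RequestTiming(1.0, 1.5),
    RequestTiming(1.5, 2.0),
]
summary = summarize(sample)
print(summary)
```

Running the same summary over an agent-built system and a manually engineered baseline, under the same traffic, is the kind of side-by-side comparison the notes do not let us report.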

Why developers should care

If this line of research works, it could change how teams approach LLM infrastructure. Today, building a good serving stack often requires specialized systems knowledge: how requests are batched, how memory is managed, how to keep latency predictable, and how to match the stack to the model and traffic pattern. That expertise is valuable, but it is also expensive and time-consuming.

An agent that can help assemble bespoke serving systems could lower that barrier. Even if it does not replace human engineers, it could act as a force multiplier: proposing configurations, surfacing tradeoffs, or accelerating the first pass of system design. That would be especially useful for small teams that need production-grade behavior without a large infra staff.

There is also a broader strategic angle. As LLM workloads diversify, the “best” serving solution may increasingly depend on the exact application. A research direction like VibeServe suggests a future where system design itself becomes partially automated and more responsive to workload-specific constraints.

Limitations and open questions

The biggest limitation here is the source material itself: it does not include the abstract text, method details, or any results. So we cannot say whether the paper demonstrates a working system, a framework, a prototype, or only a concept. We also cannot tell how much human oversight is required, how robust the agent is, or whether the approach generalizes beyond a narrow set of tasks.

There are several practical questions engineers will want answered before treating this as more than an interesting idea:

- What exactly does the agent build or configure?

- How much human guidance is needed?

- How is success measured for a bespoke serving system?

- Does the approach improve latency, cost, or reliability?

- How does it compare with manually engineered baselines?

Those questions matter because infrastructure automation can fail in subtle ways. A system that looks clever in a demo may not survive real traffic, changing workloads, or operational constraints. Until the paper’s full text is available, the honest position is to treat VibeServe as a promising research direction rather than a proven recipe.

Still, the premise is timely. If AI agents can reliably participate in the construction of LLM serving systems, they could become part of the standard workflow for deploying models. That is why this paper should be on the radar of anyone building inference infrastructure, even if the current notes do not yet reveal the full technical story.
