Tag

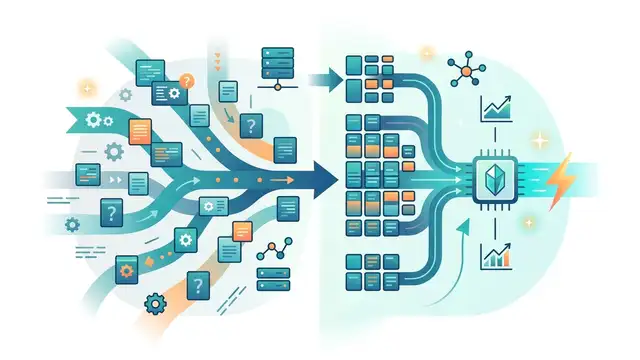

KV cache

The KV cache is the working memory that lets LLMs reuse past tokens during inference: it stores the attention keys and values of tokens already processed so they are not recomputed at every decoding step. It often becomes the main limit on context length, latency, and serving cost. This tag covers quantization, compression, HBM capacity and bandwidth trade-offs, and papers like TurboQuant.
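As a rough illustration of why the cache limits context length, its size can be estimated from the model's shape. The sketch below is a minimal Python estimate, assuming a standard transformer that keeps one key and one value vector per layer, per KV head, per token. The function name `kv_cache_bytes` and the example configuration are hypothetical and are not taken from any article under this tag.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, seq_len,
                   batch_size=1, bits_per_element=16):
    """Estimate KV cache size in bytes for a standard transformer.

    Assumption: every layer stores one key and one value vector of
    length head_dim per KV head, per token. Quantization metadata
    (scales, zero points) is ignored, so low-bit results are a lower bound.
    """
    # Factor of 2 covers keys plus values.
    elements = 2 * num_layers * num_kv_heads * head_dim * seq_len * batch_size
    return elements * bits_per_element // 8


if __name__ == "__main__":
    # Hypothetical configuration chosen only for illustration.
    config = dict(num_layers=32, num_kv_heads=8, head_dim=128, seq_len=8192)

    fp16 = kv_cache_bytes(**config)                         # 16-bit elements
    four_bit = kv_cache_bytes(**config, bits_per_element=4)  # 4-bit elements

    print(f"16-bit cache: {fp16 / 2**30:.2f} GiB")
    print(f" 4-bit cache: {four_bit / 2**30:.2f} GiB")
```

Under these assumptions the estimate grows linearly with sequence length and batch size, which is why reducing the bits stored per element is such a direct lever on memory.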

5 articles

Why TurboQuant changes the KV cache debate

TurboQuant makes KV cache compression a theoretical win, not just an engineering trick.

TurboQuant Explained: Why Google’s New Paper Matters

Google’s TurboQuant paper targets KV cache bottlenecks with lower-bit quantization, aiming to cut LLM memory use and inference costs.

TurboQuant Won’t Fix the Memory Crunch

Google’s TurboQuant can cut KV-cache memory use 6x, but longer contexts may keep DRAM and NAND demand climbing.

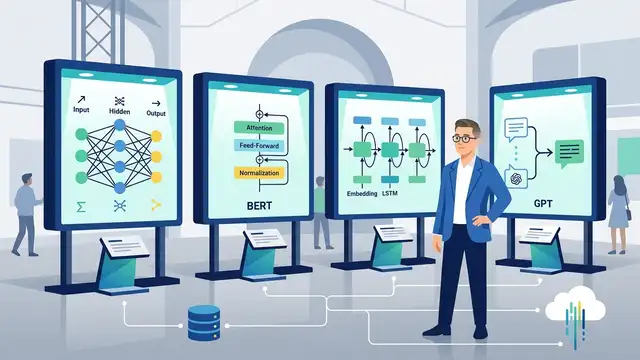

Sebastian Raschka’s LLM Architecture Gallery

Raschka’s gallery compares GPT-2, Llama 3, OLMo 2, DeepSeek, and Qwen stacks with exact layer, cache, and attention data.

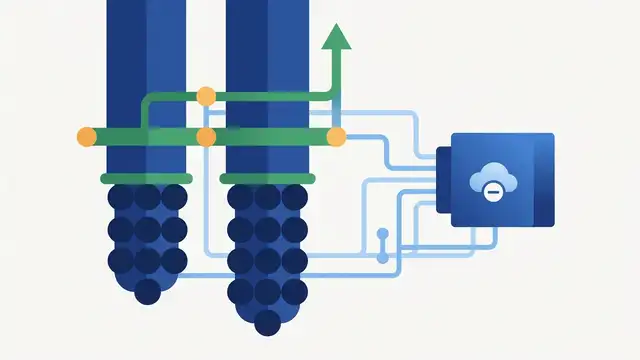

Universal YOCO aims to scale depth without cache bloat

YOCO-U mixes recursive computation with efficient attention to scale LLM depth while keeping inference overhead and KV cache growth in check.