Why TurboQuant changes the KV cache debate

TurboQuant makes KV cache compression a theoretical win, not just an engineering trick.

TurboQuant matters because it turns KV cache compression from a messy systems tradeoff into a mathematically disciplined path to lower memory use without paying the usual accuracy tax.

TurboQuant is the first compression scheme that treats overhead as the real enemy

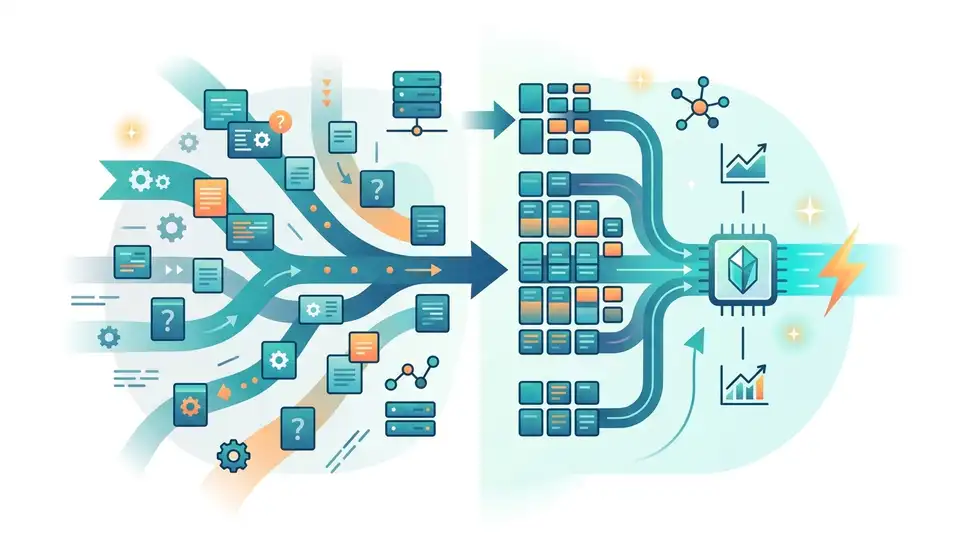

Most KV compression schemes are judged on the headline number, but the hidden cost is the bookkeeping. Classical vector quantization often needs per-block constants, scale factors, or normalization state, and that extra metadata eats into the gains. TurboQuant attacks that overhead directly by redesigning the representation, not just squeezing the numbers harder.
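To see how bookkeeping erodes a headline number, here is a minimal arithmetic sketch. The block size and the 16-bit scale and zero point are hypothetical values chosen for illustration, not figures from TurboQuant or any specific library.

```python
def effective_bits(payload_bits: int, block_size: int, metadata_bits_per_block: int) -> float:
    """Average bits stored per value once per-block metadata is counted."""
    return payload_bits + metadata_bits_per_block / block_size


# Hypothetical layout: 3-bit codes in blocks of 32 values,
# with one 16-bit scale and one 16-bit zero point per block.
with_metadata = effective_bits(payload_bits=3, block_size=32, metadata_bits_per_block=32)
without_metadata = effective_bits(payload_bits=3, block_size=32, metadata_bits_per_block=0)

# Fraction of extra storage caused purely by the metadata.
overhead_fraction = (with_metadata - without_metadata) / without_metadata

print(f"advertised: {without_metadata} bits/value, actual: {with_metadata} bits/value")
print(f"metadata overhead: {overhead_fraction:.0%}")
```

Under these assumed numbers, a nominal 3-bit scheme actually stores 4 bits per value, so a third of the advertised saving is lost to metadata.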

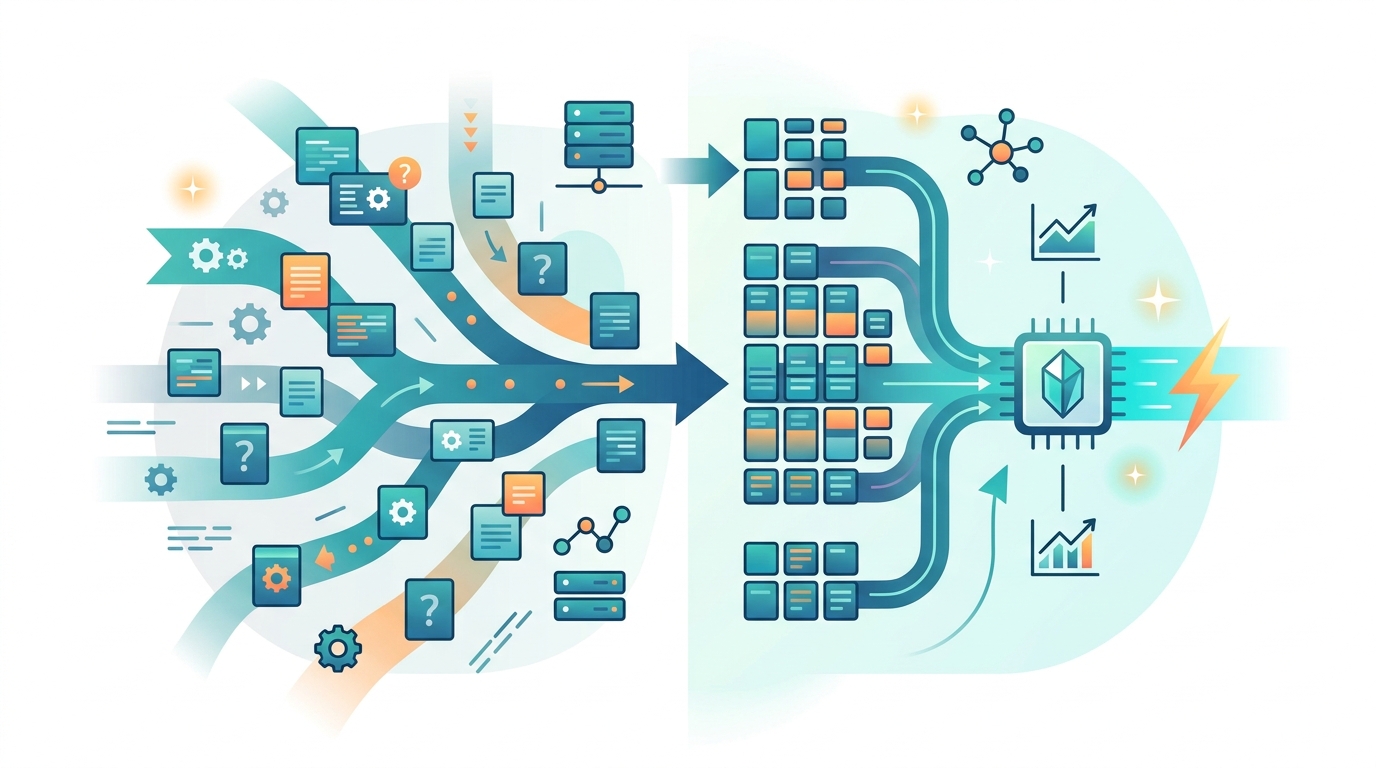

The 3-bit-level claim matters for a practical reason. If a method can bring cache storage down near that range while preserving attention quality, it changes the economics of long-context inference. In a system where memory pressure grows with every additional token of context, eliminating auxiliary storage is not a nice-to-have. It is the difference between a model that scales and one that stalls.
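A rough size estimate makes the economics concrete. The model shape below (32 layers, 8 KV heads, head dimension 128, a 131,072-token context) is a hypothetical configuration chosen for round numbers, and the 3-bit figure assumes no metadata at all.

```python
def kv_cache_bytes(num_layers: int, num_kv_heads: int, head_dim: int,
                   seq_len: int, bits_per_value: float) -> float:
    """Bytes needed to cache keys and values for one sequence."""
    # The factor of 2 accounts for storing both a key and a value per token.
    total_values = 2 * num_layers * num_kv_heads * head_dim * seq_len
    return total_values * bits_per_value / 8


GIB = 2 ** 30

# Hypothetical model shape, not taken from any specific release.
shape = dict(num_layers=32, num_kv_heads=8, head_dim=128, seq_len=131_072)

fp16_bytes = kv_cache_bytes(**shape, bits_per_value=16)
q3_bytes = kv_cache_bytes(**shape, bits_per_value=3)

print(f"16-bit cache: {fp16_bytes / GIB:.1f} GiB")  # 16.0 GiB
print(f" 3-bit cache: {q3_bytes / GIB:.1f} GiB")    # 3.0 GiB
```

Because the cache grows linearly with `seq_len`, doubling the context doubles both figures, and the gap between them widens in absolute terms.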

PolarQuant does the heavy lifting by changing the geometry

The first stage, PolarQuant, is the core innovation because it stops treating vectors as raw Cartesian objects. By applying a random rotation and moving to polar coordinates, it simplifies the geometry enough that scalar quantization becomes far more efficient. That is not cosmetic. It reduces the need for storing normalization constants and lets the compressor capture the main semantics of the vector in a compact form.
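The rotate-then-go-polar idea can be illustrated with a deliberately simplified sketch. This is not the PolarQuant algorithm itself: it assumes a single level of pairing coordinates into (radius, angle), quantizes only the angles on a uniform grid, and keeps radii at full precision. The function names and the 4-bit angle budget are hypothetical.

```python
import numpy as np


def random_rotation(dim: int, rng: np.random.Generator) -> np.ndarray:
    """Random orthogonal matrix from the QR decomposition of a Gaussian matrix."""
    q, r = np.linalg.qr(rng.standard_normal((dim, dim)))
    return q * np.sign(np.diag(r))  # sign fix; columns stay orthonormal


def polar_encode(x, rotation, angle_bits):
    """Rotate, pair up coordinates, and quantize each pair's angle."""
    y = rotation @ x
    a, b = y[0::2], y[1::2]
    radius = np.hypot(a, b)          # kept unquantized in this sketch
    angle = np.arctan2(b, a)         # in [-pi, pi]
    levels = 2 ** angle_bits
    codes = np.floor((angle + np.pi) / (2 * np.pi) * levels).astype(np.int64) % levels
    return radius, codes


def polar_decode(radius, codes, rotation, angle_bits):
    """Rebuild the vector from radii and the centers of the angle bins."""
    levels = 2 ** angle_bits
    angle = (codes + 0.5) / levels * 2 * np.pi - np.pi
    y = np.empty(2 * radius.size)
    y[0::2] = radius * np.cos(angle)
    y[1::2] = radius * np.sin(angle)
    return rotation.T @ y            # inverse of an orthogonal rotation


rng = np.random.default_rng(0)
dim, angle_bits = 128, 4
rotation = random_rotation(dim, rng)
x = rng.standard_normal(dim)

radius, codes = polar_encode(x, rotation, angle_bits)
x_hat = polar_decode(radius, codes, rotation, angle_bits)
rel_err = np.linalg.norm(x - x_hat) / np.linalg.norm(x)
print(f"relative reconstruction error: {rel_err:.3f}")
```

Note that the angle grid is fixed in advance, so no per-block scale or zero point has to be stored alongside the codes. That is the property the paragraph above is pointing at, even though this toy version still keeps the radii in full precision.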

This is the kind of move that deserves attention from anyone building retrieval-augmented generation or long-context LLM systems. The KV cache grows linearly with context length, so any method that compresses each stored vector without retraining the model has immediate system-level impact. The point is not that PolarQuant is a clever trick. It is that the geometry itself can be exploited to remove a major source of waste.

QJL is what makes the compression trustworthy

Compression schemes fail when they preserve size but distort retrieval. TurboQuant’s second stage, QJL, exists to clean up the bias left behind by the first stage. By compressing the residual error with a one-bit Johnson-Lindenstrauss transform, it acts like a mathematical correction layer that restores unbiased attention score estimation.
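A minimal sketch of a one-bit Johnson-Lindenstrauss estimator shows why the correction can be unbiased. It assumes a Gaussian projection matrix and one stored norm per vector, and it is applied here to a plain vector rather than to a first-stage residual. The function names are hypothetical and the code is not taken from any TurboQuant implementation. The estimator relies on the standard identity that, for a Gaussian row s, E[(s·q) sign(s·k)] = sqrt(2/π) (q·k)/‖k‖.

```python
import numpy as np


def qjl_encode(k: np.ndarray, proj: np.ndarray):
    """Keep only the sign bit of each projected coordinate, plus the norm of k."""
    return np.sign(proj @ k), np.linalg.norm(k)


def qjl_inner_product(q: np.ndarray, sign_bits: np.ndarray,
                      k_norm: float, proj: np.ndarray) -> float:
    """Estimate <q, k> from the sign bits; the query is projected but not quantized."""
    m = proj.shape[0]
    return float(np.sqrt(np.pi / 2) * k_norm / m * np.dot(proj @ q, sign_bits))


rng = np.random.default_rng(0)
dim, num_projections = 64, 40_000   # many projections, to make the averaging visible

# Build unit vectors k and q with a known inner product of 0.6.
k = rng.standard_normal(dim)
k /= np.linalg.norm(k)
u = rng.standard_normal(dim)
u -= (u @ k) * k                    # make u orthogonal to k
u /= np.linalg.norm(u)
q = 0.6 * k + 0.8 * u

proj = rng.standard_normal((num_projections, dim))
sign_bits, k_norm = qjl_encode(k, proj)

exact = float(q @ k)
estimate = qjl_inner_product(q, sign_bits, k_norm, proj)
print(f"exact: {exact:.3f}, one-bit estimate: {estimate:.3f}")
```

The estimate is noisy for any single projection, but its expectation equals the true inner product, so averaging over projections converges on the right attention score instead of a systematically shifted one.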

That matters because attention is unforgiving. A tiny systematic bias in inner products can cascade into worse token selection, weaker retrieval, and degraded generation quality. QJL is not there to add another aggressive squeeze. It is there to protect the integrity of the compressed representation, which is why the method feels more like a proof-driven pipeline than a standard engineering optimization.

The counter-argument

The strongest objection is simple: theoretical elegance does not guarantee deployment success. Real inference stacks are full of fused kernels, vendor-specific memory layouts, and latency constraints that do not care about clean proofs. A method can look brilliant on paper and still lose to a cruder approach that is easier to integrate, easier to debug, and easier to optimize on actual hardware.

That objection is fair, and it exposes the main limit of TurboQuant: adoption will depend on implementation quality, not just mathematics. But it does not defeat the argument. The KV cache is already one of the dominant bottlenecks in long-context systems, and a method that removes metadata overhead while preserving accuracy addresses the exact pain point that existing quantization techniques leave behind. The burden is now on implementers to prove the method works in production, not on the idea itself to justify its relevance.

What to do with this

If you are an engineer, treat TurboQuant as a signal to audit your cache pipeline for hidden overhead, not just raw bit width. If you are a PM, evaluate compression methods by end-to-end memory savings and accuracy retention on real workloads, not by compression ratio alone. If you are a founder, understand the strategic shift: the next wave of AI infrastructure advantage will come from mathematically grounded efficiency, and KV cache compression is one of the clearest places to win it.
