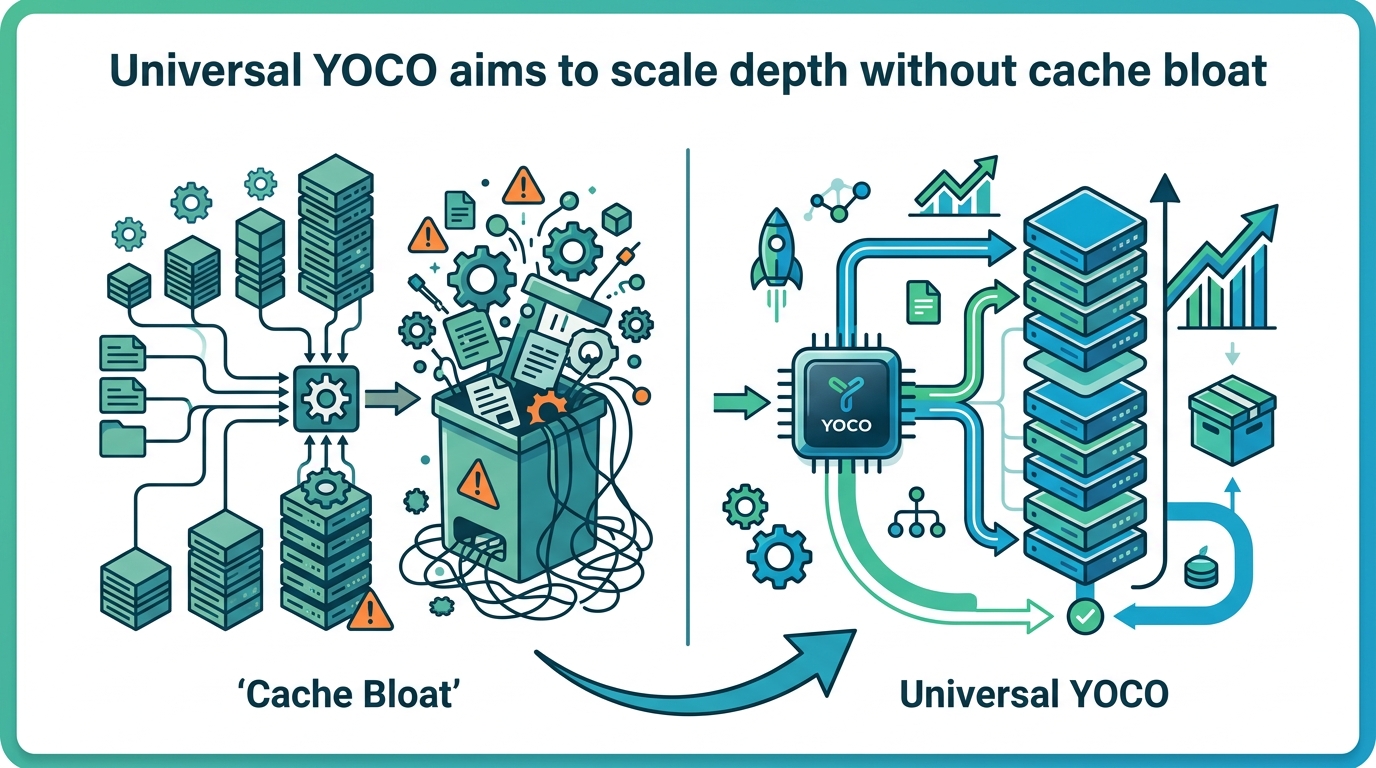

Universal YOCO aims to scale depth without cache bloat

YOCO-U mixes recursive computation with efficient attention to scale LLM depth while keeping inference overhead and KV cache growth in check.

Test-time scaling has become one of the main ways to push large language models toward better reasoning and agent behavior, but the usual Transformer playbook does not make that cheap. This paper, Universal YOCO for Efficient Depth Scaling, argues that standard looping strategies waste compute and let the KV cache grow with model depth.

The proposed answer is Universal YOCO, or YOCO-U: a system that combines the YOCO decoder-decoder architecture with recursive computation. The goal is simple to state and hard to pull off in practice: add more effective depth at inference time without paying the usual overhead tax.

What problem this paper is trying to fix

Get the latest AI news in your inbox

Weekly picks of model releases, tools, and deep dives — no spam, unsubscribe anytime.

No spam. Unsubscribe at any time.

The paper starts from a familiar limitation in LLM inference. If you want more reasoning power at test time, one common trick is to loop or iterate more. But in a standard Transformer, that quickly gets expensive. Each extra pass adds compute, and the KV cache grows alongside model depth, which makes inference heavier as you scale.

That matters for developers because test-time scaling is attractive precisely when you want to spend more compute only on harder prompts, long-context tasks, or agentic workflows. If the scaling mechanism itself becomes too costly, you lose the practical benefit. The paper frames YOCO-U as a way to keep that scaling path more efficient.

How YOCO-U works in plain English

YOCO-U builds on the YOCO framework, which the abstract describes as a decoder-decoder architecture with a constant global KV cache and linear pre-filling. In other words, the model is structured to avoid the usual cache growth pattern that makes deeper inference more expensive in standard Transformers.

On top of that, YOCO-U adds a Universal Self-Decoder that performs multiple iterations through parameter sharing. Instead of treating every pass as a fully separate chunk of computation, it reuses the same parameters across iterations. That is the “recursive computation” part of the design.

The key detail is that the recursion is not applied everywhere. The paper says it confines the iterative process to shallow, efficient-attention layers. That choice is important because it aims to get some of the representational depth benefits of recursion without pushing the full cost into the heaviest parts of the network.

Put simply: YOCO handles the cache and prefill side of the efficiency problem, while partial recursion adds depth. The paper’s claim is that the combination is better than either idea alone.

Why combining YOCO and recursion matters

The abstract is explicit that neither YOCO nor recursion alone gives the same tradeoff. YOCO provides efficient inference characteristics, but by itself it does not fully solve the need for deeper computation at test time. Recursion, meanwhile, can increase effective depth, but on its own it does not remove the overhead that comes with repeated computation.

YOCO-U is positioned as the middle ground: a model that can iterate, but only where the architecture is designed to keep those iterations relatively cheap. That makes it interesting for teams thinking about inference-time reasoning, because it suggests a route to more depth without simply stacking more expensive Transformer passes.

This is also a reminder that “more compute” is not a single knob. In practice, the cost profile depends on where that compute lands: attention layers, cache management, repeated passes, and parameter reuse all matter. YOCO-U is an attempt to shape those costs more deliberately.

What the paper actually shows

The abstract does not give benchmark numbers, so there are no concrete scores to report here. It does, however, say that empirical results show YOCO-U remains highly competitive on general benchmarks and long-context benchmarks.

That is a useful signal, but it is not the same as a full performance claim. From the abstract alone, we do not get the exact tasks, the size of the gains, the model scale, or the compute budget used for comparison. So the safest reading is that the method appears to preserve competitiveness while improving the capability-efficiency tradeoff.

The paper also claims that YOCO-U improves token utility and scaling behavior while maintaining efficient inference. Again, the abstract does not quantify those improvements, so readers should treat this as a directional result rather than a fully specified engineering recipe.

- YOCO-U uses the YOCO decoder-decoder architecture.

- It adds a Universal Self-Decoder with parameter sharing.

- Recursion is limited to shallow, efficient-attention layers.

- YOCO offers constant global KV cache and linear pre-filling.

- The paper reports competitive results on general and long-context benchmarks, but no numbers are given in the abstract.

What this means for developers

If you work on LLM systems, the practical takeaway is that inference-time scaling does not have to mean blindly repeating a full Transformer stack. YOCO-U is exploring a more selective approach: use architectural choices to keep the cache stable, then spend iterative compute only where it is likely to be cheaper and still useful.

That could matter for long-context applications, reasoning-heavy assistants, and agents where deeper test-time computation is attractive but latency and memory are real constraints. The paper is not claiming to solve all of that, but it does point toward a design that tries to make deeper inference more tractable.

At the same time, the abstract leaves several open questions. We do not know how YOCO-U behaves across different model sizes, how sensitive it is to the choice of shallow layers, or how the efficiency tradeoff changes under real deployment constraints. We also do not get benchmark details, so it is hard to compare this method against other test-time scaling strategies from the abstract alone.

Still, the direction is clear: if the industry keeps pushing toward more reasoning at inference time, architectures that separate “where depth helps” from “where depth is expensive” are likely to matter. YOCO-U is one more attempt to make that separation explicit.

Bottom line

Universal YOCO is not trying to make LLMs deeper in the usual brute-force sense. It is trying to make depth cheaper by combining a cache-efficient architecture with recursive computation in a tightly controlled part of the network.

For engineers, that makes it worth watching even without headline numbers. The paper’s core idea is practical: if test-time scaling is going to be a standard tool, it needs architectures that can absorb extra compute without turning inference into a memory and latency problem.

// Related Articles

- [RSCH]

TurboQuant and the SEO Shift for Small Sites

- [RSCH]

TurboQuant vs FP8: vLLM’s first broad test

- [RSCH]

LLMbda calculus gives agents safety rules

- [RSCH]

A simpler beamspace denoiser for mmWave MIMO

- [RSCH]

Why AI benchmark wins in cyber should scare defenders

- [RSCH]

Why Linux security needs a patch-wave mindset