Tag

LLMs

LLMs are the core engine behind modern generative AI, powering chat assistants, enterprise agents, ad systems, and content generation. This tag also covers bias, alignment, jailbreak resistance, and internal model behavior, all of which shape reliability in real deployments.

12 articles

AutoTTS lets LLMs discover test-time scaling

AutoTTS turns test-time scaling into an environment search problem, letting LLMs discover cheaper reasoning strategies automatically.

Why small language models should replace LLM-first enterprise AI

Enterprise AI should default to small language models, not giant LLMs, because they are cheaper, faster, and safer for most workflows.

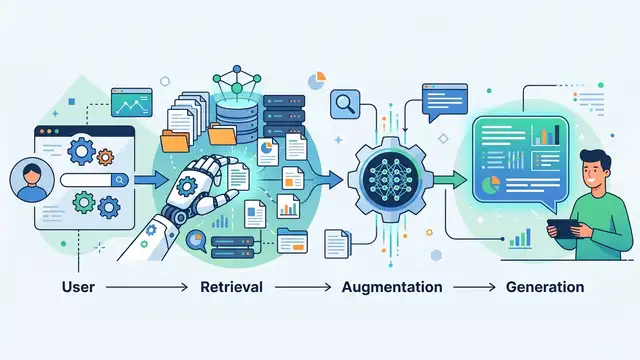

Retrieval-Augmented Generation, Explained Simply

RAG lets large language models pull fresh facts from documents before answering, which cuts hallucinations and adds citations.

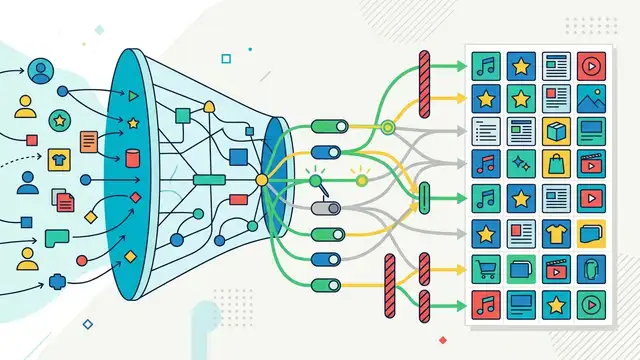

Selective LLM Regularization for Recommenders

A paper on using selective LLM-guided regularization to improve recommendation models without overhauling the recommender stack.

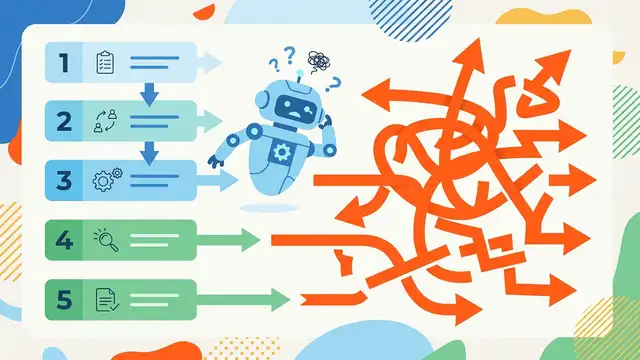

When LLMs Stop Following Procedural Steps

A diagnostic benchmark shows LLMs lose procedural fidelity as step counts grow, even when the arithmetic stays simple.

How LLMs Stereotype Global Majority Nationalities

A study finds widely used LLMs produce harmful, one-sided narratives about national origins, especially when US cues appear in prompts.

How LLMs encode harmful behavior internally

A pruning study suggests harmful output lives in a compact, shared weight set, which helps explain jailbreak brittleness and emergent misalignment.

ChatGPT Ads Are Getting More Uniform

New data from 40,000 ad placements shows ChatGPT ads are becoming shorter, clearer, and more standardized as OpenAI optimizes for conversion.

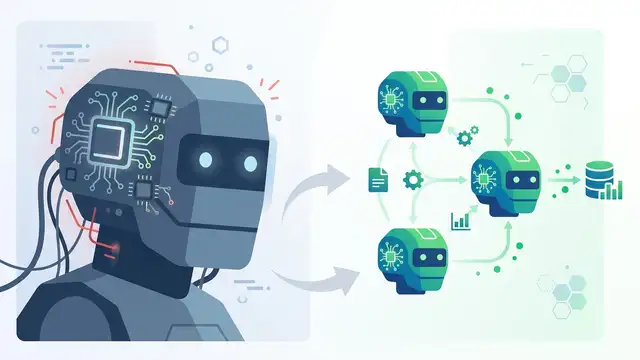

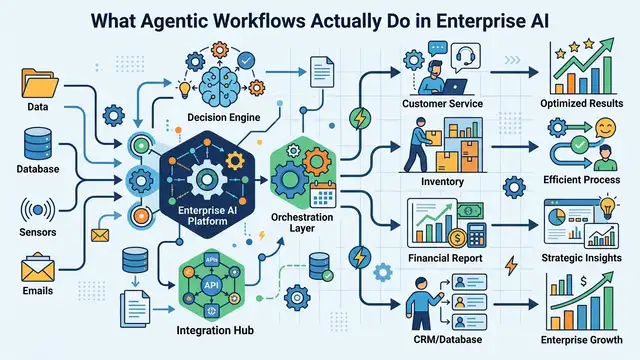

What Agentic Workflows Actually Do in Enterprise AI

Agentic workflows let AI agents plan, act, and adapt with little human input, changing how teams handle support, ops, and data work.

Duplicate Prompts Can Lift Accuracy Fast

A Google study found that repeating the prompt once improved 47 of 70 model-benchmark pairs, with one task jumping from 21% to 97%.

Universal YOCO aims to scale depth without cache bloat

YOCO-U mixes recursive computation with efficient attention to scale LLM depth while keeping inference overhead and KV cache growth in check.

What AI Agents Are and How They Work

AI agents combine LLMs, memory, tools, and planning. IBM says they can call APIs, search data, and coordinate tasks autonomously.