LLM evaluation

LLM evaluation examines whether models reason, judge, and stay consistent, not just whether they produce a plausible answer. It spans long-horizon reasoning benchmarks like LongCoT, automatic speech recognition (ASR) quality assessment, and agreement with human labels on tasks where accuracy alone misses real failure modes.

4 articles

DeepTest 2026 benchmarks an LLM car manual assistant

DeepTest’s first LLM testing competition compared four tools on car manual retrieval, showing how to benchmark automotive assistants.

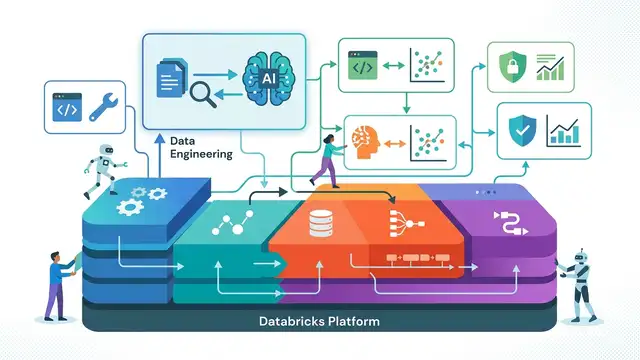

Why Databricks RAG Is a Platform Play, Not a Feature

Databricks treats RAG as an end-to-end platform problem, and that is the right way to build it.

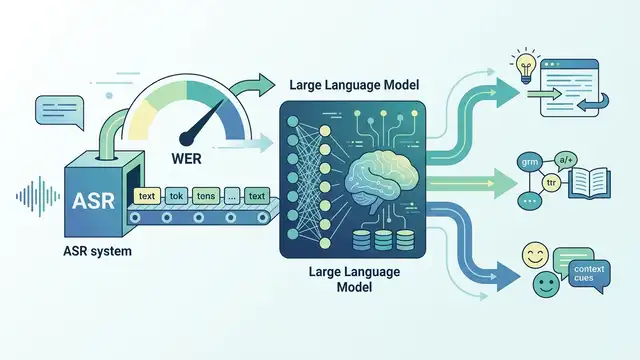

LLMs for ASR Evaluation: Beyond WER

This paper tests decoder-based LLMs as ASR evaluators and finds that they agree with human judgments more often than WER does, reaching 92–94% agreement on one task.

LongCoT Benchmark: 2,500-Problem Long-Horizon Reasoning

LongCoT is a 2,500-problem benchmark for measuring whether frontier models can sustain long, interdependent reasoning chains.