DeepTest 2026 benchmarks an LLM car manual assistant

DeepTest’s first LLM testing competition compared four tools on car manual retrieval, showing how to benchmark automotive assistants.

DeepTest 2026 compares four tools for LLM-based car manual retrieval.

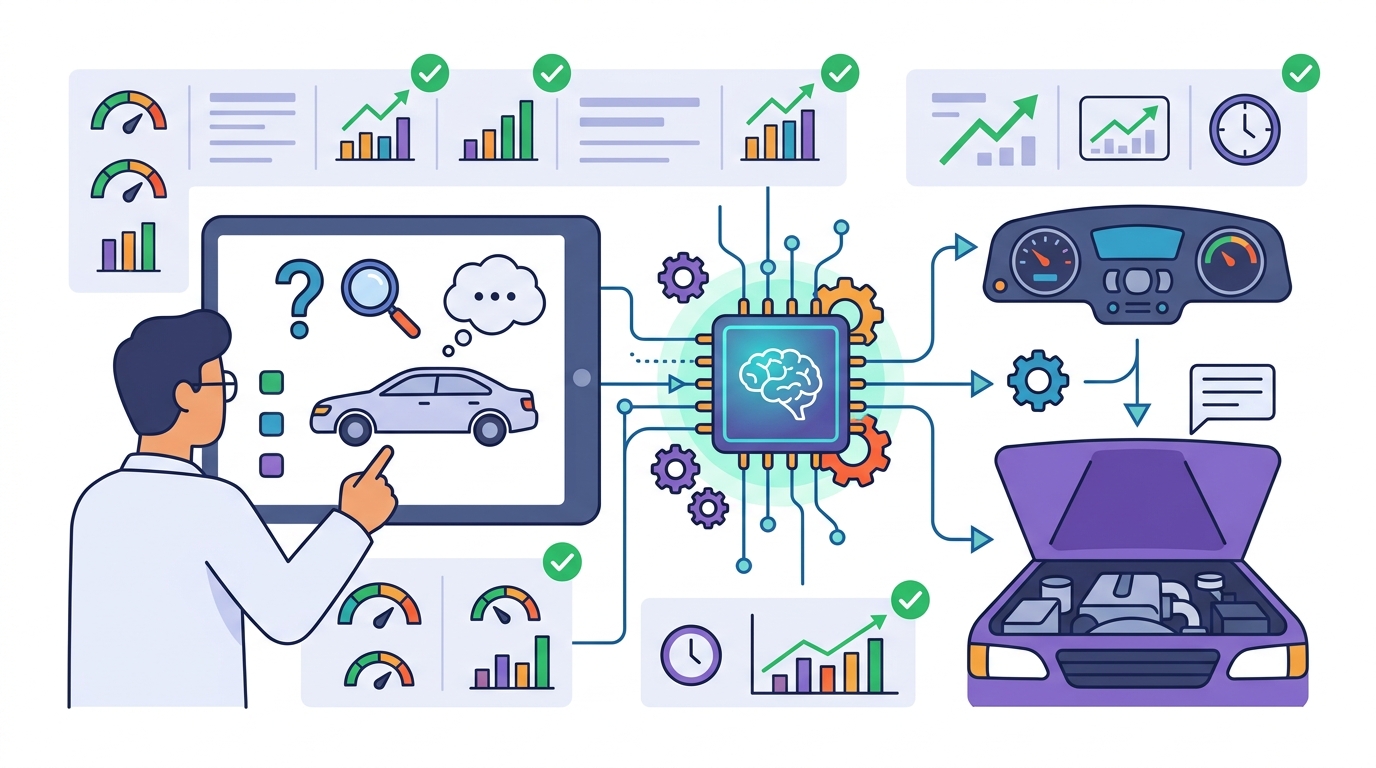

The paper “DeepTest Tool Competition 2026: Benchmarking an LLM-Based Automotive Assistant” summarizes the first edition of the LLM testing competition held at the DeepTest workshop at ICSE 2026. At a high level, it addresses one practical question: how do you benchmark an LLM-based assistant that helps people find information in a car manual?

That matters because “LLM assistant” is a broad label, but the real engineering challenge is narrower and more testable. If a system is supposed to retrieve the right manual information, then you need a way to compare tools on the same task, under the same conditions, instead of relying on vague demos.

What problem this paper is trying to fix

The abstract frames the paper as a competition report, not a new model paper. The core problem is the lack of a shared benchmarking setup for LLM-based automotive information retrieval. In other words, if multiple tools claim they can answer car-manual questions, you still need a fair way to see which ones actually work.

That kind of evaluation is especially important for automotive assistants because the user expectation is simple: ask a question, get the right answer, and avoid confusion. The paper does not claim to solve the full automotive assistant problem. It focuses on the narrower task of retrieving information from car manuals, which is a sensible starting point for benchmarking.

The report also makes clear that this was the first edition of the competition. That tells you this is an early step toward building a repeatable evaluation culture around LLM testing in this domain, rather than a mature benchmark with years of history behind it.

How the method works in plain English

According to the abstract, four tools competed in the benchmark. The competition centered on LLM-based car manual information retrieval, which suggests the tools were compared on how well they could find or surface relevant manual content in response to user queries.

The abstract does not give the full benchmark protocol, task format, scoring details, or dataset description. So the safest reading is that the workshop organized a head-to-head evaluation of tools built for the same retrieval job, then reported the results in a competition-style summary.

That may sound basic, but it is exactly what many teams need before they can trust a system in practice. A benchmark like this can help separate a model that sounds helpful from one that actually returns the right manual information.

- Competition format: first edition of the LLM Testing competition

- Venue: DeepTest workshop at ICSE 2026

- Task focus: benchmarking an LLM-based car manual information retrieval assistant

- Participants: four tools

What the paper actually shows

The abstract is thin on concrete performance data. It does not include benchmark numbers, rankings, or metric values, so there is no way to report comparative performance from the source alone.

What it does show is that the competition happened, that four tools took part, and that the organizers considered the exercise important enough to publish a report on the first edition. That alone is useful for practitioners: it signals that the field is starting to define a shared evaluation target for automotive LLM assistants.

Because the abstract does not provide the results in detail, readers should treat this paper as a meta-level contribution about evaluation rather than as evidence that one approach is better than another. If you need actual tool-to-tool comparisons, you would need the full paper’s tables and methodology sections.

The takeaway: the abstract confirms that the competition took place and names its domain, but it does not expose the numbers needed to judge winners or losers.

Why developers should care

If you build assistants for manuals, support docs, or other structured technical content, this paper points to a useful pattern: benchmark the retrieval task directly. That is often more actionable than evaluating a generic chatbot end to end, because it isolates whether the system can find the right source material.

For engineering teams, that means the benchmark idea can inform product design even when the paper is light on details. You would want to measure whether the assistant retrieves the right passage, handles ambiguous questions, and stays grounded in the manual instead of improvising.
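
As a rough illustration of measuring "retrieves the right passage", the sketch below computes a hit rate at k over a small gold set. Nothing here comes from the paper: the questions, the passage IDs, the `hit_rate_at_k` helper, and the keyword-based `toy_retriever` are all hypothetical stand-ins for whatever your own assistant and manual look like.

```python
from typing import Callable, Dict, List

# Hypothetical gold set: each question maps to the ID of the manual passage
# that answers it. Questions and IDs are invented for illustration.
GOLD: Dict[str, str] = {
    "How do I reset the tire pressure warning?": "tpms-reset",
    "What does the red oil lamp mean?": "oil-warning",
    "How do I pair my phone?": "bluetooth-pairing",
}

# A retriever takes a question and k, and returns up to k ranked passage IDs.
Retriever = Callable[[str, int], List[str]]


def hit_rate_at_k(retriever: Retriever, gold: Dict[str, str], k: int) -> float:
    """Fraction of questions whose gold passage appears in the top-k results."""
    if not gold:
        raise ValueError("gold set is empty")
    hits = sum(1 for question, passage_id in gold.items()
               if passage_id in retriever(question, k)[:k])
    return hits / len(gold)


def toy_retriever(question: str, k: int) -> List[str]:
    """Keyword-rule stand-in; a real retrieval system would be plugged in here."""
    q = question.lower()
    ranked: List[str] = []
    if "tire" in q:
        ranked.append("tpms-reset")
    if "oil" in q:
        ranked.append("oil-warning")
    ranked.append("general-index")  # fallback passage, always ranked last
    return ranked[:k]


if __name__ == "__main__":
    print(f"hit rate@1: {hit_rate_at_k(toy_retriever, GOLD, 1):.2f}")
```

The toy retriever has no rule for the phone-pairing question, so it misses one of the three gold passages. That kind of gap stays invisible in a demo but shows up immediately in a per-question score.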

It also highlights an important product lesson: a domain assistant is only as good as its evaluation setup. Without a repeatable benchmark, it is hard to know whether prompt changes, retrieval tweaks, or model swaps actually improve user outcomes.
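
One way to make that repeatable is to run every variant over the same fixed question set and diff the per-question outcomes, not just the averages. The sketch below assumes each run has already been scored into a pass/fail value per question; the question IDs, the `compare_runs` helper, and the sample data are hypothetical and not taken from the competition.

```python
from typing import Dict, List


def accuracy(run: Dict[str, bool]) -> float:
    """Share of questions marked correct in a single run."""
    if not run:
        raise ValueError("run is empty")
    return sum(run.values()) / len(run)


def compare_runs(baseline: Dict[str, bool],
                 candidate: Dict[str, bool]) -> Dict[str, List[str]]:
    """Per-question diff of two runs that cover the same question IDs."""
    if baseline.keys() != candidate.keys():
        raise ValueError("runs must cover the same questions")
    fixed = sorted(q for q in baseline if not baseline[q] and candidate[q])
    regressed = sorted(q for q in baseline if baseline[q] and not candidate[q])
    return {"fixed": fixed, "regressed": regressed}


# Invented outcomes: True means the assistant answered that question correctly.
baseline_run = {"q1": True, "q2": False, "q3": True, "q4": False}
candidate_run = {"q1": True, "q2": True, "q3": False, "q4": True}

if __name__ == "__main__":
    print("baseline accuracy:", accuracy(baseline_run))
    print("candidate accuracy:", accuracy(candidate_run))
    print(compare_runs(baseline_run, candidate_run))
```

In this invented example the candidate scores higher overall but still breaks one question the baseline got right, which an aggregate number alone would hide.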

Limitations and open questions

The biggest limitation here is the abstract itself. It does not tell us the scoring method, the dataset size, the kinds of questions used, or whether the tools were tested on accuracy, relevance, latency, robustness, or some other metric. It also does not name the competing tools.

That leaves several open questions for anyone trying to reuse the idea:

- What exactly counted as a correct answer in the benchmark?

- Were the tools pure retrieval systems, LLM-based agents, or hybrid pipelines?

- Did the evaluation focus on exact answers, passage selection, or user satisfaction?

- How generalizable is a car-manual benchmark to other technical support domains?
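
To see why the definition of "correct" matters, the sketch below scores one response under two candidate criteria: normalized exact match on the answer text, and passage selection. Both criteria, the `Response` type, and the sample data are assumptions made for illustration; the abstract does not say which criterion, if either, the competition used.

```python
import re
from dataclasses import dataclass
from typing import List


@dataclass
class Response:
    answer: str                 # free-text answer produced by the assistant
    cited_passages: List[str]   # IDs of manual passages the answer relies on


def normalize(text: str) -> str:
    """Lowercase, drop punctuation, and collapse whitespace."""
    cleaned = re.sub(r"[^a-z0-9 ]", "", text.lower())
    return " ".join(cleaned.split())


def exact_match(response: Response, gold_answer: str) -> bool:
    """Strict criterion: the answer text must equal the gold answer."""
    return normalize(response.answer) == normalize(gold_answer)


def passage_match(response: Response, gold_passage: str) -> bool:
    """Looser criterion: the gold passage must be among the cited ones."""
    return gold_passage in response.cited_passages


# Invented example: a reasonable paraphrase that cites the right passage.
response = Response(
    answer="Hold the SET button for 3 seconds.",
    cited_passages=["tpms-reset"],
)

if __name__ == "__main__":
    print("exact match:", exact_match(response, "Press and hold SET for three seconds"))
    print("passage match:", passage_match(response, "tpms-reset"))
```

The same response fails the strict criterion and passes the looser one, so two benchmarks over identical data could rank the same tool very differently.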

Even with those gaps, the paper still has value as a signal. It shows the community is moving from generic LLM discussion toward task-specific evaluation, which is where practical systems usually get better.

For developers, the main lesson is straightforward: if your assistant needs to answer from a manual, you should benchmark it like a retrieval system first, not just like a conversational model. This paper is a reminder that the evaluation harness is part of the product.
