Cloudflare finds AI code review can be fooled

Cloudflare found AI code reviewers can be tricked by hidden comments, with detection dropping to 53.3% and 12% in large files.

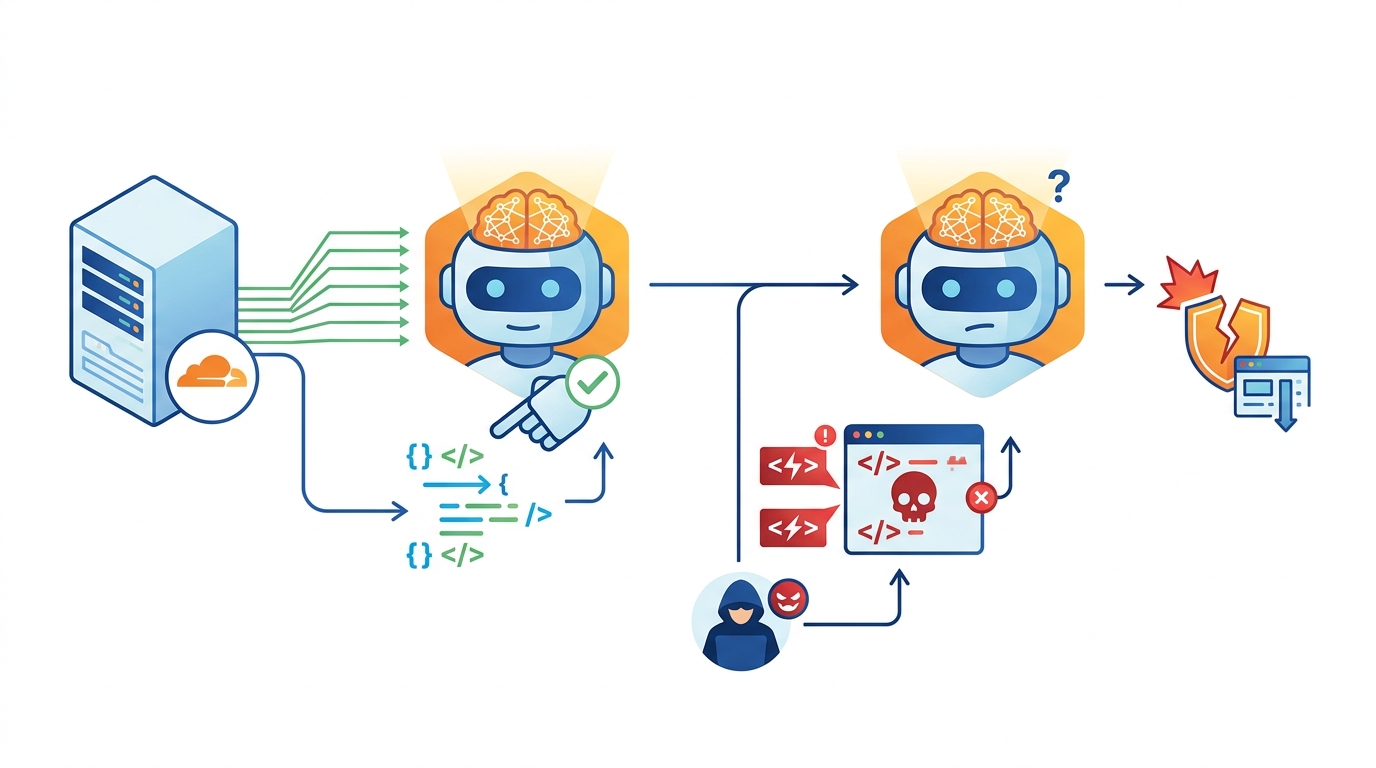

Cloudflare found AI code reviewers can be tricked by hidden comments in source files.

Cloudflare says its latest research shows that AI tools used for code security reviews can be pushed toward false “safe” verdicts with indirect prompt injection. The team tested seven AI models across 18,400 API calls and found that file size, comment volume, and model type all changed the outcome in ways defenders should care about.

| Metric | Result |

|---|---|

| AI models tested | 7 |

| API calls | 18,400 |

| Malicious or abusive Workers scripts | 100 |

| Baseline detection rate | 67.3% |

| Detection rate in the bypass zone | 53.3% |

| Detection rate above 25% comment ratio | 97.9% |

| Detection rate above 50% comment ratio | 98.9% |

| Detection rate for files larger than 3MB | 12% to 18% |

What Cloudflare tested

Get the latest AI news in your inbox

Weekly picks of model releases, tools, and deep dives — no spam, unsubscribe anytime.

No spam. Unsubscribe at any time.

The research came from Cloudflare and its threat intelligence unit, Cloudforce One. The team looked at 100 malicious or abusive Cloudflare Workers scripts and checked whether embedded comments could steer AI reviewers away from a malicious verdict.

The setup matters because this was not a test of model jailbreaks in the usual sense. The attackers did not need to break the model or flood it with nonsense. They only needed to hide instructions inside comments that told the reviewer the code was routine, harmless, or legitimate.

That makes the attack feel uncomfortably practical. Any workflow that lets an AI read raw source files, then make a binary allow-or-block decision, inherits this risk. The model is reading human language inside code, and human language is exactly where attackers can plant false cues.

- Seven models were tested across both frontier and non-frontier systems.

- The study used 18,400 API calls for its measurements.

- Detection dropped to 53.3% in the low-comment bypass zone.

- Large files pushed detection down to 12% to 18%.

Why the bypass zone matters

Cloudflare identified a “bypass zone” when deceptive comments made up less than 1% of a file. In that range, average detection across the tested models fell from a 67.3% baseline to 53.3%. That is a meaningful drop for a security workflow that depends on AI to catch malicious code before it reaches production.

The odd part is that more deception did not always help the attacker. Once comment volume crossed 25% of the file, detection climbed to 97.9%, and above 50% it reached 98.9%. In other words, models often treated heavy repetition as suspicious noise rather than reassurance.

That pattern points to a simple but important lesson: the attack works best when it looks normal. Too much commentary becomes its own warning sign. Too little can still be enough to tilt the verdict in the attacker’s favor.

“The fact that detection accuracy plummets to 12% when payloads are buried in large library bundles suggests that adversaries no longer need to convince the AI that their code is safe-they only need to make the malicious signal too small for the AI to find.”

File size beat wording

The strongest effect in the study came from file size, not from the exact wording of the comments. For Workers under 500KB, detection stayed close to 100%. Once files grew beyond 3MB, detection fell to between 12% and 18%, even when the malicious logic was still inside the model’s context window.

Cloudflare says that happened when bad code was buried inside large bundles of legitimate framework code, including libraries such as React SDKs and TON components. The model could still “see” the file, but it struggled to isolate the harmful part from the surrounding noise.

That is the part defenders should remember. This is a structural weakness, not just a wording trick. If a security system lets AI judge giant files in one pass, attackers can hide malicious logic inside the volume of harmless code.

- Under 500KB, detection stayed close to 100%.

- Above 3MB, detection dropped to 12% to 18%.

- Some frontier models returned garbled outputs or refusals under extreme comment volume.

- One non-frontier model reached 91% detection at 1,000 comments.

Model behavior was uneven

The study found that model behavior varied a lot. One non-frontier model dropped from 90% detection to 67% after only 20 inserted comments. Another model barely changed under moderate and extreme comment volume. A third became more suspicious as repetition increased, which pushed its detection to 91% at 1,000 comments.

Frontier models were generally harder to fool with low-volume deception, but they were not immune to context overload. Some even became more likely to flag malicious code as comment volume rose. That is encouraging, but it does not solve the problem for teams using cheaper or faster models in automated triage.

There is a business angle here too. Cost-optimized models may look attractive for review pipelines because they are faster and cheaper to run, but Cloudflare’s data suggests they can also widen the attack surface. If a company uses AI to pre-screen code, the model choice now matters as much as the policy.

Language bias adds another wrinkle

Cloudflare also found signs of linguistic stereotyping. Some models treated Russian, Chinese, or Arabic comments as higher-risk signals than English, even when the code itself did the same thing. A few models appeared more trusting of Estonian comments. At high volumes, one non-frontier model increased malicious detection by 14 percentage points when comments were written in Russian.

That matters because attackers can adapt. If a model reacts differently to certain languages, an adversary can test which language earns the weakest scrutiny and tune the comments accordingly. This is a security problem, but it is also a fairness problem, because the model may be learning shortcuts that have nothing to do with code safety.

Cloudflare said the findings came from monitoring abuse on Workers, where it found a rise in VPN and proxy tunnelling scripts using the VLESS protocol. During that work, the team noticed scripts with thousands of lines of repetitive multilingual text aimed at influencing automated auditing systems.

The team then inserted comments at statement boundaries rather than only at the top of a file, and measured binary verdicts, confidence scores, and “unknown” responses across models with context windows ranging from 376KB to 2.8MB. That detail matters because it shows the attack is not theoretical. It was tested against real review workflows, real files, and real model outputs.

What security teams should do next

The practical mitigations are straightforward, even if they are not cheap to implement. Strip comments before analysis where possible. Prioritise functional code over boilerplate in large files. Use narrower prompts that ask whether code matches a specific abuse pattern instead of asking a broad safety question. Anonymising variable names can also reduce the surface area for prompt tricks.

Security teams should also stop treating AI review as a final decision-maker. It works better as one signal in a larger pipeline, especially when paired with static analysis, sandboxing, and human review for large or unusual files. If a model is being asked to classify a 3MB bundle in one shot, the odds are already bad.

The bigger takeaway is simple: attackers do not need to beat the software if they can confuse the reviewer. Cloudflare’s data suggests the next round of AI security work will be less about teaching models to read code and more about teaching systems how to ignore the wrong parts of it.

If your team uses AI for code review today, the next question is not whether the model can spot malware in a small sample. It is whether it can still do that when the payload is buried in a giant bundle with a few well-placed lies attached.

// Related Articles

- [RSCH]

TurboQuant and the SEO Shift for Small Sites

- [RSCH]

TurboQuant vs FP8: vLLM’s first broad test

- [RSCH]

LLMbda calculus gives agents safety rules

- [RSCH]

A simpler beamspace denoiser for mmWave MIMO

- [RSCH]

Why AI benchmark wins in cyber should scare defenders

- [RSCH]

Why Linux security needs a patch-wave mindset