HealthNLP_Retrievers’ cascaded QA pipeline for EHRs

A grounded clinical QA pipeline for EHRs that uses cascaded LLM retrieval and answer generation, but the abstract gives no benchmark numbers.

This paper describes a cascaded LLM pipeline for grounded clinical question answering over EHRs.

HealthNLP_Retrievers’ HealthNLP_Retrievers at ArchEHR-QA 2026: Cascaded LLM Pipeline for Grounded Clinical Question Answering is about a very practical problem: how to answer clinical questions from electronic health records without drifting away from the source material. In other words, it is trying to make LLM-based QA more grounded, so the system stays tied to the patient record instead of improvising.

That matters for developers because clinical QA is not just another retrieval demo. If a pipeline cannot reliably anchor its answers in the underlying chart, it is hard to trust in workflows where accuracy and traceability matter. The paper’s title already tells us the central design choice: a cascaded pipeline, not a single-pass answer generator.

What problem this paper is trying to fix

Get the latest AI news in your inbox

Weekly picks of model releases, tools, and deep dives — no spam, unsubscribe anytime.

No spam. Unsubscribe at any time.

The abstract-level material available here is thin, but the target is clear enough: grounded clinical question answering for ArchEHR-QA 2026. The core issue is that EHR data is messy, long, and context-heavy, while clinical questions often require precise answers drawn from specific parts of the record.

That combination creates a familiar failure mode for large language models. A model may produce a fluent answer that sounds plausible, but unless the system is carefully grounded, it can miss the relevant note, pull the wrong detail, or combine facts that should not be combined. For a clinical setting, that is not just a quality issue; it is a trust issue.

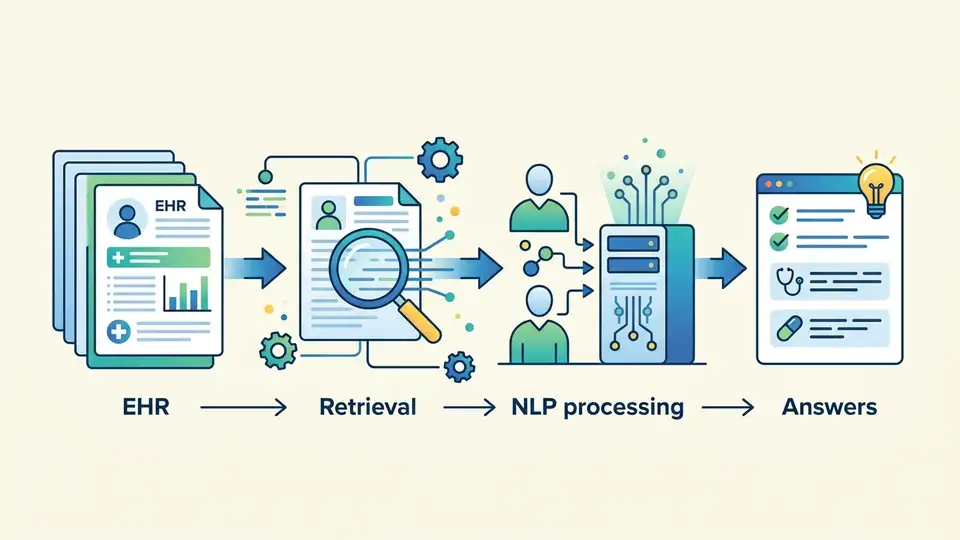

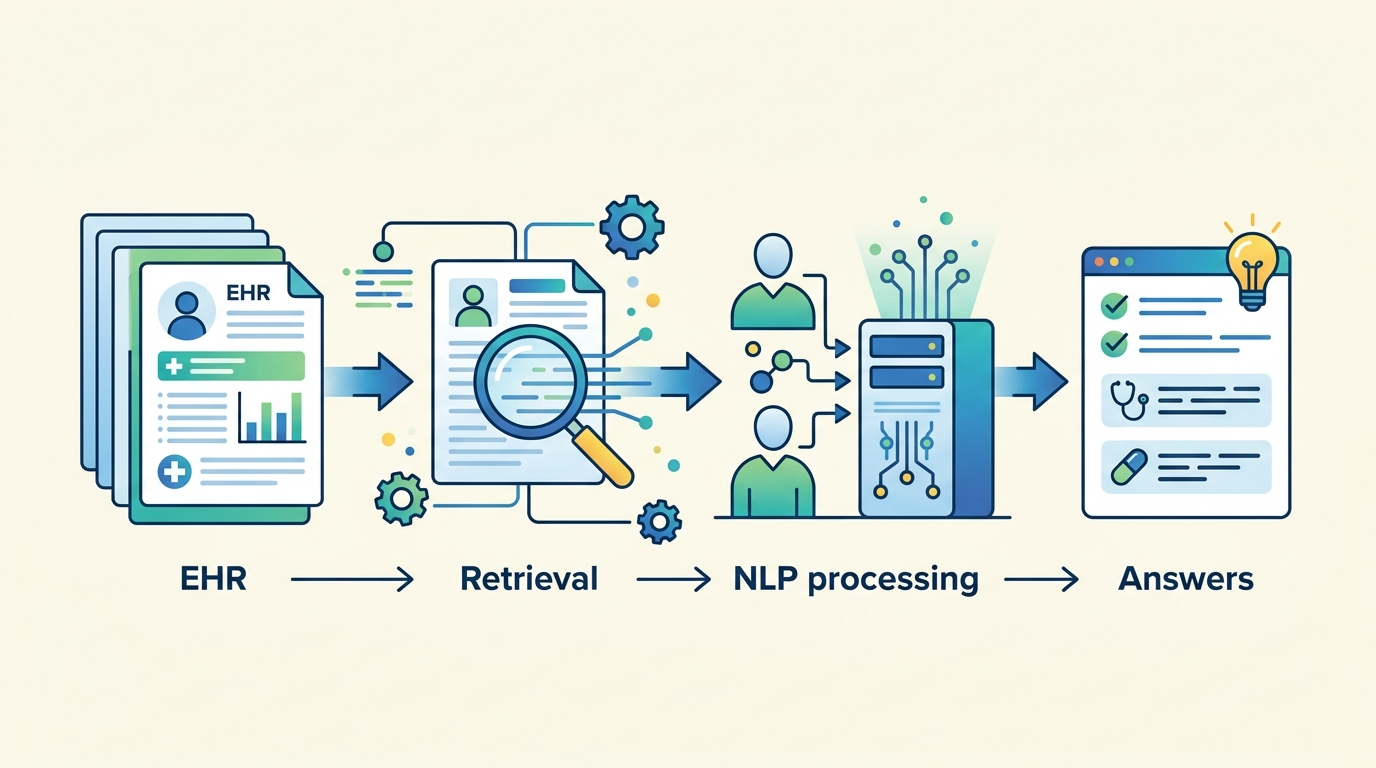

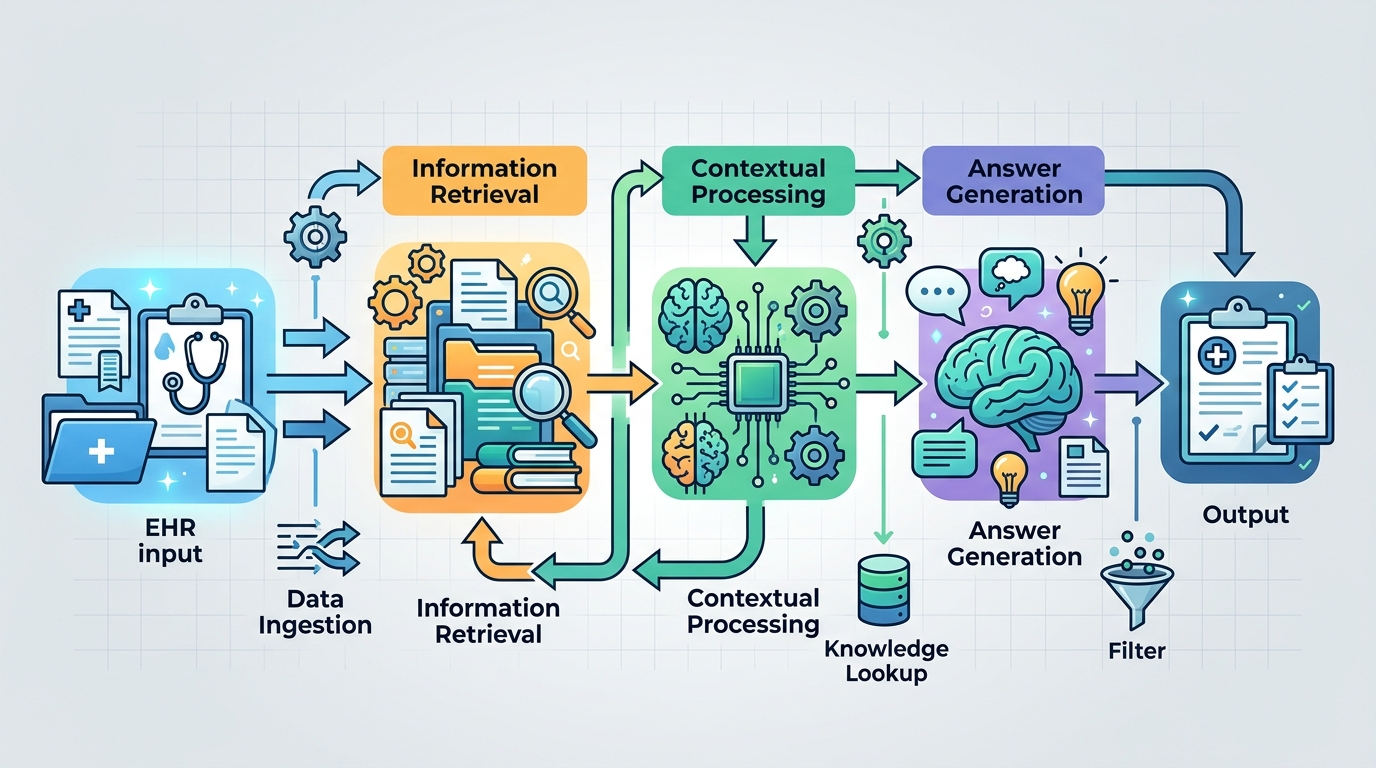

A cascaded approach is a sensible response to that problem. Instead of asking one model call to do everything, the system can split the task into stages: find relevant evidence first, then generate an answer from that evidence. The title strongly suggests that this paper is built around that kind of multi-step retrieval-and-answering flow.

How the method works in plain English

The paper is described as a “cascaded LLM pipeline,” which usually means each stage narrows the problem before the next stage handles it. In practical terms, that can look like retrieval, reranking, evidence selection, and answer generation. The source material does not spell out the exact stages, so it would be wrong to claim a specific architecture beyond what the title says.

Still, the engineering idea is easy to understand. A first pass gathers candidate evidence from the EHR. A later pass filters or prioritizes that evidence. Then a final LLM produces the answer using the selected context. This is a common pattern when you want better grounding than a single prompt can provide.

For developers, the appeal of a cascade is control. Each stage can be inspected, swapped, or improved independently. If retrieval is weak, you can tune retrieval. If evidence selection is too broad, you can tighten it. If the answer generator is hallucinating, you can constrain the context it sees. The paper’s title suggests exactly this style of modular clinical QA system.

That modularity is especially relevant in healthcare, where “show your work” is often as important as the final answer. A cascaded pipeline can make it easier to trace how the system arrived at a response, even if the paper material provided here does not describe a specific explanation or citation format.

What the paper actually shows

Here is the important limitation: the abstract text available in the source does not include benchmark numbers, dataset details, or concrete performance metrics. So there are no reported scores to cite here, and it would be misleading to invent them.

What we can say is that the paper positions the system in the context of ArchEHR-QA 2026 and frames it as a grounded clinical QA pipeline. That tells us the work is likely evaluated in a task setting where evidence-backed answers matter, but the raw material provided does not give the outcome details.

Because the source is only an abstract page in the notes provided, we also do not have information about model size, retrieval backend, prompt design, ablations, or error analysis. Those may exist in the full paper, but they are not visible in the material here, so they should not be assumed.

- No benchmark numbers are provided in the abstract notes.

- No dataset or task metrics are listed in the source text.

- No implementation details beyond “cascaded LLM pipeline” are given.

- No claims about superiority over other systems are visible here.

Why developers should care

Even with sparse details, the paper points to a pattern that many teams building LLM systems are converging on: break the job into stages and ground the final answer in retrieved evidence. That is especially useful when the source data is large, domain-specific, and sensitive, like EHRs.

If you are building internal copilots, clinical search tools, or any workflow where answers must be defensible, this is the kind of architecture worth studying. A cascaded design gives you more places to add safeguards, logging, and evaluation. It also gives you clearer failure modes than a monolithic answer generator.

The open question is how much the cascade improves reliability in practice, and at what cost in latency and system complexity. Multi-stage pipelines often trade simplicity for control. They can be easier to debug, but they can also be slower and more brittle if one stage drops the ball.

That tradeoff is the real engineering story here. The paper is not presented, in the source material available, as a breakthrough with published numbers. It is better read as a signal that grounded clinical QA is moving toward more explicit retrieval-and-generation pipelines, which is a direction many product teams will recognize.

What is still unknown

Because the source is limited to the paper title and abstract page, several questions remain open. We do not know how the authors define “grounded” in their evaluation, whether the system uses citations, whether retrieval happens over structured notes or free text, or how they handle ambiguous clinical questions.

We also do not know whether the pipeline is meant for direct deployment or for competition-style benchmarking in ArchEHR-QA 2026. That distinction matters. A leaderboard system can be optimized differently from a production system that needs auditability, robustness, and predictable latency.

So the safest takeaway is straightforward: this paper is about applying a cascaded LLM architecture to grounded EHR question answering, but the abstract notes do not provide the quantitative evidence needed to judge how well it works.

For engineers, that makes it a useful architecture signal rather than a finished playbook. The idea is familiar, the domain is hard, and the missing metrics are exactly the kind of detail you would want to inspect in the full paper before adopting the approach.

// Related Articles

- [RSCH]

TurboQuant and the SEO Shift for Small Sites

- [RSCH]

TurboQuant vs FP8: vLLM’s first broad test

- [RSCH]

LLMbda calculus gives agents safety rules

- [RSCH]

A simpler beamspace denoiser for mmWave MIMO

- [RSCH]

Why AI benchmark wins in cyber should scare defenders

- [RSCH]

Why Linux security needs a patch-wave mindset