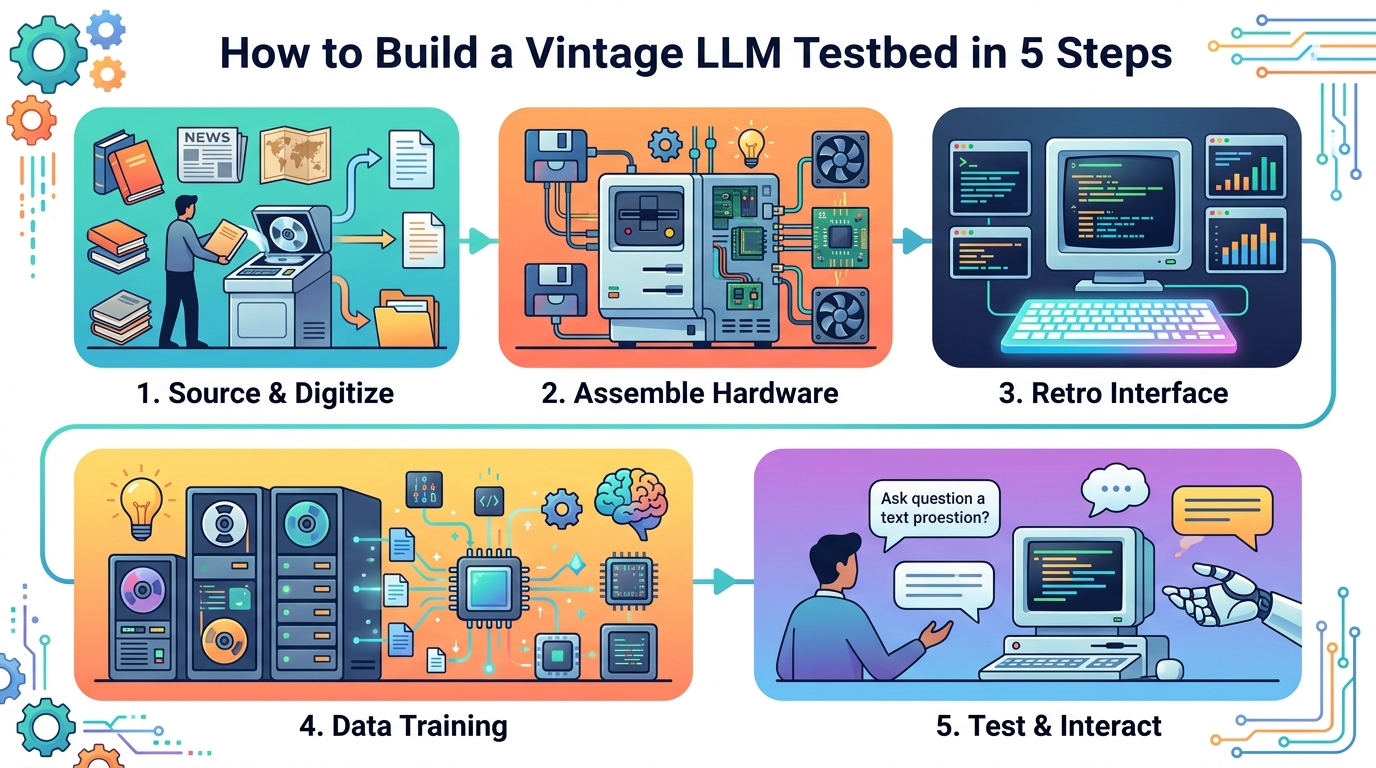

How to Build a Vintage LLM Testbed in 5 Steps

Build a 1930-cutoff LLM testbed to study historical reasoning and contamination-free generalization.

This guide is for ML engineers, research scientists, and platform teams who want to reproduce the core idea behind Talkie-1930: a model trained only on pre-1931 English text. After following the steps, you will have a working pipeline for sourcing historical text, filtering temporal leakage, preparing OCR-based training data, post-training on vintage instructions, and evaluating a model against modern baselines.

The approach is useful when you need a clean setting for generalization research, benchmark contamination studies, or historical reasoning experiments. It also helps you understand why OCR quality, date filtering, and carefully designed post-training data matter as much as model size.

Before you start

- Python 3.11+

- CUDA GPU with at least 28 GB VRAM for a 13B model

- PyTorch 2.4+

- Hugging Face account and access to model weights

- Git 2.40+

- OCR tooling such as Tesseract 5+ or a custom document OCR pipeline

- Access to historical corpora, including books, newspapers, journals, patents, and case law

- Optional: an API key or local access for a judge model used in preference optimization

For the reference implementation, review the model release notes and the repository linked from the project page, along with the technical details in the paper. The main public references are the write-up on MarkTechPost, the live demo at talkie-lm.com/chat, and the code and weights linked from that write-up's GitHub and model resources.

Step 1: Assemble a pre-1931 corpus

Goal: create a source set that is legally usable and historically bounded to December 31, 1930, so the model’s knowledge cutoff is explicit and auditable.

Start by collecting public-domain English text from books, newspapers, periodicals, scientific journals, patents, and case law. Keep document metadata intact, especially publication date, source type, and scan provenance. Store each item with a stable ID so you can trace every training token back to an original artifact.

    python build_corpus.py \
      --sources books,newspapers,journals,patents,case_law \
      --cutoff-date 1930-12-31 \
      --output corpus/manifests/pre1931.jsonl

You should see a manifest where every document has a verified date at or before 1930-12-31. If you inspect a sample record, the source, publication year, and scan path should all be present.
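To make the manifest check concrete, here is a minimal sketch of per-record validation. The field names (`source_type`, `publication_date`, `scan_path`) and the hash-based ID scheme are assumptions for illustration, not the format `build_corpus.py` actually writes.

```python
import hashlib
import json
from datetime import date

CUTOFF = date(1930, 12, 31)  # cutoff date used throughout this guide
# Assumed field names; adapt them to whatever your manifest really contains.
REQUIRED_FIELDS = ("source_type", "publication_date", "scan_path")


def stable_id(source_type: str, scan_path: str) -> str:
    """Derive a deterministic ID from provenance so tokens can be traced back."""
    digest = hashlib.sha256(f"{source_type}:{scan_path}".encode("utf-8")).hexdigest()
    return digest[:16]


def validate_record(record: dict) -> list[str]:
    """Return a list of problems; an empty list means the record passes."""
    problems = [f"missing {field}" for field in REQUIRED_FIELDS if not record.get(field)]
    raw_date = record.get("publication_date")
    if raw_date:
        try:
            published = date.fromisoformat(raw_date)
        except ValueError:
            problems.append("unparseable publication_date")
        else:
            if published > CUTOFF:
                problems.append("published after cutoff")
    return problems


# Made-up sample records, for illustration only.
records = [
    {"source_type": "books", "publication_date": "1912-05-01", "scan_path": "scans/b/0001.png"},
    {"source_type": "newspapers", "publication_date": "1931-01-02", "scan_path": "scans/n/0042.png"},
    {"source_type": "patents", "publication_date": "", "scan_path": "scans/p/0007.png"},
]

for rec in records:
    rec["id"] = stable_id(rec["source_type"], rec["scan_path"])
    print(json.dumps({"id": rec["id"], "problems": validate_record(rec)}))
```

Only the first sample record passes. The second is dated after the cutoff and the third has no date, so both would be held back from the corpus.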

Step 2: Filter temporal leakage

Goal: remove anachronistic documents so the model does not learn post-1930 facts through misdated pages, editorial notes, or later reprints.

Apply a document-level filter that combines date checks with n-gram or classifier-based anachronism detection. Flag pages that mention post-1930 entities, technologies, or events, and exclude items with uncertain metadata. This step is crucial because even a small leak can distort historical fidelity and invalidate contamination-free experiments.

In practice, keep a quarantine set for suspicious documents and review it manually before finalizing the corpus. The Talkie-1930 work showed that leakage can survive simple date filtering, so your pipeline should treat metadata as necessary but not sufficient.

You should see a reduced corpus size plus a leakage report. A good verification signal is that random samples no longer contain obvious post-1930 references such as World War II, modern computing, or later political events.
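The routing logic described in this step could look roughly like the sketch below. The watch list, the year heuristic, and the `publication_year` and `text` field names are illustrative assumptions. A real pipeline would need a much larger curated term list or a trained classifier, and this is not the reference project's filter.

```python
import re

CUTOFF_YEAR = 1930
# Tiny illustrative watch list; far too small for real use.
ANACHRONISTIC_TERMS = ("world war ii", "second world war", "transistor", "internet")
TERM_PATTERN = re.compile(
    r"\b(" + "|".join(re.escape(term) for term in ANACHRONISTIC_TERMS) + r")\b",
    re.IGNORECASE,
)
YEAR_PATTERN = re.compile(r"\b(1[89]\d\d|20\d\d)\b")


def leakage_signals(text: str) -> list[str]:
    """Collect watch-list terms and four-digit years later than the cutoff."""
    terms = sorted({match.lower() for match in TERM_PATTERN.findall(text)})
    late_years = sorted({int(y) for y in YEAR_PATTERN.findall(text) if int(y) > CUTOFF_YEAR})
    return [f"term:{t}" for t in terms] + [f"year:{y}" for y in late_years]


def route(doc: dict) -> str:
    """Return 'exclude', 'quarantine', or 'keep' for one document."""
    year = doc.get("publication_year")
    if year is None or year > CUTOFF_YEAR:
        return "exclude"  # uncertain or out-of-range metadata
    # A signal is only a reason for manual review: a 1925 text may
    # legitimately speculate about a later year.
    return "quarantine" if leakage_signals(doc.get("text", "")) else "keep"


print(route({"publication_year": 1925, "text": "The harvest of 1924 was poor."}))
print(route({"publication_year": 1925, "text": "Reprinted after the Second World War."}))
print(route({"publication_year": None, "text": "Undated pamphlet."}))
```

Sending flagged documents to quarantine instead of dropping them keeps the manual review step meaningful and lets you measure how often the heuristics misfire.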

Step 3: OCR and clean the scans

Goal: convert page images into trainable text while minimizing the quality loss that OCR introduces into historical documents.

Run OCR on every scanned page, then normalize hyphenation, page headers, marginal notes, and broken ligatures. If you can, benchmark plain OCR against a human-transcribed subset. The Talkie-1930 experiments found that conventional OCR text delivered only 30% of the learning efficiency of human transcription, while simple regex cleanup improved that to 70%.
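As an illustration of what "simple regex cleanup" can mean here, the sketch below removes running headers, expands ligatures, and rejoins words split across line breaks. The rules and the `min_share` threshold are assumptions chosen for the example, not the contents of `rules/historical_regex.yml`.

```python
import re
import unicodedata
from collections import Counter


def strip_running_headers(pages: list[str], min_share: float = 0.5) -> list[str]:
    """Drop a first line that recurs, ignoring digits, on at least min_share of pages."""

    def key(line: str) -> str:
        return re.sub(r"\d+", "", line).strip().lower()

    first_lines = [page.split("\n", 1)[0] for page in pages]
    counts = Counter(key(line) for line in first_lines)
    cleaned = []
    for page, first in zip(pages, first_lines):
        k = key(first)
        if k and counts[k] / len(pages) >= min_share:
            page = page.split("\n", 1)[1] if "\n" in page else ""
        cleaned.append(page)
    return cleaned


def normalize_page(text: str) -> str:
    """Apply character-level and line-level cleanup to one page of OCR text."""
    # NFKC expands ligatures such as "ﬁ" to "fi" and maps the long s to "s".
    text = unicodedata.normalize("NFKC", text)
    # Rejoin words hyphenated across a line break. This also merges genuine
    # hyphenated compounds that happen to break at a line end.
    text = re.sub(r"(\w)-\n(\w)", r"\1\2", text)
    text = re.sub(r"[ \t]+", " ", text)
    return text.strip()


# Made-up pages, for illustration only.
pages = [
    "THE DAILY RECORD 12\nThe ﬁrst re-\nport arrived.",
    "THE DAILY RECORD 13\nA second re-\nport followed.",
]
cleaned = [normalize_page(page) for page in strip_running_headers(pages)]
print(cleaned)  # ['The first report arrived.', 'A second report followed.']
```

Headers are removed before normalization so that the line-joining rule cannot fuse a header into the body text.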

    python ocr_pipeline.py \
      --input scans/ \
      --engine tesseract \
      --cleanup rules/historical_regex.yml \
      --output text/ocr_cleaned/

You should see aligned page-level text files and a quality report with character error rate, token retention, and cleanup gains. If the cleaned sample still contains broken lines or repeated headers, tighten your preprocessing rules before training.
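Character error rate in the quality report is commonly defined as the edit distance between the OCR output and a trusted transcription, divided by the length of the transcription. A minimal sketch under that definition follows. How `ocr_pipeline.py` computes its own figure is not specified here.

```python
def edit_distance(reference: str, hypothesis: str) -> int:
    """Levenshtein distance using a rolling row of the dynamic-programming table."""
    previous = list(range(len(hypothesis) + 1))
    for i, ref_char in enumerate(reference, start=1):
        current = [i]
        for j, hyp_char in enumerate(hypothesis, start=1):
            cost = 0 if ref_char == hyp_char else 1
            current.append(
                min(
                    previous[j] + 1,         # deletion
                    current[j - 1] + 1,      # insertion
                    previous[j - 1] + cost,  # substitution or match
                )
            )
        previous = current
    return previous[-1]


def character_error_rate(reference: str, hypothesis: str) -> float:
    """Edit distance normalized by the length of the human transcription."""
    if not reference:
        raise ValueError("reference must be non-empty")
    return edit_distance(reference, hypothesis) / len(reference)


# Made-up example of typical OCR confusions ("h" read as "b", "m" read as "rn").
human = "The committee adjourned."
ocr = "Tbe comrnittee adjourned."
print(f"CER: {character_error_rate(human, ocr):.3f}")
```

Computing this before and after cleanup on the human-transcribed subset gives a direct measure of the cleanup gain.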

Step 4: Train the base model on historical tokens

Goal: pretrain a 13B-class base model on the cleaned corpus so it learns language patterns only from the world as it was at the 1930 cutoff.

Use a standard causal language modeling setup, but keep the data stream strictly historical. Track token counts carefully, since the reference project used 260 billion tokens. Save checkpoints often, and evaluate perplexity on held-out pre-1931 text to confirm the model is learning the distribution rather than memorizing scans.

For a reproducible run, pin your tokenizer, sequence length, optimizer settings, and mixed-precision mode. If you compare against a modern twin, train the twin with the same architecture and hyperparameters on a contemporary corpus so the comparison is fair.
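One way to pin these settings is a frozen configuration object with a fingerprint you can log next to each checkpoint. This is a sketch: every field name and default value below is a placeholder, not a setting from the reference project, and sharing one tokenizer across both twins is an assumption.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, replace


@dataclass(frozen=True)
class RunConfig:
    # Placeholder values; substitute the settings you actually train with.
    tokenizer_path: str
    corpus_manifest: str
    sequence_length: int = 2048
    learning_rate: float = 3e-4
    precision: str = "bf16"
    seed: int = 0

    def fingerprint(self, ignore: tuple[str, ...] = ()) -> str:
        """Short hash of the config, optionally ignoring some fields."""
        payload = {k: v for k, v in asdict(self).items() if k not in ignore}
        blob = json.dumps(payload, sort_keys=True).encode("utf-8")
        return hashlib.sha256(blob).hexdigest()[:12]


vintage = RunConfig(
    tokenizer_path="tokenizers/shared.json",  # hypothetical path
    corpus_manifest="corpus/manifests/pre1931.jsonl",
)
# The modern twin changes only the data source (hypothetical manifest path).
modern = replace(vintage, corpus_manifest="corpus/manifests/modern.jsonl")

same_setup = vintage.fingerprint(ignore=("corpus_manifest",)) == modern.fingerprint(
    ignore=("corpus_manifest",)
)
print(same_setup)  # True
print(vintage.fingerprint() != modern.fingerprint())  # True
```

Comparing fingerprints with the corpus field ignored is a cheap guard against the two runs drifting apart in anything other than their data.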

You should see training loss decrease steadily and held-out historical perplexity improve. A healthy sign is that the model can complete period-appropriate text in a fluent style without drifting into modern references.
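Held-out perplexity is the exponential of the mean negative log-likelihood per token. The sketch below assumes you already have per-token natural-log probabilities from your evaluation loop; how you obtain them depends on your training framework.

```python
import math


def perplexity(token_log_probs: list[float]) -> float:
    """exp(mean negative log-likelihood), with natural-log token probabilities."""
    if not token_log_probs:
        raise ValueError("need at least one token log-probability")
    mean_nll = -sum(token_log_probs) / len(token_log_probs)
    return math.exp(mean_nll)


# A model that assigns probability 0.25 to every held-out token is as
# uncertain as a uniform choice among four options.
uniform_quarter = [math.log(0.25)] * 8
print(round(perplexity(uniform_quarter), 6))  # 4.0
```

Track this number on a fixed held-out pre-1931 sample across checkpoints so that changes reflect the model and not the evaluation set.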

Step 5: Post-train with vintage instructions and evaluate

Goal: teach the model to follow instructions without importing modern conversational habits or contemporary facts.

Build instruction-response pairs from pre-1931 sources such as etiquette manuals, letter-writing guides, cookbooks, dictionaries, encyclopedias, poetry, and fable collections. Then run supervised fine-tuning, followed by preference optimization with a judge model. The reference pipeline used online DPO and a final synthetic-chat round to improve instruction following while staying historically grounded.
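As a rough sketch of how structured sources become instruction data, the example below turns dictionary entries into prompt-response records. The entry fields, the prompt template, and the output schema are all assumptions. The template wording itself is modern-authored, so review it for period-appropriate style before using it.

```python
import json
from collections.abc import Iterable, Iterator


def dictionary_pairs(entries: Iterable[dict]) -> Iterator[dict]:
    """Yield one prompt-response record per usable dictionary entry."""
    for entry in entries:
        headword = entry.get("headword", "").strip()
        definition = entry.get("definition", "").strip()
        if not headword or not definition:
            continue  # skip entries damaged by OCR or extraction
        yield {
            "prompt": f'What is the meaning of the word "{headword}"?',
            "response": definition,            # answer text comes from the source only
            "source_id": entry["source_id"],   # keeps the pair traceable to its scan
        }


# Made-up entries, for illustration only.
entries = [
    {"source_id": "dict-0001", "headword": "Aeroplane", "definition": "A flying machine heavier than air."},
    {"source_id": "dict-0002", "headword": "", "definition": "Orphaned definition."},
]

pairs = list(dictionary_pairs(entries))
for pair in pairs:
    print(json.dumps(pair))
```

Keeping the response verbatim from the source is what prevents modern facts and phrasing from entering through the answers.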

    python post_train.py \
      --base_model checkpoints/talkie_base \
      --instruction_data data/vintage_instructions.jsonl \
      --dpo_judge claude-sonnet-4.6 \
      --output checkpoints/talkie_it

You should see instruction-following scores rise on a five-point rubric and conversational responses become more usable. For evaluation, test both anachronistic and anachronism-filtered benchmarks, then compare against your modern twin to measure how much of the gap comes from historical cutoff, OCR noise, or subject-matter mismatch.
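For readers new to preference optimization, the standard DPO objective for a single preference pair can be sketched as follows. The inputs are summed log-probabilities of the chosen and rejected responses under the policy and under a frozen reference model, and `beta=0.1` is an arbitrary illustrative value. How the reference pipeline samples responses and queries its judge is not covered by this guide, so treat this as the generic loss only.

```python
import math


def dpo_loss(
    policy_chosen: float,
    policy_rejected: float,
    ref_chosen: float,
    ref_rejected: float,
    beta: float = 0.1,
) -> float:
    """-log(sigmoid(beta * (chosen log-ratio - rejected log-ratio))) for one pair."""
    margin = beta * ((policy_chosen - ref_chosen) - (policy_rejected - ref_rejected))
    # Numerically stable softplus(-margin), which equals -log(sigmoid(margin)).
    return max(-margin, 0.0) + math.log1p(math.exp(-abs(margin)))


# With the policy identical to the reference, the loss is log(2).
baseline = dpo_loss(-3.0, -3.0, -3.0, -3.0)
# Raising the chosen response and lowering the rejected one reduces the loss.
improved = dpo_loss(-1.0, -5.0, -3.0, -3.0)
print(baseline, improved)
```

In an online setup, the chosen and rejected labels would come from the judge model ranking responses sampled from the current policy.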

Common mistakes

- Using date filters alone. Fix: add an anachronism classifier and manual review for suspicious documents.

- Training on raw OCR output. Fix: apply cleanup rules and validate against a human-transcribed subset.

- Mixing modern instruction data into post-training. Fix: derive prompts and answers from pre-1931 manuals, encyclopedias, and similar sources only.

Another frequent issue is underestimating hardware needs. A 13B model fits in GPU memory only with careful batching and enough VRAM, so verify memory headroom before long runs. If you are scaling beyond a single node, lock down deterministic data ordering and checkpoint naming so your historical experiments remain reproducible.
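A back-of-the-envelope estimate helps here. The sketch below assumes 16-bit weights for loading the model and the common rule of thumb of about 16 bytes per parameter for mixed-precision training with Adam (16-bit weights and gradients plus 32-bit master weights and two optimizer moments). Activations, KV cache, and framework overhead are excluded, so real usage will be higher.

```python
PARAMS = 13_000_000_000  # 13B-class model


def gigabytes(params: int, bytes_per_param: int) -> float:
    """Memory in decimal gigabytes for a given per-parameter footprint."""
    return params * bytes_per_param / 1e9


# 16-bit weights only, roughly what is needed just to load the model.
weights_16bit = gigabytes(PARAMS, 2)
# Rule of thumb for mixed-precision Adam training state:
# 2 (weights) + 2 (gradients) + 12 (fp32 master weights, momentum, variance).
training_state = gigabytes(PARAMS, 16)

print(f"16-bit weights: {weights_16bit:.0f} GB")           # 26 GB
print(f"Adam training state: {training_state:.0f} GB")     # 208 GB
```

Under these assumptions, the 28 GB figure in the prerequisites is close to the weights alone, while full training state has to be sharded across several GPUs.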

What’s next

Once the pipeline works, extend it to a larger vintage corpus, add better OCR models for historical layouts, and run controlled experiments on forecasting, temporal surprise, and code generalization. From there, you can compare 1930-cutoff models against modern LLMs to study which capabilities depend on web-era knowledge and which emerge from language modeling itself.
