Nvidia’s MLPerf Gains Show Software Still Matters

Nvidia posted up to 2.77x MLPerf gains on GB300 NVL72, with software tricks like Dynamo and TensorRT-LLM doing heavy lifting.

Nvidia keeps telling the same story for a reason: the company is not just selling GPUs. At GTC 2026, that message lined up with fresh MLPerf Inference results that Nvidia says pushed token throughput and time-to-first-token numbers to new highs.

The headline number is hard to ignore. Nvidia said its GB300 NVL72 system improved by as much as 2.77x on the DeepSeek-R1 server test versus the previous MLPerf run, while one DeepSeek-R1 Interactive result reached 250,634 tokens per second at a cost of 30 cents per million tokens. Those are the kinds of metrics that get CFOs and platform teams paying attention.

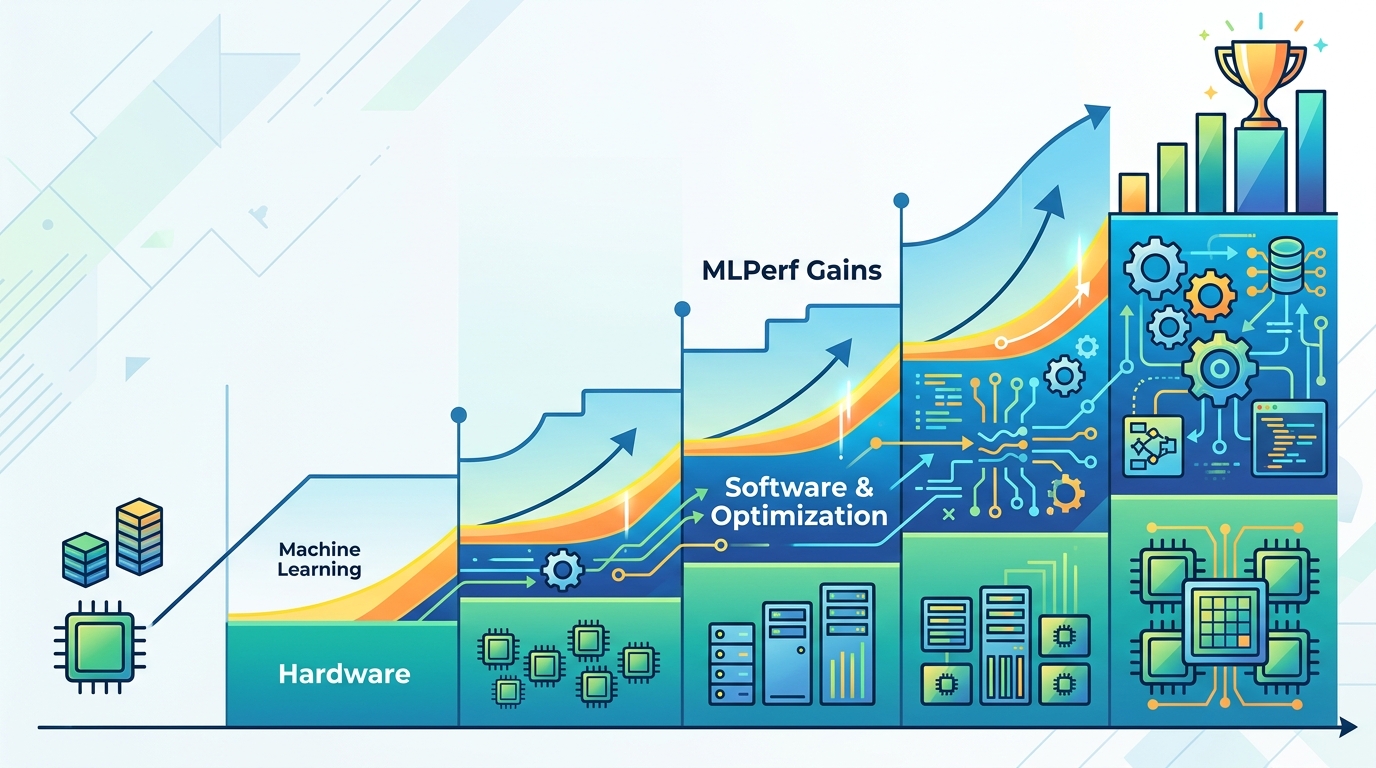

What makes this interesting is that the gains are not coming from silicon alone. Nvidia’s pitch is that hardware, software, model tuning, and system design all move together, and the latest benchmark round gives that claim some real numbers to point at.

MLPerf v6.0 is a better fit for today’s AI traffic

MLCommons updated the MLPerf Inference v6.0 suite with workloads that reflect where datacenter AI is headed now, especially around reasoning and multimodal use cases. That matters because benchmark suites can age quickly when model behavior changes faster than the tests themselves.

Dave Salvator, Nvidia’s director of accelerated computing products, said the consortium updated the workloads to better match the market. He pointed to new or updated tests including DeepSeek-R1 Interactive, GPT-OSS-120B, and Qwen3-VL-235B-A22B. Those are not toy models. They are the kinds of workloads that stress token generation, latency, and memory behavior in ways older benchmarks often miss.

The new suite also spreads tests across offline, server, and interactive modes. That is useful because real AI systems rarely run one clean workload pattern all day. A chatbot, a coding assistant, and a multimodal assistant can all hit the same infrastructure in very different ways.

- DeepSeek-R1 Interactive measures token delivery speed and time to first token.

- GPT-OSS-120B is a mixture-of-experts reasoning model.

- Qwen3-VL-235B-A22B tests multimodal vision-language performance.

- MLPerf v6.0 includes offline, server, and interactive test modes.
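To make the interactive metrics concrete, here is a minimal sketch of how time to first token and token delivery speed could be derived from the arrival times of a streamed response. The `measure_stream` helper and the timestamps are hypothetical, and this is not MLPerf's official measurement harness or its exact metric definitions.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class StreamMetrics:
    ttft_s: float        # time to first token, in seconds
    tokens_per_s: float  # output tokens divided by total request time


def measure_stream(request_sent_s: float, token_times_s: List[float]) -> StreamMetrics:
    """Summarize one streamed response from its token arrival timestamps."""
    if not token_times_s:
        raise ValueError("need at least one token timestamp")
    ttft = token_times_s[0] - request_sent_s
    total = token_times_s[-1] - request_sent_s
    if total <= 0:
        raise ValueError("last token must arrive after the request was sent")
    return StreamMetrics(ttft_s=ttft, tokens_per_s=len(token_times_s) / total)


# Hypothetical timestamps: request sent at t=0, first token after 0.5 s,
# then one more token every 0.25 s.
metrics = measure_stream(0.0, [0.5, 0.75, 1.0, 1.25, 1.5])
print(metrics)
```

A response can score well on one number and poorly on the other, which is why an interactive test tracks both.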

That mix helps explain why Nvidia is leaning so hard into inference. Training still matters, but the money is increasingly in serving users, agents, and applications at scale. The benchmark suite now mirrors that shift more clearly than it did a year ago.

Nvidia’s software stack did a lot of the heavy lifting

Nvidia’s message this week is simple: the GPU matters, but software is what turns raw throughput into useful throughput. Salvator said the company’s co-design strategy, which combines hardware, software, and AI models, drove the inference numbers. That includes Dynamo, TensorRT-LLM, and CUDA-level optimizations.

Dynamo is Nvidia’s open distributed inference framework. The key idea is disaggregated serving, where prefill and decode happen on different GPUs so the system uses resources more efficiently. That matters because prefill and decode stress hardware in different ways, and splitting them can lower token cost when traffic gets messy.
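The idea of disaggregated serving can be illustrated with a toy scheduler. The sketch below is not Dynamo's API. It is a simplified model with assumed per-token costs in which every request passes through a prefill pool and then a decode pool. It ignores batching and the cost of moving the KV cache between GPUs, both of which matter in a real deployment.

```python
import heapq
from dataclasses import dataclass
from typing import List


@dataclass
class Request:
    prompt_tokens: int
    output_tokens: int


def simulate_disaggregated(
    requests: List[Request],
    prefill_workers: int,
    decode_workers: int,
    prefill_s_per_token: float = 0.001,  # assumed cost, not a measured figure
    decode_s_per_token: float = 0.02,    # assumed cost, not a measured figure
) -> List[float]:
    """Return each request's finish time when prefill and decode use separate pools."""
    # Each heap holds the times at which the workers in that pool become free.
    prefill_free = [0.0] * prefill_workers
    decode_free = [0.0] * decode_workers
    heapq.heapify(prefill_free)
    heapq.heapify(decode_free)

    finish_times = []
    for req in requests:  # all requests arrive at t=0 and are served in order
        # Phase 1: prefill on the earliest-available prefill worker.
        start = heapq.heappop(prefill_free)
        prefill_done = start + req.prompt_tokens * prefill_s_per_token
        heapq.heappush(prefill_free, prefill_done)

        # Phase 2: hand off to the earliest-available decode worker.
        decode_start = max(prefill_done, heapq.heappop(decode_free))
        decode_done = decode_start + req.output_tokens * decode_s_per_token
        heapq.heappush(decode_free, decode_done)
        finish_times.append(decode_done)
    return finish_times


# Hypothetical workload: three requests with different prompt/output shapes.
workload = [Request(1000, 100), Request(2000, 50), Request(500, 200)]
print(simulate_disaggregated(workload, prefill_workers=1, decode_workers=2))
```

Because the two pools are sized independently, an operator can add decode workers for long generations or prefill workers for long prompts without over-provisioning the other phase.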

TensorRT-LLM adds another layer of acceleration through parallelism techniques and multi-token prediction. Instead of predicting one token at a time, the model can predict several future tokens at once. Nvidia also talked up CUDA kernel fusion, where multiple kernels get combined into one larger kernel to reduce overhead. That kind of optimization does not make for flashy keynote slides, but it often shows up in the numbers.
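A toy example shows why predicting several tokens at once can cut the number of model passes. This is not TensorRT-LLM code. The `true_next` and `draft` functions are hypothetical stand-ins, and the verify-then-accept loop is an assumption borrowed from speculative-decoding-style schemes, since Nvidia's description here does not spell out how proposals are checked.

```python
from typing import List, Tuple


def true_next(context: List[int]) -> int:
    """Stand-in for the full model: a deterministic toy rule."""
    return (context[-1] * 3 + 1) % 7


def draft(context: List[int], k: int) -> List[int]:
    """Stand-in for multi-token heads: proposes k tokens, deliberately wrong on 0."""
    ctx = list(context)
    proposal = []
    for _ in range(k):
        tok = true_next(ctx)
        if tok == 0:
            tok = 1  # injected error so that some proposals get rejected
        proposal.append(tok)
        ctx.append(tok)
    return proposal


def generate(prompt: List[int], n_tokens: int, k: int) -> Tuple[List[int], int]:
    """Generate n_tokens, proposing k tokens per pass; return (tokens, passes)."""
    out = list(prompt)
    passes = 0
    while len(out) - len(prompt) < n_tokens:
        proposal = draft(out, k)
        passes += 1  # assume one verification pass checks the whole proposal
        for tok in proposal:
            expected = true_next(out)
            out.append(expected)  # always emit the verified token
            if tok != expected:
                break             # discard the rest of the proposal
    return out[len(prompt):len(prompt) + n_tokens], passes


tokens_k4, passes_k4 = generate([1], 20, k=4)
tokens_k1, passes_k1 = generate([1], 20, k=1)
print(passes_k1, passes_k4)
```

Both runs emit the same tokens, but the `k=4` run needs fewer passes because every accepted proposal advances several positions at once.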

“Increases in token generation or increases in performance basically generate more revenue, they reduce costs, they get you more value from the same infrastructure,” Salvator said.

That quote gets to the heart of Nvidia’s pitch. If a system can serve more tokens per second without increasing cost at the same rate, the economics of AI deployment improve. For cloud providers and large enterprise customers, that is often more persuasive than peak FLOPS.

Nvidia also said it is working with open inference frameworks such as SGLang and TLLM-style stacks, which points to a practical reality: the company wants developers to see Nvidia as part of the broader inference software ecosystem, not a closed box.

The numbers are getting better fast

The benchmark deltas are the part that will catch most people’s eye. Nvidia said the GB300 NVL72 improved from MLPerf v5.1 to v6.0 by 1.21x on Llama 3.1 405B offline and by 2.77x on DeepSeek-R1 server. In a six-month window, that is a substantial jump for a system already sitting at the high end of the market.

Here is the practical comparison: if your system can nearly triple throughput on a popular reasoning model, you can either serve more users, cut infrastructure spend, or do a bit of both. That is why inference benchmarking matters more now than it did when model training dominated the conversation.
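As a rough illustration of that trade-off, the sketch below assumes throughput scales linearly across systems and that each system's hourly cost is unchanged by the speedup. The fleet size of 100 is hypothetical.

```python
import math


def systems_needed(baseline_systems: int, speedup: float) -> int:
    """Systems required to carry the same load after a throughput speedup."""
    return math.ceil(baseline_systems / speedup)


def relative_cost_per_token(speedup: float) -> float:
    """Cost per token relative to baseline, at a fixed hourly system cost."""
    return 1.0 / speedup


SPEEDUP = 2.77  # DeepSeek-R1 server gain reported for GB300 NVL72

print(systems_needed(100, SPEEDUP))                # 37
print(round(relative_cost_per_token(SPEEDUP), 3))  # 0.361
```

Under those assumptions, the same load fits on 37 systems instead of 100, or the original fleet serves 2.77 times the traffic at roughly 36% of the previous cost per token.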

- DeepSeek-R1 server: 2.77x improvement on GB300 NVL72 versus v5.1.

- Llama 3.1 405B offline: 1.21x improvement.

- DeepSeek-R1 Interactive: 250,634 tokens per second.

- DeepSeek-R1 Interactive cost: 30 cents per 1 million tokens.

Nvidia said 14 partners submitted results, including OEMs such as Dell Technologies and HPE, plus cloud providers like Google Cloud. That breadth matters because it shows the software gains are not locked to one lab setup. They are showing up across a partner ecosystem that sells real systems to real customers.

The bigger strategic point is that Nvidia is trying to make expensive hardware look economical through software. If the system cost is high but the token cost falls enough, the math can still work. That is the argument Nvidia keeps making, and these results give it more credibility than a marketing deck would.
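The relationship between system cost and token cost is simple arithmetic. The sketch below assumes cost per token is just the hourly system cost divided by tokens served per hour, and that the 250,634 tokens-per-second and 30-cent figures describe the same run. Nvidia's actual costing method is not described here, so treat the result as a back-of-envelope estimate.

```python
def cost_per_million_tokens(system_cost_per_hour: float, tokens_per_second: float) -> float:
    """Dollars per one million tokens at a given hourly cost and throughput."""
    tokens_per_hour = tokens_per_second * 3600
    return system_cost_per_hour / tokens_per_hour * 1_000_000


def implied_cost_per_hour(cost_per_million: float, tokens_per_second: float) -> float:
    """Hourly system cost implied by a token price and a throughput figure."""
    return cost_per_million * tokens_per_second * 3600 / 1_000_000


hourly = implied_cost_per_hour(0.30, 250_634)
print(round(hourly, 2))
```

Under those assumptions, 30 cents per million tokens at 250,634 tokens per second works out to about $270.68 per system-hour. If throughput rises while that hourly figure stays flat, the token price falls in proportion.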

What this means for AI buyers

Nvidia will keep being associated with GPUs, because that is where its brand was built. But the MLPerf v6.0 results show why the company keeps talking about platform design, reference architectures, and inference software. For buyers, the question is no longer whether the chip is fast. It is whether the whole stack can turn that speed into lower cost per token.

If you are evaluating AI infrastructure in 2026, this benchmark round is a reminder to look past peak throughput charts. Ask how prefill and decode are scheduled, what the software stack does with kernel fusion, and whether the system is tuned for reasoning workloads rather than just old-school LLM serving.

My read is simple: the next round of AI infrastructure deals will go to the vendors that can show better token economics, not just bigger GPU counts. Nvidia clearly knows that, and MLPerf is where it keeps trying to prove it. The real question for buyers is whether those gains hold when the workloads get messy, the models get larger, and the user traffic stops looking like a benchmark run.
