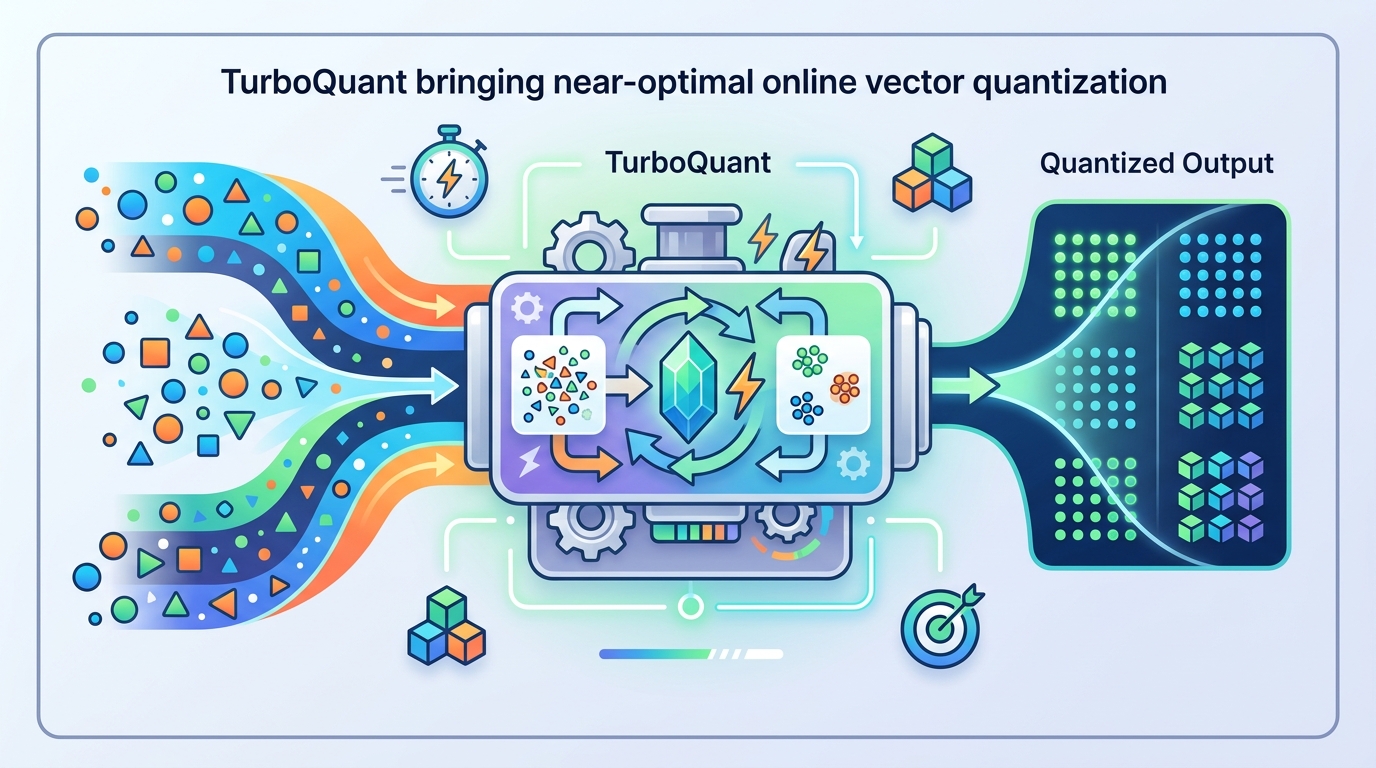

TurboQuant brings near-optimal online vector quantization

TurboQuant is an online, accelerator-friendly vector quantizer that targets near-optimal MSE and inner-product distortion.

Vector quantization is one of those unglamorous infrastructure problems that quietly decides whether a system is fast, memory-efficient, and accurate. TurboQuant: Online Vector Quantization with Near-optimal Distortion Rate proposes a practical answer for compressing high-dimensional vectors while keeping distortion close to the best possible bound.

The engineer-friendly version: the paper focuses on two things people actually care about in production—mean-squared error for reconstruction and inner-product accuracy for retrieval or model inference. TurboQuant is designed to work online, be accelerator-friendly, and avoid the usual trade-off where a quantizer is either too slow or too far from the theoretical optimum.

What problem this paper is trying to fix

Get the latest AI news in your inbox

Weekly picks of model releases, tools, and deep dives — no spam, unsubscribe anytime.

No spam. Unsubscribe at any time.

Quantization is what turns floating-point vectors into compact bitstrings. That matters anywhere vectors are stored, moved, or compared at scale: large language model serving, KV cache compression, and nearest-neighbor search in vector databases are all mentioned in the paper as core use cases.

The issue is that existing vector quantization methods tend to miss one of two targets. Some are not suitable for online use or vectorized accelerator execution, which makes them awkward for real-time workloads. Others are efficient but do not achieve the best known distortion rates for a given bit-width. TurboQuant is introduced to close that gap.

The paper frames the problem in Shannon-style terms: given a vector x in R^d, compress it into B bits and reconstruct it later with as little distortion as possible. The authors explicitly study two distortion measures: MSE, which measures reconstruction quality, and inner-product error, which matters when the downstream task depends on dot products or cosine-like similarity.

How TurboQuant works in plain English

TurboQuant uses a two-stage design. First, it builds an MSE-optimal quantizer. Then, to make inner-product estimates unbiased, it applies a 1-bit Quantized Johnson-Lindenstrauss transform to the residual left over after the first stage.

The first stage starts with a random rotation of the input vector. The paper’s key observation is that after rotation, each coordinate follows a Beta distribution, and different coordinates become nearly independent in high dimensions. That makes it possible to quantize each coordinate separately with an optimal scalar Lloyd-Max quantizer, rather than needing a more complicated vector codebook.

Why does that matter? Because scalar quantization is much easier to implement efficiently, especially in an online setting. The paper’s design is deliberately data-oblivious, which means it does not require learning a codebook from a dataset before it can be used. That is especially useful for streaming or on-the-fly settings like KV cache quantization.

The second stage addresses a subtle but important problem: an MSE-optimal quantizer is not automatically a good inner-product quantizer. In fact, the paper says MSE-optimal quantizers can introduce bias in inner-product estimation. TurboQuant fixes that by quantizing the residual with a 1-bit QJL transform, producing an unbiased estimator for inner products.

What the paper actually shows

The paper claims near-optimal distortion rates across all bit-widths and dimensions, with performance within a small constant factor of the information-theoretic lower bound. It also states that the gap to the lower bound is about a 2.7 factor. That is the main theoretical headline: not just good performance, but performance that closely tracks what is fundamentally achievable.

For the MSE side, the authors provide a formal lower-bound analysis and argue that TurboQuant matches the best achievable rate up to that small constant factor. For the inner-product side, they show that the two-stage construction gives an unbiased quantizer while still keeping distortion low.

The abstract also reports concrete experimental results. For KV cache quantization, TurboQuant achieves absolute quality neutrality at 3.5 bits per channel and only marginal quality degradation at 2.5 bits per channel. In nearest-neighbor search, it improves recall over existing product quantization techniques while reducing indexing time to virtually zero.

Those are useful claims, but the paper does not, at least in the material provided here, give full benchmark tables, dataset-by-dataset breakdowns, or all the settings behind those numbers. So the right way to read the results is as evidence that the theory is not just decorative: the method appears to work in the exact kinds of workloads where quantization matters most.

Why developers should care

If you build systems that store or compare large vectors, this paper is about reducing the cost of doing that without wrecking quality. That includes LLM serving pipelines that want smaller KV caches, retrieval systems that need low-latency approximate search, and any accelerator-bound workload where memory bandwidth is the bottleneck.

TurboQuant is especially interesting because it is online and data-oblivious. That means you do not need a training phase to fit a quantizer to your data distribution, which lowers operational complexity. For systems that see changing data, that can be a big practical advantage over methods that depend on offline codebook training.

The other practical point is that the method is built around simple primitives: random rotation, per-coordinate scalar quantization, and a 1-bit transform on the residual. That makes it easier to reason about than more elaborate learned compression schemes. Simpler does not always mean better, but in infra work, simplicity often wins when latency and implementation cost matter.

Limitations and open questions

The paper is strong on theory, but the provided material leaves some important implementation details open. For example, it does not spell out the full cost of the random rotation step, how the quantizer behaves under different hardware constraints, or how sensitive the method is to vector distributions outside the worst-case framing.

There is also a practical distinction between proving near-optimal distortion and integrating the method into a production pipeline. The paper argues that TurboQuant is accelerator-friendly, but the source excerpt does not provide a full systems analysis of throughput, memory overhead, or end-to-end latency across different deployments.

Still, the core message is clear: TurboQuant is trying to make vector quantization both theoretically tight and operationally usable. For engineers, that combination is the real value. If a compression method is mathematically elegant but too awkward to deploy, it rarely survives contact with production. This paper is aimed at the opposite outcome.

In short, TurboQuant is a compact answer to a big problem: how do you compress vectors online, preserve the geometry that downstream tasks depend on, and stay close to the best possible distortion rate? The paper’s answer is a two-stage quantizer that is simple enough to deploy, strong enough to analyze, and promising enough to matter for LLM serving and vector search.

- Targets both MSE reconstruction and inner-product accuracy

- Uses random rotation plus per-coordinate scalar quantization

- Adds a 1-bit QJL residual stage for unbiased inner products

- Claims near-optimal distortion within about a 2.7 constant factor

- Reports KV cache and nearest-neighbor search wins in experiments

// Related Articles

- [RSCH]

TurboQuant and the SEO Shift for Small Sites

- [RSCH]

TurboQuant vs FP8: vLLM’s first broad test

- [RSCH]

LLMbda calculus gives agents safety rules

- [RSCH]

A simpler beamspace denoiser for mmWave MIMO

- [RSCH]

Why AI benchmark wins in cyber should scare defenders

- [RSCH]

Why Linux security needs a patch-wave mindset