Tag

video generation

Video generation is shifting from producing clips that merely move to building models that can be controlled and reasoned about. Current work focuses on temporal consistency, playback speed, separating camera motion from object motion, and distinguishing active from passive actions, all to better match user intent and real-world causality.

5 articles

ActCam adds joint camera and motion control

ActCam is a zero-shot way to steer both actor motion and camera path in video generation without training a new model.

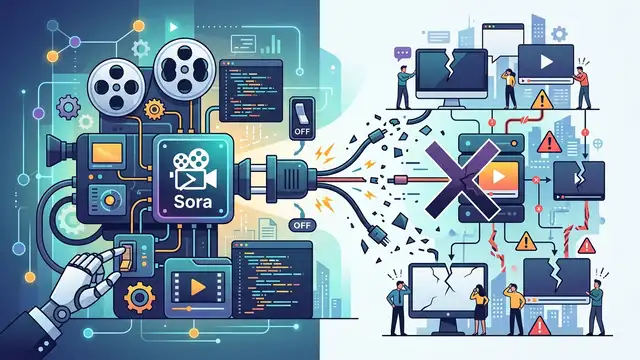

How to Migrate from Sora 2 in 2026

Migrate Sora 2 video workflows to new models before OpenAI’s shutdown deadlines.

Teaching Video Models to Understand Time

A self-supervised way to detect speed changes, estimate playback speed, and generate or sharpen videos with controlled timing.

MoRight tackles motion control and causality

MoRight separates camera and object motion, and models active vs. passive actions so video generation can react more plausibly to user input.

Inside Sora's Shutdown and AI Vendor Risk

Sora peaked near 1 million users, then fell below 500,000 while burning about $1 million a day. The unit economics broke.