Inside Sora's Shutdown and AI Vendor Risk

Sora peaked near 1 million users, then fell below 500,000 while burning about $1 million a day. The unit economics broke.

Sora peaked at about one million users, then slipped below 500,000 while burning roughly $1 million a day in compute. That is the kind of math that ends a product fast, even when the demo videos look amazing.

The shutdown is a useful reminder for anyone building with AI: a model can impress users and still fail as a business. In Sora’s case, the core issue was simple. Video generation is expensive to run, and consumer demand did not stay high enough to pay for it.

What went wrong

The first lesson is that AI demand can be spiky, shallow, and expensive to serve. A product may attract a huge early audience, but that does not mean those users will keep returning often enough to cover inference costs.

Sora’s usage curve tells the story. One million users at the peak sounds healthy, but a drop to under 500,000 changes the economics fast when each session consumes serious GPU time. If the product does not create repeat behavior or a clear paid path, the burn rate wins.

For video generation, cost pressure comes from several places at once: long generation times, large models, and the need for high-end chips. That means every extra minute of usage can carry real financial weight.

- Peak user base: around 1 million

- Later user base: fewer than 500,000

- Estimated daily compute burn: about $1 million

- Core workload: video generation, one of the most expensive AI tasks to serve
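The figures above imply a simple per-user cost. Here is a minimal sketch of that arithmetic, assuming the roughly $1 million daily burn stayed constant at both user counts (the reporting does not say which period the burn estimate covers). The helper name `daily_burn_per_user` is illustrative.

```python
def daily_burn_per_user(daily_burn_usd: float, users: int) -> float:
    """Average compute spend per user per day."""
    if users <= 0:
        raise ValueError("users must be positive")
    return daily_burn_usd / users


# Reported estimate; holding it constant across both periods is an assumption.
DAILY_BURN_USD = 1_000_000

peak = daily_burn_per_user(DAILY_BURN_USD, 1_000_000)
later = daily_burn_per_user(DAILY_BURN_USD, 500_000)

print(f"At peak:  ${peak:.2f} per user per day")
print(f"Later:    ${later:.2f} per user per day")
print(f"Later, over 30 days: ${later * 30:.0f} per user")
```

Under that assumption, halving the user base doubles the compute cost carried by each remaining user, to about $2 a day or roughly $60 a month, unless spending falls along with usage.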

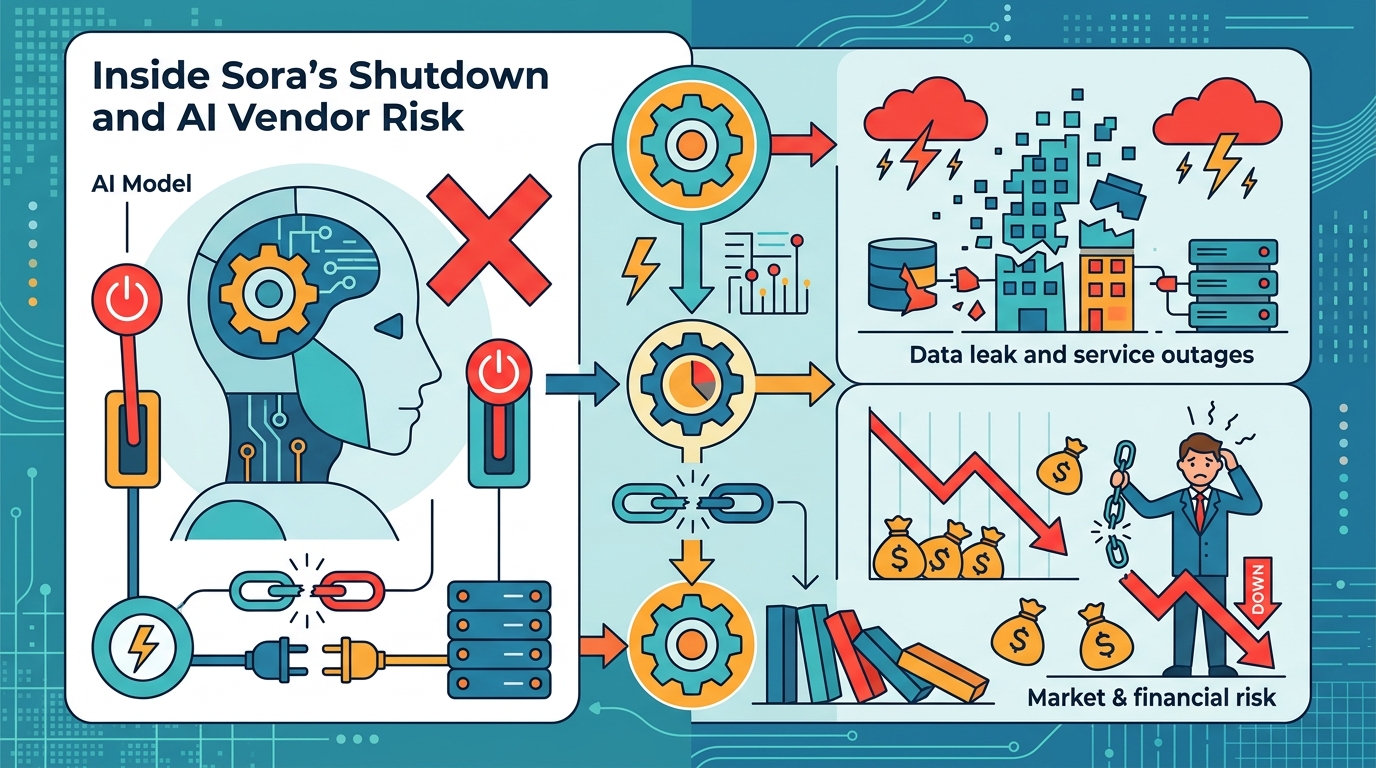

Why vendors keep getting exposed

This is not just a Sora problem. It is a vendor problem that shows up whenever a company sells access to an AI system without tight control over usage economics. The vendor's revenue comes from subscriptions or API fees set in advance, while the cloud bill tracks the compute actually consumed.

That gap matters most when the product is built around flashy outputs instead of daily utility. If users try the system once, share a few clips, then leave, the vendor is left paying for the hardware and electricity while revenue fades.
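The gap between fixed fees and usage-based compute can be made concrete. The sketch below uses entirely hypothetical numbers (a $20 monthly subscription and $0.50 of compute per generated clip, neither taken from any vendor) to show the point at which a flat-fee subscriber becomes unprofitable.

```python
def monthly_margin(price_usd: float, clips: int, cost_per_clip_usd: float) -> float:
    """Subscription revenue minus compute cost for one subscriber."""
    return price_usd - clips * cost_per_clip_usd


def break_even_clips(price_usd: float, cost_per_clip_usd: float) -> float:
    """Clips per month at which the subscriber's margin reaches zero."""
    return price_usd / cost_per_clip_usd


PRICE_USD = 20.00          # hypothetical subscription price
COST_PER_CLIP_USD = 0.50   # hypothetical compute cost per clip

for clips in (10, 40, 100):
    margin = monthly_margin(PRICE_USD, clips, COST_PER_CLIP_USD)
    print(f"{clips:>3} clips per month -> margin ${margin:+.2f}")

print("Break-even:", break_even_clips(PRICE_USD, COST_PER_CLIP_USD), "clips per month")
```

With these placeholder values, the heaviest users are the ones who cost the vendor money, which is why usage caps and per-generation pricing tend to appear on expensive workloads.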

OpenAI is far from the only company facing this pressure. Anthropic and Google, with Gemini, also have to balance user growth against inference costs, but video is a harsher test than chat. The output is heavier, slower, and more expensive to generate.

“Every cloud is a silver lining, but every silver lining has a cloud.” — Anais Nin

That quote fits AI vendors better than most product slogans. A fast-growing product can hide weak economics for a while, but the bill eventually reveals whether the business works.

How Sora compares with cheaper AI products

Chatbots and text tools usually have a much easier path to profitability because a short text response is cheap compared with full video generation. The difference is not minor; it changes what kind of pricing model can survive.

Video models also face a different usage pattern. A text assistant may get daily use for work, study, or coding. A video generator may get used in bursts, then sit idle for long stretches. That makes retention harder and revenue less predictable.

- Text generation: lower compute cost per request, easier to bundle into subscriptions

- Image generation: higher cost than text, but still far cheaper than video

- Video generation: highest compute load, hardest to price for mass use

- Consumer retention: often lower for creative novelty tools than for daily work tools

That gap explains why many AI companies push high-volume chat products first and treat video as a premium feature. The economics are simply friendlier when the model can answer thousands of small requests instead of a few expensive ones.

For developers, the lesson is practical. If you are building on top of OpenAI’s API or a similar service, you need to know the cost per task before shipping. A product that looks affordable in a prototype can become a money sink once real users arrive.
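One way to estimate cost per task before shipping is to multiply expected token counts by the provider's published rates and then scale up to real user numbers. The sketch below assumes token-based pricing. The rates, token counts, and user figures are placeholders, not any provider's actual prices, and `Pricing`, `cost_per_task`, and `monthly_cost` are illustrative names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """USD per 1,000 tokens. The values used below are placeholders."""
    input_per_1k: float
    output_per_1k: float


def cost_per_task(pricing: Pricing, input_tokens: int, output_tokens: int,
                  calls_per_task: int = 1) -> float:
    """Cost of one user-facing task, which may need several API calls."""
    per_call = (input_tokens / 1000) * pricing.input_per_1k \
        + (output_tokens / 1000) * pricing.output_per_1k
    return per_call * calls_per_task


def monthly_cost(task_cost_usd: float, tasks_per_user_per_day: float,
                 users: int, days: int = 30) -> float:
    """Scale a per-task cost up to a monthly bill."""
    return task_cost_usd * tasks_per_user_per_day * users * days


pricing = Pricing(input_per_1k=0.01, output_per_1k=0.03)  # hypothetical rates
task = cost_per_task(pricing, input_tokens=2000, output_tokens=500, calls_per_task=3)

print(f"Cost per task: ${task:.4f}")
print(f"Prototype, 20 users:   ${monthly_cost(task, 5, 20):,.2f} per month")
print(f"Launch, 50,000 users:  ${monthly_cost(task, 5, 50_000):,.2f} per month")
```

With these placeholder inputs a task costs about ten cents, which is a few hundred dollars a month for a 20-user prototype and several hundred thousand dollars a month at 50,000 users. The per-task cost did not change; only the multiplier did.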

What this means for builders and buyers

The Sora shutdown also says something about vendor risk. If your app depends on one AI provider, your product inherits that provider’s pricing, uptime, policy changes, and model availability. That is fine when the economics are stable. It gets ugly when they are not.

Builders should think in terms of cost ceilings, usage caps, and fallback paths. Buyers should ask a simpler question: if this vendor changes pricing next quarter, does the product still make sense?

There is a reason many teams now keep a second path ready through Hugging Face Transformers or self-hosted models on PyTorch. The goal is not perfect independence. The goal is avoiding a single point of failure in product economics.
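A cost ceiling and a fallback path can live in the same small routing layer. The sketch below is one possible shape, not a reference design: `Provider`, `BudgetedRouter`, and `NoProviderAvailable` are hypothetical names, the per-call costs are made up, and the two provider functions are stubs standing in for a hosted API and a self-hosted model.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


class NoProviderAvailable(Exception):
    """Raised when every provider is over budget or has failed."""


@dataclass(frozen=True)
class Provider:
    name: str
    call: Callable[[str], str]   # takes a prompt, returns generated text
    cost_per_call_usd: float     # assumed flat cost per call, for simplicity


class BudgetedRouter:
    """Tries providers in order, skipping any that would breach the ceiling."""

    def __init__(self, providers: List[Provider], daily_budget_usd: float):
        self.providers = list(providers)
        self.daily_budget_usd = daily_budget_usd
        self.spent_usd = 0.0

    def generate(self, prompt: str) -> Tuple[str, str]:
        for provider in self.providers:
            if self.spent_usd + provider.cost_per_call_usd > self.daily_budget_usd:
                continue  # this call would exceed the cost ceiling
            try:
                result = provider.call(prompt)
            except Exception:
                continue  # provider failed; fall through to the next one
            self.spent_usd += provider.cost_per_call_usd
            return provider.name, result
        raise NoProviderAvailable("all providers failed or are over budget")


def hosted_stub(prompt: str) -> str:
    raise RuntimeError("simulated outage")  # stands in for a hosted API failure


def local_stub(prompt: str) -> str:
    return f"[local] {prompt}"  # stands in for a self-hosted model


router = BudgetedRouter(
    [Provider("hosted", hosted_stub, 0.05), Provider("local", local_stub, 0.01)],
    daily_budget_usd=1.00,
)
name, text = router.generate("summarize this report")
print(name, "->", text)
print(f"Spent so far: ${router.spent_usd:.2f}")
```

Here the hosted stub fails, so the request falls through to the local stub and only the local cost is recorded. A production version would also need to reset the budget daily and meter actual usage rather than a flat per-call figure.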

For teams watching this from the outside, the smart move is to audit AI features with the same discipline used for cloud spend. Measure cost per active user, cost per output, and retention after the novelty wears off. If a feature burns cash faster than it creates habit, it is a liability, not a moat.
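The three measurements named above can be computed from a simple usage log. The sketch below assumes each log row records a user, a date, and the compute cost of one output. The `UsageEvent` schema, the sample rows, and the window dates are all made up for illustration.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Set, Tuple

Window = Tuple[date, date]  # inclusive start and end dates


@dataclass(frozen=True)
class UsageEvent:
    user_id: str
    day: date
    cost_usd: float  # compute cost of one generated output


def cost_per_active_user(events: List[UsageEvent]) -> float:
    users = {e.user_id for e in events}
    return sum(e.cost_usd for e in events) / len(users)


def cost_per_output(events: List[UsageEvent]) -> float:
    return sum(e.cost_usd for e in events) / len(events)


def active_users(events: List[UsageEvent], window: Window) -> Set[str]:
    start, end = window
    return {e.user_id for e in events if start <= e.day <= end}


def retention(events: List[UsageEvent], first: Window, later: Window) -> float:
    """Share of users active in the first window who return in the later one."""
    cohort = active_users(events, first)
    if not cohort:
        return 0.0
    return len(cohort & active_users(events, later)) / len(cohort)


# Made-up sample log: three users try the feature, one comes back.
events = [
    UsageEvent("a", date(2025, 1, 1), 0.40),
    UsageEvent("a", date(2025, 1, 2), 0.40),
    UsageEvent("b", date(2025, 1, 1), 0.60),
    UsageEvent("c", date(2025, 1, 3), 0.60),
    UsageEvent("a", date(2025, 1, 20), 0.40),
]
launch_week = (date(2025, 1, 1), date(2025, 1, 7))
third_week = (date(2025, 1, 15), date(2025, 1, 21))

print(f"Cost per active user: ${cost_per_active_user(events):.2f}")
print(f"Cost per output:      ${cost_per_output(events):.2f}")
print(f"Retention:            {retention(events, launch_week, third_week):.0%}")
```

Tracking the retention figure alongside the two cost figures is what shows whether spend is buying habit or only novelty.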

Conclusion

Sora’s collapse is a warning, not a footnote. The next wave of AI products will be judged less by how impressive they look in a launch video and more by whether the unit economics survive real usage. If you are building in this space, the question to ask now is simple: what happens when your best-case demo becomes your average customer?
