LongMemEval-V2 tests agent memory in web workflows

A new benchmark checks whether agent memory can retain web-environment experience, not just user history, and improve long-term task recall.

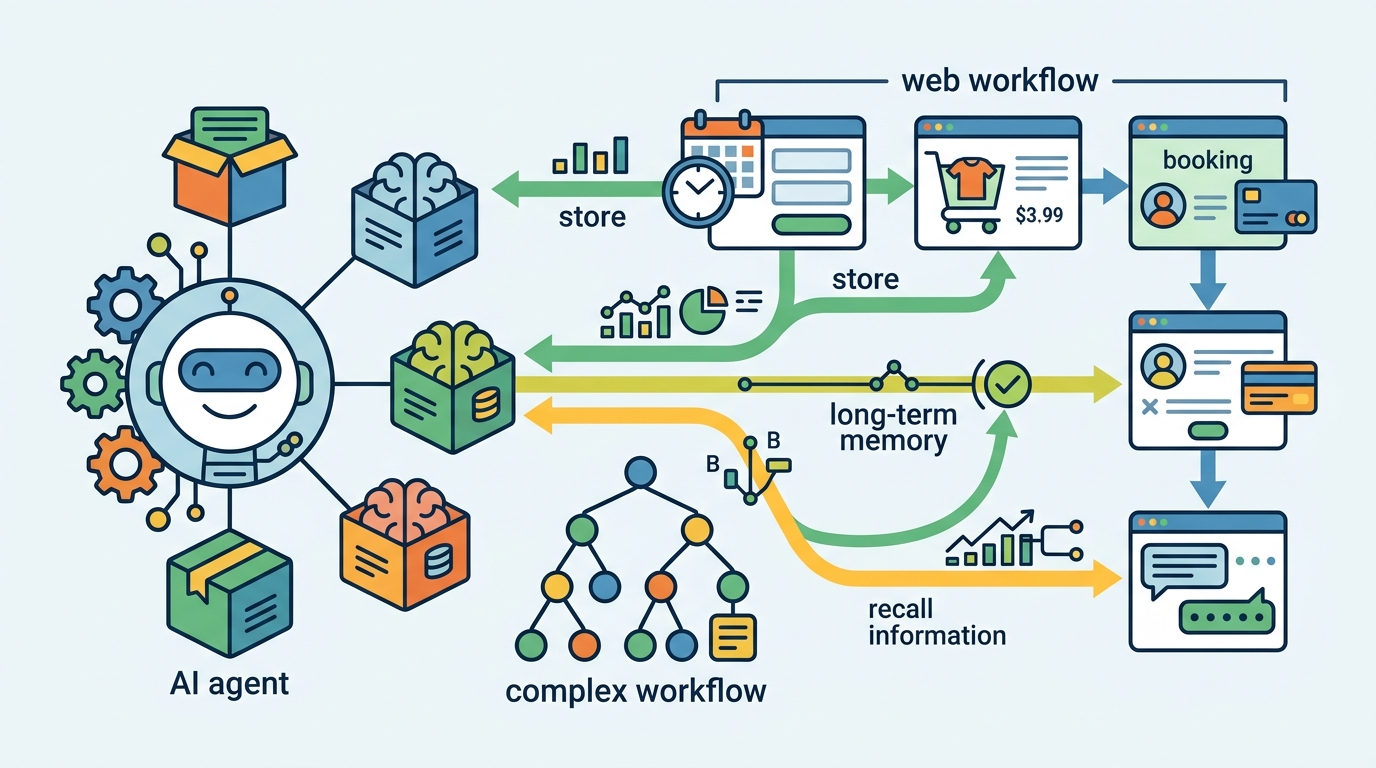

Long-term memory is one of the missing pieces for agents that work inside specialized web environments. The point is not just remembering what a user said, but recalling interface behavior, workflow patterns, and the kinds of failures that keep showing up in the same environment.

The paper, "LongMemEval-V2: Evaluating Long-Term Agent Memory Toward Experienced Colleagues," tries to measure that directly. Instead of judging memory only by downstream task success or short interaction traces, it asks a sharper question: can a memory system help an agent accumulate the kind of experience that makes it behave like a knowledgeable colleague?

What problem this paper is trying to fix

Most existing memory benchmarks for agents focus on user histories, short traces, or whether a task was completed. That leaves a gap. In real web environments, success often depends on remembering environment-specific details that are not obvious from a single session.

Those details include static interface facts, how the environment changes over time, what workflows tend to work, which mistakes are easy to repeat, and what assumptions are safe to make. If a benchmark does not test those things, it can overstate how useful a memory system really is.

LongMemEval-V2 (LME-V2 for short) is designed to close that gap. The benchmark is built around the idea that an agent should not just store facts; it should internalize experience from repeated exposure to the environment.

How the method works in plain English

LongMemEval-V2 contains 451 manually curated questions. They are organized around five memory abilities for web agents: static state recall, dynamic state tracking, workflow knowledge, environment gotchas, and premise awareness.
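To make the five categories concrete, here is a minimal Python sketch of how a team might tag graded questions by ability and compute accuracy per category. The `GradedQuestion` record and `accuracy_by_ability` helper are hypothetical names for illustration; the benchmark's actual data format is not described in the abstract.

```python
from collections import defaultdict
from dataclasses import dataclass
from enum import Enum


class MemoryAbility(Enum):
    # The five abilities named in the article.
    STATIC_STATE_RECALL = "static state recall"
    DYNAMIC_STATE_TRACKING = "dynamic state tracking"
    WORKFLOW_KNOWLEDGE = "workflow knowledge"
    ENVIRONMENT_GOTCHAS = "environment gotchas"
    PREMISE_AWARENESS = "premise awareness"


@dataclass(frozen=True)
class GradedQuestion:
    # Hypothetical record: one benchmark question plus a pass/fail grade.
    question_id: str
    ability: MemoryAbility
    correct: bool


def accuracy_by_ability(graded):
    """Return {ability: fraction correct} for abilities that appear in `graded`."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for item in graded:
        totals[item.ability] += 1
        hits[item.ability] += int(item.correct)
    return {ability: hits[ability] / totals[ability] for ability in totals}
```

A per-ability breakdown like this is the kind of view that would show which category a memory system struggles with, rather than hiding it inside a single average.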

Each question is paired with history trajectories. The paper says these histories can include up to 500 trajectories and 115M tokens, which makes this a genuinely long-context memory problem rather than a toy recall test.

The setup uses what the authors call a context gathering formulation. In practice, that means memory systems read the history trajectories and then return compact evidence that can be used for downstream question answering. So the benchmark is not only asking whether a model can store information, but whether it can retrieve the right slice of experience when needed.
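The shape of that formulation can be sketched as a two-step interface: ingest the trajectories, then return a compact evidence string for a question. The `ContextGatherer` protocol and the keyword-overlap `KeywordGatherer` below are assumptions made for illustration, not the paper's interface or either of its methods.

```python
import re
from typing import Protocol


class ContextGatherer(Protocol):
    # Hypothetical interface: read histories once, then answer evidence requests.
    def ingest(self, trajectories: list[str]) -> None: ...
    def gather(self, question: str, max_chars: int) -> str: ...


def _tokens(text: str) -> set[str]:
    # Lowercased alphanumeric words; no stopword removal in this toy version.
    return set(re.findall(r"[a-z0-9]+", text.lower()))


class KeywordGatherer:
    """Toy baseline: rank trajectories by word overlap with the question."""

    def __init__(self) -> None:
        self._trajectories: list[str] = []

    def ingest(self, trajectories: list[str]) -> None:
        self._trajectories.extend(trajectories)

    def gather(self, question: str, max_chars: int = 500) -> str:
        q = _tokens(question)
        ranked = sorted(
            self._trajectories, key=lambda t: len(q & _tokens(t)), reverse=True
        )
        evidence, used = [], 0
        for t in ranked:
            if not q & _tokens(t):
                break  # everything after this point has zero overlap
            if used + len(t) > max_chars:
                continue  # skip items that would exceed the evidence budget
            evidence.append(t)
            used += len(t)
        return "\n".join(evidence)
```

The `max_chars` budget is the important part of the sketch: the memory system is judged on returning a small, relevant slice, not on replaying the whole history.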

The paper evaluates two memory methods under this setup:

- AgentRunbook-R, an efficient RAG-based memory with knowledge pools for raw state observations, events, and strategy notes

- AgentRunbook-C, which stores trajectories as files and uses a coding agent to gather evidence in an augmented sandbox

That split is useful for practitioners because it compares a retrieval-heavy approach with a more tool-driven one. In other words, it asks whether classic RAG-style memory is enough, or whether agents need a more active evidence-gathering workflow to handle long-term experience.
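For the retrieval-heavy side, a rough sketch of pooled memory might look like the following. The three pool names mirror the article's description of AgentRunbook-R, but the `PooledMemory` class and its word-overlap scoring are stand-ins chosen for brevity; the paper's actual retrieval mechanism is not detailed here.

```python
import re
from collections import defaultdict

# Pool names follow the article's wording; the identifiers themselves are assumptions.
POOLS = ("observation", "event", "strategy_note")


def _tokens(text):
    return set(re.findall(r"[a-z0-9]+", text.lower()))


class PooledMemory:
    """Toy pooled store: separate lists per knowledge type, queried independently."""

    def __init__(self):
        self._pools = defaultdict(list)

    def add(self, pool, text):
        if pool not in POOLS:
            raise ValueError(f"unknown pool: {pool}")
        self._pools[pool].append(text)

    def retrieve(self, query, k_per_pool=1):
        # Score each entry by word overlap and keep the top k from every pool,
        # so one large pool cannot crowd out the others.
        q = _tokens(query)
        results = {}
        for pool in POOLS:
            scored = [(len(q & _tokens(t)), t) for t in self._pools[pool]]
            scored = [s for s in scored if s[0] > 0]
            scored.sort(key=lambda s: s[0], reverse=True)
            results[pool] = [t for _, t in scored[:k_per_pool]]
        return results
```

Keeping the pools separate means a question about workflow can still surface a strategy note even when raw observations vastly outnumber it. A real system would likely replace the overlap score with embedding similarity.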

What the paper actually shows

The strongest result in the abstract is that AgentRunbook-C reaches 72.5% average accuracy. That beats the strongest RAG baseline, which gets 48.5%, and also outperforms the off-the-shelf coding agent baseline at 69.3%.
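The size of those gaps is easy to work out from the three reported figures. The short Python snippet below uses only the numbers quoted above; the dictionary keys are descriptive labels, not names from the paper.

```python
# Average accuracies reported in the abstract (percent).
reported = {
    "AgentRunbook-C": 72.5,
    "strongest RAG baseline": 48.5,
    "off-the-shelf coding agent": 69.3,
}

best = reported["AgentRunbook-C"]

# Absolute gaps in percentage points, rounded to one decimal place.
gaps = {
    name: round(best - acc, 1)
    for name, acc in reported.items()
    if name != "AgentRunbook-C"
}

# Relative improvement over the RAG baseline, as a percentage of its accuracy.
rag = reported["strongest RAG baseline"]
relative_gain_over_rag = round(100 * (best - rag) / rag, 1)

print(gaps)
print(relative_gain_over_rag)
```

That works out to a 24.0-point lead over the strongest RAG baseline (roughly a 49.5% relative improvement) but only a 3.2-point lead over the off-the-shelf coding agent.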

Those numbers matter because they show a meaningful gap between simply retrieving stored information and using a coding agent to collect evidence from stored trajectories. The paper frames AgentRunbook-C as the best performer on the benchmark, and it says this also advances the accuracy-latency Pareto frontier.

At the same time, the paper is explicit about the tradeoff: coding-agent-based methods have high latency costs. So while AgentRunbook-C is better on accuracy, it is not free. For systems that need fast responses, that delay could be a serious engineering constraint.
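To see what an accuracy-latency Pareto frontier means in practice, the sketch below finds the methods that no other method beats on both axes, then picks the most accurate one within a latency budget. The method names and numbers are placeholders invented for illustration; the abstract gives no latency figures, so none of these values come from the paper.

```python
def pareto_frontier(points):
    """Return names of methods not dominated on (higher accuracy, lower latency)."""
    frontier = []
    for name, acc, lat in points:
        dominated = any(
            (a >= acc and l <= lat) and (a > acc or l < lat)
            for other, a, l in points
            if other != name
        )
        if not dominated:
            frontier.append(name)
    return frontier


def best_within_budget(points, max_latency):
    """Return the most accurate method whose latency fits the budget, or None."""
    eligible = [p for p in points if p[2] <= max_latency]
    if not eligible:
        return None
    return max(eligible, key=lambda p: p[1])[0]


# Placeholder (name, accuracy %, latency seconds) tuples; NOT results from the paper.
candidates = [
    ("method_a", 50.0, 2.0),
    ("method_b", 70.0, 40.0),
    ("method_c", 65.0, 60.0),
    ("method_d", 45.0, 3.0),
]

print(pareto_frontier(candidates))
print(best_within_budget(candidates, max_latency=10.0))
```

With these placeholder values, `method_c` and `method_d` drop out because another method is at least as good on both axes, and a 10-second budget forces the choice of the faster, less accurate `method_a`.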

The abstract does not provide more detailed benchmark breakdowns beyond the average accuracy figures, so there is not enough here to compare results across the five memory abilities individually. What the source does make clear is that LME-V2 is meant to be challenging, and that even the strongest approach still leaves substantial room for improvement.

Why developers should care

If you are building agents for specialized web environments, this paper is a reminder that memory is not just a storage problem. The useful unit of memory may be experience: state changes, workflow habits, recurring traps, and assumptions that are only visible after many trajectories.

That matters for any agent architecture that relies on retrieval, runbooks, or long-lived operational memory. A system that remembers the user but forgets the environment will still fail in the same places. LME-V2 gives teams a way to test whether their memory stack actually captures the environment knowledge that makes agents more competent over time.

The benchmark also highlights a practical design question: do you want faster retrieval from structured memory pools, or slower but more capable evidence gathering with a coding agent? The paper’s results suggest that the answer may depend on your latency budget and how much accuracy you need.

There are still open questions. The abstract does not say how well these methods transfer outside the benchmark, how sensitive the results are to the specific environment setup, or which of the five memory abilities is hardest. It also does not show whether the coding-agent approach remains practical under tight production latency constraints.

Even with those limits, LME-V2 looks useful as a stress test for long-term agent memory. It pushes the conversation away from “can the agent remember?” and toward a more operational question: can it actually learn the environment well enough to act like an experienced teammate?
