Claude vs ChatGPT vs Copilot vs Gemini for Enterprise

Claude leads on long-context work and coding benchmarks, while ChatGPT, Copilot, and Gemini win on reach, integration, and workflow fit.

Enterprise AI in 2026 is no longer a side experiment. ChatGPT Enterprise, Claude Enterprise, Microsoft Copilot, and Google Gemini now sit inside real budgets, security reviews, and procurement cycles. The interesting part is that they win for different reasons: one is strongest on long-context analysis, another on general chat usage, and the other two on integration with the office and cloud tools a company already runs.

If you are choosing a platform for a team, the wrong question is “which one is best?” The better question is “best for what job, inside which stack, with what controls?” That shift matters because each vendor is already selling a different strength: Anthropic says Claude Enterprise can handle 500,000+ tokens, Microsoft has pushed Copilot into the tools many companies already pay for, and Google is packaging Gemini as a business-wide interface across Workspace and Cloud.

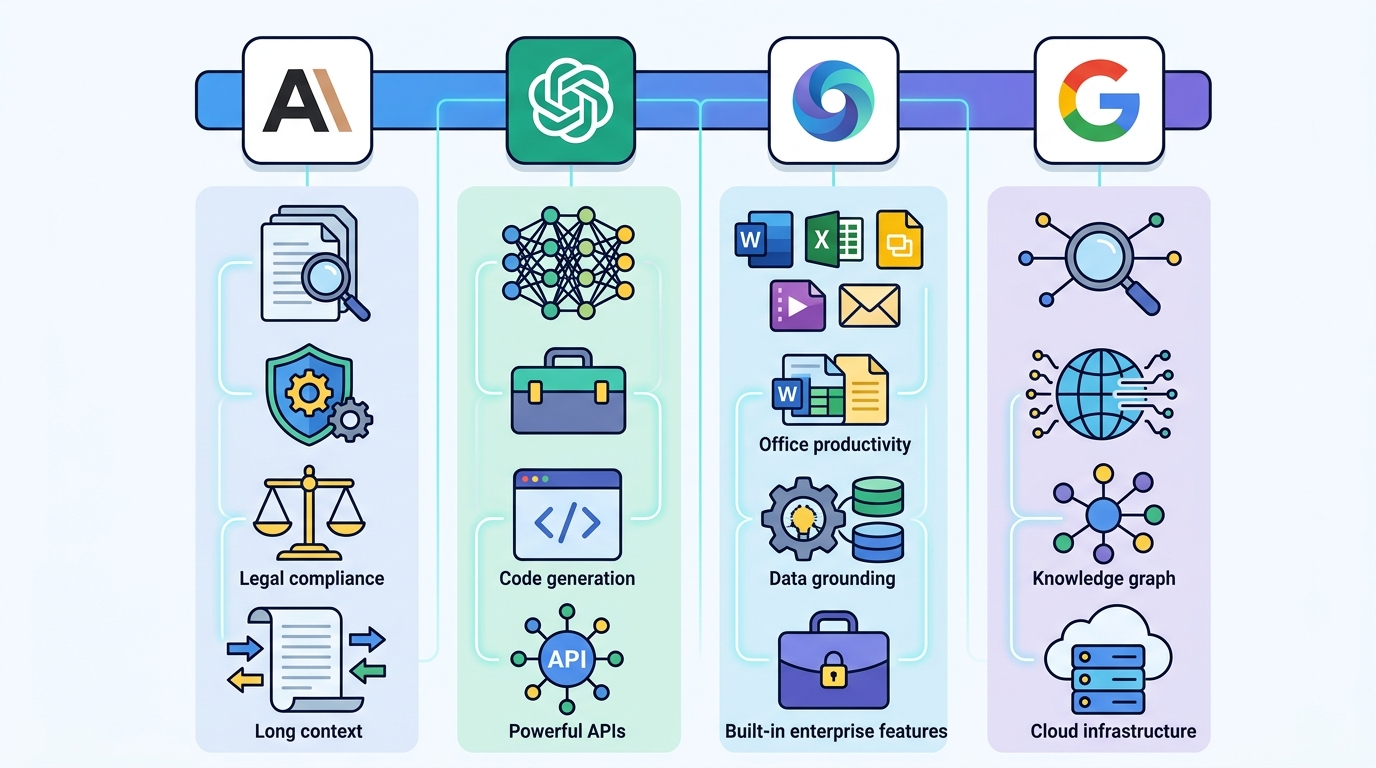

What each platform is really good at

The cleanest way to compare these four is by job, not by brand. ChatGPT Enterprise is the broadest general-purpose assistant. Claude is the one many teams reach for when the input is huge, the output needs judgment, or the task looks like reading, writing, or coding across a pile of documents. GitHub Copilot and Microsoft 365 Copilot fit teams already living in GitHub, Outlook, Excel, Teams, and SharePoint. Gemini is strongest when a company is already deep in Google Workspace or Google Cloud.

That split shows up in how buyers talk about outcomes. Some care about writing speed. Others care about code review quality, document analysis, or how easily an assistant can touch internal data without creating a compliance headache. In enterprise AI, integration often matters more than model benchmarks, because the tool that fits into daily work gets used and the tool that lives in a separate tab gets ignored.

- Claude Enterprise advertises 500,000+ token context windows, with experimental million-token support in some workflows.

- OpenAI says ChatGPT Enterprise keeps customer data out of model training.

- Microsoft Copilot draws value from Microsoft Graph, Azure identity, and existing admin controls.

- Google Gemini Enterprise connects to Workspace, Cloud, and third-party systems such as Salesforce and SAP.

Why Claude keeps winning the long-doc test

Claude’s biggest advantage is simple: it can keep a lot of information in its context window at once. That matters when a legal team drops in contracts, a finance group uploads quarterly reporting packages, or an engineering team wants one model to inspect a large codebase plus design docs plus issue threads. Anthropic has leaned hard into that use case, and the market has noticed.

One benchmark that keeps coming up is Terminal-Bench. Anthropic said Claude Sonnet 4.5 reached 65.4% on Terminal-Bench 2.0, which is a strong signal for agentic coding and terminal work. Anthropic also says Claude Enterprise can process very large document sets, which is why it keeps showing up in legal, financial, and research-heavy workflows.

“We are not just building a chatbot. We are building an AI that can be genuinely useful, and safe, for the long term.” — Dario Amodei, Anthropic co-founder and CEO, in Anthropic’s founding mission statement.

That quote matters because it explains the product shape. Anthropic has spent years selling caution, reliability, and long-context reasoning rather than flashy consumer features. In practice, that means Claude often feels like the model you hand to a team when accuracy, document handling, and policy controls matter more than novelty.

It also explains why Claude tends to get strong reactions from developers. When a model can ingest a giant repo, preserve more context across turns, and stay coherent over long prompts, it becomes useful in places where shorter-context models start to lose the thread.
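To make the long-context point concrete, here is a minimal sketch of a pre-flight check a team might run before sending a document set to a long-context model. The 500,000-token budget is the figure cited earlier in this article. Everything else is an assumption for illustration: the 4-characters-per-token ratio is only a rough rule of thumb for English text, and the function names and the reserved-output value are hypothetical. A real check would use the provider's own token counter.

```python
from dataclasses import dataclass

# Assumption: rough heuristic for English prose, not an exact tokenizer.
CHARS_PER_TOKEN = 4
# Context budget taken from the 500,000-token figure cited in this article.
CONTEXT_BUDGET_TOKENS = 500_000


@dataclass
class FitReport:
    estimated_tokens: int
    budget_tokens: int
    fits: bool


def estimate_tokens(text: str) -> int:
    """Estimate token count from character length (ceiling division)."""
    return -(-len(text) // CHARS_PER_TOKEN)


def check_fit(documents: list[str], reserved_for_output: int = 8_000) -> FitReport:
    """Report whether the documents fit while leaving room for the reply.

    reserved_for_output is a hypothetical allowance for the model's answer.
    """
    total = sum(estimate_tokens(doc) for doc in documents)
    budget = CONTEXT_BUDGET_TOKENS - reserved_for_output
    return FitReport(total, budget, total <= budget)


if __name__ == "__main__":
    # Hypothetical input: three contracts of about 600,000 characters each.
    contracts = ["x" * 600_000 for _ in range(3)]
    print(check_fit(contracts))
```

Under these assumptions, three 600,000-character contracts come to roughly 450,000 estimated tokens and fit in one pass, while a single 2,000,000-character export would not and would need to be split or summarized first.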

ChatGPT, Copilot, and Gemini win on distribution

Claude may be strong on long-context work, but distribution is still king. ChatGPT remains the most recognizable AI product in the market, and OpenAI’s enterprise push built on that brand momentum. For many companies, ChatGPT is the default “try this first” tool because employees already know how it works. That creates adoption without a long training curve.

GitHub Copilot and Microsoft 365 Copilot have a different advantage: they sit inside software companies already use every day. If a finance team can ask questions in Excel, a sales team can draft in Outlook, and a developer can autocomplete code in VS Code, adoption becomes part of the workflow instead of a separate initiative. Microsoft has also used its security and identity stack as a selling point, which matters in regulated companies.

Gemini is the most interesting option for Google-centric organizations. Google is positioning it as a front door to AI across Workspace and Cloud, and that matters for companies already using Gmail, Docs, Drive, BigQuery, and Vertex AI. In those environments, the question is less about whether the model is good enough and more about how much friction disappears when the assistant already knows where the data lives.

- StatCounter data cited in the source article put ChatGPT near 81% of global chatbot traffic by mid-2025.

- The same source put standalone Copilot usage around 4% to 5% in summer 2025.

- A16Z’s 2025 CIO survey found 78% of Global 2000 companies used OpenAI models in production.

- The same survey said 81% of Global 2000 firms used three or more model families.

What the enterprise numbers say

The most important enterprise trend is not that one vendor is winning everything. It is that companies are mixing vendors on purpose. A16Z’s CIO survey showed that many large organizations now run multiple model families at the same time. That makes sense. One model may be best for writing and brainstorming, another for code, another for internal search, and another for sensitive document workflows.

The case studies in the source article tell the same story. OpenAI says ChatGPT was tried by nine in ten Fortune 500 companies early on. Microsoft points to customers like BNY Mellon using GitHub Copilot broadly across developers. Anthropic cites Novo Nordisk cutting clinical document work from 10+ weeks to about 10 minutes, and Cox Automotive using Claude agents to speed up listing creation and appointment flows. Google has pushed Gemini Enterprise into companies such as Figma, Gap, and Macquarie Bank.

Those examples are useful because they show where the value comes from. It is rarely “the model answered a question.” It is usually “the model removed hours from a repeated process.” That difference is why enterprise buyers keep asking about context windows, admin controls, audit logs, and data residency instead of just benchmark scores.

For a practical comparison, here is the short version:

- Choose ChatGPT Enterprise if you want broad adoption, strong general-purpose output, and a familiar interface.

- Choose Claude Enterprise if your work involves long documents, codebases, or analysis that needs a lot of context.

- Choose Microsoft Copilot if your company already runs on Microsoft 365, Azure, and GitHub.

- Choose Gemini Enterprise if your data and workflows already live in Google Workspace and Google Cloud.
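The short version above can also be read as a simple routing rule. The sketch below is one hypothetical way to encode it per team; the `TeamProfile` fields and the `shortlist` function are illustrative names, not part of any vendor's tooling, and the choice to always include ChatGPT Enterprise as a general-purpose baseline is an assumption drawn from its "try this first" role described earlier.

```python
from dataclasses import dataclass


@dataclass
class TeamProfile:
    # Hypothetical inputs a buyer might collect for each team.
    stack: str                       # "microsoft", "google", or "mixed"
    long_context_work: bool = False  # long documents, codebases, deep analysis


def shortlist(profile: TeamProfile) -> list[str]:
    """Return platforms to pilot first, following the list above."""
    picks: list[str] = []
    if profile.long_context_work:
        picks.append("Claude Enterprise")
    if profile.stack == "microsoft":
        picks.append("Microsoft Copilot")
    elif profile.stack == "google":
        picks.append("Gemini Enterprise")
    # Assumption: the broad general-purpose option is always piloted as a baseline.
    picks.append("ChatGPT Enterprise")
    return picks


if __name__ == "__main__":
    legal_team = TeamProfile(stack="microsoft", long_context_work=True)
    print(shortlist(legal_team))
```

Returning a list rather than a single name is deliberate: it matches the multi-vendor pattern the survey data points to, where one team may reasonably pilot two or three assistants side by side.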

Bottom line for 2026 buyers

If I were buying for a large company in 2026, I would not ask for a single winner. I would ask for a pilot plan. Put Claude on the messy-document and code-heavy workflows, test ChatGPT with broad employee use, compare Copilot inside Microsoft-heavy teams, and see whether Gemini reduces friction in Google-native departments. The best model is the one that gets adopted, passes security review, and saves measurable time.

The next buying cycle will probably favor companies that treat AI like a portfolio, not a religion. The real question is which team can prove a return in 90 days without creating compliance problems or another shelfware subscription. If your organization is still choosing one assistant for every job, that is the wrong procurement model for 2026.
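Proving a return in 90 days comes down to simple arithmetic once a pilot measures time saved. The sketch below shows one way to set it up. Every number in the example is hypothetical, the function and parameter names are illustrative, and treating 90 days as 13 weeks and 3 billing months is a simplifying assumption.

```python
def pilot_return(hours_saved_per_user_week: float,
                 users: int,
                 loaded_hourly_cost: float,
                 seat_cost_per_user_month: float,
                 weeks: int = 13,
                 months: int = 3) -> dict[str, float]:
    """Compare the value of time saved against seat cost over a pilot.

    Defaults approximate a 90-day pilot as 13 weeks and 3 billing months.
    """
    if users <= 0 or seat_cost_per_user_month <= 0:
        raise ValueError("users and seat cost must be positive")
    value = hours_saved_per_user_week * users * weeks * loaded_hourly_cost
    cost = seat_cost_per_user_month * users * months
    return {"value": value, "cost": cost, "net": value - cost, "ratio": value / cost}


if __name__ == "__main__":
    # Hypothetical pilot: 50 users, 2 hours saved per week each,
    # a loaded labor cost of 60 per hour, and a seat price of 30 per month.
    print(pilot_return(2, 50, 60, 30))
```

The calculation counts only seat cost. Security review time, integration work, and training would all sit on the cost side in a real business case, so a pilot that looks strong here still needs those added before it goes to procurement.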

For more context on how enterprise AI is being packaged into products and workflows, see our related coverage on Claude Code and OpenAI enterprise tools.
