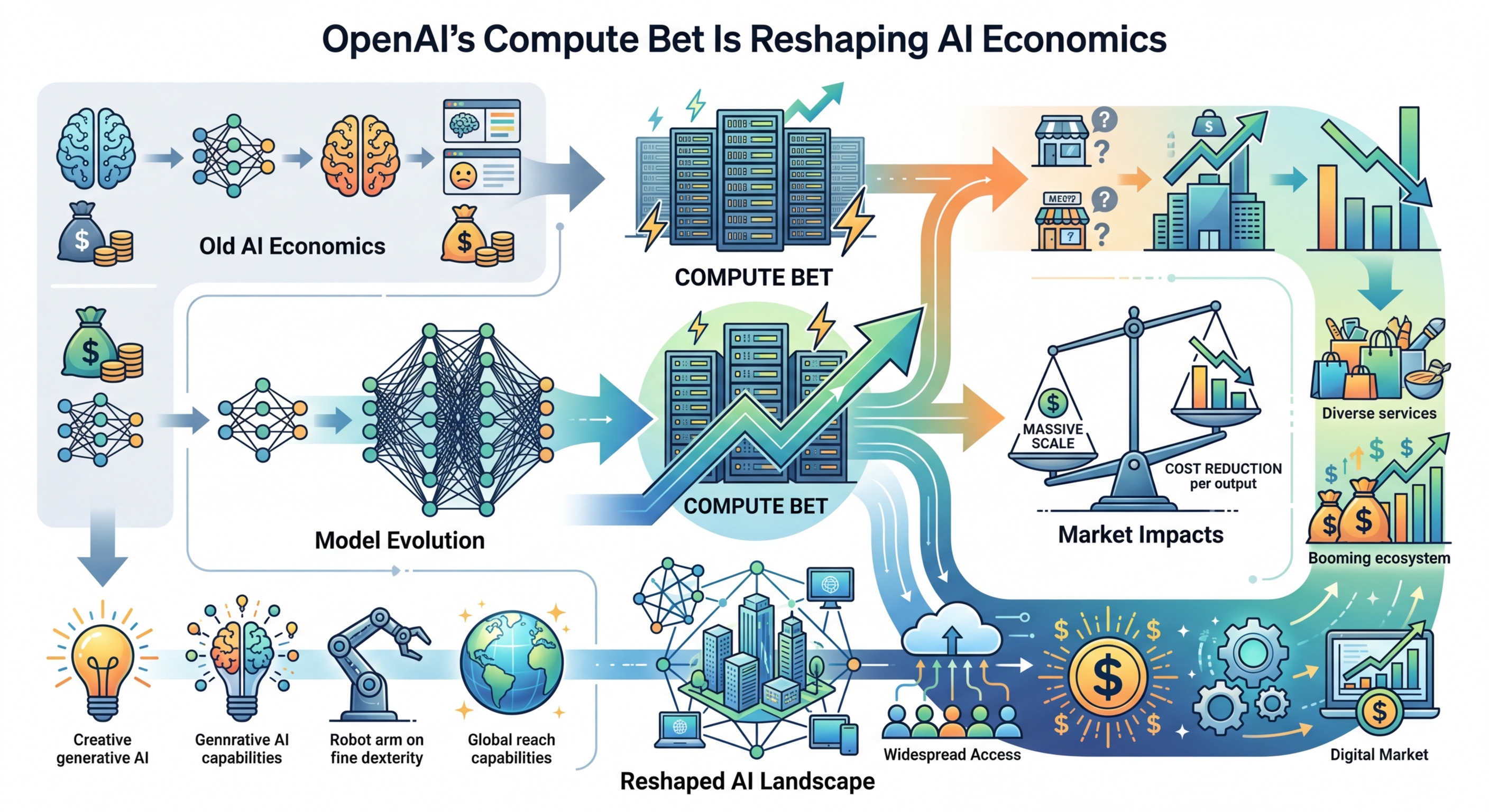

OpenAI’s Compute Bet Is Reshaping AI Economics

OpenAI’s spending on chips, power, and data centers is exposing a hard truth: AI demand is real, but the business math is still shaky.

OpenAI’s business has grown at a startling pace, with reported annualized revenue above $20 billion in 2025. At the same time, the company has been linked to infrastructure commitments measured in gigawatts, not racks. The gap between how fast demand is growing and how much it costs to serve is now one of the most important stories in tech.

The short version: OpenAI wants far more compute than any one vendor can reasonably provide, and it wants that compute at lower cost for inference. That is pushing the whole stack to change, from chips and foundries to power contracts and debt markets.

Why OpenAI is moving beyond Nvidia

For years, Nvidia has owned the premium AI chip market with products such as the H100 and the newer Blackwell line. But training and inference are starting to split into different businesses with different cost structures. Training still rewards top-end general-purpose accelerators. Inference rewards lower latency, better efficiency, and tighter control over unit economics.

That helps explain why OpenAI has reportedly expanded its supplier mix. The company has been tied to a large deal with Cerebras for inference capacity, has reportedly rented Google TPU systems, and is said to be working with Broadcom on an in-house inference chip that would be manufactured by TSMC on a 3 nm process.

This looks less like supplier diversification for its own sake and more like financial necessity. If inference costs stay too high, every successful product turns into a larger bill. That is a bad place to be when usage is rising fast.

- OpenAI reportedly crossed $20 billion in annualized revenue in 2025, up sharply from roughly $2 billion in 2023.

- Industry claims cited in the source article say Google TPU inference efficiency can exceed comparable GPU setups by more than 2x in some scenarios.

- The same article says OpenAI’s custom inference chip with Broadcom is targeting production in 2026 using TSMC’s 3 nm manufacturing.

- Morgan Stanley has projected pressure on Nvidia’s AI chip share as inference workloads spread across more specialized hardware.

The bigger takeaway is simple: the era of one default chip for every AI job is ending. Training clusters and inference fleets are starting to optimize for different goals, and that opens the door for more vendors.

Power is becoming the real bottleneck

AI infrastructure discussions still focus on chips, though power is often the harder constraint. A data center full of accelerators is useless if the site cannot get enough electricity, cooling, and grid access. That is why OpenAI’s compute story now overlaps with utilities, batteries, and gas turbines.

The roster of infrastructure partners named in the source article shows the scale involved. Oracle, Crusoe, CoreWeave, Nvidia, AMD, and Broadcom have all been connected to OpenAI’s broader compute buildout. The article claims total commitments above 26 GW. Even if that figure shifts over time, the order of magnitude matters: this is utility-scale demand.

Crusoe’s Texas project is one of the clearest examples. The company has described large AI data center plans in Abilene, and the source article says the site has expanded to 1.2 GW across multiple buildings. It also notes work with Lancium on battery storage, solar integration, and gas backup. That mix shows what AI infrastructure now requires: not just servers, but energy engineering.

“America has a real opportunity to build a lot more datacenter capacity than any other country.”

— Satya Nadella

Nadella has also repeatedly pointed to electricity and construction capacity as limiting factors in AI expansion. That fits the market reality. A shortage of advanced chips hurts, but a shortage of power strands already-purchased hardware and delays revenue.

The business model still looks uncomfortable

The bullish case for AI is easy to see in demand metrics. Microsoft has reported strong Azure growth tied to AI workloads. Google Cloud has talked publicly about surging use of Gemini APIs. TSMC’s capex plans reflect confidence that customers will keep ordering advanced silicon.

The harder question is whether that demand translates into healthy margins for model providers. The source article argues that OpenAI’s inference costs have exceeded related revenue in some periods. If that framing is directionally correct, it explains a lot: the rush toward custom chips, the need for lower-cost inference partners, and experiments with new monetization such as advertising.

This is where the AI market feels less like software and more like airlines or telecom. Usage growth alone does not guarantee attractive economics when infrastructure bills rise alongside demand. If every extra user creates heavy compute cost, scale can punish a company before it helps it.
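
A toy model makes the point concrete. The sketch below assumes compute is a purely variable cost that scales with each user, while everything else is a fixed annual cost. The function name `annual_operating_result` and every number in it are hypothetical, chosen only to show the shape of the math; they are not OpenAI’s reported figures.

```python
# Hypothetical unit-economics sketch: illustrative numbers only,
# not OpenAI's actual revenue or costs.

def annual_operating_result(users: int,
                            revenue_per_user: float,
                            compute_cost_per_user: float,
                            fixed_costs: float) -> float:
    """Return yearly revenue minus costs, in dollars.

    Compute is modeled as a purely variable cost (it grows with users);
    fixed_costs stands in for staff, research, and other overhead.
    """
    contribution_per_user = revenue_per_user - compute_cost_per_user
    return users * contribution_per_user - fixed_costs


if __name__ == "__main__":
    fixed = 1_000_000_000.0  # assumed $1B of fixed annual costs
    for users in (10_000_000, 100_000_000, 500_000_000):
        # Case A: each user costs more to serve than they pay.
        a = annual_operating_result(users, 20.0, 25.0, fixed)
        # Case B: per-user compute cost has fallen below per-user revenue.
        b = annual_operating_result(users, 20.0, 12.0, fixed)
        print(f"{users:>11,} users  A: {a / 1e9:+.2f}B  B: {b / 1e9:+.2f}B")
```

With those made-up inputs, case A loses more money as it adds users, because each one subtracts $5 before fixed costs are even counted. Case B still loses money at small scale but turns positive once enough users cover the fixed base. That is the difference between scale that punishes and scale that helps.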

- The source article cites a projected OpenAI cash burn of $17 billion in 2026, up from about $9 billion in 2025.

- It also cites long-range infrastructure and leasing obligations that could reach hundreds of billions of dollars by 2030.

- JPMorgan and other financial firms have highlighted the growing role of debt and private credit in funding AI-related infrastructure.

- Meta has reportedly tapped private credit markets for large data center financing, a sign that this financing model extends far beyond OpenAI.

That financing angle matters because it spreads AI risk across more of the economy. If top model companies hit margin problems or slow their spending, the effect would not stop with chip vendors. It would hit cloud providers, builders, utilities, lenders, and regional energy plans.

What the next 24 months will decide

There are two plausible paths from here. In one path, model providers lower inference costs fast enough through better chips, smarter routing, smaller models, and stronger pricing. If that happens, the current infrastructure buildout looks aggressive but rational. Nvidia still wins big, but so do Cerebras, Google’s TPU business, AMD, Broadcom, TSMC, and the operators that can actually power these clusters.

In the other path, demand keeps rising while margins stay weak. Then AI starts to look like a capital-intensive utility business with software valuations attached to it. That would put pressure on supplier contracts, financing structures, and expansion plans across the stack. It would also raise a blunt question: how many users need to convert into paying enterprise seats, API revenue, or ad-supported products before this math works?

My read is that inference economics will decide more than model quality over the next two years. The company that delivers useful AI at materially lower cost per query will shape the next phase of the market. If you are tracking AI as an investor, operator, or developer, watch four numbers closely: power capacity, inference cost per token, enterprise conversion, and utilization of newly built clusters.
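
Two of those numbers are tightly linked. A rough sketch, assuming a cluster’s all-in hourly cost (amortized hardware, power, cooling, operations) stays the same whether or not it is busy, shows why utilization drives cost per token. The function `cost_per_million_tokens` and the inputs in the usage loop are hypothetical illustrations, not vendor or OpenAI figures.

```python
# Hypothetical sketch: how cluster utilization feeds into inference cost per token.
# All inputs are illustrative assumptions, not reported figures.

def cost_per_million_tokens(hourly_cluster_cost: float,
                            peak_tokens_per_second: float,
                            utilization: float) -> float:
    """Return dollars per one million tokens served.

    hourly_cluster_cost: assumed all-in cost of running the cluster for an hour.
    peak_tokens_per_second: throughput if the cluster were fully busy.
    utilization: fraction of that peak actually used, in (0, 1].
    """
    if not 0.0 < utilization <= 1.0:
        raise ValueError("utilization must be in (0, 1]")
    # Tokens actually served in one hour at this utilization level.
    tokens_per_hour = peak_tokens_per_second * 3600 * utilization
    return hourly_cluster_cost / tokens_per_hour * 1_000_000


if __name__ == "__main__":
    # Same hypothetical cluster ($3,600/hour, 1M tokens/s peak) at different loads.
    for u in (0.9, 0.6, 0.3):
        cost = cost_per_million_tokens(3600.0, 1_000_000.0, u)
        print(f"utilization {u:.0%}: ${cost:.2f} per 1M tokens")
```

Because the hourly bill does not shrink when hardware sits idle, cost per token scales inversely with utilization: under these assumptions, a cluster running at 30% costs three times as much per token as the same cluster at 90%.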

OpenAI has forced the industry into a huge infrastructure sprint. The next step is proving that all this compute can produce durable margins instead of bigger bills. If that proof does not arrive soon, the AI stack may stop asking who has the best model and start asking who can afford to keep serving one.
