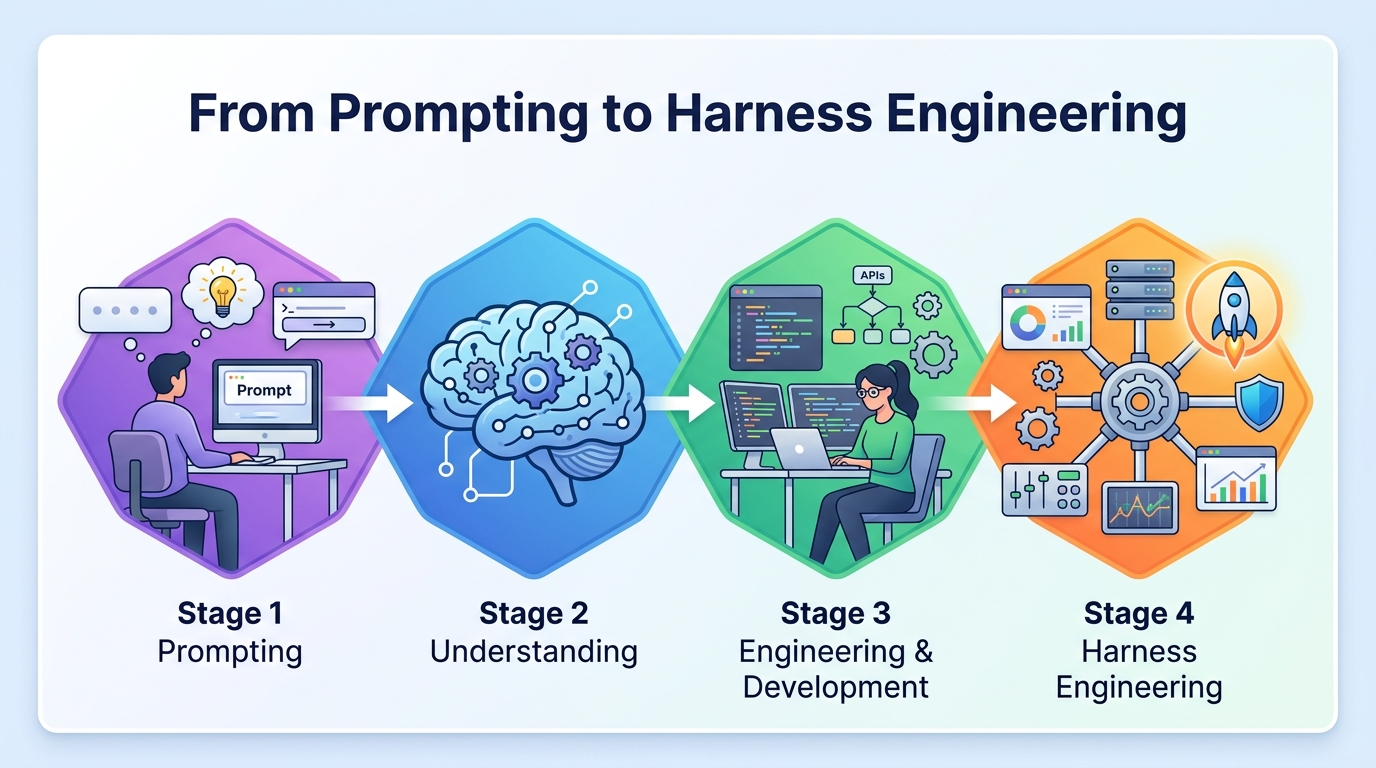

From Prompting to Harness Engineering

OpenAI says one team shipped a 1M-line product with 3 engineers and Codex, merging about 1,500 PRs in 5 months.

OpenAI says one team built a product with more than 1 million lines of code using Codex, three engineers, and five months of work. The team merged about 1,500 pull requests, which OpenAI puts at an average of roughly 3.5 PRs per engineer per day. That number matters because it hints at a deeper shift: the scarce skill is moving from typing code to shaping the system around the agent that writes it.

The word for that system is harness. In OpenAI’s framing, a harness is the environment, tooling, checks, and feedback loops that let an agent work reliably inside a real codebase. If prompting was the first wave of AI engineering, and tool use was the second, harness design is the next one.

That sounds abstract until you look at what actually changed. The best teams are no longer asking, “How do we get the model to produce code?” They are asking, “How do we make the model safe, fast, and useful inside our repo, our CI, and our review process?” That is a much harder question, and it is where the real engineering work is moving.

What OpenAI’s Codex team actually did

OpenAI’s “Harness engineering” post is interesting because it is packed with operational detail instead of marketing language. The team did not treat Codex like a chat interface. They treated it like a contributor that needed guardrails, fast feedback, and a workspace designed around agent behavior.

The headline numbers are the part people will remember, but the process is the part worth studying. Three engineers, five months, about 1,500 merged PRs, and a codebase that crossed 1 million lines. That is a lot of output for a small team, but it also implies a lot of invisible work: setting up tests, making failures legible, defining task boundaries, and deciding when the agent should stop and ask for help.

- Team size: 3 engineers

- Timeline: 5 months

- Pull requests merged: about 1,500

- Codebase size: more than 1 million lines

- Average output: about 3.5 PRs per engineer per day
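
As a rough check on that average, the sketch below divides merged PRs by engineer-days. It assumes five months is about 150 calendar days, since the exact day count is not stated here, and the function names are illustrative only.

```python
def prs_per_engineer_per_day(total_prs: int, engineers: int, days: float) -> float:
    """Average merged PRs per engineer per calendar day."""
    return total_prs / (engineers * days)


def implied_days(total_prs: int, engineers: int, rate: float) -> float:
    """Calendar days needed to reach total_prs at a given per-engineer daily rate."""
    return total_prs / (engineers * rate)


# Assumption: five months is roughly 150 calendar days.
print(round(prs_per_engineer_per_day(1_500, 3, 150), 2))  # 3.33
# How many calendar days a 3.5/day average would correspond to:
print(round(implied_days(1_500, 3, 3.5)))                 # 143
```

At 150 calendar days the average is about 3.3; a 3.5 average corresponds to roughly 143 days, and counting only working days would push the figure higher. Either way, the reported number is in the right range.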

Those numbers do not mean engineers are obsolete. They mean the bottleneck has moved. The hard part is no longer getting code generated at all. The hard part is creating a setup where generated code is worth merging.

Why the harness matters more than the prompt

Prompting helped people get useful outputs from large language models, but it was always fragile. A prompt can describe what you want, yet it cannot enforce test coverage, code style, repo conventions, or deployment safety. A harness can do those things because it is built into the workflow around the model.

This is why agent-first development is different from the old “ask the model for a snippet” habit. A good harness gives the agent access to the right files, constrains the task size, runs checks automatically, and feeds failures back into the loop. In practice, that means the model is not improvising in a vacuum. It is operating inside a system that narrows mistakes and makes correction cheap.
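
The loop that paragraph describes can be sketched in a few lines. This is a minimal illustration, not OpenAI’s implementation: `run_with_harness`, `toy_agent`, and `toy_test` are hypothetical names, the agent is a stub rather than a model call, and the budget of three attempts is an arbitrary assumption.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical types: an agent maps (task, feedback) to a candidate change,
# and each check returns a list of readable failure messages (empty = pass).
Agent = Callable[[str, list[str]], str]
Check = Callable[[str], list[str]]


@dataclass
class HarnessResult:
    status: str  # "ready_for_review" or "needs_human"
    candidate: str
    attempts: int
    failures: list[str] = field(default_factory=list)


def run_with_harness(task: str, agent: Agent, checks: list[Check],
                     max_attempts: int = 3) -> HarnessResult:
    """Run the agent, feed check failures back into it, stop after max_attempts."""
    feedback: list[str] = []
    candidate = ""
    for attempt in range(1, max_attempts + 1):
        candidate = agent(task, feedback)
        # Run every check automatically and collect all failures, not just the first.
        feedback = [msg for check in checks for msg in check(candidate)]
        if not feedback:
            return HarnessResult("ready_for_review", candidate, attempt)
    # Out of budget: hand the last candidate and its failures to a human.
    return HarnessResult("needs_human", candidate, max_attempts, feedback)


def toy_agent(task: str, feedback: list[str]) -> str:
    # Stub agent: produces a buggy version first, then "fixes" it once it sees feedback.
    return "def add(a, b): return a + b" if feedback else "def add(a, b): return a - b"


def toy_test(candidate: str) -> list[str]:
    # exec is acceptable for a toy; a real harness would run tests in a sandbox.
    namespace: dict = {}
    exec(candidate, namespace)
    return [] if namespace["add"](2, 3) == 5 else ["test_add: expected add(2, 3) == 5"]


result = run_with_harness("implement add", toy_agent, [toy_test])
print(result.status, result.attempts)  # ready_for_review 2
```

The design choice worth noting is the hard attempt budget: instead of letting the agent retry forever, the harness hands unresolved work, together with its failure messages, back to a person.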

OpenAI’s post makes a subtle but important point: the best productivity gains come from engineering the environment, not from writing a better prompt. That is a big shift for teams that still think AI adoption means asking developers to chat with a model more often.

“The future of software development is not about replacing developers, but about augmenting them with AI.” — Satya Nadella, Microsoft Build 2023 keynote

Nadella’s quote is broad, but the Codex example gives it a concrete shape. Augmentation is not a vague promise here. It is a workflow where the human designs the system, and the agent executes inside it with measurable output.

How this compares with older AI coding workflows

For the last couple of years, most AI coding talk centered on copilots, autocomplete, and chat-based code generation. Those tools were useful, but they still assumed the human would do most of the integration work. The agent-first model changes the ratio.

Instead of asking a model for a function and then manually stitching it into a product, the engineer defines a task, lets the agent work, and reviews the result. That sounds similar on paper, but the numbers show the difference in practice. When a small team can merge around 1,500 PRs in five months, the review loop becomes a production line for validated changes, not a pile of half-finished suggestions.

- GitHub Copilot is best known as an inline assistant for writing code faster

- Codex in an agent setup can take on multi-step repo tasks

- Claude Code follows a similar agentic pattern for terminal-first coding

- Aider shows how much of the work can be pushed into a repo-aware loop

The difference is not cosmetic. Autocomplete helps you type faster. An agent with a good harness can work on a branch, run tests, fix failures, and come back with something reviewable. That is a higher level of abstraction, and it changes how teams think about staffing, scheduling, and code ownership.

What engineers need to build now

If harness engineering becomes the default pattern, then the job description for strong engineers changes fast. You still need people who understand architecture and debugging, but you also need people who can design agent-friendly workflows. That means clear task decomposition, reliable test suites, readable logs, and repo structures that do not confuse automated contributors.

It also means teams need to think about failure modes in a more disciplined way. An agent can be fast and still be wrong in expensive ways. The job is to make mistakes cheap to catch. That includes tighter CI, better diff review, stronger permission boundaries, and clearer instructions for what the agent should not touch.
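
A permission boundary can start as a simple path check applied before any agent write lands. The sketch below assumes repo-relative POSIX paths and a hypothetical allow/deny policy; `is_write_allowed` is an illustrative name. It works on strings only and does not resolve symlinks, so in practice it would sit on top of filesystem or container isolation rather than replace it.

```python
import posixpath


def is_write_allowed(path: str, allowed: list[str], denied: list[str]) -> bool:
    """True if a repo-relative path is under an allowed prefix and no denied prefix."""
    norm = posixpath.normpath(path)
    # Reject absolute paths and paths that climb out of the repo root.
    if posixpath.isabs(norm) or norm == ".." or norm.startswith("../"):
        return False

    def under(prefix: str) -> bool:
        p = posixpath.normpath(prefix)
        return norm == p or norm.startswith(p + "/")

    # Deny rules win over allow rules.
    if any(under(p) for p in denied):
        return False
    return any(under(p) for p in allowed)


# Hypothetical policy: the agent may edit billing code and tests, but not migrations.
ALLOWED = ["services/billing", "tests/billing"]
DENIED = ["services/billing/migrations"]

print(is_write_allowed("services/billing/api.py", ALLOWED, DENIED))              # True
print(is_write_allowed("services/billing/migrations/0001.py", ALLOWED, DENIED))  # False
print(is_write_allowed("services/billing/../auth/keys.py", ALLOWED, DENIED))     # False
```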

For teams trying to adopt this style, the practical checklist is already visible:

- Break work into tasks the agent can finish in one pass

- Make tests fast enough that the agent can iterate without waiting forever

- Keep logs and errors readable for humans and machines

- Limit write access so the agent cannot wander across unrelated parts of the repo

- Measure merged output, not just generated output
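
The first checklist item can be enforced mechanically with a size budget on the agent’s diff. This sketch parses the tab-separated output of `git diff --numstat` (added lines, deleted lines, path) and flags changes that exceed a budget; the `DiffBudget` limits of 10 files and 400 changed lines are placeholder assumptions, not recommendations from the post.

```python
from dataclasses import dataclass


@dataclass
class DiffBudget:
    # Placeholder limits; tune them per repository.
    max_files: int = 10
    max_changed_lines: int = 400


def parse_numstat(text: str) -> list[tuple[str, int, int]]:
    """Parse `git diff --numstat` lines: tab-separated <added>, <deleted>, <path>."""
    rows = []
    for line in text.splitlines():
        if not line.strip():
            continue
        added, deleted, path = line.split("\t", 2)
        # Binary files show "-" instead of line counts; count them as zero lines.
        rows.append((path, 0 if added == "-" else int(added), 0 if deleted == "-" else int(deleted)))
    return rows


def over_budget(rows: list[tuple[str, int, int]], budget: DiffBudget) -> list[str]:
    """Return the reasons a diff is too large for one pass (empty list if it fits)."""
    reasons = []
    if len(rows) > budget.max_files:
        reasons.append(f"touches {len(rows)} files (limit {budget.max_files})")
    changed = sum(added + deleted for _, added, deleted in rows)
    if changed > budget.max_changed_lines:
        reasons.append(f"changes {changed} lines (limit {budget.max_changed_lines})")
    return reasons


sample = "12\t3\tsrc/app.py\n-\t-\tassets/logo.png\n"
print(over_budget(parse_numstat(sample), DiffBudget(max_files=1)))
# ['touches 2 files (limit 1)']
```

A change that trips the budget is a signal to split the task, not necessarily evidence that the change is wrong.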

That last point is the one many teams miss. Generated code is cheap. Merged code is what counts. A harness is good when it turns messy model output into code that survives review, tests, and deployment.
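
Measuring merged output can start with a merge rate over the PRs an agent opens. In this sketch, each PR is reduced to a hypothetical status string (`"merged"`, `"closed"`, or `"open"`), and open PRs are left out because their outcome is not known yet.

```python
from collections import Counter


def merge_rate(statuses: list[str]) -> float:
    """Share of decided agent PRs that were merged.

    Each entry is "merged", "closed", or "open"; open PRs are skipped
    because their outcome is not known yet.
    """
    counts = Counter(statuses)
    decided = counts["merged"] + counts["closed"]
    return counts["merged"] / decided if decided else 0.0


print(round(merge_rate(["merged", "merged", "closed", "open"]), 2))  # 0.67
```

Tracking this alongside raw PR counts keeps a high-volume agent from looking productive when most of its work is thrown away.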

What this means for the next wave of AI teams

The most interesting part of OpenAI’s example is not that a model wrote code. Plenty of teams can get a model to write code. The interesting part is that a small group of engineers built a system where the model could keep producing acceptable changes over months, inside a real product workflow.

That suggests a near-term split in the market. Teams that treat AI as a chat assistant will get incremental gains. Teams that build harnesses around their agents will get compounding gains, because every better test, every clearer repo rule, and every cleaner review loop makes the next task easier.

My guess is that the next hiring signal will not be “prompt engineer,” which already feels dated. It will be people who can design agent workflows, debug model failures in production-like settings, and turn messy codebases into places where agents can work without constant rescue.

If you want to see where software engineering is heading, watch the teams that can merge more useful code with fewer humans, not the teams that can generate the longest prompt. The real question now is simple: when your agent writes code, what is the harness doing for it?
