Why a Single Routing API Wins Model Serving

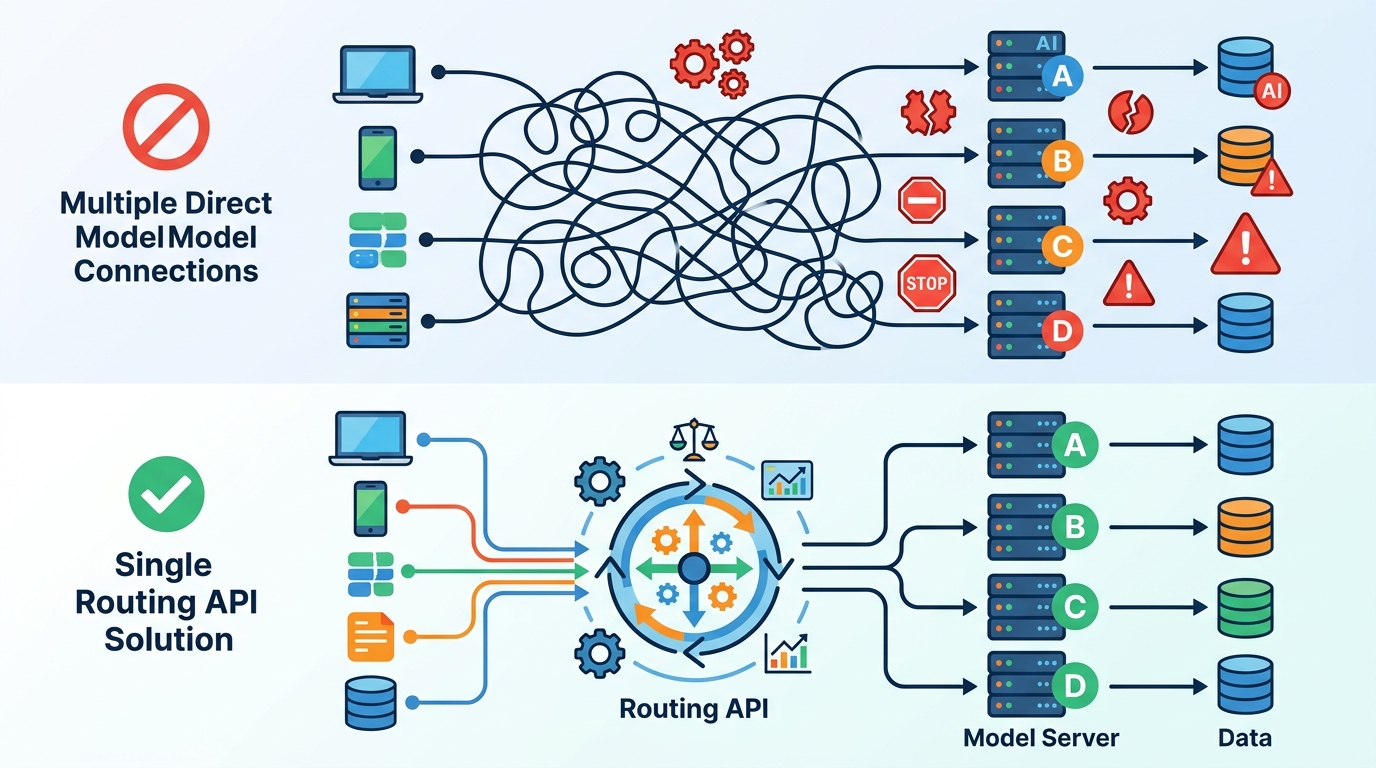

A single routing API is the right default for model serving platforms.

Netflix’s model serving experience points to a blunt conclusion: one entry point beats a patchwork of model-specific paths. The company says its singular API into the ML serving platform has significantly increased the speed of innovation for iterating on newer versions of existing ML experiences and for enabling completely new product experiences with ML. That is not a minor convenience. It is the difference between a platform that compounds and a platform that fragments.

First, a single route reduces the cost of change

Every extra serving interface creates a tax on iteration. Engineers have to learn different request shapes, different deployment rules, different observability hooks, and different failure modes. When a platform standardizes the entry point, the team can change the machinery behind it without forcing every product team to relearn the contract. Netflix’s own result is the most important data point here: the singular API accelerated iteration on newer versions of existing ML experiences.

That speed matters because model serving is not a one-time launch problem. It is a continuous replacement problem. Models drift, features change, latency budgets tighten, and ranking logic gets revised. A single routing layer lets teams swap models, direct traffic, and test new versions without rebuilding the integration surface every time. The platform becomes a control plane, not a collection of one-off pipelines.
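As a rough illustration of swapping models and directing traffic behind one entry point, here is a minimal sketch. Everything in it is an assumption made for the example (the `Router` class, the `set_split` method, the use-case and version names); it is not Netflix's API. Callers always hit `predict`, while the platform changes the weights behind it. Hashing the request key keeps a given caller on the same version for as long as the split is unchanged.

```python
import hashlib
from typing import Callable, Dict, List, Tuple

Model = Callable[[dict], dict]  # hypothetical model signature: payload in, result out


class Router:
    """One entry point; model versions and traffic weights change behind it."""

    def __init__(self) -> None:
        self._models: Dict[Tuple[str, str], Model] = {}
        self._splits: Dict[str, List[Tuple[str, float]]] = {}

    def register(self, use_case: str, version: str, model: Model) -> None:
        self._models[(use_case, version)] = model

    def set_split(self, use_case: str, splits: List[Tuple[str, float]]) -> None:
        """Replace the traffic split for a use case; weights are relative."""
        for version, weight in splits:
            if (use_case, version) not in self._models:
                raise KeyError(f"unregistered version: {use_case}/{version}")
            if weight < 0:
                raise ValueError("weights must be non-negative")
        if sum(weight for _, weight in splits) <= 0:
            raise ValueError("at least one weight must be positive")
        self._splits[use_case] = list(splits)

    def pick_version(self, use_case: str, request_key: str) -> str:
        """Map a request key to a version deterministically, by weight."""
        splits = self._splits[use_case]
        total = sum(weight for _, weight in splits)
        digest = hashlib.sha256(f"{use_case}:{request_key}".encode()).digest()
        bucket = int.from_bytes(digest[:8], "big") % 10_000
        point = bucket / 10_000 * total  # always strictly below total
        cumulative = 0.0
        for version, weight in splits:
            cumulative += weight
            if point < cumulative:
                return version
        return splits[-1][0]  # defensive fallback; not expected to be reached

    def predict(self, use_case: str, request_key: str, payload: dict) -> dict:
        version = self.pick_version(use_case, request_key)
        result = self._models[(use_case, version)](payload)
        return {"version": version, "result": result}


router = Router()
router.register("homepage_ranking", "v1", lambda payload: {"score": 0.1})
router.register("homepage_ranking", "v2", lambda payload: {"score": 0.2})

# Start a small canary on v2 without touching any caller.
router.set_split("homepage_ranking", [("v1", 0.9), ("v2", 0.1)])
response = router.predict("homepage_ranking", request_key="user-123", payload={})
```

Promoting the new version is then a single `set_split` call rather than a new integration for each product team.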

Second, routing centralizes product experimentation

Model-serving innovation stalls when every new idea requires a bespoke path to production. A unified routing API changes that by turning routing itself into a reusable capability. Instead of asking a product team to invent its own serving topology, the platform gives it one place to send traffic, one place to manage version selection, and one place to apply policy. That is how new ML-powered features get shipped faster.

The Netflix example is telling because the benefit is not limited to existing features. The same singular API also enabled completely new product experiences with ML. That is the real platform win. If a routing layer can support both incremental model upgrades and net-new experiences, then it is doing strategic work, not just operational work. It lowers the barrier to trying an idea, which increases the number of ideas that survive long enough to matter.

The counter-argument

The strongest case against a single routing API is that it can become a bottleneck. Different model classes have different needs. A recommender system, a vision model, and a large language model do not share identical latency, payload, or rollout requirements. A centralized entry point can look like one-size-fits-all governance, and governance often slows teams down. There is also a legitimate fear of coupling: if the routing layer is wrong, everything depends on it.

That concern is real, but it is not a reason to reject the pattern. It is a reason to design the API as a thin, stable contract rather than a rigid workflow engine. The right model is centralized routing with decentralized execution. Keep the entry point uniform, but let the platform expose enough policy and metadata to support different serving needs underneath. The bottleneck is not the single API itself. The bottleneck is building it as a monolith instead of a boundary.
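One way to picture "centralized routing with decentralized execution" is a uniform request envelope in front of backends that each interpret it in their own way. The sketch below assumes that shape. The names (`ServeRequest`, `Gateway`, `RankerBackend`, `TextBackend`, the `max_chars` metadata key) are invented for illustration, and the backends are toy stand-ins for real model servers.

```python
from dataclasses import dataclass, field
from typing import Any, Dict, Protocol


@dataclass(frozen=True)
class ServeRequest:
    """The thin, stable contract: the same envelope for every model class."""
    use_case: str
    payload: Dict[str, Any]
    metadata: Dict[str, str] = field(default_factory=dict)  # per-backend hints


@dataclass(frozen=True)
class ServeResponse:
    use_case: str
    backend: str
    output: Any


class Backend(Protocol):
    name: str

    def run(self, request: ServeRequest) -> Any: ...


class RankerBackend:
    """Toy recommender: sorts candidates by a precomputed score."""
    name = "ranker"

    def run(self, request: ServeRequest) -> Any:
        candidates = request.payload["candidates"]
        return sorted(candidates, key=lambda c: c["score"], reverse=True)


class TextBackend:
    """Toy text model: truncates the prompt, honoring a metadata hint."""
    name = "text"

    def run(self, request: ServeRequest) -> Any:
        max_chars = int(request.metadata.get("max_chars", "20"))
        return request.payload["prompt"][:max_chars]


class Gateway:
    """Uniform entry point; execution is delegated to the bound backend."""

    def __init__(self) -> None:
        self._backends: Dict[str, Backend] = {}

    def bind(self, use_case: str, backend: Backend) -> None:
        self._backends[use_case] = backend

    def serve(self, request: ServeRequest) -> ServeResponse:
        backend = self._backends.get(request.use_case)
        if backend is None:
            raise LookupError(f"no backend bound for {request.use_case!r}")
        return ServeResponse(request.use_case, backend.name, backend.run(request))


gateway = Gateway()
gateway.bind("row_ranking", RankerBackend())
gateway.bind("synopsis", TextBackend())
```

The gateway knows nothing about ranking or text generation. Latency, payload, and rollout differences live in the backends and in the metadata they choose to read, which is what keeps the entry point a boundary rather than a monolith.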

What to do with this

If you are an engineer or platform owner, stop multiplying model-specific serving paths unless there is a hard technical requirement to do so. Build one routing surface, make versioning and traffic splitting first-class, and treat observability as part of the contract. If you are a PM or founder, optimize for the platform that makes new ML experiences cheaper to launch, not the one that looks most flexible on a diagram. In model serving, speed of innovation comes from standardization at the edge and freedom behind it.
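To make "observability as part of the contract" concrete, the sketch below wraps a handler so that every call, successful or not, emits a record with the use case, version, latency, and outcome. The `CallRecord` and `ObservedRoute` names and the in-memory list used as a sink are assumptions for the example. A real platform would presumably ship these records to its own metrics system.

```python
import time
from dataclasses import dataclass
from typing import Any, Callable, Dict, List, Optional


@dataclass
class CallRecord:
    use_case: str
    version: str
    latency_ms: float
    ok: bool
    error: Optional[str] = None


class ObservedRoute:
    """Wraps a model handler so no call can bypass instrumentation."""

    def __init__(
        self,
        use_case: str,
        version: str,
        handler: Callable[[Dict[str, Any]], Any],
        sink: List[CallRecord],  # hypothetical sink: a plain in-memory list
    ) -> None:
        self.use_case = use_case
        self.version = version
        self._handler = handler
        self._sink = sink

    def _record(self, start: float, ok: bool, error: Optional[str] = None) -> None:
        latency_ms = (time.perf_counter() - start) * 1000.0
        self._sink.append(
            CallRecord(self.use_case, self.version, latency_ms, ok, error)
        )

    def __call__(self, payload: Dict[str, Any]) -> Dict[str, Any]:
        start = time.perf_counter()
        try:
            result = self._handler(payload)
        except Exception as exc:
            # Failures are recorded too, then re-raised unchanged.
            self._record(start, ok=False, error=type(exc).__name__)
            raise
        self._record(start, ok=True)
        # The serving version travels with every response.
        return {"version": self.version, "result": result}


records: List[CallRecord] = []
route = ObservedRoute("homepage_ranking", "v2", lambda payload: {"score": 0.2}, records)
response = route({"user_id": "user-123"})
```

Because the wrapper sits at the routing surface, product teams get the same metrics for every model without adding their own hooks.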
