Why AI infrastructure is now the real moat

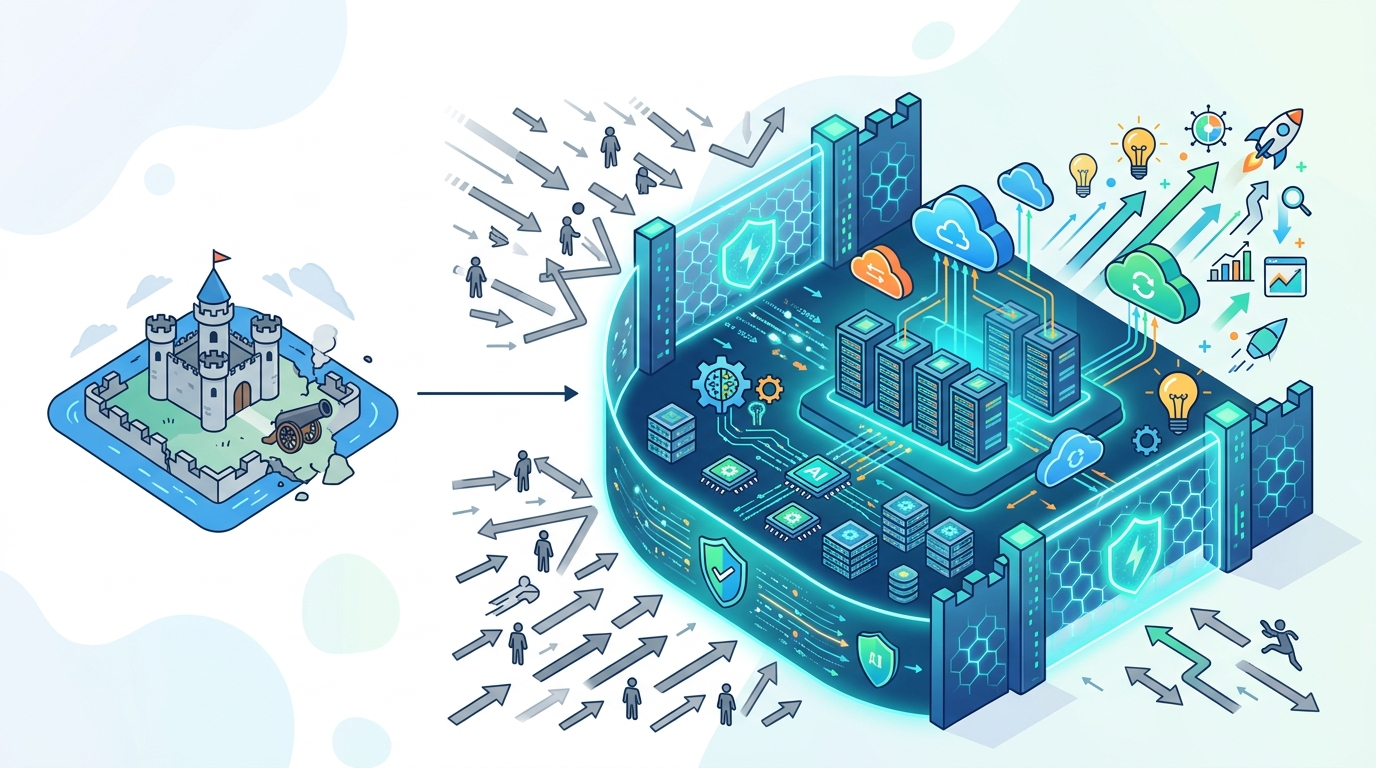

AI leadership now depends more on compute, distribution, and product limits than on model demos.

The latest AI roundup makes the real story plain: Anthropic is reportedly adding 300MW of compute with SpaceX, Doubao is pushing a multimodal upgrade, and new model launches keep arriving faster than users can evaluate them. This is not a race for the best benchmark slide. It is a race to secure power, ship reliably, and control usage at scale.

Compute, not cleverness, is now the bottleneck

Anthropic’s reported deal with SpaceX for an extra 300MW is the clearest signal in the entire update. A company does not chase that kind of capacity unless demand is already outrunning supply. The implication is blunt: the next phase of AI competition is constrained by electricity, data center access, and deployment logistics, not by the number of clever prompts on a launch day.

This matters because compute changes the shape of strategy. When access to training and inference capacity becomes the scarce resource, model quality stops being the only differentiator. The winners are the teams that can lock in power, finance infrastructure, and keep serving users when everyone else hits a ceiling. In other words, infrastructure is the product.

Multimodal upgrades matter more than model names

Doubao-Seed-2.0-lite adding full multimodal support is a better example of value creation than yet another isolated benchmark win. Users do not buy “text-only intelligence” as a feature; they buy systems that can read, see, hear, and act across inputs. A model that crosses that boundary instantly becomes more useful in support, search, creation, and workflow automation.

Zyphra’s ZAYA1-8 launch fits the same pattern. The headline is not simply that another model exists. The real point is that the market keeps moving toward specialized, task-ready systems that compete on usability and integration. When multimodal capability becomes standard, the competitive edge shifts from raw model novelty to how fast a team can turn capability into an everyday tool.

The counter-argument

The strongest objection is that model quality still decides the market in the long run. Benchmarks, reasoning improvements, and better post-training can produce step changes that infrastructure alone cannot. If a model is dramatically smarter, customers will tolerate higher costs, weaker packaging, and even some friction. That is a serious argument, and it is not wrong.

But it misses the operating reality of this market. A breakthrough model that cannot be deployed cheaply, consistently, and at scale is a lab result, not a business. The 300MW headline is evidence that the frontier is now capital-intensive by default. I accept that model quality remains necessary. I reject the idea that it is sufficient. In 2026, the moat is the combination of compute, distribution, and product limits, not the model alone.

What to do with this

If you are an engineer, optimize for systems that degrade gracefully under load and can absorb multimodal expansion without rewrites. If you are a PM, treat usage limits, latency, and cost controls as core product decisions, not back-office constraints. If you are a founder, stop pitching “we have a better model” as the main story and start building around access to compute, a clear workflow wedge, and a path to reliable scale.
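For engineers who want something concrete, here is a minimal sketch of what "degrade gracefully under load" could look like when paired with a usage limit. It assumes a simple token-bucket limiter and two hypothetical serving tiers, `full_model` and `fallback_model`; none of these names, nor the capacity and refill numbers, come from any vendor mentioned above. The clock is passed in explicitly so the behavior is easy to reason about.

```python
from dataclasses import dataclass


@dataclass
class TokenBucket:
    """Illustrative usage limiter: holds up to `capacity` tokens,
    refilled at `refill_per_sec` tokens per second."""
    capacity: float
    refill_per_sec: float
    tokens: float = 0.0
    last: float = 0.0  # timestamp of the previous call, in seconds

    def allow(self, now: float, cost: float = 1.0) -> bool:
        # Refill for the time elapsed since the last call, capped at capacity.
        elapsed = max(0.0, now - self.last)
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_per_sec)
        self.last = now
        # Spend tokens only if the request fits within the remaining budget.
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False


def route(bucket: TokenBucket, now: float) -> str:
    # Hypothetical tiers: serve the expensive model while budget remains,
    # otherwise fall back to a cheaper one instead of rejecting the request.
    return "full_model" if bucket.allow(now) else "fallback_model"


# Start with a full bucket of 2 tokens, refilling 1 token per second.
bucket = TokenBucket(capacity=2, refill_per_sec=1, tokens=2)

# Three requests arrive at t=0, then one more at t=1.
decisions = [route(bucket, t) for t in (0.0, 0.0, 0.0, 1.0)]
print(decisions)
```

The design choice worth noting is that the limit changes which tier answers rather than whether the user gets an answer at all. That is one way to treat usage limits as a product decision, not only a back-office constraint.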
