Retrieval-Augmented Generation, Explained Simply

RAG lets large language models pull fresh facts from documents before answering, which cuts hallucinations and adds citations.

RAG lets large language models pull fresh facts from documents before answering.

Retrieval-augmented generation, or RAG, is one of the simplest fixes for a stubborn LLM problem: models can sound confident while getting facts wrong. The idea is old by AI standards, but it matters more now because teams want chatbots that can answer with current, source-backed information instead of frozen training data.

Wikipedia’s overview points to a practical pattern: retrieve relevant text first, then generate the answer. That sounds modest, but it changes how people build assistants for support, search, internal knowledge bases, and legal or medical workflows.

| Fact | Value | Why it matters |

|---|---|---|

| Term introduced | 2020 | RAG entered the literature in a paper that paired a parametric model with external memory. |

| Google Bard error | $100 billion | A wrong answer about JWST hit Google’s stock value hard. |

| Retrieval target | External documents | RAG pulls from databases, uploaded files, and web sources. |

| Common data form | Embeddings | Text is often turned into vectors for retrieval. |

Why RAG exists in the first place

Get the latest AI news in your inbox

Weekly picks of model releases, tools, and deep dives — no spam, unsubscribe anytime.

No spam. Unsubscribe at any time.

Large language models are good at pattern matching, but they do not automatically know what changed yesterday. That matters when a company policy, product spec, or regulatory rule shifts after training. RAG gives the model a way to look up the latest material before it writes a response.

The appeal is practical. If your support bot can read your help center, your sales assistant can quote product docs, and your internal assistant can search policy files, you do not need to retrain the model every time the source material changes.

That also helps with hallucinations, the polite term for confident nonsense. In the Ars Technica line quoted on Wikipedia, RAG improves LLM performance by blending the model with a search or lookup process so it sticks closer to the facts.

- It reduces dependence on stale training data.

- It can surface citations users can verify.

- It lowers the need for frequent retraining runs.

- It works with databases, PDFs, and web pages.

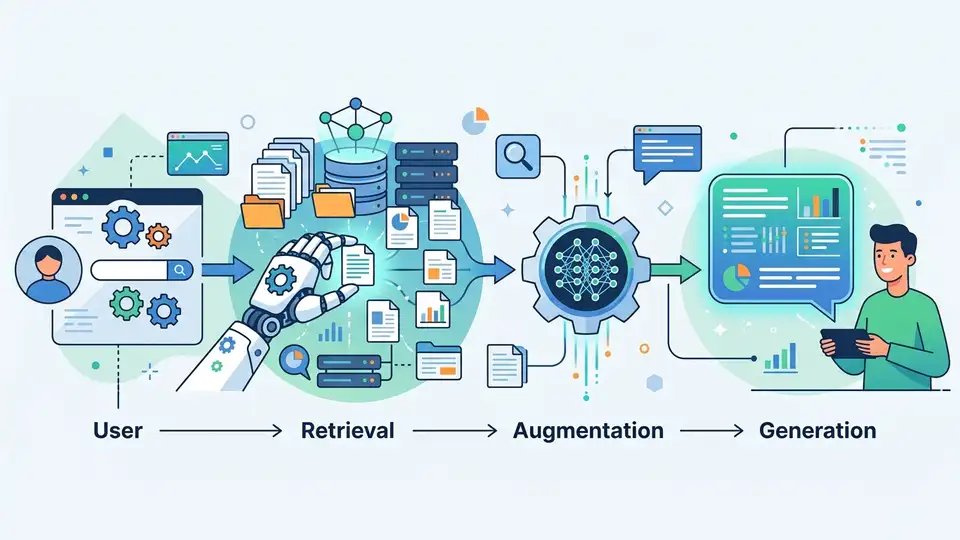

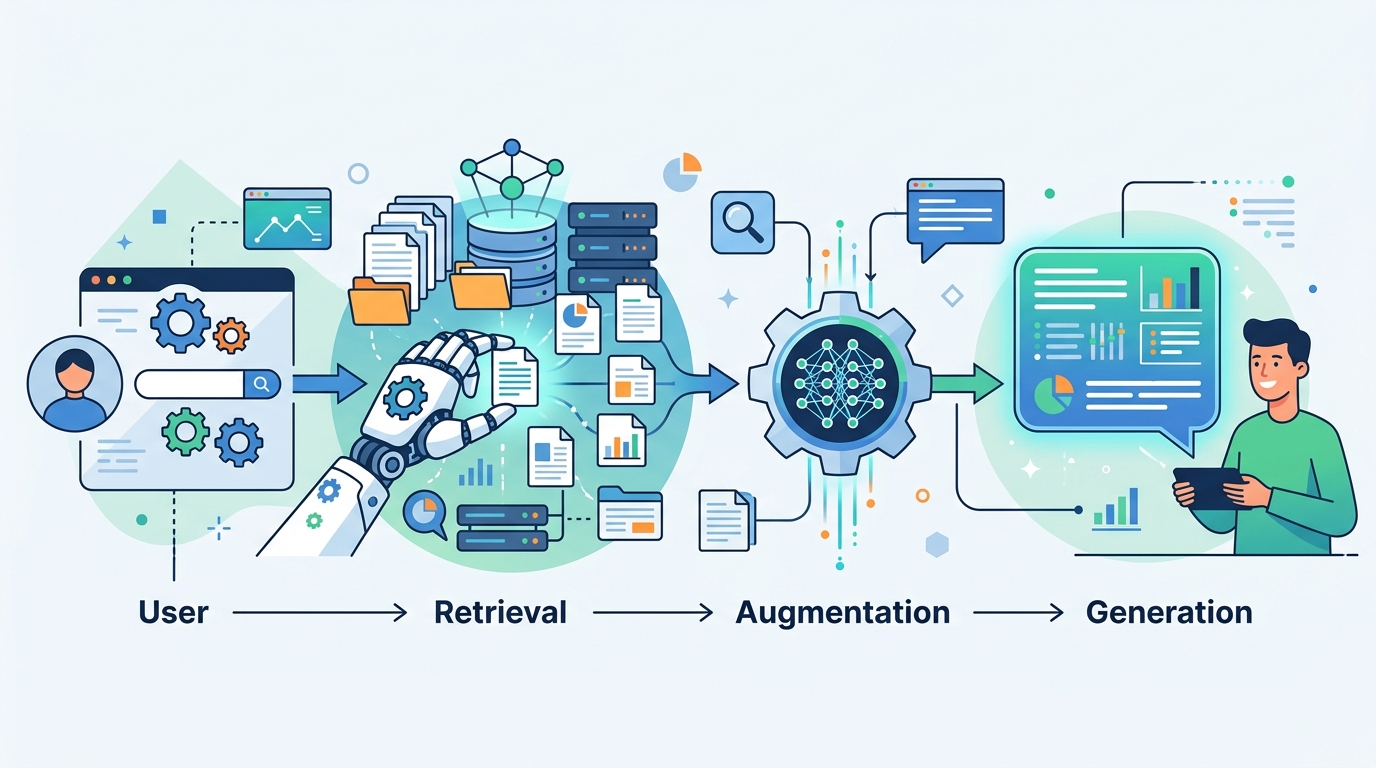

How the pipeline actually works

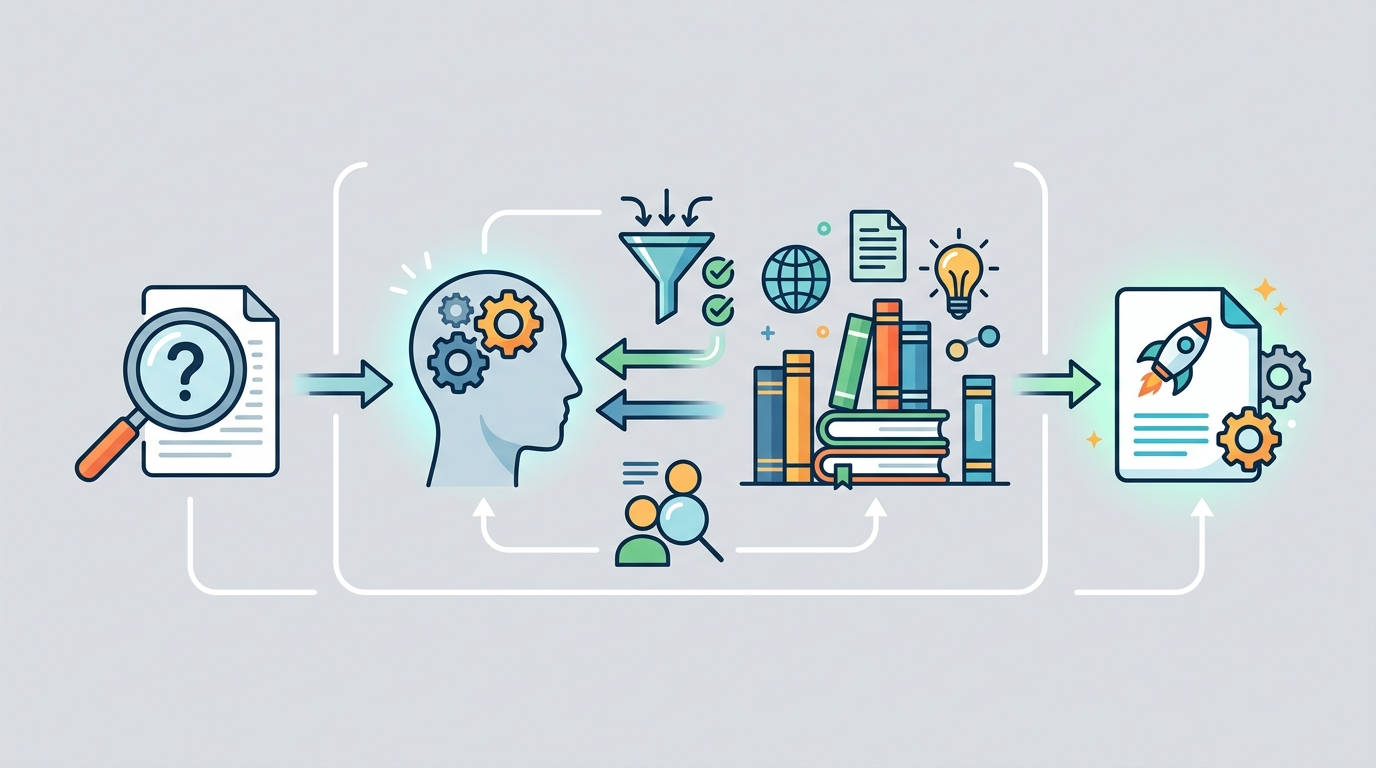

The standard RAG flow has a few moving parts, and each one can fail in a different way. First, documents are split into chunks and converted into embeddings. Those vectors are stored in a vector database, which makes similarity search possible at query time.

When a user asks a question, a retriever searches for the most relevant chunks. Those chunks are added to the prompt, and the language model generates an answer using both the user’s question and the retrieved context.

That sounds straightforward, but the details matter. Chunk size affects recall. Retrieval quality affects relevance. Prompt formatting affects whether the model actually uses the retrieved text.

"RAG is a way of improving LLM performance, in essence by blending the LLM process with a web search or other document look-up process to help LLMs stick to the facts." — Ars Technica

Wikipedia also notes that some systems add reranking, query expansion, memory, or self-improvement loops. Those extras are there for a reason: basic retrieval often finds near-matches, while production systems need the most useful passage, not just the closest vector.

That is why the best RAG systems usually mix dense vectors with sparse search, then rerank the results before generation. Pure vector search is fast, but it can miss exact terms, names, and numbers that matter in real questions.

Where RAG helps most

RAG shows up anywhere the answer needs to stay close to a source of truth. Search engines use it. Enterprise assistants use it. Customer support bots use it. So do recommendation systems and some healthcare tools, where the model needs grounding in a controlled knowledge base.

The strongest use cases are the ones where the source material changes often or where citations matter. A chatbot that answers from a policy handbook is much more useful if it can quote the exact section it used. A medical assistant is safer if it can point to the study or guideline behind the answer.

Here is the tradeoff: RAG can improve trust, but it does not magically make the model smart about context. If the retrieved source is misleading, incomplete, or framed oddly, the model can still produce a bad answer.

- Enterprise knowledge assistants need access to internal docs.

- Legal tools need source-backed citations.

- Healthcare systems need controlled medical references.

- E-commerce assistants need fresh product and inventory data.

RAG fixes one problem and exposes another

RAG was never meant to solve every LLM failure. It helps with freshness and sourcing, but it can still misread context. Wikipedia points to a MIT Technology Review example where a model pulled a book title that sounded like a factual claim and produced a false statement from it.

That is the subtle failure mode people miss. Retrieval can bring in the right document and still produce the wrong answer if the model fails to interpret what the document is saying. A good retriever is necessary, but it is not enough.

There is also the issue of prompt stuffing, where the retrieved context is inserted ahead of the user query so the model gives it more weight. That can help, but it also means the ordering and formatting of context become part of the product design.

For teams building these systems, the lesson is blunt: retrieval quality, chunking strategy, reranking, and prompt design all matter. If any one of them is weak, the whole stack gets shaky.

What the numbers say about the tradeoffs

Wikipedia’s overview includes a few concrete comparisons that explain why RAG drew so much attention. Google’s Bard mistake about the James Webb Space Telescope helped wipe roughly $100 billion from Google’s stock value. On the research side, the Retro family of models showed that a retriever-aware design can use a network about 25 times smaller while keeping perplexity competitive.

Those two numbers point in opposite directions. One shows how expensive a wrong answer can be in public. The other shows how much efficiency a retrieval-aware design can buy when the system is built around retrieval from the start.

There is a catch, though. Retro-style models train with retrieval in mind from the beginning, which means they give up one of RAG’s main advantages: the ability to bolt retrieval onto an existing model without retraining from scratch.

- Google Bard’s JWST error had an estimated $100 billion market impact.

- Retro reportedly used a network 25 times smaller than comparable models.

- RAG dates to a 2020 paper, not the current wave of chatbot hype.

- Some newer systems add query expansion across multiple domains.

RAG is becoming the default, but quality still wins

The real story here is not that RAG is magic. It is that product teams now expect language models to answer from live or private data, and RAG is the most practical way to get there without retraining every week. That makes it a default building block for serious AI apps.

If you are evaluating a RAG system, do not ask whether it uses retrieval. Ask what it retrieves, how it chunks, how it reranks, and whether it can show its work. Those details decide whether the assistant is useful or merely fluent.

For a related deep dive on model behavior and source grounding, see our explainer on LLM hallucinations. The next wave of RAG systems will probably be judged less by how clever the retrieval looks and more by whether the answer is correct, cited, and fast enough for real users.

// Related Articles

- [RSCH]

TurboQuant and the SEO Shift for Small Sites

- [RSCH]

TurboQuant vs FP8: vLLM’s first broad test

- [RSCH]

LLMbda calculus gives agents safety rules

- [RSCH]

A simpler beamspace denoiser for mmWave MIMO

- [RSCH]

Why AI benchmark wins in cyber should scare defenders

- [RSCH]

Why Linux security needs a patch-wave mindset